Meeting Automation for Marketing Teams: From Client Calls to Insights

Marketing teams run on external conversations: client calls, agency briefings, campaign reviews, research interviews, competitive positioning sessions. These conversations contain the raw material for better strategy: how clients actually describe their problems, which messages landed and which fell flat, which competitors came up, and what the market is telling you before it shows up in analytics. Most of that content evaporates within 24 hours of the meeting ending.

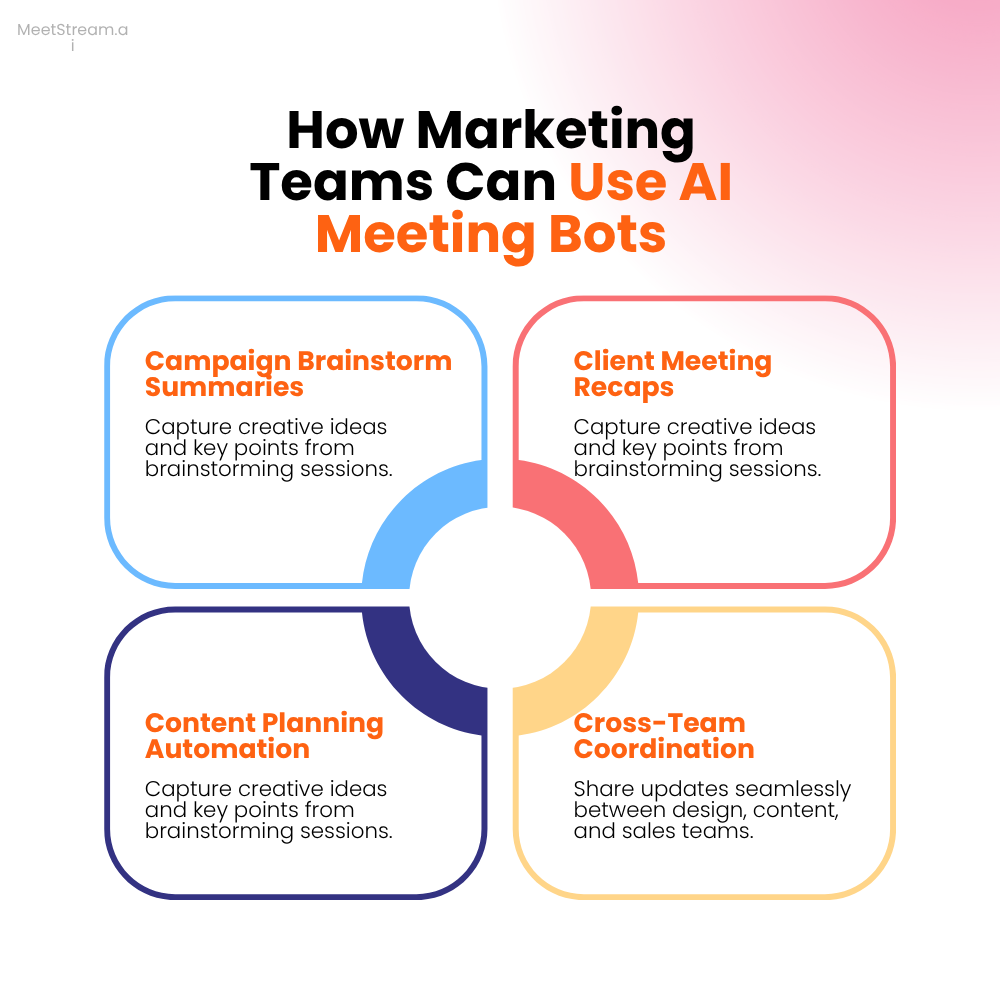

The gap is structural. A marketing meeting automation tool has to do something different from what generic note-takers do. Summarizing a client call as a paragraph is table stakes. Extracting and categorizing specific feedback themes, tracking competitor mentions across all client conversations over a quarter, and measuring whether a new messaging angle resonates better than the previous one all require treating transcripts as structured data, not just text to summarize.

For agency teams, the stakes are even higher. A missed client deliverable expectation in a briefing call, a commitment the account manager made that never reached the brief, a scope change discussed verbally but never written down: these are the friction points that damage client relationships. A system that automatically converts call content into structured artifacts reduces this risk significantly.

This guide is for developers building marketing intelligence tools, whether for a single marketing team or as a product for marketing agencies and teams. We'll cover four core capabilities, the technical pipeline for each, and how to position transcript data as the connective tissue between client conversations and marketing strategy. The recording and transcription infrastructure uses the MeetStream API; the intelligence layer is where the product differentiation lives.

Auto-Transcribing Client and Agency Calls

The first capability is the most basic: reliable transcription of every client call with speaker attribution. For a marketing agency handling 20 client accounts, this might be 40-60 meetings per week. For an in-house marketing team running continuous research interviews, the volume might be lower but the content density is higher.

Bot deployment follows the standard MeetStream pattern: POST https://api.meetstream.ai/api/v1/bots/create_bot with the meeting link, a descriptive bot name ("Acme Marketing Bot" for agency-specific deployments), and a callback_url. Set automatic_leave: true so cleanup is handled automatically.
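A minimal sketch of that request, using only the Python standard library. The endpoint, `callback_url`, and `automatic_leave` come from the description above; the field names `meeting_link` and `bot_name`, the `Token` authorization scheme, and the JSON response are assumptions to verify against the MeetStream documentation.

```python
import json
import urllib.request

CREATE_BOT_URL = "https://api.meetstream.ai/api/v1/bots/create_bot"


def build_create_bot_payload(meeting_link: str, bot_name: str, callback_url: str) -> dict:
    """Build the JSON body for a create_bot request.

    `meeting_link` and `bot_name` are assumed field names; check them
    against the API reference before relying on this.
    """
    return {
        "meeting_link": meeting_link,
        "bot_name": bot_name,
        "callback_url": callback_url,
        "automatic_leave": True,  # let the bot clean up after the call ends
    }


def create_bot(api_key: str, payload: dict) -> dict:
    """Send the request and return the parsed JSON response."""
    request = urllib.request.Request(
        CREATE_BOT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Assumed auth scheme; the exact header format may differ.
            "Authorization": f"Token {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return json.loads(response.read().decode("utf-8"))
```

Keeping payload construction separate from the network call makes the request body easy to unit test without contacting the API.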

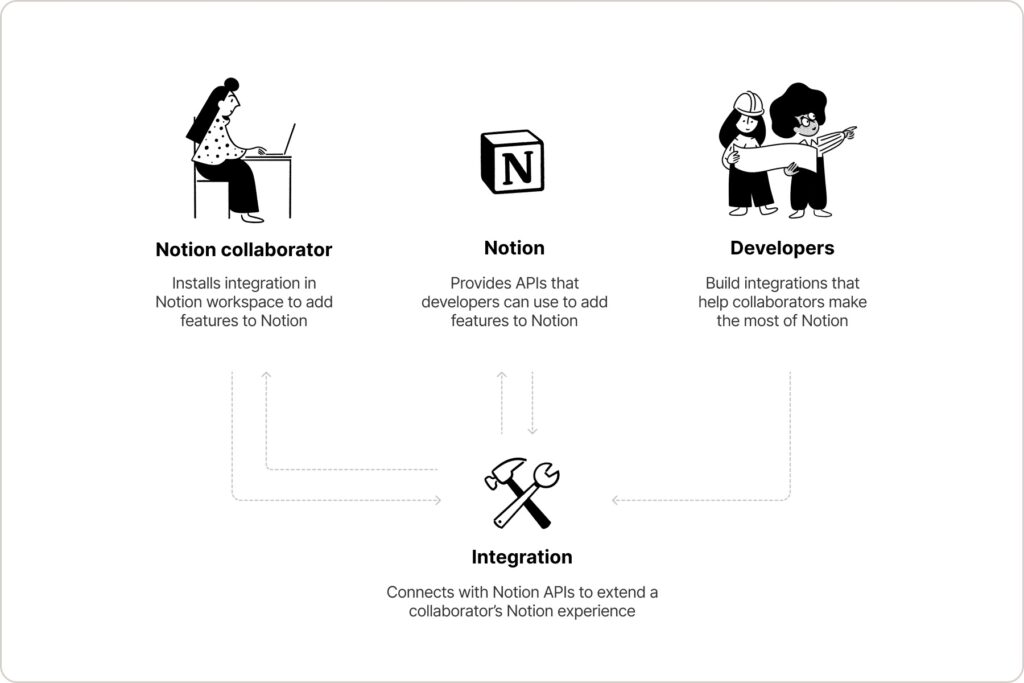

For agency contexts, multi-tenant bot deployment matters. Each client account should have its own bot configuration, with transcripts stored in a namespace specific to that client. This isn't a MeetStream API concept; it's a data model decision in your application. Associate each bot deployment with a client ID and a project ID before the call starts. When transcription.processed fires, write the transcript with those IDs in the metadata. Retrieval later is then scoped to the right client without requiring manual tagging.
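One way to sketch that association is below, with an in-memory store standing in for your database. The webhook payload keys (`event`, `bot_id`, `transcript`) and the class names are assumptions for illustration, not the documented MeetStream payload.

```python
from dataclasses import dataclass, field


@dataclass
class BotDeployment:
    bot_id: str
    client_id: str
    project_id: str


@dataclass
class TranscriptStore:
    """In-memory stand-in for a database, namespaced by client."""
    deployments: dict = field(default_factory=dict)
    transcripts: dict = field(default_factory=dict)

    def register(self, deployment: BotDeployment) -> None:
        # Record ownership before the call starts.
        self.deployments[deployment.bot_id] = deployment

    def handle_webhook(self, event: dict) -> bool:
        """Store a processed transcript under its client; return False if ignored."""
        if event.get("event") != "transcription.processed":
            return False
        deployment = self.deployments.get(event.get("bot_id"))
        if deployment is None:
            return False  # unknown bot: never guess a tenant
        record = {
            "client_id": deployment.client_id,
            "project_id": deployment.project_id,
            "bot_id": deployment.bot_id,
            "transcript": event.get("transcript", []),
        }
        self.transcripts.setdefault(deployment.client_id, []).append(record)
        return True
```

Refusing to store transcripts from unregistered bots is a deliberate choice here: a transcript with no known owner is safer dropped or quarantined than filed under a guessed client.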

Speaker labels from the transcription API will typically be "Speaker 0", "Speaker 1", etc. For marketing client calls, resolving these to actual names and roles (agency lead, client stakeholder, client technical contact) improves downstream extraction quality significantly. Build a speaker resolution step that maps speaker labels to known attendees using calendar invite data or meeting link metadata.
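The text above leaves the matching method open. As one illustrative heuristic, the sketch below matches self-introductions in the transcript against the attendee list from the calendar invite. The segment and attendee shapes are assumptions, and introductions made on someone else's behalf ("this is Dana, our lead") will be misattributed, so treat the result as a suggestion to confirm rather than ground truth.

```python
import re


def resolve_speakers(segments, attendees):
    """Map diarization labels to attendee records via self-introductions.

    segments: list of {"speaker": "Speaker 0", "text": "..."}
    attendees: list of {"name": "Dana Ruiz", "role": "agency lead"}
    Labels with no match are omitted so callers can fall back to manual review.
    """
    resolved = {}
    for attendee in attendees:
        first_name = attendee["name"].split()[0]
        pattern = re.compile(
            rf"\b(?:i'm|i am|this is|my name is)\s+{re.escape(first_name)}\b",
            re.IGNORECASE,
        )
        for segment in segments:
            label = segment["speaker"]
            if label not in resolved and pattern.search(segment["text"]):
                resolved[label] = attendee
                break
    return resolved
```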

One configuration worth setting up: recording_config with a retention policy appropriate for your client relationship. Some clients contractually require call recordings to be deleted after a certain period. Configurable retention at the platform level, rather than managing it as a manual process, avoids compliance gaps when relationships end.

Extracting Feedback Themes and Competitor Mentions

Transcript data at scale becomes useful when you can extract signal across many calls. A single client mentioning a competitor is interesting. Six different clients mentioning the same competitor in the same week is a market signal worth acting on. Getting from individual transcripts to aggregate themes requires structured extraction.

The extraction schema for marketing calls: feedback items (positive, neutral, negative), explicit feature or service requests, competitor mentions with context (evaluating? switching from? comparing?), messaging effectiveness indicators (which value propositions generated engagement vs. skepticism), and action commitments made by either party.
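The schema above can be pinned down as typed records so that model output is validated before it is stored. This is a sketch: the class names, the single `qualifier` field, and the exact category and qualifier strings are assumptions chosen to mirror the list above.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Category(str, Enum):
    FEEDBACK = "feedback"
    REQUEST = "request"
    COMPETITOR = "competitor"
    MESSAGING = "messaging"
    ACTION = "action"


@dataclass(frozen=True)
class ExtractedItem:
    category: Category
    content: str
    speaker: str
    # Category-specific label: sentiment for feedback, context for competitor
    # mentions, response type for messaging; None where it does not apply.
    qualifier: Optional[str] = None


ALLOWED_QUALIFIERS = {
    Category.FEEDBACK: {"positive", "neutral", "negative"},
    Category.COMPETITOR: {"evaluating", "switching_from", "comparing"},
    Category.MESSAGING: {"engagement", "skepticism"},
}


def parse_item(raw: dict) -> ExtractedItem:
    """Validate one item of model output; raises ValueError on unknown values."""
    category = Category(raw["category"])  # ValueError for unknown categories
    qualifier = raw.get("qualifier")
    allowed = ALLOWED_QUALIFIERS.get(category)
    if allowed is not None and qualifier not in allowed:
        raise ValueError(f"invalid qualifier {qualifier!r} for {category.value}")
    return ExtractedItem(category, raw["content"], raw["speaker"], qualifier)
```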

After extracting these categories from individual calls, the aggregate view is the product. Store each extracted item with the transcript segment, the speaker, the call date, the client, and the category. Then build query interfaces over the aggregate:

"Which competitors have been mentioned most frequently in client calls this quarter, and what was the context?" This surfaces competitive intelligence without requiring a dedicated competitive analyst listening to every call.

"Which messaging themes generated the most positive client responses across all new business calls this month?" This tells you which positioning is actually working before you've committed to a campaign budget.

"What feedback themes are appearing in calls with enterprise clients that aren't appearing with SMB clients?" This surfaces segmentation signals that might not show up in quantitative data until later.

For competitor mention extraction, entity recognition is important. "Recall" by itself might mean Recall.ai or might be a different noun. Build a competitor entity list and use that as a grounding reference for extraction prompts. A prompted model with an explicit list ("extract any mentions of the following competitors: [list], along with the exact context of the mention") produces cleaner output than asking a model to find all competitors generically.
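A sketch of that grounding step: a prompt builder that embeds the explicit list, plus a cheap alias matcher that can pre-filter transcripts or sanity-check model output. The function names and the alias dictionary shape are assumptions; the prompt wording follows the example above.

```python
import re


def build_competitor_prompt(competitors: dict, transcript: str) -> str:
    """Build an extraction prompt grounded in an explicit competitor list."""
    names = ", ".join(sorted(competitors))
    return (
        f"Extract any mentions of the following competitors: {names}. "
        "For each mention, return the competitor name and the exact context "
        "of the mention. Ignore words that only resemble a name.\n\n"
        f"Transcript:\n{transcript}"
    )


def find_mentions(competitors: dict, text: str) -> list:
    """Return (canonical_name, matched_text) pairs for known aliases.

    competitors maps a canonical name to its aliases, e.g.
    {"Recall.ai": ["Recall.ai", "Recall AI"]}. Matching whole aliases means
    the ordinary verb "recall" is not counted as a mention.
    """
    hits = []
    for canonical, aliases in competitors.items():
        for alias in aliases:
            pattern = re.compile(rf"(?<!\w){re.escape(alias)}(?!\w)", re.IGNORECASE)
            for match in pattern.finditer(text):
                hits.append((canonical, match.group(0)))
    return hits
```

The alias list matters because transcription may render a product name several ways; maintaining aliases per competitor is cheaper than correcting extraction errors downstream.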

Creating Shareable Call Summaries for Stakeholders

Marketing and agency teams routinely need to share call summaries with stakeholders who weren't on the call: the client's internal team, other agency departments, the account director reviewing the account, the strategist planning the next campaign phase. The current workflow is usually: the person who was on the call writes a recap email from memory, with varying levels of completeness and accuracy.

Automated shareable summaries replace this with a structured, consistent artifact generated from the actual transcript. The key design decision is audience awareness. A technical call recap shared with a non-technical client stakeholder should omit implementation specifics. A strategic briefing recap shared internally should emphasize decisions and open questions. A research interview recap shared with the product team should lead with insights, not logistics.

Build meeting type classifications into your extraction pipeline. When a bot is deployed, tag the meeting type: client briefing, campaign review, research interview, competitive analysis call, agency-internal. The extraction prompt is tailored per type, and the output template is different per type. A "client briefing" template emphasizes: deliverables agreed, timelines confirmed, scope items. A "research interview" template emphasizes: themes surfaced, hypotheses confirmed or refuted, follow-up research questions.
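A minimal sketch of type-specific templates follows. The two templates use the section lists given above; the dictionary keys, function name, and prompt wording are assumptions, and the remaining meeting types would need their own entries.

```python
SUMMARY_TEMPLATES = {
    "client_briefing": ["Deliverables agreed", "Timelines confirmed", "Scope items"],
    "research_interview": [
        "Themes surfaced",
        "Hypotheses confirmed or refuted",
        "Follow-up research questions",
    ],
}


def build_summary_prompt(meeting_type: str, transcript: str) -> str:
    """Build a summary prompt whose sections depend on the meeting type."""
    try:
        sections = SUMMARY_TEMPLATES[meeting_type]
    except KeyError:
        # Fail loudly rather than fall back to a generic summary.
        raise ValueError(f"no template configured for {meeting_type!r}") from None
    headings = "\n".join(f"- {section}" for section in sections)
    return (
        f"Summarize this {meeting_type.replace('_', ' ')} using exactly these sections:\n"
        f"{headings}\n\nTranscript:\n{transcript}"
    )
```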

The shareable format matters for adoption. Stakeholders who receive a well-formatted summary with clearly delineated sections are more likely to read it than a wall of text. Use HTML structure in your outputs: a heading for each section, decisions bolded, action items formatted as a clear list with owner names. If you're pushing to Notion, use Notion's block API to create a properly structured page rather than dumping text into a single paragraph block.
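As an illustration of that structure, the sketch below renders a summary as HTML with a heading per section, bolded decisions, and an action item list with owners. The function name and input shapes are assumptions; all text is escaped because transcript-derived content should never be trusted as markup.

```python
from html import escape


def render_summary_html(sections: dict, action_items: list) -> str:
    """Render a summary as HTML.

    sections: {heading: [decision, ...]}
    action_items: [(owner, task), ...]
    """
    parts = []
    for heading, decisions in sections.items():
        parts.append(f"<h2>{escape(heading)}</h2>")
        for decision in decisions:
            parts.append(f"<p><strong>{escape(decision)}</strong></p>")
    parts.append("<h2>Action items</h2>")
    parts.append("<ul>")
    for owner, task in action_items:
        parts.append(f"<li>{escape(owner)}: {escape(task)}</li>")
    parts.append("</ul>")
    return "\n".join(parts)
```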

For agency teams, consider a client-facing share option: a lightweight web view of the call summary that the account manager can share with the client for confirmation. This replaces the email-based recap workflow and creates a natural touchpoint for clients to confirm their understanding of what was decided. It also creates an audit trail: clients can't later claim a deliverable expectation wasn't discussed if the summary was shared and not objected to.

Tracking Messaging Effectiveness Across Calls

This is the capability that distinguishes a genuine marketing intelligence tool from a fancy note-taker. Most marketing teams test messaging through campaigns and measure effectiveness by conversion rate, which is a lagging indicator with a long feedback loop. Call transcripts give you a leading indicator: how do prospects and clients react when your team presents a specific message in a live conversation?

The technical approach: maintain a messaging framework in your system, meaning a list of value propositions, positioning statements, and key claims. When analyzing call transcripts, detect when a team member presents each message and what the customer's response was. The response can be classified as: engaged (asked a follow-up question, made a positive comment), neutral (acknowledged without further engagement), skeptical (challenged or questioned), or negative (dismissed or objected).

Aggregate these response classifications across all calls for a time period. "Message X: 45% engaged, 30% neutral, 25% skeptical. Message Y: 65% engaged, 20% neutral, 15% skeptical." This is a measurement of messaging effectiveness derived from actual customer reactions rather than from a downstream proxy. It captures more than an A/B test of email subject lines does, because it records the full conversational response, not just a click.
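The aggregation itself is small. A sketch, assuming each classified reaction has been stored as a `(message_id, response)` pair using the four response classes described earlier:

```python
from collections import Counter, defaultdict

RESPONSES = ("engaged", "neutral", "skeptical", "negative")


def response_rates(observations):
    """Compute the share of each response class per message.

    observations: iterable of (message_id, response) pairs.
    Returns {message_id: {response: fraction}}.
    """
    counts = defaultdict(Counter)
    for message_id, response in observations:
        if response not in RESPONSES:
            raise ValueError(f"unknown response class: {response!r}")
        counts[message_id][response] += 1
    rates = {}
    for message_id, counter in counts.items():
        total = sum(counter.values())
        rates[message_id] = {r: counter[r] / total for r in RESPONSES}
    return rates
```

Rejecting unknown classes up front keeps a drifting extraction prompt from silently skewing the percentages.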

For marketing-focused meeting insight tools, this is a genuinely differentiated capability. Products like Gong surface this kind of signal for sales, but equivalents tuned for marketing team conversations are much harder to find. The extraction logic is more nuanced than for sales (marketing conversations aren't as structured as sales calls) but achievable with a well-designed prompt that uses the messaging framework as a grounding reference.

Track messaging effectiveness over time. If you refresh your positioning every quarter, compare Q1 vs. Q2 response rates per message. Did the new messaging actually perform better? With 50 or more calls per period, the comparison rests on a usable sample rather than on one person's intuition about how the quarter felt, though small differences between messages can still be noise.
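Whether a quarter-over-quarter difference is more than noise can be checked with a standard two-proportion z-test. The sketch below is one reasonable choice, not the only one; it assumes each call contributes one independent observation per message, which is an approximation when the same client appears in several calls.

```python
import math


def two_proportion_z_test(engaged_a, total_a, engaged_b, total_b):
    """Two-sided z-test for a difference in engaged rates between two periods.

    Returns (z, p_value). A positive z means period B had the higher rate.
    """
    if total_a <= 0 or total_b <= 0:
        raise ValueError("both periods need at least one observation")
    rate_a = engaged_a / total_a
    rate_b = engaged_b / total_b
    pooled = (engaged_a + engaged_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        raise ValueError("rates are all 0 or all 1; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, 27 engaged responses out of 60 calls in Q1 against 39 out of 60 in Q2 gives a z of roughly 2.2 and a p-value a little under 0.03, which would usually be read as a real improvement. The same rates on 15 calls per quarter would not clear that bar.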

The Transcript-as-Data-Source Architecture

The mental model that makes all of these capabilities cohere is treating transcripts as a data source, not as documents. A document you read once and file. A data source you query repeatedly, aggregate over time, and use to answer new questions as they arise.

The technical foundation for this is a well-designed data model. At minimum: a meetings table (ID, date, type, client, project, attendees), a segments table (meeting ID, speaker, start time, end time, text, sentiment), and an extracted items table (meeting ID, type [feedback/competitor/message/action], content, speaker, sentiment, confidence score). This schema supports every query pattern above and is simple enough to implement in a weekend.
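The three tables above might look like this in SQLite, driven from Python. The column names and types are a sketch of the schema described, with attendees stored as a JSON string for simplicity; a production system would likely use PostgreSQL and a separate attendees table.

```python
import sqlite3

SCHEMA = """
CREATE TABLE meetings (
    id INTEGER PRIMARY KEY,
    date TEXT NOT NULL,           -- ISO 8601 date
    type TEXT NOT NULL,
    client_id TEXT NOT NULL,
    project_id TEXT,
    attendees TEXT                -- JSON-encoded list
);
CREATE TABLE segments (
    id INTEGER PRIMARY KEY,
    meeting_id INTEGER NOT NULL REFERENCES meetings(id),
    speaker TEXT NOT NULL,
    start_time REAL NOT NULL,     -- seconds from start of call
    end_time REAL NOT NULL,
    text TEXT NOT NULL,
    sentiment TEXT
);
CREATE TABLE extracted_items (
    id INTEGER PRIMARY KEY,
    meeting_id INTEGER NOT NULL REFERENCES meetings(id),
    type TEXT NOT NULL
        CHECK (type IN ('feedback', 'competitor', 'message', 'action')),
    content TEXT NOT NULL,
    speaker TEXT,
    sentiment TEXT,
    confidence REAL CHECK (confidence BETWEEN 0 AND 1)
);
"""


def create_schema(conn: sqlite3.Connection) -> None:
    """Create the three tables on an empty database."""
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves this off by default
    conn.executescript(SCHEMA)
```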

For the extraction pipeline, use a job queue. When transcription.processed fires, enqueue one job per extraction type. Feedback extraction, competitor mention extraction, messaging effectiveness analysis, and action item extraction run independently and write to the same extracted items table. Each job can be retried independently if it fails, and each can be updated with improved prompts without rerunning the others.
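The fan-out and independent retry can be sketched with an in-process queue. A real deployment would use a durable queue (and backoff between attempts); the job shape, function names, and the limit of three attempts are assumptions.

```python
import queue

EXTRACTION_TYPES = ("feedback", "competitor", "messaging", "action")


def enqueue_extractions(jobs: queue.Queue, meeting_id: str) -> None:
    """Enqueue one job per extraction type for a processed transcript."""
    for extraction_type in EXTRACTION_TYPES:
        jobs.put({"type": extraction_type, "meeting_id": meeting_id, "attempts": 0})


def run_jobs(jobs: queue.Queue, handlers: dict, max_attempts: int = 3) -> list:
    """Drain the queue, retrying each failed job on its own.

    handlers maps an extraction type to a callable taking meeting_id.
    Returns a list of (type, "done" | "failed") in completion order.
    """
    results = []
    while not jobs.empty():
        job = jobs.get()
        try:
            handlers[job["type"]](job["meeting_id"])
            results.append((job["type"], "done"))
        except Exception:
            job["attempts"] += 1
            if job["attempts"] < max_attempts:
                jobs.put(job)  # retry only this job; the others are unaffected
            else:
                results.append((job["type"], "failed"))
    return results
```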

The query interface on top of this data is where the product experience lives. For an agency team tool, that might be a simple web dashboard with filters (client, date range, meeting type) and pre-built views (competitor mentions this quarter, feedback by client, messaging effectiveness by campaign). For an enterprise marketing tool, a natural language query interface that translates questions to database queries against your structured extraction table is worth the investment.

What Developers Should Standardize vs. Customize

If you're building this as a product for marketing teams rather than a one-off internal tool, the build vs. configure decision matters for scale:

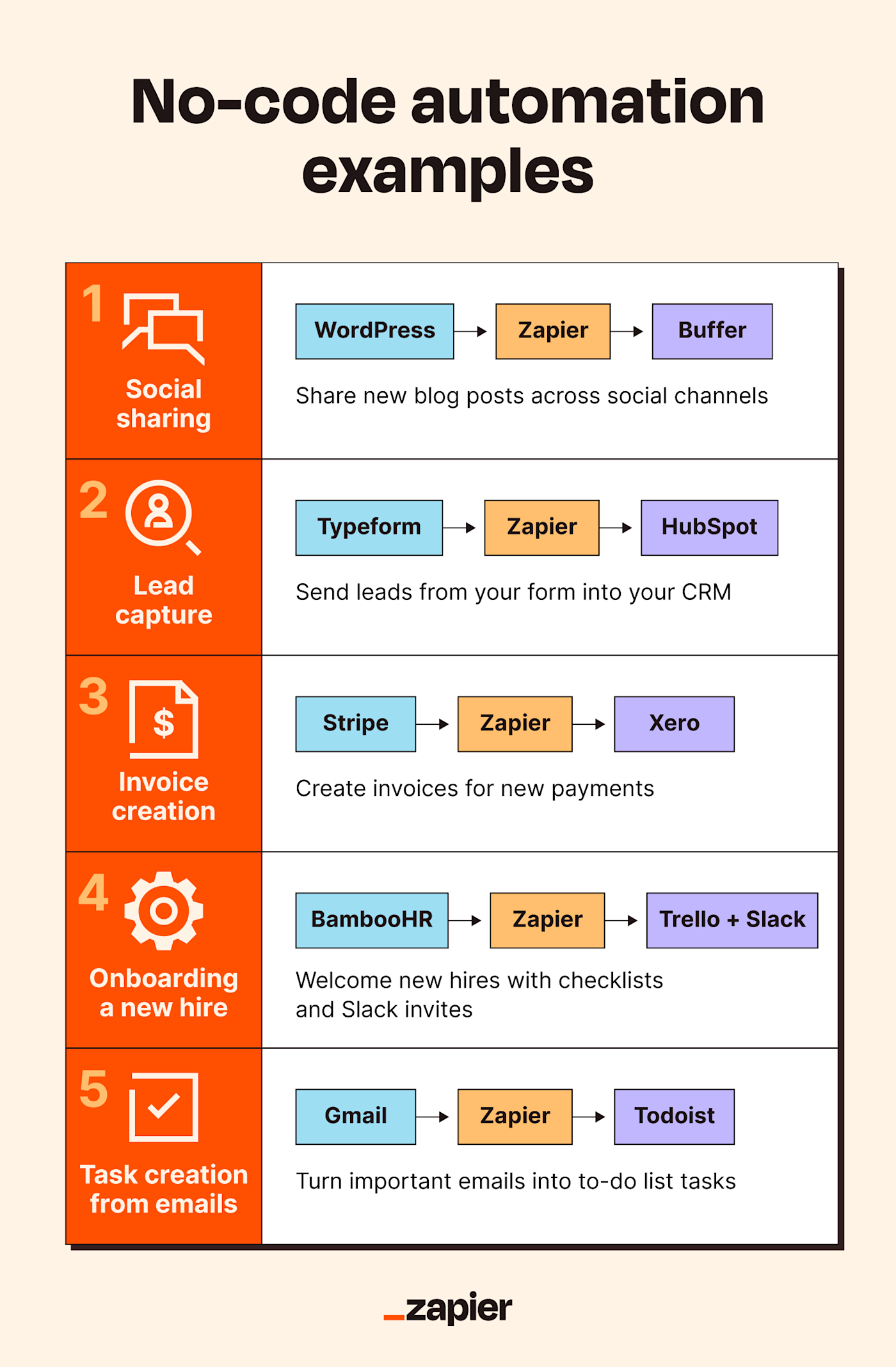

Standardize: the recording and transcription pipeline (always the same MeetStream API integration), the data model (the schema described above works for any team), the delivery mechanisms (Slack, email, Notion are the right defaults).

Customize per customer: the extraction categories (a B2B SaaS marketing team has different feedback taxonomies than a digital agency), the competitor entity list (obviously per customer), the messaging framework (per customer, updated each time they refresh positioning), and the share templates (per customer brand and formatting preferences).

Build the standardized pieces as your application core. Build the customization pieces as configuration that customers manage themselves. The fastest way to lose a marketing team as a customer is to require engineering involvement every time they add a new competitor to the tracking list.

Frequently Asked Questions

How do marketing agencies handle call recording consent across different clients?

Consent requirements vary by jurisdiction, but the consistent approach is: the agency includes call recording disclosure in their standard client agreement, and the bot name in the meeting UI provides visible in-call disclosure. For client calls with regulated industries (financial services, healthcare), check with your legal counsel on additional requirements. Store the consent status (based on agreement date) as part of your client record, and only deploy bots to clients who have active agreements that include recording consent.

How accurate is messaging effectiveness tracking when conversations are unstructured?

Accuracy depends on how explicitly your team presents messages in calls. Positioning statements that trained sales teams deliver verbatim are easy to detect. Marketing strategists in consultative conversations are more conversational and less scripted. For the latter, detecting the theme of what was communicated (even without an exact wording match) requires semantic similarity rather than keyword matching. Embedding each statement in your messaging framework and running cosine similarity against transcript segments works better for conversational contexts than exact match.
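A sketch of the similarity step, assuming you already have embedding vectors for each framework message and each transcript segment from whichever embedding model you use. The 0.8 threshold is a placeholder to tune on labeled calls, not a recommended value.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def match_messages(segment_vector, message_vectors, threshold=0.8):
    """Return (message_id, score) pairs at or above threshold, best first.

    message_vectors: {message_id: embedding} for the messaging framework.
    """
    scored = [
        (message_id, cosine_similarity(segment_vector, vector))
        for message_id, vector in message_vectors.items()
    ]
    matches = [pair for pair in scored if pair[1] >= threshold]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)
```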

Can you track how competitor mentions change over time as a market signal?

Yes, and this is one of the highest-value aggregate signals in the system. Build a monthly trend view of competitor mention frequency and context (evaluating, switching from, comparing to). A competitor that appears in 5% of conversations in Q1 and 15% in Q3 is gaining presence in your market. The context of mentions tells you the narrative: are they being evaluated as an alternative, or are clients asking you to differentiate against them? These are different market dynamics requiring different responses. Surveys and commissioned market research rarely produce this signal as quickly as your own client call data does.
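The trend view reduces to the share of calls per month in which a competitor came up. A sketch, assuming each call record carries an ISO date string and the set of competitors extracted from it (both field names are hypothetical):

```python
from collections import defaultdict


def mention_share_by_month(calls, competitor):
    """Fraction of calls per month that mention the given competitor.

    calls: iterable of {"date": "YYYY-MM-DD", "competitors": set of names}.
    Returns {"YYYY-MM": fraction}, in chronological order.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for call in calls:
        month = call["date"][:7]  # "YYYY-MM" prefix of an ISO date
        totals[month] += 1
        if competitor in call["competitors"]:
            hits[month] += 1
    return {month: hits[month] / totals[month] for month in sorted(totals)}
```

Counting calls rather than raw mentions keeps one long conversation about a single competitor from dominating the trend.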

What's the right way to structure a shareable call summary for a client?

For client-facing summaries, the structure that works well is: 1) Meeting objective (one sentence), 2) Key decisions made (bullet points, max 5), 3) Action items with owners and dates, 4) Open items / next discussion topics. Keep it to one page or one Slack message. The goal is that a client stakeholder who wasn't on the call can read this in 90 seconds and know exactly what was decided and what happens next. If it requires reading more than that, it's too long. Most of the transcript detail should stay in the internal version, not the client-facing one.

How do you prevent transcript data from leaking across client accounts in a multi-tenant agency tool?

Row-level security in your database is the right approach. Every query that touches the transcripts or extracted items tables should include a mandatory filter on client_id scoped to the requesting user's account. Enforce this in the database where you can (PostgreSQL's row-level security policies, for example), and fall back to application-level enforcement only if your database doesn't support it. Test cross-client data isolation explicitly in your test suite. This is a category of bug where a single mistake exposes sensitive competitive information to the wrong client, which is a business-ending incident for an agency.
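Where database-level policies aren't available, application-level enforcement can be made hard to bypass by giving callers no way to build an unscoped query. The sketch below uses SQLite for illustration and assumes a `client_id` column on the extracted items table; the class and method names are hypothetical.

```python
import sqlite3


class ClientScopedItems:
    """Read access to extracted items that is always filtered by client_id."""

    def __init__(self, conn: sqlite3.Connection, client_id: str):
        if not client_id:
            raise ValueError("client_id is required")
        self._conn = conn
        self._client_id = client_id

    def list_items(self, item_type=None):
        """Return item contents for this client, optionally filtered by type."""
        # The client filter is built in here, so no caller can omit it.
        sql = "SELECT content FROM extracted_items WHERE client_id = ?"
        params = [self._client_id]
        if item_type is not None:
            sql += " AND type = ?"
            params.append(item_type)
        sql += " ORDER BY rowid"
        return [row[0] for row in self._conn.execute(sql, params)]
```

An isolation test then amounts to seeding rows for two clients and asserting that a repository scoped to one never returns the other's content.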

If you're building a meeting tool for marketing teams and want to test the transcript pipeline, the MeetStream API works across Google Meet, Zoom, and Teams with no platform changes required. The documentation includes working examples for the webhook pipeline and bot configuration parameters relevant to multi-tenant use cases.