How to Deploy a Meeting Bot: Architecture and Best Practices

You've built the logic. Your bot can join a meeting, capture audio, and pipe it somewhere useful. Then you try to deploy it and realize the hard part was never the bot, it was the infrastructure holding it up. A browser instance per meeting, persistent WebSocket connections, unpredictable meeting durations, and bursts of 50 concurrent calls on a Tuesday afternoon. Most teams spend more engineering time on deployment scaffolding than on the product itself.

Meeting bots aren't stateless HTTP handlers. They're long-running, resource-intensive processes with non-deterministic lifetimes. A 20-minute standup and a 3-hour board review look identical at dispatch time. That asymmetry breaks every assumption serverless platforms make about workload shape, and it's why naive deployments collapse under load.

There are three serious deployment models for meeting bots: serverless triggers (for orchestration, not execution), container-based fleets (Docker/Kubernetes), and persistent VM pools. Each has a distinct tradeoff profile around cost, latency, and operational overhead. The choice depends on your concurrency expectations, latency tolerance on join, and how much infrastructure you want to own.

In this guide, we'll cover the full architecture of each model, how to handle cold starts and concurrent load, state management across bot sessions, and how MeetStream's managed meeting bot infrastructure eliminates most of this complexity entirely. Let's get into it.

Why Meeting Bot Deployment Is Different

Standard web service deployment assumes short, stateless requests. Meeting bots violate every one of those assumptions. Each bot needs a headless browser or a native meeting client SDK (Chromium for Google Meet, Electron-based or SDK-based for Zoom), which consumes 300, 800 MB of RAM per instance before any media processing begins. WebSocket connections to the meeting platform must stay alive for the entire meeting duration. Media streams, typically 48kHz stereo audio plus 720p video, arrive continuously and must be processed in real time or buffered responsibly.

The result is that each active bot is effectively a small server process, not a request handler. This has direct implications for how you provision, schedule, and terminate bot instances.

Deployment Model 1: Serverless Triggers

Serverless functions (AWS Lambda, Google Cloud Functions, Azure Functions) are not suitable for running bot processes directly. A Chromium instance alone exceeds Lambda's 10 GB memory cap on many configurations, and the 15-minute execution limit breaks for any meeting longer than that. Serverless cold starts of 1, 3 seconds are also unacceptable when a bot needs to join before the first speaker finishes their intro.

Where serverless works well is in the orchestration layer, the thin layer that receives a trigger (calendar event, webhook, user action), dispatches a bot creation request to a pool or external API, and records the resulting bot ID. The actual bot process runs elsewhere.

# Lambda handler: trigger bot creation on calendar event

import json, os, urllib.request

def handler(event, context):

meeting_url = event['meeting_url']

payload = json.dumps({

"meeting_link": meeting_url,

"bot_name": "Notetaker",

"callback_url": os.environ['WEBHOOK_URL'],

"live_transcription_required": {

"webhook_url": os.environ['TRANSCRIPTION_WEBHOOK_URL']

}

}).encode()

req = urllib.request.Request(

"https://api.meetstream.ai/api/v1/bots/create_bot",

data=payload,

headers={

"Authorization": f"Token {os.environ['MEETSTREAM_API_KEY']}",

"Content-Type": "application/json"

},

method="POST"

)

with urllib.request.urlopen(req) as resp:

result = json.loads(resp.read())

return {"bot_id": result["bot_id"], "status": "dispatched"}

This pattern keeps Lambda responsible for dispatch logic only. The bot lifecycle, joining, recording, leaving, runs in a separate, persistent process environment.

Deployment Model 2: Containerized Bot Fleets

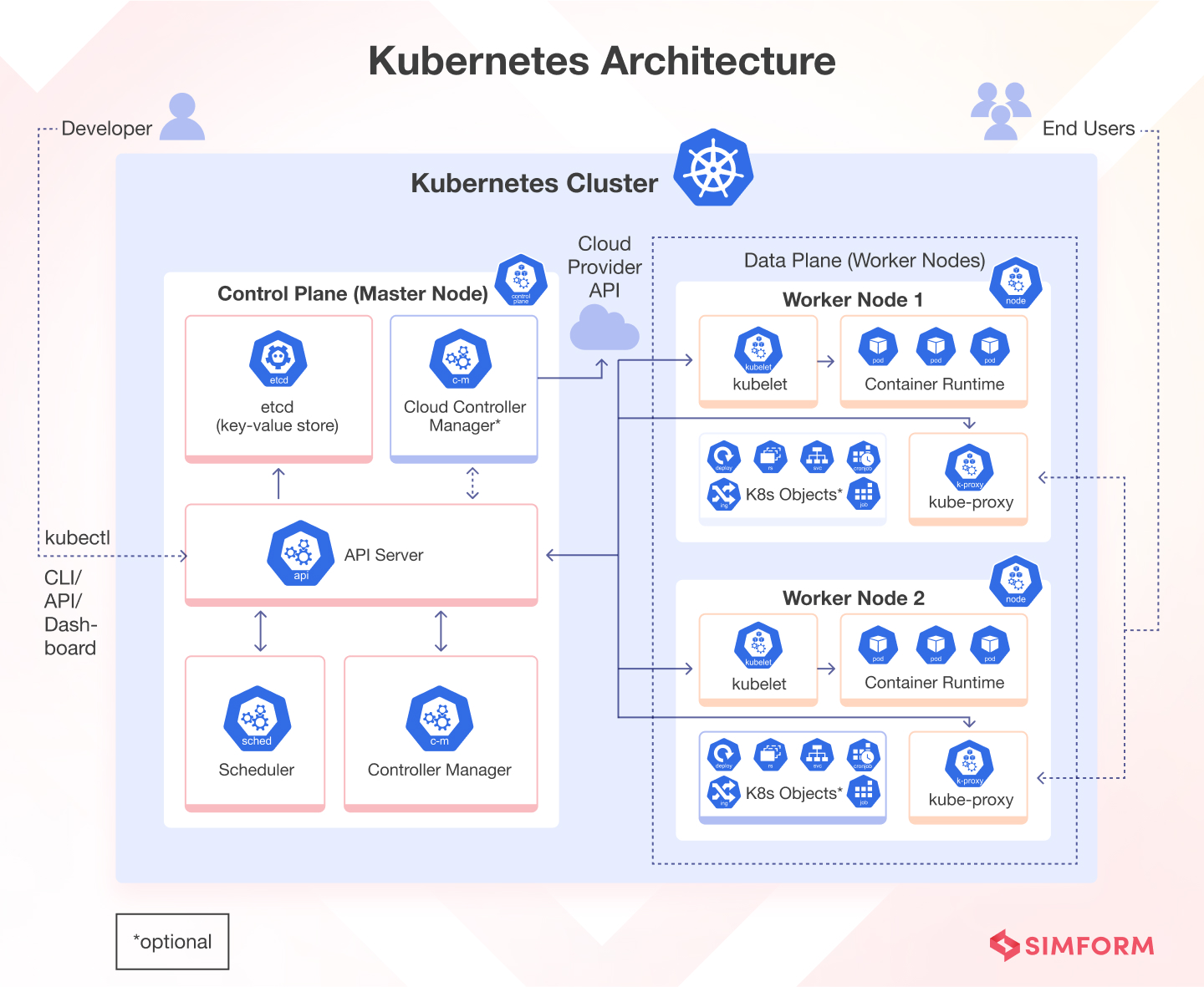

For teams running their own meeting bot deployment, containers are the most common production choice. Each bot instance runs in its own Docker container with a Chromium binary, the meeting platform's web app, and your processing code. Kubernetes or ECS handles scheduling, health checks, and restarts.

The core challenge is resource planning. A single Chromium-based bot needs approximately:

- 600 MB, 1 GB RAM for the browser process

- 1, 2 vCPU cores under active media capture

- Reliable network with low jitter (meeting platforms are sensitive to packet loss)

On a c5.2xlarge (8 vCPU, 16 GB RAM), you can safely run 8, 12 concurrent bots depending on media load. Budget 2 GB per bot as a safe default and monitor actual consumption before reducing headroom.

# docker-compose for a single bot runner

version: '3.8'

services:

bot-runner:

image: your-org/meeting-bot:latest

shm_size: '2gb' # Chromium requires large /dev/shm

environment:

- MEETING_URL=${MEETING_URL}

- API_KEY=${MEETSTREAM_API_KEY}

- CALLBACK_URL=${CALLBACK_URL}

deploy:

resources:

limits:

memory: 2G

cpus: '2.0'

restart: "no" # bots are ephemeral, don't restart after meeting ends

Note the shm_size: '2gb' directive. Chromium uses /dev/shm for shared memory between renderer processes. Without this, you'll see crashes on media-heavy pages. This is one of the most common silent failure modes in containerized bot deployments.

For Kubernetes, use a Job (not a Deployment) for each bot instance. Jobs terminate when the process exits, which maps cleanly to the bot lifecycle. A Deployment will try to restart the container after the meeting ends, wasting resources and polluting your logs.

Deployment Model 3: VM Pool with Pre-Warmed Instances

For latency-sensitive deployments, where a bot must join within 10 seconds of the trigger, pre-warmed VM pools are the most reliable approach. You maintain a fleet of idle VMs with Chromium already initialized, ready to receive a meeting URL and join immediately.

The tradeoff is cost. You're paying for idle capacity to buy cold-start headroom. The economics work at scale (100+ concurrent bots) but are hard to justify below that threshold.

A typical implementation uses a pool manager process that:

- Maintains N idle bot processes listening on a local job queue (Redis or SQS)

- Accepts a meeting URL + config from the orchestration layer

- Assigns the job to the next available idle instance

- Replenishes the idle pool by spawning a replacement after assignment

- Drains and terminates instances that have been idle past a TTL threshold

import asyncio, redis.asyncio as aioredis

class BotPool:

def __init__(self, min_idle=5, max_size=50):

self.min_idle = min_idle

self.max_size = max_size

self.idle_queue = asyncio.Queue()

self.active = {}

async def dispatch(self, meeting_url: str, config: dict) -> str:

bot = await self.idle_queue.get()

bot_id = await bot.join(meeting_url, config)

self.active[bot_id] = bot

asyncio.create_task(self._replenish())

return bot_id

async def _replenish(self):

if self.idle_queue.qsize() < self.min_idle:

bot = await BotInstance.create() # pre-warms Chromium

await self.idle_queue.put(bot)

Cold Start Handling

Cold starts in meeting bot infrastructure are particularly painful because meetings don't wait. If your bot takes 45 seconds to initialize Chromium, authenticate, and navigate to the meeting URL, it will miss the first few minutes of the call, which often contain the most important context (agenda, introductions, key decisions).

Strategies to reduce cold start impact:

- Pre-warm browsers: Initialize Chromium at container startup, before any meeting URL is provided. Keep the browser open at a neutral page.

- Use

join_atscheduling: If you know the meeting start time, schedule the bot to join 2, 3 minutes early. The MeetStream API'sjoin_atparameter (ISO 8601) handles this natively. - Waiting room timeout: Set

automatic_leave.waiting_room_timeoutto avoid bots lingering indefinitely if a host never admits them.

POST https://api.meetstream.ai/api/v1/bots/create_bot

Authorization: Token YOUR_API_KEY

Content-Type: application/json

{

"meeting_link": "https://meet.google.com/abc-defg-hij",

"bot_name": "Notetaker",

"join_at": "2025-06-15T14:58:00Z",

"callback_url": "https://yourapp.com/webhooks/meetstream",

"automatic_leave": {

"waiting_room_timeout": 300,

"everyone_left_timeout": 60,

"voice_inactivity_timeout": 600

}

}

State Management Across Bot Sessions

Each bot session has a lifecycle: bot.joining → bot.inmeeting → bot.stopped. Between these events, your system needs to track which bot ID corresponds to which meeting, user, or calendar event, and what to do when the bot stops.

Minimal state schema per bot session:

{

"bot_id": "b_abc123",

"meeting_url": "https://meet.google.com/...",

"user_id": "u_456",

"calendar_event_id": "evt_789",

"status": "InMeeting",

"created_at": "2025-06-15T15:00:00Z",

"transcript_webhook_received": false,

"audio_webhook_received": false

}

Store this in a fast key-value store (DynamoDB, Redis) keyed by bot_id. Your webhook handler looks up state by bot ID on every incoming event and updates accordingly. See the full API reference for the complete webhook event schema.

Scaling to Concurrent Bots

At low concurrency (under 20 simultaneous bots), a single well-resourced VM is usually enough. At 50+, you need horizontal scaling. The key insight is that bots don't share state during a session, each is independent so horizontal scaling is straightforward as long as your webhook handler is stateless and your state store is external.

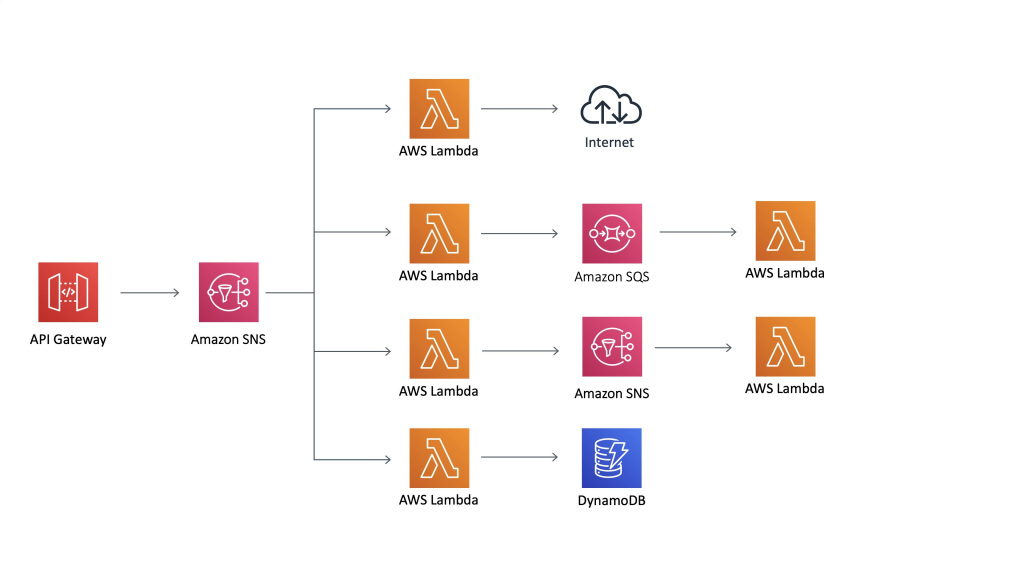

For a self-hosted fleet at this scale, the architecture looks like:

- A job queue (SQS, Redis Streams) receives bot creation requests

- A pool of worker VMs each consume from the queue and spawn bot processes

- Bot state is written to DynamoDB, not local memory

- Webhook delivery goes to an API Gateway endpoint that routes to a stateless Lambda handler

This is roughly 3, 5 weeks of engineering to build well and another 2, 3 weeks to operate safely. That's before handling platform-specific quirks like Zoom's OAuth bot framework (OBF), which requires App Marketplace approval and token refresh flows.

How MeetStream Fits

MeetStream's deploy meeting bot API abstracts the entire infrastructure layer described above. You POST a single request, meeting URL, bot name, optional callback and transcription config and MeetStream handles provisioning, cold starts, session state, and cleanup. Google Meet requires no platform setup; Zoom requires App Marketplace approval (which MeetStream provides); Teams requires no setup. Get started free at meetstream.ai.

Conclusion

Deploying meeting bots at any meaningful scale requires choosing a model that matches your concurrency profile and latency requirements. Serverless triggers handle orchestration well but can't run bot processes. Container fleets offer flexibility at the cost of operational overhead, particularly around Chromium's memory and /dev/shm requirements. Pre-warmed VM pools minimize cold starts but carry idle cost. In all cases, external state management and idempotent webhook handling are non-negotiable. If you want to skip the infrastructure entirely and focus on what your bot does rather than how it runs, see the full API reference at docs.meetstream.ai.

Frequently Asked Questions

What is the minimum infrastructure needed to deploy a meeting bot?

At minimum, you need a process environment that supports a headless browser (Chromium or equivalent), persistent WebSocket connections, and enough RAM to handle media streams, roughly 1, 2 GB per concurrent bot. A single VM works for low concurrency; container orchestration becomes necessary above 20, 30 simultaneous bots.

Why can't I run a meeting bot in AWS Lambda?

AWS Lambda's 10 GB memory limit and 15-minute execution timeout make it unsuitable for Chromium-based meeting bot processes. Lambda works well as an orchestration layer that dispatches bot creation requests to a managed API or external fleet, but the bot process itself needs a persistent runtime environment.

How do I handle meeting bot cold starts?

The most effective strategies are pre-warming the browser before receiving a meeting URL, scheduling bots to join 2, 3 minutes before the meeting starts using the join_at parameter, and maintaining a pool of idle pre-initialized instances for latency-sensitive deployments.

What happens when a bot session ends unexpectedly?

Your webhook handler will receive a bot.stopped event with a bot_status field indicating the reason, Stopped (normal), Denied (host rejected), NotAllowed (platform restriction), or Error. Handle each case in your state machine and retry or alert as appropriate for your use case.

How do I track which bot corresponds to which user or calendar event?

Store a mapping of bot_id to your internal identifiers (user ID, calendar event ID) in an external store like DynamoDB or Redis at dispatch time. When webhooks arrive with a bot_id, look up the associated context and process accordingly. Never store this mapping in local memory if you're running multiple instances.