Meeting Bot API: What It Is and How to Build with One

If you have ever tried to pull audio or transcript data out of a Zoom call programmatically, you know how fast it gets complicated. Zoom's SDK requires a desktop application context. Google Meet has no official media extraction API. Teams requires a Graph API integration with specific permissions. Each platform has different authentication requirements, different media formats, and different policies about what you can and cannot automate. Most developers who try to build something meeting-aware end up spending weeks on platform integration before writing a single line of product logic.

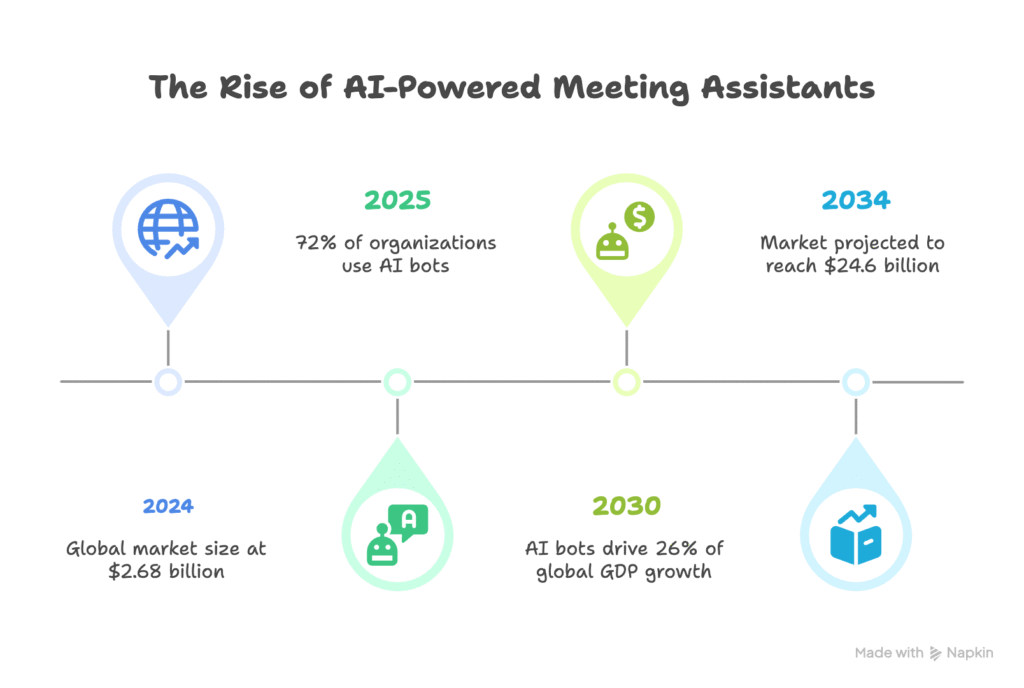

This is the problem a meeting bot API solves. It abstracts the platform complexity behind a single REST API, so you can focus on what you are building instead of how to get audio out of a browser-based video call.

This is not a consumer explainer. It is a technical guide for developers who want to understand what a meeting bot is under the hood, what you can build with one, and how to get started with MeetStream's API.

In this guide, we'll cover how meeting bots work technically, the API abstraction layer, what you can build, and a working API example. Let's get into it.

How Meeting Bots Work Technically

A meeting bot is a server-side process that joins a video conference as a participant and extracts media from the call. The implementation under the hood is more interesting than the name implies.

Meeting platforms like Zoom, Google Meet, and Teams are primarily web and desktop applications built on real-time media stacks such as WebRTC, with media usually relayed through the platform's own servers rather than sent directly between participants. They were not designed to be programmatically joined by automated processes. So meeting bots use a combination of approaches to get in the door.

Virtual display and browser automation. The most reliable method, and what production meeting bot infrastructure uses, is a browser (typically Chromium) running without a physical display in a Linux container, rendering to a virtual framebuffer (Xvfb) and playing audio into a virtual audio device (PulseAudio or equivalent). The browser navigates to the meeting URL, follows the same join flow a human would, and the virtual audio and video devices capture the call's media streams. The operating system's audio mixer receives the decoded audio from all participants, and the bot process reads from it.
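As a rough illustration of the pieces named above, the sketch below only assembles the command lines for a virtual display, a null audio sink, a browser, and a recorder. It does not launch anything and does not automate the join flow. The display number, sink name, window size, and the `chromium` binary name are assumptions for illustration, not details of any particular vendor's setup.

```python
DISPLAY = ":99"        # assumed X display number
SINK = "meeting_sink"  # assumed PulseAudio sink name


def build_capture_plan(meeting_url: str) -> dict:
    """Return argv lists and environment for a virtual-display capture setup.

    Nothing is executed here; the caller decides how to start each process.
    """
    return {
        # Virtual framebuffer: a 1280x720, 24-bit X display with no physical screen.
        "display": ["Xvfb", DISPLAY, "-screen", "0", "1280x720x24"],
        # Null sink: the browser plays call audio into it instead of real hardware.
        "audio_sink": ["pactl", "load-module", "module-null-sink", f"sink_name={SINK}"],
        # Browser pointed at the meeting URL. The binary name varies by distribution.
        "browser": [
            "chromium",
            "--window-size=1280,720",
            "--autoplay-policy=no-user-gesture-required",
            "--use-fake-ui-for-media-stream",  # auto-accept mic/camera prompts
            meeting_url,
        ],
        # The browser must render to the virtual display and play into the null sink.
        "browser_env": {"DISPLAY": DISPLAY, "PULSE_SINK": SINK},
        # Recorder reads the sink's monitor source as raw PCM16 on stdout.
        "recorder": [
            "parec",
            f"--device={SINK}.monitor",
            "--format=s16le",
            "--rate=48000",
            "--channels=2",
        ],
    }
```

Each argv list could be handed to `subprocess.Popen` in the order shown, with `browser_env` merged into the browser's environment. The join flow itself (clicking through name prompts and waiting rooms) still needs a browser automation tool on top.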

Platform SDKs. Zoom provides a Meeting SDK that allows programmatic joining with a web or native client context. This is more integrated than browser automation but requires a registered Zoom App Marketplace app with appropriate permissions. Teams has a similar path via the Bot Framework and Graph API. Google Meet has no first-party SDK for media extraction as of 2026.

Media extraction pipeline. Once the bot is in the call with access to the audio stream, it can: record to file (mixing all participants), split into per-speaker streams (harder, requires diarization at the capture layer or post-processing), stream raw PCM audio over WebSocket to a downstream processing service, or pass audio to a transcription provider in real time.
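To make the "record to file (mixing all participants)" step concrete, here is a minimal sketch that mixes several mono PCM16 little-endian streams by summing samples with clipping, then wraps the result in a WAV container. It assumes all streams share one sample rate and start at the same instant; the 48 kHz default and the function names are illustrative choices, and a production mixer would also handle timing drift and gain.

```python
import io
import sys
import wave
from array import array


def mix_pcm16(streams: list[bytes]) -> bytes:
    """Mix mono PCM16 little-endian streams by summing samples with clipping."""
    decoded = []
    for raw in streams:
        samples = array("h")
        samples.frombytes(raw)
        if sys.byteorder == "big":
            samples.byteswap()  # input is little-endian by assumption
        decoded.append(samples)

    length = max((len(s) for s in decoded), default=0)
    mixed = array("h", [0]) * length  # shorter streams are padded with silence
    for samples in decoded:
        for i, value in enumerate(samples):
            mixed[i] = max(-32768, min(32767, mixed[i] + value))

    if sys.byteorder == "big":
        mixed.byteswap()
    return mixed.tobytes()


def pcm16_to_wav(pcm: bytes, sample_rate: int = 48_000, channels: int = 1) -> bytes:
    """Wrap raw PCM16 bytes in a WAV container and return the file contents."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(2)  # 16-bit samples
        wav.setframerate(sample_rate)
        wav.writeframes(pcm)
    return buffer.getvalue()
```

Summing with clipping is the simplest mixing strategy. It keeps each speaker at original volume, at the cost of distortion when several loud speakers overlap.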

The virtual display approach also captures video. Each participant's video can be captured as a separate stream if the layout is known, which is how separate-stream recording works.

The API Abstraction Layer

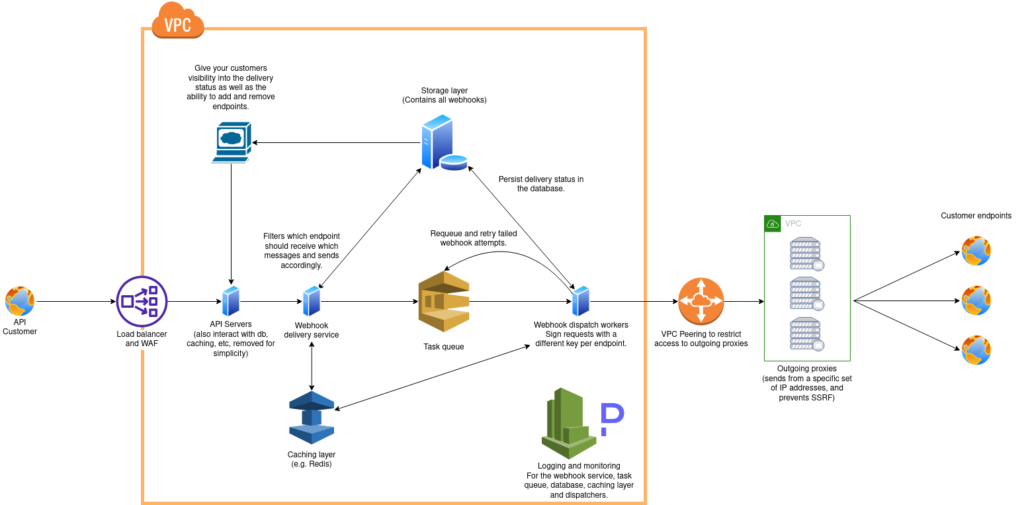

Building and maintaining this infrastructure yourself is a significant undertaking. You need to maintain platform-specific join logic for Zoom (App Marketplace approval, OAuth token management), Google Meet, and Teams separately. Platform policy changes (Zoom changing bot detection policies, Teams updating permission scopes, Google Meet adding waiting room behavior) all break your infrastructure and require immediate patches. You also need to handle container orchestration for concurrent bots, audio device management, browser version upgrades, and memory leak monitoring in long-running browser processes.

The alternative is using a meeting bot API: a managed service that handles all of this and exposes a clean REST interface. You call one endpoint with a meeting URL. The service spins up the bot, joins the call, extracts media, runs transcription, and delivers results to your webhook. You never touch the browser automation layer.

This is a genuine abstraction boundary, not just convenience. The complexity below the line is different in kind from typical API integration work. Most teams that have tried to build this in-house report 6-12 months to reach production stability before building any product logic on top.

What You Can Build

The range of products and features you can build on a meeting bot API is broad. A few concrete categories:

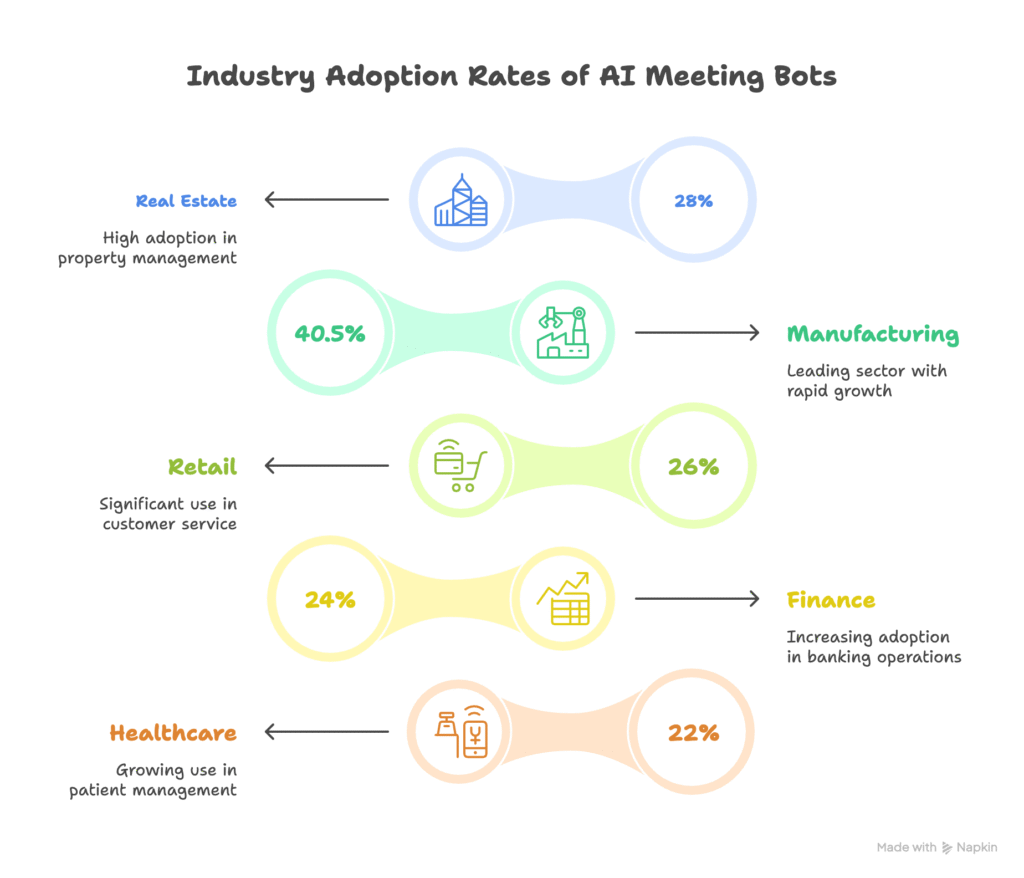

Meeting note-taking and summarization products. The classic use case. Bot joins the call, transcription runs, webhook delivers the transcript, LLM produces a summary and action items, and the output goes to Slack, Notion, or your CRM. This is a multi-day implementation, not multi-month.

Recording and compliance archival. Bot joins every call in a category (all customer calls, all hands meetings, board meetings), records audio and video, stores according to a retention policy, and makes the content searchable. Compliance and legal teams use this for audit trails.

Real-time meeting agents. This is the more interesting frontier. Using the WebSocket audio stream and live transcription, you can run an AI agent that listens to the meeting, understands context, and takes action in real time: looking up CRM data when a prospect is mentioned, surfacing relevant documentation when a technical question is asked, or responding verbally when addressed directly. The MeetStream In-Meeting Agent (MIA) provides the scaffolding for this, including voice, chat, and action response modes with MCP server connectivity for external tool access.

Sales intelligence and coaching. Post-call analysis of transcript data: talk-time ratios, objection detection, question frequency, competitor mentions. Feed this into a coaching dashboard or pipe it directly to your CRM as structured fields on the deal record.
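As a small illustration of this kind of post-call analysis, the sketch below computes talk-time ratios and per-speaker question counts from a list of transcript segments. The segment shape (`speaker`, `start`, `end`, `text`, with times in seconds) is a hypothetical one chosen for the example; the actual transcript payload may use different field names.

```python
from collections import Counter, defaultdict


def talk_time_ratios(segments: list[dict]) -> dict[str, float]:
    """Return each speaker's share of total speaking time (values sum to 1.0)."""
    totals: dict[str, float] = defaultdict(float)
    for segment in segments:
        # Guard against malformed segments where end precedes start.
        totals[segment["speaker"]] += max(0.0, segment["end"] - segment["start"])
    total = sum(totals.values())
    if total == 0:
        return {}
    return {speaker: seconds / total for speaker, seconds in totals.items()}


def question_counts(segments: list[dict]) -> Counter:
    """Count segments per speaker whose text ends with a question mark."""
    counts: Counter = Counter()
    for segment in segments:
        if segment["text"].strip().endswith("?"):
            counts[segment["speaker"]] += 1
    return counts
```

Both functions return plain dictionaries keyed by speaker, which map directly onto structured fields in a dashboard or CRM record. Objection detection and competitor mentions need more than punctuation checks and are left out of this sketch.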

Developer tooling. Some teams use meeting bots as part of their CI/CD or development workflow: recording all-hands updates, automatically documenting architecture discussions, generating ticket descriptions from engineering standups.

Getting Started with the MeetStream API

The base URL for all MeetStream API calls is https://api.meetstream.ai/api/v1/. Authentication uses a token header: Authorization: Token YOUR_API_KEY.

The core action is creating a bot. Here is the minimal working request:

```bash
curl -X POST https://api.meetstream.ai/api/v1/bots/create_bot \
  -H "Authorization: Token YOUR_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "meeting_link": "https://meet.google.com/abc-defg-hij",
    "bot_name": "MeetStream Bot",
    "callback_url": "https://your-server.com/webhooks/meetstream"
  }'
```

That request creates a bot that joins the specified meeting, records the call, and sends webhook events to your callback URL. The webhook events you'll receive:

bot.joining fires up to three times as the bot attempts to join. bot.inmeeting fires once when the bot successfully enters the call. bot.stopped fires when the bot leaves, with a bot_status field that can be Stopped, NotAllowed, Denied, or Error. After processing, audio.processed, transcription.processed, and video.processed fire as each media type becomes available.
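A webhook receiver for these events can be sketched with the standard library alone. The event names and `bot_status` values come from the description above; the assumption that the payload is a JSON object carrying the event name under an `event` key is mine, as are the handler names and the returned labels.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

MEDIA_EVENTS = {"audio.processed", "transcription.processed", "video.processed"}


def classify_event(payload: dict) -> str:
    """Map a webhook payload to a coarse action label."""
    event = payload.get("event")  # assumed key name for the event type
    if event == "bot.joining":
        return "joining"  # may arrive up to three times, so handle idempotently
    if event == "bot.inmeeting":
        return "in_meeting"
    if event == "bot.stopped":
        status = payload.get("bot_status")
        # Stopped is the normal exit; NotAllowed, Denied, and Error are failures.
        return "ended" if status == "Stopped" else f"failed:{status}"
    if event in MEDIA_EVENTS:
        return "ready:" + event.split(".")[0]
    return "ignored"


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length) or b"{}")
        except json.JSONDecodeError:
            payload = None
        if not isinstance(payload, dict):
            self.send_response(400)
            self.end_headers()
            return
        print(classify_event(payload))  # replace with real handling
        self.send_response(204)
        self.end_headers()


def serve(port: int = 8000) -> None:
    """Start a blocking HTTP server for local testing."""
    HTTPServer(("0.0.0.0", port), WebhookHandler).serve_forever()
```

Calling `serve()` starts the receiver on port 8000. Keeping the classification in a pure function makes it easy to unit test without running a server.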

The transcription.processed payload includes the transcript ID. To retrieve the full transcript:

```bash
curl https://api.meetstream.ai/api/v1/transcript/{transcript_id}/get_transcript \
  -H "Authorization: Token YOUR_API_KEY"
```

The response includes speaker-attributed segments with timestamps, ready to pass to your analysis layer.
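The same two calls can be expressed in Python with only the standard library. This is a sketch under stated assumptions: the endpoints, header, and request fields mirror the curl examples above, while the function names and the 30-second timeout are illustrative, and the response is returned as parsed JSON without assuming any particular field names.

```python
import json
import urllib.request
from urllib.parse import quote

API_BASE = "https://api.meetstream.ai/api/v1"


def build_create_bot_request(
    api_key: str,
    meeting_link: str,
    callback_url: str,
    bot_name: str = "MeetStream Bot",
) -> urllib.request.Request:
    """Build the POST request that asks a bot to join a meeting."""
    payload = {
        "meeting_link": meeting_link,
        "bot_name": bot_name,
        "callback_url": callback_url,
    }
    return urllib.request.Request(
        f"{API_BASE}/bots/create_bot",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Token {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def build_get_transcript_request(api_key: str, transcript_id: str) -> urllib.request.Request:
    """Build the GET request for a finished transcript."""
    safe_id = quote(str(transcript_id), safe="")  # keep the ID from altering the path
    return urllib.request.Request(
        f"{API_BASE}/transcript/{safe_id}/get_transcript",
        headers={"Authorization": f"Token {api_key}"},
        method="GET",
    )


def send(request: urllib.request.Request, timeout: float = 30.0):
    """Send a prepared request and return the parsed JSON body."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Separating request construction from sending keeps the network call in one place, so retries, logging, or a different HTTP client can be swapped in without touching the endpoint logic.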

Configuring Transcription and Real-Time Streaming

The create_bot endpoint accepts a recording_config object that controls transcription provider and real-time delivery. To use Deepgram nova-3 for post-call transcription:

```json
"recording_config": {
  "transcript": {
    "provider": {
      "name": "deepgram",
      "model": "nova-3"
    }
  }
}
```

For real-time streaming transcription, add live_transcription_required with your WebSocket URL, and the bot will stream transcript segments as the meeting progresses. For raw PCM audio streaming, use live_audio_required. Raw audio arrives as binary frames: msg_type (1 byte) + sid_length (2 bytes, little-endian) + speaker ID + sname_length (2 bytes, little-endian) + speaker name + PCM data. Format is PCM16 little-endian, 48kHz, mono per speaker.
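The binary frame layout described above can be decoded with a few lines of `struct`. The sketch below follows that layout field by field. Decoding the speaker ID and name as UTF-8 is an assumption (the encoding is not stated here), the meaning of individual `msg_type` values is left uninterpreted, and the class and function names are illustrative.

```python
import struct
from dataclasses import dataclass

SAMPLE_RATE = 48_000  # Hz, per the format described above
BYTES_PER_SAMPLE = 2  # PCM16


@dataclass
class AudioFrame:
    msg_type: int
    speaker_id: str
    speaker_name: str
    pcm: bytes  # PCM16 little-endian, mono

    @property
    def duration_seconds(self) -> float:
        return len(self.pcm) / (SAMPLE_RATE * BYTES_PER_SAMPLE)


def _read_prefixed(frame: bytes, offset: int) -> tuple[bytes, int]:
    """Read a 2-byte little-endian length, then that many bytes."""
    if offset + 2 > len(frame):
        raise ValueError("frame truncated before length field")
    (length,) = struct.unpack_from("<H", frame, offset)
    start, end = offset + 2, offset + 2 + length
    if end > len(frame):
        raise ValueError("frame truncated inside length-prefixed field")
    return frame[start:end], end


def parse_audio_frame(frame: bytes) -> AudioFrame:
    """Split one binary WebSocket frame into header fields and PCM payload."""
    if not frame:
        raise ValueError("empty frame")
    msg_type = frame[0]
    speaker_id, offset = _read_prefixed(frame, 1)
    speaker_name, offset = _read_prefixed(frame, offset)
    pcm = frame[offset:]
    if len(pcm) % BYTES_PER_SAMPLE:
        raise ValueError("PCM16 payload has odd length")
    return AudioFrame(
        msg_type=msg_type,
        speaker_id=speaker_id.decode("utf-8"),  # encoding is an assumption
        speaker_name=speaker_name.decode("utf-8"),
        pcm=pcm,
    )
```

A WebSocket server would call `parse_audio_frame` on each binary message and route `pcm` by `speaker_id`, for example into one buffer per speaker before handing audio to a transcription or analysis stage.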

Platform Support and Tradeoffs

Google Meet and Microsoft Teams work with no additional setup beyond your API key. Zoom requires a registered Zoom App in their App Marketplace, because Zoom has tighter controls on automated participants. This is not a MeetStream limitation; it is Zoom policy. Any compliant meeting bot that uses the Zoom SDK path requires this. On Zoom, the browser automation path avoids this requirement, but it is less stable there and against Zoom's terms of service for production use.

Upcoming platform support includes Webex and Slack Huddles. SDK support covers Python, JavaScript, Go, Ruby, Java, PHP, C#, and Swift.

How MeetStream Fits In

MeetStream is the meeting bot infrastructure layer for developers who want to build meeting-aware products without maintaining platform integrations. One API, three platforms, configurable transcription, real-time audio streaming, and AI agent support. Your team focuses on the product logic above the abstraction boundary. See the full API reference at docs.meetstream.ai.

Conclusion

A meeting bot API abstracts the hard parts of meeting platform integration: virtual display management, platform-specific join flows, media extraction, transcription orchestration, and webhook delivery. What remains is your product logic: the analysis, the integrations, and the user experience. That is a much better place to spend engineering time. Get started free at meetstream.ai.

Frequently Asked Questions

What is a meeting bot API and how does it work?

A meeting bot API is a managed service that joins video conferences programmatically and extracts media (audio, video, and transcripts) via a REST interface. Under the hood, it uses headless browsers with virtual display and audio devices to join calls as a participant. The API abstracts the platform-specific complexity of Zoom, Google Meet, and Teams behind a single endpoint.

Do I need to build meeting bot infrastructure myself?

Building meeting bot infrastructure from scratch requires virtual display management, platform-specific join logic (including Zoom App Marketplace approval), audio extraction pipelines, transcription orchestration, and webhook delivery. Most teams that attempt this report 6-12 months to reach production stability. Using a managed meeting bot API like MeetStream lets you skip the infrastructure layer and build directly on top of structured transcript and audio data.

What platforms does a meeting bot API support?

MeetStream supports Google Meet, Zoom, and Microsoft Teams via a single API. Google Meet and Teams require no additional setup. Zoom requires a registered App Marketplace application because Zoom's policies require bot participants to be registered apps. Webex and Slack Huddles are on the roadmap.

Can I use a meeting bot for real-time AI agent features?

Yes. Meeting bot APIs that support real-time audio streaming let you build AI agents that listen and respond during a call. MeetStream's MIA feature supports deploying an AI agent with voice, chat, and action response capabilities, with MCP server connectivity for external tool access. Real-time streaming uses a WebSocket delivering PCM16 audio per speaker at 48kHz.

What is the difference between an AI meeting notetaker and a meeting bot API?

An AI meeting notetaker is a consumer product: you install it, it joins your meetings, and it generates summaries. A meeting bot API is developer infrastructure: you call an endpoint with a meeting URL, and you receive structured transcript data via webhook to use however you want. The API gives you control over transcription providers, data storage, downstream integrations, and the ability to build custom features like real-time agents or compliance archival.