AI Meeting Notetaker vs Human Notes: An Honest Comparison

There is a version of this comparison that is mostly marketing: AI notetakers are instant, consistent, scalable, and they never miss an action item. Humans are slow, tired, and distracted. Case closed. But that version skips the failure modes that actually matter in production: the AI that missed every third sentence from the non-native English speaker, the transcript that labeled two participants as the same speaker because their voices were similar, the action item that required domain context the model did not have.

This is an honest comparison. AI meeting notetakers are genuinely useful and they are getting better fast. They also have real limitations that are worth understanding before you decide how to use them in your product or workflow.

The comparison matters both for people deciding whether to use an AI notetaking tool and for developers deciding whether to build AI-based meeting notes into a product vs. asking users to capture notes manually.

In this guide, we'll cover accuracy, speed, customization, scale economics, and the specific scenarios where each approach is better. Let's get into it.

Accuracy: Where AI Struggles and Where It Does Not

Word error rate (WER), the share of words a transcript gets wrong relative to a reference, is under 5% for modern speech-to-text (STT) models on clean audio with native English speakers. That is good enough that the resulting transcript is readable and usable without manual correction. But that number tells an incomplete story.

Accented speech. WER climbs significantly for non-native English speakers with strong accents. Measurements vary across models and accents, but a realistic figure for an international sales call with participants from India, Spain, and Germany is WER in the 10-20% range on some participants. For a notetaker, that means sections of the transcript are garbled or missing. The model does not know what it does not know: it produces confident output even when the underlying audio was not reliably decoded.

Technical vocabulary. Out-of-vocabulary terms are a persistent problem. If your team discusses "CUDA kernels", "HPC clusters", or company-specific product names, the STT model may phonetically approximate these as something plausible-sounding but wrong. "CUDA" becomes "cuda", and an internal product name becomes a common English word that sounds similar. Some providers support custom vocabulary lists that improve this, but those lists have to be configured and maintained.

Cross-talk. When two people speak simultaneously, transcription quality for both degrades. Models handle this differently, but overlapping speech is a hard problem for any STT system. In a heated discussion or an interruption-heavy call, cross-talk moments can produce transcript segments that are wrong for both speakers.

Audio quality. Echo, background noise, conference room microphone arrays, and participants dialing in from a car all degrade accuracy. Human notetakers adapt to poor audio by asking for clarification. AI transcribers do not.

Human notetakers, by contrast, bring two advantages: they can ask for clarification when they did not hear something, and they can apply domain knowledge to fill in gaps. A human who knows the team's product vocabulary will correctly transcribe a term they heard imperfectly. They will also flag when they missed something important rather than confidently writing down something wrong.

The honest summary: for clean audio with clear English speakers on general topics, AI transcription is accurate enough to replace human notes. For multilingual meetings, technical domains with specialized vocabulary, or poor audio conditions, AI accuracy is materially lower and the errors are not always obvious.
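The WER figures quoted in this section follow the standard definition: word-level edit distance (substitutions, deletions, insertions) divided by the number of words in a human-verified reference. A minimal sketch for spot-checking your own calls is below. The normalization is an assumption (lowercasing and whitespace splitting only, so punctuation still counts), and the function name and sample sentences are illustrative.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    # Assumed normalization: lowercase and split on whitespace only.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] is the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


reference = "we need to profile the cuda kernels before friday"
hypothesis = "we need to profile the cooler kernels before friday"
wer = word_error_rate(reference, hypothesis)
print(f"{wer:.3f}")  # one substitution over nine reference words: 0.111
```

Because WER averages over every word, one wrong technical term barely moves the number even when it is the word the reader cared about most. Measuring per speaker rather than per call makes the accent effect described above visible.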

Speed: AI Wins, With a Caveat

Speed is where AI notetakers have a clear and genuine advantage. Post-call batch transcription completes within minutes of the meeting ending. Streaming transcription produces notes in real time during the meeting. A human notetaker, even a fast one, usually finishes the notes after the meeting, when attention has shifted and some details have already faded.

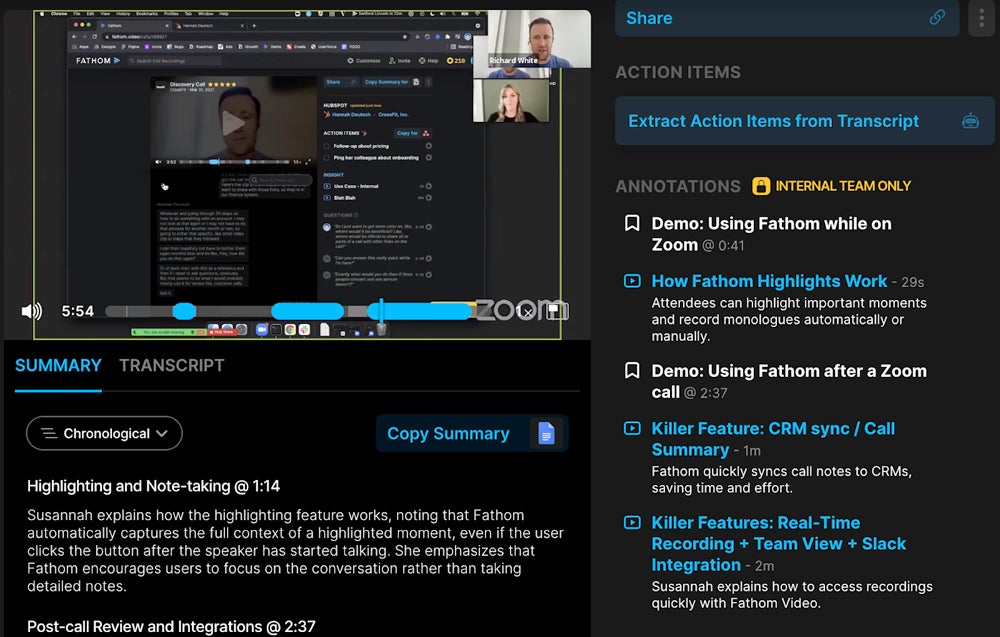

The caveat is that speed of transcript delivery is not the same as speed of useful output. A 60-minute meeting produces a long transcript. Getting from raw transcript to "here are the three things that need to happen before next week" still requires processing. If the AI extracts action items well, the end-to-end speed is still a win over human notes. If the AI extraction is mediocre or the transcript quality is low, someone needs to read through the transcript manually, and at that point the speed advantage shrinks.

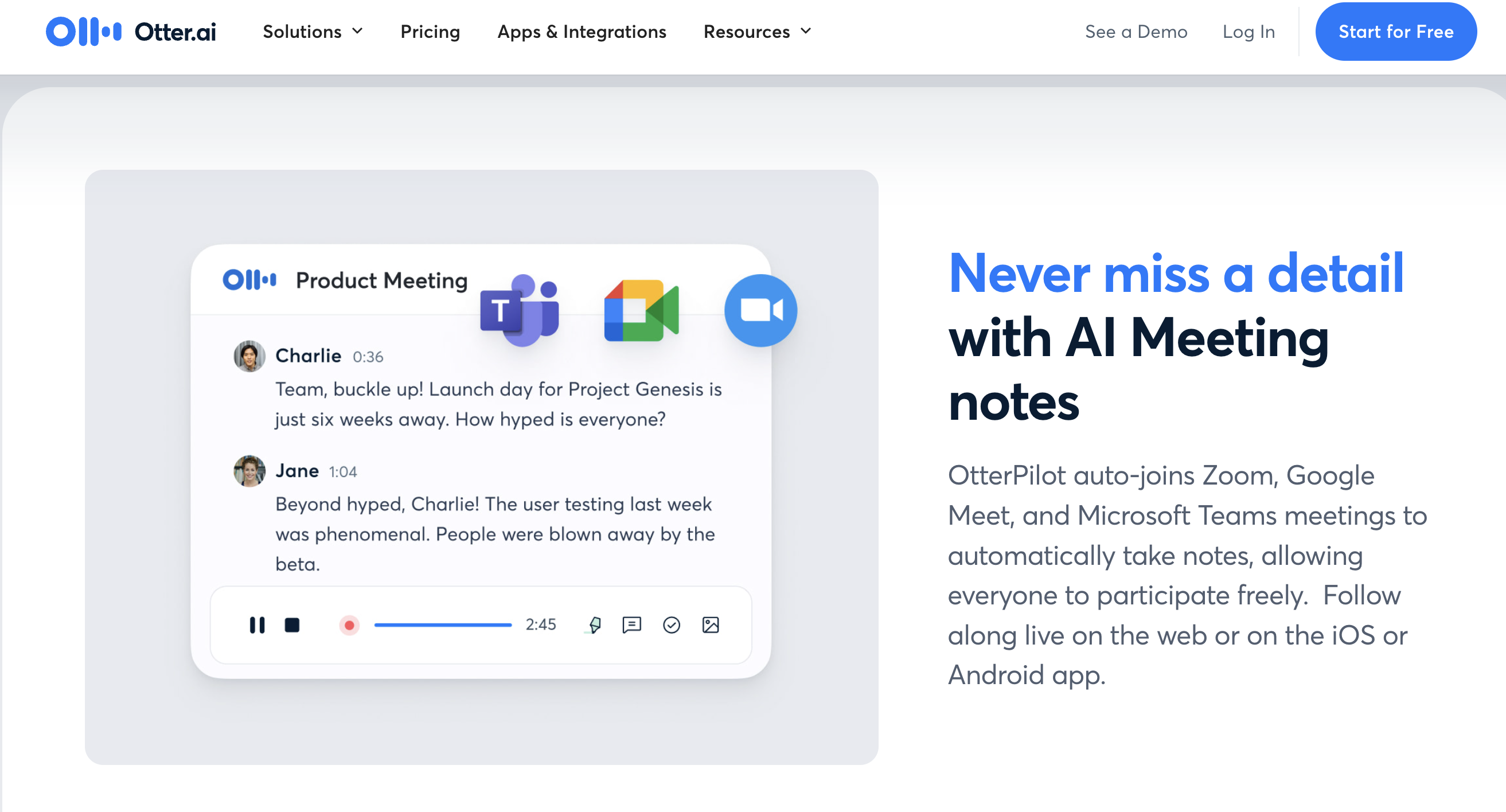

Real-time streaming transcription adds a different dimension: you can see what is being said as it is said, enabling live features that have no human-notes equivalent. Real-time coaching overlays, compliance keyword alerts, and in-meeting AI agents all require streaming infrastructure that AI uniquely enables.
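As a rough illustration of one such live feature, here is a sketch of a compliance keyword alert that scans transcript segments as they arrive. The `Segment` and `Alert` shapes and the keyword list are assumptions made for the example, not any provider's actual streaming payload.

```python
import re
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Segment:
    """One finalized chunk of streaming transcript (hypothetical shape)."""
    speaker: str
    text: str
    start_seconds: float


@dataclass
class Alert:
    keyword: str
    speaker: str
    start_seconds: float


def keyword_alerts(segments: Iterable[Segment], keywords: list[str]) -> Iterator[Alert]:
    """Yield an alert as soon as a watched phrase appears in a segment."""
    # One case-insensitive, whole-word pattern per watched phrase.
    patterns = {
        kw: re.compile(rf"\b{re.escape(kw)}\b", re.IGNORECASE) for kw in keywords
    }
    for segment in segments:
        for kw, pattern in patterns.items():
            if pattern.search(segment.text):
                yield Alert(kw, segment.speaker, segment.start_seconds)


stream = [
    Segment("Rep", "Let's walk through pricing.", 12.0),
    Segment("Rep", "This plan comes with Guaranteed Returns.", 47.5),
    Segment("Customer", "Can I get a refund later?", 63.2),
]
for alert in keyword_alerts(stream, ["guaranteed returns", "refund"]):
    print(alert)
```

Because the function is a generator, it can consume a live feed without waiting for the meeting to end. Two limits of this sketch: a phrase split across two segments is missed, and a phrase the STT model mis-transcribed never matches at all.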

Customization: What Each Approach Can and Cannot Do

AI meeting notetakers can be structured. You can specify the output format: summary paragraph, action items with owners, key decisions, open questions. LLMs are good at producing structured output from transcript input when the prompt is well-designed. The output is consistent across meetings, the same template every time, which makes it easier to parse programmatically or scan quickly.
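To make the fixed template concrete, here is a minimal sketch of a fixed output schema, a prompt that requests it, and a parser for the model's reply. The field names, prompt wording, and the "unassigned" convention are assumptions for illustration. The call to the model itself is deliberately left out, since it depends on the provider you choose.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ActionItem:
    owner: str
    task: str


@dataclass
class MeetingNotes:
    summary: str
    action_items: list[ActionItem] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    open_questions: list[str] = field(default_factory=list)


# Hypothetical prompt wording; doubled braces are literal braces for str.format.
PROMPT_TEMPLATE = """You are a meeting notetaker. Using only the transcript below,
return a JSON object with exactly these keys:
  "summary": one paragraph,
  "action_items": list of {{"owner": str, "task": str}},
  "decisions": list of str,
  "open_questions": list of str
Use "unassigned" as the owner when the transcript does not name one.

Transcript:
{transcript}
"""


def build_prompt(transcript: str) -> str:
    return PROMPT_TEMPLATE.format(transcript=transcript)


def parse_notes(raw_json: str) -> MeetingNotes:
    """Parse the model's reply; malformed replies raise instead of passing through."""
    data = json.loads(raw_json)  # raises json.JSONDecodeError on invalid JSON
    return MeetingNotes(
        summary=data["summary"],  # raises KeyError if the summary is missing
        # ActionItem(**item) raises TypeError on missing or unexpected keys.
        action_items=[ActionItem(**item) for item in data.get("action_items", [])],
        decisions=list(data.get("decisions", [])),
        open_questions=list(data.get("open_questions", [])),
    )


reply = '{"summary": "Agreed on Q3 scope.", "action_items": [{"owner": "Dana", "task": "Send the revised quote"}], "decisions": ["Ship in Q3"]}'
print(parse_notes(reply))
```

Parsing into typed objects rather than storing the raw reply is what makes the output safe to route programmatically: a reply that drifts from the template fails loudly at the boundary instead of silently producing a malformed CRM entry.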

What AI struggles to add is contextual judgment. A human notetaker who knows the company knows that when the CEO says "let's revisit this after the funding closes", that is a soft blocker on a significant decision, not a minor scheduling note. A general-purpose LLM may note it as a scheduling comment unless the prompt includes enough context to flag it correctly. Domain-aware prompts, fine-tuned models, and context injection from CRM data can close this gap, but each of them takes effort to set up.

Human notes add context that was not in the transcript. They capture the significance of a pause, the energy behind a comment, the fact that the CTO looked uncomfortable when a technical concern was raised. This qualitative layer is genuinely useful and AI does not capture it. Whether it matters depends on what the notes are for.

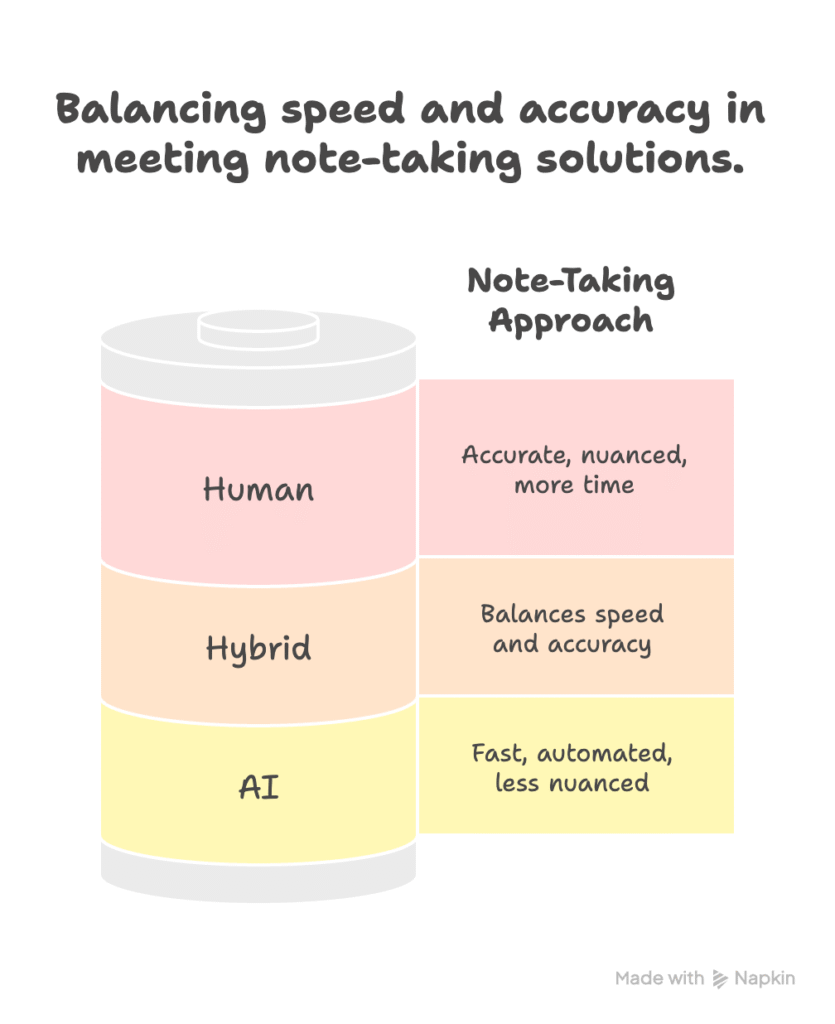

For structured, repeatable output (action items, decisions, key topics discussed), AI is the better tool. For nuanced synthesis that requires understanding unspoken context, human judgment still adds value.

Automated Meeting Notes at Scale: The Economics

At any meaningful scale, human notetaking is not viable. A sales team running 50 calls per week cannot have a dedicated notetaker on each call. Even if people take their own notes, consistency is low, format varies, and coverage depends on who is most alert on a given day.

Automated meeting notes via AI are a different cost model: the marginal cost of processing one more call is near-zero once the infrastructure is in place. This is the most compelling economic argument for AI notetaking at the product level. If you are building a product that processes thousands of calls per month, AI is not a trade-off; it is the only option.

The cost model for developer teams building this: the infrastructure layer (getting audio out of meetings reliably) is handled by a meeting bot API. The LLM layer (turning transcripts into structured output) is handled by your preferred model. The marginal cost per call is a function of meeting duration, your STT provider's rate, and your LLM token usage. At realistic call volumes for a sales intelligence product, the cost per call is low enough to be a non-issue compared to the value of the data.
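The per-call arithmetic can be sketched as follows. Every rate and token count below is a made-up placeholder chosen to keep the arithmetic easy to follow, not any provider's actual pricing. Substitute the figures from your own STT and LLM contracts.

```python
from dataclasses import dataclass


@dataclass
class Rates:
    # All values are placeholders for illustration, not real prices.
    stt_per_audio_minute: float
    llm_per_1k_input_tokens: float
    llm_per_1k_output_tokens: float


def cost_per_call(duration_minutes: float, input_tokens: int,
                  output_tokens: int, rates: Rates) -> float:
    """Marginal cost of one call: transcription plus LLM extraction."""
    transcription = duration_minutes * rates.stt_per_audio_minute
    extraction = (
        input_tokens / 1000 * rates.llm_per_1k_input_tokens
        + output_tokens / 1000 * rates.llm_per_1k_output_tokens
    )
    return transcription + extraction


placeholder_rates = Rates(
    stt_per_audio_minute=0.01,
    llm_per_1k_input_tokens=0.001,
    llm_per_1k_output_tokens=0.002,
)
# A 30-minute call; the token counts are illustrative guesses.
cost = cost_per_call(30, input_tokens=6000, output_tokens=500, rates=placeholder_rates)
print(f"${cost:.3f} per call")  # 0.300 transcription + 0.007 extraction = $0.307
```

With these placeholder numbers, transcription dominates and scales linearly with meeting length, while the extraction cost depends mostly on how much of the transcript you send to the model. Any per-minute fee for the meeting bot itself would be one more term added to the transcription line.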

When to Use Each Approach

This is not an either/or for most use cases. The practical question is what role each plays.

AI notes work best as a first pass: complete transcript, structured action items, meeting summary. Human review adds value for high-stakes decisions, sensitive conversations, and cases where the transcript quality is low. A hybrid workflow, in which AI produces the draft and the meeting owner reviews and edits it, captures most of the speed advantage while retaining the accuracy and judgment benefits of human involvement.

For developer teams building notetaking into a product, the question is slightly different: should your product use API-based automated notes, or should you ask users to take notes and submit them manually?

The case for API-based automated notes: consistency, completeness, and the ability to process notes programmatically (search, aggregate, route to CRM). The case against: you are responsible for transcription quality, and your users will notice and blame your product when the AI gets it wrong.

The practical guidance: use API-based notetaking when the notes are primarily structured data (action items, decisions, topics) and when you have control over the quality of the upstream transcription. Build in a correction interface so users can fix AI errors without re-doing everything from scratch. Avoid full automation for calls where accuracy is high-stakes and audio quality is variable.
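One way to support correction without starting over is to give every generated note item a stable ID and store user edits as an overlay keyed by that ID. The sketch below assumes that design. The `NoteItem` shape and the `edited_by_user` flag are hypothetical, not a prescribed data model.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class NoteItem:
    item_id: str
    kind: str  # e.g. "action_item", "decision", "topic"
    text: str
    edited_by_user: bool = False


def apply_corrections(items: list[NoteItem], corrections: dict[str, str]) -> list[NoteItem]:
    """Return a new list in which corrected items carry the user's text."""
    unknown = set(corrections) - {item.item_id for item in items}
    if unknown:
        raise KeyError(f"corrections reference unknown items: {sorted(unknown)}")
    return [
        replace(item, text=corrections[item.item_id], edited_by_user=True)
        if item.item_id in corrections
        else item
        for item in items
    ]


draft = [
    NoteItem("a1", "action_item", "Dana to profile the cooler kernels"),
    NoteItem("d1", "decision", "Ship in Q3"),
]
fixed = apply_corrections(draft, {"a1": "Dana to profile the CUDA kernels"})
for item in fixed:
    print(item)
```

Keeping the AI draft immutable and layering edits on top means a user fixes one wrong term in seconds, and the `edited_by_user` flag gives you a record of where the AI output needed correcting.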

The Remaining Gaps

Acknowledging the current state honestly: multilingual meeting transcription is still inconsistent. Code-switched conversations (participants switching between English and another language mid-sentence) cause particular problems for most models. Speaker diarization accuracy degrades with more than four participants, similar voices, or frequent cross-talk. Technical vocabulary handling varies by provider and requires custom vocabulary configuration to reach acceptable accuracy. These are active areas of improvement, but they are real limitations today.

The most important limitation is confidence calibration: AI transcribers produce output for audio segments they decoded poorly with the same apparent confidence as segments they decoded correctly. Humans who missed something say "I missed that." AI systems produce something plausible-sounding. For use cases where accuracy of specific details matters (legal, medical, financial), this lack of uncertainty signaling is a material concern.
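A partial mitigation is possible if your STT provider returns a per-word confidence score. The sketch below assumes such a score is available, on a 0 to 1 scale, and marks low-confidence runs so a reviewer knows where to look. The `Word` shape, the 0.6 threshold, and the `[?...?]` marker are all hypothetical choices, and a provider's scores are not guaranteed to be well calibrated, so this narrows the problem rather than solving it.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    confidence: float  # assumed to be in [0, 1]; an assumption about the provider


def mark_uncertain(words: list[Word], threshold: float = 0.6) -> str:
    """Render a transcript line, wrapping runs of low-confidence words in [?...?]."""
    parts: list[str] = []
    run: list[str] = []  # current run of consecutive low-confidence words
    for word in words:
        if word.confidence < threshold:
            run.append(word.text)
            continue
        if run:
            parts.append("[?" + " ".join(run) + "?]")
            run = []
        parts.append(word.text)
    if run:  # flush a run that reaches the end of the line
        parts.append("[?" + " ".join(run) + "?]")
    return " ".join(parts)


line = [
    Word("ship", 0.95), Word("the", 0.90),
    Word("cooler", 0.40), Word("colonels", 0.30),
    Word("friday", 0.92),
]
print(mark_uncertain(line))  # ship the [?cooler colonels?] friday
```

Surfacing the marker in the notes, and withholding auto-generated action items that overlap a marked span, is the closest automated equivalent to a human writing "I missed that."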

How MeetStream Fits In

For developers building automated meeting notes into a product, MeetStream handles the infrastructure layer: bot deployment to Zoom, Google Meet, and Teams; configurable transcription across AssemblyAI, Deepgram nova-3, and JigsawStack; speaker-attributed webhook delivery. Your product handles the LLM layer and the user experience. The API documentation covers the full parameter set for configuring transcription quality per call type.

Conclusion

AI meeting notetakers are the right default for most team workflows and most product use cases. They are fast, consistent, cheap at scale, and improving steadily. The real limitations (accented speech accuracy, technical vocabulary, confidence calibration) are worth knowing so you can design around them. For builders, the combination of API-based transcription and LLM-based extraction produces structured meeting notes that outperform manual capture on speed and consistency, with known accuracy tradeoffs that a good user experience can mitigate. Get started free at meetstream.ai.

Frequently Asked Questions

How accurate are AI meeting notetakers compared to human notes?

On clean audio with native English speakers, modern AI meeting notetakers achieve word error rates under 5%, which is accurate enough to replace manual notes for most purposes. Accuracy drops materially with accented speech (10-20% WER in some conditions), technical vocabulary, and cross-talk. Human notetakers add contextual judgment and can ask for clarification, which AI systems cannot. For structured output at scale, AI is the better choice; for nuanced synthesis of domain-specific or sensitive conversations, human review adds value.

What are the limitations of AI meeting notetaker tools?

The main limitations are: accuracy on non-native English accents, which can be materially lower than benchmark figures suggest; technical vocabulary handling, which requires custom vocabulary configuration to reach acceptable accuracy; speaker diarization quality that degrades above four participants or with similar voices; and confidence calibration, where AI systems produce plausible-sounding output even for poorly decoded audio without signaling uncertainty. These limitations are active areas of improvement but are real in production today.

When should I use automated meeting notes vs human-captured notes?

Use automated meeting notes for structured output at scale: action items, decisions, key topics, and CRM data entry. Use human review for high-stakes decisions, sensitive conversations, multilingual meetings, and any situation where accuracy of specific details matters and audio quality is variable. The most effective workflow combines both: AI produces a structured draft, and the meeting owner reviews and corrects rather than starting from scratch.

How do AI meeting notes work technically?

An AI meeting notetaker deploys a bot that joins the video call as a participant, captures audio via virtual audio devices, sends audio to an STT provider (Deepgram, AssemblyAI, or others) for transcription, and delivers a speaker-attributed transcript to a processing layer. An LLM then takes the transcript and extracts structured output (summary, action items, decisions) according to a configured prompt. The result is delivered to the user or pushed to a downstream system like Slack or a CRM.
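The stages described above can be sketched as a small pipeline with each external service passed in as a function. Everything here is a stand-in: the `Utterance` shape and the three callables are hypothetical, and the fake stages at the bottom exist only to show the data flow, not to represent any real STT, LLM, or delivery API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Utterance:
    speaker: str
    text: str


def render_transcript(utterances: list[Utterance]) -> str:
    """Flatten speaker-attributed utterances into prompt-ready text."""
    return "\n".join(f"{u.speaker}: {u.text}" for u in utterances)


def run_pipeline(
    audio: bytes,
    transcribe: Callable[[bytes], list[Utterance]],  # STT provider wrapper
    extract: Callable[[str], dict],                  # LLM extraction wrapper
    deliver: Callable[[dict], None],                 # Slack, CRM, or your own app
) -> dict:
    utterances = transcribe(audio)
    notes = extract(render_transcript(utterances))
    deliver(notes)
    return notes


# Fake stages, standing in for real services.
def fake_transcribe(audio: bytes) -> list[Utterance]:
    return [Utterance("Dana", "I will send the quote."), Utterance("Lee", "Thanks.")]


def fake_extract(transcript: str) -> dict:
    return {"summary": f"{len(transcript.splitlines())} utterances", "action_items": []}


delivered: list[dict] = []
notes = run_pipeline(b"", fake_transcribe, fake_extract, delivered.append)
print(notes)
```

Injecting each stage as a callable keeps the transcription provider, the prompt, and the downstream integration independently swappable, and it lets you test the flow end to end without a live meeting.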

Can I build meeting notes AI into my own product?

Yes. The standard architecture is: meeting bot API for capture and transcription, LLM for extraction and summarization, webhook delivery to your application. You control the transcription provider (which affects accuracy), the LLM prompt (which controls output structure), and the downstream integrations. Building on top of a meeting bot API like MeetStream handles the platform integration layer so your team focuses on the extraction logic and user experience.