Named Entity Recognition in Python for Meeting Transcripts

When a sales call ends, a good CRM update requires four things: who was in the conversation, what companies were mentioned, what products or integrations came up, and whether any dollar figures were discussed. A rep doing this manually after every call misses things. Most write incomplete notes. A few skip it entirely.

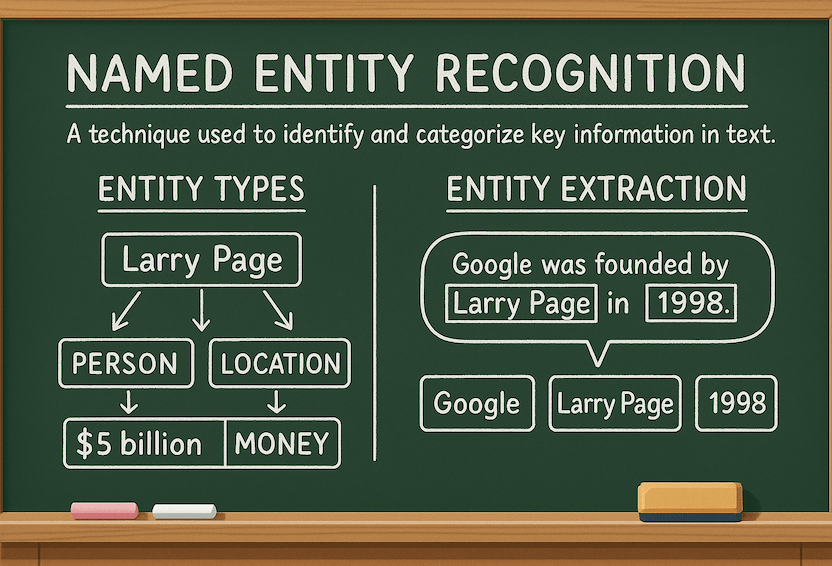

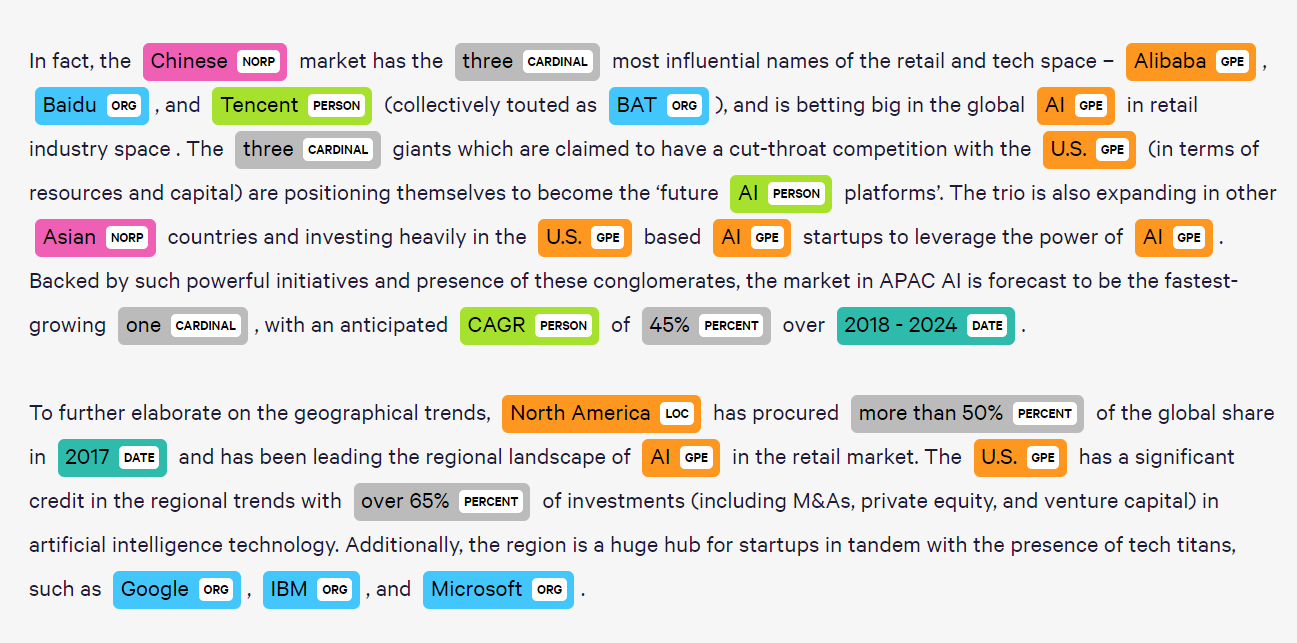

Named entity recognition (NER) in Python is the technique that pulls structured facts out of unstructured text automatically. Given a transcript, an NER pipeline identifies and labels spans like "Alice Chen" as PERSON, "Salesforce" as ORG, "Slack integration" as PRODUCT, and "$25,000" as DEAL_VALUE. The output is a structured record rather than a blob of text.

For meeting transcripts specifically, NER has some quirks. Speakers refer to colleagues and customers by first name only. Product names overlap with common words. Deal values appear in conversational forms ("somewhere around fifty K", "sub-five thousand", "250 a month"). Standard NER models trained on news or Wikipedia handle none of these gracefully. Building a meeting-specific NER pipeline requires combining a strong base model with custom entity rules that account for how people actually talk in meetings.

In this guide, you will build a full NER pipeline in Python using spaCy and Hugging Face transformers, apply it to MeetStream transcript output, and produce a structured JSON entity record per meeting. We will cover base model setup, custom entity types, pattern-based rules for meeting-specific entities, and integration with the transcript format from the MeetStream API. Let's get into it.

Setting Up spaCy with the Large English Model

spaCy's en_core_web_lg is the right starting point for meeting NER. The large model ships a full-size word vector table, which helps disambiguation when the same word can be a person name or a common noun depending on context. The medium model works, but its smaller vector table means it misses more ambiguous cases.

# Install dependencies (transformers needs a backend such as torch; httpx is used later)
# pip install spacy transformers torch httpx
# python -m spacy download en_core_web_lg

import spacy

nlp = spacy.load("en_core_web_lg")

# Test on a meeting excerpt (the parentheses join the two string literals)
text = (
    "Sarah mentioned that Acme Corp is evaluating us against Gong. "
    "They're running about 50 reps and their deal size is around $18K annually."
)

doc = nlp(text)

for ent in doc.ents:
    print(f"{ent.text:25} {ent.label_:12} {spacy.explain(ent.label_)}")

# Typical output (exact spans and labels can vary with the model version):
# Sarah PERSON People, including fictional
# Acme Corp ORG Companies, agencies, institutions
# Gong ORG Companies, agencies, institutions
# 50 CARDINAL Numerals not under another type
# 18K MONEY Monetary values

The base model handles standard entities well. The gaps emerge with meeting-specific patterns: product names that look like common words, deal values expressed informally, and speaker references by first name only. These require custom rules built on top of the base model.

Adding Custom Entity Types for Meetings

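spaCy's built-in label scheme includes PERSON, ORG, DATE, MONEY, CARDINAL, and PRODUCT, among others. For meeting analytics, you want tighter control over PRODUCT (the software tools and integrations your team cares about) plus two new types: DEAL_VALUE (informal dollar and seat count references) and COMPETITOR (mentions of competing vendors). The cleanest way to add these is with an EntityRuler component inserted before the NER pipe, so rule matches take precedence over the statistical model. The code below defines PRODUCT and DEAL_VALUE patterns; COMPETITOR patterns follow the same shape.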

# The "entity_ruler" factory is registered by name, so no class import is needed.
# Placing it before "ner" lets rule matches take precedence over the model.
ruler = nlp.add_pipe("entity_ruler", before="ner")

# Product and integration patterns
product_patterns = [
    {"label": "PRODUCT", "pattern": "Slack"},
    {"label": "PRODUCT", "pattern": "Salesforce"},
    {"label": "PRODUCT", "pattern": "HubSpot"},
    {"label": "PRODUCT", "pattern": "Notion"},
    {"label": "PRODUCT", "pattern": [{"LOWER": "slack"}, {"LOWER": "integration"}]},
    {"label": "PRODUCT", "pattern": [{"LOWER": "crm"}, {"LOWER": "integration"}]},
]

# Informal deal value patterns. What matches depends on how spaCy tokenizes
# the amount, so verify these against your own transcripts.
deal_patterns = [
    # Hedged amounts such as "around $18K" or "about fifty thousand"
    {"label": "DEAL_VALUE", "pattern": [
        {"LOWER": {"IN": ["around", "approximately", "sub", "about"]}},
        {"TEXT": "$", "OP": "?"},
        {"LIKE_NUM": True},
        {"LOWER": {"IN": ["k", "thousand", "million"]}, "OP": "?"}
    ]},
    # Bare amounts. Because "$" is optional, this also matches any plain number
    # (for example "50" in "50 reps"), so tighten it before production use.
    {"label": "DEAL_VALUE", "pattern": [
        {"TEXT": "$", "OP": "?"},
        {"LIKE_NUM": True},
        {"LOWER": {"IN": ["k", "thousand", "million", "per", "a"]}, "OP": "*"}
    ]},
]

ruler.add_patterns(product_patterns + deal_patterns)
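
The COMPETITOR type described above has no patterns in the ruler setup yet. One possible way to add them is sketched here. The helper `build_competitor_patterns` and the vendor list are hypothetical ("Gong" comes from the earlier excerpt, "Acme Notes" is a placeholder), and the whitespace split assumes each word of a name becomes one spaCy token, which will not hold for names containing punctuation.

```python
from typing import Dict, List


def build_competitor_patterns(names: List[str]) -> List[Dict]:
    """Turn vendor names into case-insensitive EntityRuler token patterns."""
    patterns = []
    for name in names:
        # One {"LOWER": ...} dict per whitespace-separated word in the name.
        tokens = [{"LOWER": word.lower()} for word in name.split()]
        if tokens:
            patterns.append({"label": "COMPETITOR", "pattern": tokens})
    return patterns


# Hypothetical competitor list; replace it with the vendors you track.
competitor_patterns = build_competitor_patterns(["Gong", "Acme Notes"])
print(competitor_patterns)
```

Passing `competitor_patterns` to `ruler.add_patterns(...)` registers them alongside the product and deal patterns. Since the ruler runs before the statistical NER pipe, a listed vendor should then be labeled COMPETITOR rather than ORG.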

Hugging Face NER for Higher Accuracy

spaCy's rule-based additions cover known entities. For organization and person names that are not in your pattern list, a transformer-based NER model from Hugging Face often does better than spaCy's statistical model on ambiguous cases. The dslim/bert-base-NER model is a good default: it is fine-tuned on CoNLL-2003 and emits four labels (PER, ORG, LOC, MISC). CoNLL-2003 is newswire text, so check the model's output on your own transcripts before relying on it.

from typing import Dict, List

from transformers import pipeline

hf_ner = pipeline(
    "ner",
    model="dslim/bert-base-NER",
    aggregation_strategy="simple"
)

def extract_entities_hf(text: str) -> List[Dict]:
    """Return entities scoring above 0.85 as plain, JSON-friendly dicts."""
    results = hf_ner(text)
    return [
        {
            "text": r["word"],
            "label": r["entity_group"],
            # Cast to a built-in float so the value serializes to JSON cleanly
            "score": round(float(r["score"]), 3),
            "start": r["start"],
            "end": r["end"]
        }
        for r in results
        if r["score"] > 0.85
    ]

# Output for a meeting excerpt:
# [{"text": "Marcus", "label": "PER", "score": 0.997, "start": 0, "end": 6},
# {"text": "Datadog", "label": "ORG", "score": 0.991, "start": 25, "end": 32}]

The aggregation_strategy="simple" parameter merges word-piece tokens into full entity spans, which is critical for multi-word names. Without it, the pipeline returns one prediction per word piece, so "Acme Corporation" comes back as several B-ORG/I-ORG fragments instead of one entity.
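
To make the merging step concrete, here is a simplified illustration of grouping B-/I- tagged tokens into spans. It is not the library's implementation; `merge_bio_tokens` is a hypothetical helper, and the token predictions (including the "Cor" / "##poration" split) are hand-written to resemble un-aggregated pipeline output.

```python
from typing import Dict, List


def merge_bio_tokens(tokens: List[Dict]) -> List[Dict]:
    """Group consecutive B-/I- tagged tokens of the same type into one span."""
    spans: List[Dict] = []
    for tok in tokens:
        prefix, _, label = tok["entity"].partition("-")
        continues = bool(spans) and prefix == "I" and spans[-1]["entity_group"] == label
        if continues:
            # Extend the open span so it also covers this token.
            spans[-1]["end"] = tok["end"]
        else:
            spans.append({"entity_group": label, "start": tok["start"], "end": tok["end"]})
    return spans


text = "Acme Corporation signed"
# Hand-written predictions; the word-piece boundaries are assumptions.
raw = [
    {"entity": "B-ORG", "start": 0, "end": 4},   # "Acme"
    {"entity": "I-ORG", "start": 5, "end": 8},   # "Cor"
    {"entity": "I-ORG", "start": 8, "end": 16},  # "##poration"
]
for span in merge_bio_tokens(raw):
    print(text[span["start"]:span["end"]], span["entity_group"])
```

The three fragments collapse into a single ORG span covering characters 0 to 16, which is the shape the rest of the pipeline expects.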

Building the Combined NER Pipeline

The production pipeline combines spaCy for rule-based entities with Hugging Face for statistical NER, then merges and deduplicates the results. Running both gives you coverage that neither provides alone.

from typing import List, Dict
from collections import defaultdict

def run_ner_pipeline(text: str) -> List[Dict]:
    # spaCy pass (rules + statistical)
    doc = nlp(text)
    spacy_entities = [
        {
            "text": ent.text,
            "label": ent.label_,
            "start": ent.start_char,
            "end": ent.end_char,
            "source": "spacy"
        }
        for ent in doc.ents
    ]

    # Hugging Face pass; map the CoNLL "PER" label onto spaCy's "PERSON"
    hf_entities = [
        {**e, "source": "hf", "label": "PERSON" if e["label"] == "PER" else e["label"]}
        for e in extract_entities_hf(text)
    ]

    # Merge: spaCy entities are listed first, so they win ties on identical spans
    all_entities = spacy_entities + hf_entities

    # Deduplicate by character span overlap. Sorting by start (longest span first)
    # means any overlapping entity starts inside a span that was already kept.
    seen_spans = set()
    merged = []
    for ent in sorted(all_entities, key=lambda e: (e["start"], -(e["end"] - e["start"]))):
        span = (ent["start"], ent["end"])
        if not any(s[0] <= ent["start"] < s[1] for s in seen_spans):
            merged.append(ent)
            seen_spans.add(span)
    return merged
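
The overlap rule can be checked in isolation, without loading either model. The sketch below mirrors the deduplication loop in `run_ner_pipeline` on hand-written candidates; `dedupe_overlapping` and `span` are hypothetical helper names, and the labels assigned to each source are invented for illustration.

```python
from typing import Dict, List


def dedupe_overlapping(entities: List[Dict]) -> List[Dict]:
    """Keep the earliest, longest span; drop anything overlapping a kept span."""
    kept: List[Dict] = []
    ordered = sorted(entities, key=lambda e: (e["start"], -(e["end"] - e["start"])))
    for ent in ordered:
        if not any(k["start"] <= ent["start"] < k["end"] for k in kept):
            kept.append(ent)
    return kept


text = "Marcus asked about the Slack integration."


def span(phrase: str, label: str, source: str) -> Dict:
    """Build an entity dict with character offsets looked up in `text`."""
    start = text.index(phrase)
    return {"text": phrase, "label": label, "start": start,
            "end": start + len(phrase), "source": source}


candidates = [
    span("Marcus", "PERSON", "spacy"),
    span("Slack integration", "PRODUCT", "spacy"),
    span("Marcus", "PERSON", "hf"),
    span("Slack", "ORG", "hf"),
]
merged = dedupe_overlapping(candidates)
print([(e["text"], e["label"], e["source"]) for e in merged])
```

The shorter "Slack" span is dropped because it overlaps the longer "Slack integration" span, not because of which model produced it. The duplicate "Marcus" is resolved in favor of spaCy only because Python's sort is stable and the spaCy candidates are listed first.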

Processing MeetStream Transcript Format

MeetStream's transcript endpoint returns a list of turn objects with speaker name, text, and timestamp. The NER pipeline processes each turn independently, then aggregates across the full meeting. Processing per turn rather than the whole transcript at once preserves speaker attribution for each entity mention.

import httpx

def fetch_and_extract_entities(bot_id: str, api_key: str) -> Dict:
    response = httpx.get(
        f"https://api.meetstream.ai/api/v1/transcript/{bot_id}/get_transcript",
        headers={"Authorization": f"Token {api_key}"}
    )
    # Fail loudly on 4xx/5xx responses instead of parsing an error body
    response.raise_for_status()
    transcript = response.json()

    # label -> entity text -> set of speakers who mentioned it
    meeting_entities = defaultdict(lambda: defaultdict(set))
    for turn in transcript:
        speaker = turn["speaker"]
        text = turn["text"]
        entities = run_ner_pipeline(text)
        for ent in entities:
            # Track which speakers mentioned each entity
            meeting_entities[ent["label"]][ent["text"]].add(speaker)

    # Convert to a serializable structure (sets become sorted lists)
    result = {}
    for label, entity_map in meeting_entities.items():
        result[label] = [
            {"text": text, "mentioned_by": sorted(speakers)}
            for text, speakers in entity_map.items()
        ]
    return result

# Example output:
# {
# "PERSON": [{"text": "Sarah", "mentioned_by": ["Alice", "Marcus"]}],
# "ORG": [{"text": "Acme Corp", "mentioned_by": ["Marcus"]}],
# "PRODUCT": [{"text": "Slack", "mentioned_by": ["Alice", "Marcus"]}],
# "DEAL_VALUE": [{"text": "$18K", "mentioned_by": ["Marcus"]}]
# }
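
To exercise the aggregation step without network access or loaded models, the per-turn logic can be separated from the HTTP call. This is a minimal sketch under stated assumptions: `aggregate_entities` and `stub_extract` are hypothetical names, the stub tags a fixed vocabulary instead of running NER, and the turns only use the "speaker" and "text" fields described above.

```python
from collections import defaultdict
from typing import Callable, Dict, List


def aggregate_entities(
    transcript: List[Dict], extract: Callable[[str], List[Dict]]
) -> Dict[str, List[Dict]]:
    """Group entity mentions by label and record which speakers said them."""
    mentions = defaultdict(lambda: defaultdict(set))
    for turn in transcript:
        for ent in extract(turn["text"]):
            mentions[ent["label"]][ent["text"]].add(turn["speaker"])
    return {
        label: [{"text": t, "mentioned_by": sorted(s)} for t, s in by_text.items()]
        for label, by_text in mentions.items()
    }


# Stub extractor: tags a fixed vocabulary so the example runs without models.
VOCAB = {"Acme Corp": "ORG", "Slack": "PRODUCT"}


def stub_extract(text: str) -> List[Dict]:
    return [{"text": term, "label": label} for term, label in VOCAB.items() if term in text]


# Hypothetical turns for illustration only.
turns = [
    {"speaker": "Marcus", "text": "Acme Corp wants the Slack integration."},
    {"speaker": "Alice", "text": "Slack is already on the roadmap."},
]
record = aggregate_entities(turns, stub_extract)
print(record)
```

Swapping `stub_extract` for `run_ner_pipeline` gives the same structure on real output, and keeping the extractor as a parameter makes the aggregation easy to unit test.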

Speaker Name Resolution

First-name-only references are the hardest NER problem in meeting transcripts. "Can you get that to Alice?" references a participant, but the model has no way to resolve "Alice" to a full name without context. A lookup table built from the meeting participant list resolves this.

PARTICIPANT_LOOKUP = {
    "alice": "Alice Chen",
    "marcus": "Marcus Webb",
    "sarah": "Sarah Lindqvist"
}

def resolve_person_entities(entities: List[Dict]) -> List[Dict]:
    resolved = []
    for ent in entities:
        if ent["label"] == "PERSON":
            full_name = PARTICIPANT_LOOKUP.get(ent["text"].lower())
            if full_name:
                # Copy the entity so the caller's dict is not mutated
                ent = {**ent, "text": full_name, "resolved": True}
        resolved.append(ent)
    return resolved

For production deployments, populate the participant lookup from the MeetStream bot's meeting metadata, which includes participant names for Google Meet, Zoom, and Teams meetings. This makes resolution automatic without manual configuration per meeting.
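
One way to derive that lookup from a participant list is sketched below. The shape of the MeetStream metadata is not shown in this guide, so the input here is assumed to be a plain list of full names, and `build_participant_lookup` is a hypothetical helper. First names shared by two participants are left out rather than guessed.

```python
from collections import defaultdict
from typing import Dict, List


def build_participant_lookup(full_names: List[str]) -> Dict[str, str]:
    """Map lowercase first names to full names, skipping ambiguous first names."""
    by_first = defaultdict(set)
    for name in full_names:
        parts = name.split()
        if parts:
            by_first[parts[0].lower()].add(" ".join(parts))
    # Keep only first names that point at exactly one participant.
    return {
        first: next(iter(names))
        for first, names in by_first.items()
        if len(names) == 1
    }


# Hypothetical participant list for illustration.
participants = ["Alice Chen", "Marcus Webb", "Sarah Lindqvist", "Sarah Okafor"]
lookup = build_participant_lookup(participants)
print(lookup)
```

Leaving "sarah" unresolved is a deliberate choice in this sketch: attaching a mention to the wrong Sarah in a CRM is usually worse than keeping the first name as-is.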

Outputting Structured JSON for CRM Updates

The final output is a structured JSON object suitable for pushing to HubSpot, Salesforce, or Notion. Each field maps to a CRM property or note field.

def format_for_crm(entity_record: Dict, bot_id: str, meeting_title: str) -> Dict:
    return {
        "bot_id": bot_id,
        "meeting_title": meeting_title,
        "contacts_mentioned": [
            e["text"] for e in entity_record.get("PERSON", [])
        ],
        "companies_mentioned": [
            e["text"] for e in entity_record.get("ORG", [])
        ],
        "products_mentioned": [
            e["text"] for e in entity_record.get("PRODUCT", [])
        ],
        "deal_values_mentioned": [
            e["text"] for e in entity_record.get("DEAL_VALUE", [])
        ]
    }

Conclusion

Named entity recognition in Python turns meeting transcripts from text blobs into structured records. The combination of spaCy's entity ruler for domain-specific patterns and a Hugging Face transformer model for statistical NER covers most entity types you encounter in sales and engineering meetings. The MeetStream transcript format is clean enough to process directly, and the speaker attribution gives entity mentions the context they need to be useful in a CRM. If you want to run this against a real meeting, get started at app.meetstream.ai to deploy your first bot.

What is named entity recognition in Python and how does it work?

Named entity recognition (NER) is a natural language processing task that identifies and classifies named spans of text into predefined categories like PERSON, ORG, DATE, and MONEY. In Python, spaCy and Hugging Face transformers are the two main libraries. spaCy uses a combination of rule-based matching and statistical models. Hugging Face NER uses transformer models fine-tuned on labeled NER datasets, which produce higher accuracy on ambiguous or novel entity types at the cost of higher inference latency.

Which spaCy model should I use for meeting transcript NER?

en_core_web_lg is the recommended default. The large model ships a table of several hundred thousand word vectors (the exact count depends on the spaCy release), which helps disambiguation when meeting participants share names with common nouns. The medium model en_core_web_md uses a much smaller vector table and works for high-volume workloads where inference cost matters more than marginal accuracy gains. Avoid en_core_web_sm for meeting analytics: it lacks word vectors and struggles with ambiguous spans.

How do I handle entity extraction for informal meeting language?

Informal meeting language requires custom rules on top of the base NER model. The most important additions are: informal deal value patterns ("around fifty K", "sub-five-hundred-dollar"), first-name-only person references resolved against a participant list, and product names that look like common words. The spaCy EntityRuler component handles these with token pattern matching and should be inserted before the statistical NER pipe so it takes precedence on known patterns.
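
Once a DEAL_VALUE span is matched, a CRM field usually needs a number rather than the raw phrase. The following is a best-effort sketch, not a complete number parser: `parse_deal_value`, `WORD_NUMBERS`, and `MULTIPLIERS` are hypothetical names, the spoken-number vocabulary is deliberately tiny, compound numbers such as "twenty-five" are not handled, and billing periods ("a month") are ignored.

```python
import re
from typing import Optional

# Small spoken-number vocabulary; extend it for your own transcripts.
WORD_NUMBERS = {"five": 5, "ten": 10, "twenty": 20, "fifty": 50}
MULTIPLIERS = {"hundred": 100, "k": 1_000, "thousand": 1_000, "million": 1_000_000}


def parse_deal_value(phrase: str) -> Optional[float]:
    """Convert an informal amount to a number, or return None if none is found."""
    # Drop "$" and thousands separators, then split digits from letters
    # so that "18k" becomes the two tokens "18" and "k".
    cleaned = phrase.lower().replace("$", " ").replace(",", "")
    tokens = re.findall(r"\d+(?:\.\d+)?|[a-z]+", cleaned)
    value: Optional[float] = None
    for tok in tokens:
        if tok[0].isdigit():
            value = float(tok)
        elif tok in WORD_NUMBERS:
            value = float(WORD_NUMBERS[tok])
        elif tok in MULTIPLIERS and value is not None:
            value *= MULTIPLIERS[tok]
    return value


for phrase in ["around $18K", "somewhere around fifty K", "sub-five thousand", "250 a month"]:
    print(phrase, "->", parse_deal_value(phrase))
```

Qualifiers such as "around" and "sub" are discarded here. If the distinction between an estimate and a ceiling matters for your pipeline, store the qualifier alongside the parsed number instead of dropping it.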

Can I run NER in real time on a meeting in progress?

Yes. Use the MeetStream transcription.processed webhook with the end_of_turn flag to process each completed speaker turn. spaCy typically runs NER on short texts in under 10ms on CPU, making it fast enough to run synchronously on each turn. A Hugging Face transformer model typically adds 50-150ms per turn, depending on hardware and turn length. Both are viable for real-time extraction. Accumulate entities in a session store keyed by bot ID and flush the final aggregated record when the bot.stopped event fires.
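
A minimal in-memory version of that session store might look like the sketch below. The event names come from the answer above, but the payload keys ("event", "bot_id", "speaker", "text") are assumptions rather than the documented webhook schema, and `EntitySessionStore`, `handle_event`, and `stub_extract` are hypothetical names.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Optional


class EntitySessionStore:
    """In-memory accumulator keyed by bot ID; use a shared store in production."""

    def __init__(self) -> None:
        self._sessions = defaultdict(lambda: defaultdict(set))

    def add_turn(self, bot_id: str, speaker: str, entities: List[Dict]) -> None:
        for ent in entities:
            self._sessions[bot_id][(ent["label"], ent["text"])].add(speaker)

    def flush(self, bot_id: str) -> List[Dict]:
        """Return the aggregated record for a meeting and forget the session."""
        session = self._sessions.pop(bot_id, {})
        return [
            {"label": label, "text": text, "mentioned_by": sorted(speakers)}
            for (label, text), speakers in sorted(session.items())
        ]


def handle_event(
    store: EntitySessionStore, event: Dict, extract: Callable[[str], List[Dict]]
) -> Optional[List[Dict]]:
    # Payload keys below are assumed for illustration.
    if event["event"] == "transcription.processed" and event.get("end_of_turn"):
        store.add_turn(event["bot_id"], event["speaker"], extract(event["text"]))
    elif event["event"] == "bot.stopped":
        return store.flush(event["bot_id"])
    return None


def stub_extract(text: str) -> List[Dict]:
    # Stand-in for run_ner_pipeline so the sketch runs without models.
    return [{"label": "PRODUCT", "text": "Slack"}] if "Slack" in text else []


store = EntitySessionStore()
events = [
    {"event": "transcription.processed", "bot_id": "bot-1", "speaker": "Alice",
     "text": "Does it work with Slack?", "end_of_turn": True},
    {"event": "transcription.processed", "bot_id": "bot-1", "speaker": "Marcus",
     "text": "Yes, Slack is supported.", "end_of_turn": True},
    {"event": "bot.stopped", "bot_id": "bot-1"},
]
final_record = None
for event in events:
    result = handle_event(store, event, stub_extract)
    if result is not None:
        final_record = result
print(final_record)
```

A process-local dictionary is lost on restart and is not shared across workers, so a multi-process deployment would need an external store with the same add and flush operations.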

How is NER different from zero-shot classification for meeting transcripts?

NER extracts specific entity spans from within text and assigns them a type label. Zero-shot classification assigns a category to the entire text. They are complementary: NER tells you that the word "Salesforce" in a transcript refers to a company, and zero-shot classification tells you that the full turn is a "competitor mention" or "technical discussion". For complete meeting analytics, use NER to extract facts and zero-shot classification to label the semantic function of each turn.

Frequently Asked Questions

What entity types are most valuable to extract from meeting transcripts?

The highest-value entity types are person names (for action item assignment), organization names (for CRM matching), dates and times (for deadline extraction), product names (for competitive intelligence), and monetary amounts (for deal tracking). These five categories cover the majority of downstream automation use cases.

Which NER library works best for meeting transcript data?

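spaCy with the en_core_web_trf transformer model provides the best accuracy among spaCy's English pipelines, with F1 scores above 0.88 for standard entity types. Those figures come from general-purpose benchmark data rather than meeting transcripts, so measure on your own data and weigh the gain against slower inference than en_core_web_lg. For domain-specific entities like product names or internal project codes, fine-tune a spaCy model on a small labeled dataset of your own meeting transcripts using spaCy's training CLI.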

How do I handle NER errors when entity spans cross sentence boundaries?

Use a sliding window approach with overlapping context. Process each sentence with 2 sentences of context on either side and take the entity prediction from the center window. This reduces errors for entities like "Director of Engineering at Acme Corp" where the title and organization span a sentence boundary.
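
The windowing described above can be sketched as follows. `sentence_windows` and `in_center` are hypothetical helpers; the sketch assumes sentences are already split and joined with single spaces, and it keeps an entity only when it starts inside the center sentence, so a span that runs into the next sentence is still reported exactly once.

```python
from typing import Dict, Iterator, List, Tuple


def sentence_windows(
    sentences: List[str], context: int = 2
) -> Iterator[Tuple[str, int, int]]:
    """Yield (window_text, center_start, center_end) for each sentence.

    The two offsets locate the center sentence inside window_text.
    """
    for i, sentence in enumerate(sentences):
        left = sentences[max(0, i - context):i]
        right = sentences[i + 1:i + 1 + context]
        # The center starts after the joined left context plus one space.
        center_start = len(" ".join(left)) + 1 if left else 0
        window_text = " ".join(left + [sentence] + right)
        yield window_text, center_start, center_start + len(sentence)


def in_center(entity: Dict, center_start: int, center_end: int) -> bool:
    """True when the entity starts inside the center sentence."""
    return center_start <= entity["start"] < center_end


# Hypothetical, badly segmented sentences for illustration.
sentences = [
    "Thanks for joining.",
    "I spoke with the Director of Engineering at Acme.",
    "Corp wants a pilot.",
    "Pricing is next.",
]
for window_text, start, end in sentence_windows(sentences, context=1):
    print(repr(window_text[start:end]), "in window of", len(window_text), "chars")
```

Run the NER pipeline on each `window_text`, then filter its entities with `in_center` before merging results across windows.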

How do I deduplicate entity mentions across a long meeting transcript?

After extraction, normalize entity strings using fuzzy matching (fuzz.token_sort_ratio from rapidfuzz) to merge "Google" and "Google Inc." into a single canonical entity. Then cluster mentions by entity type and canonical form to build a per-meeting entity graph that links all references to the same real-world entity.
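
A rough sketch of that normalization and clustering step follows. To stay dependency-free it swaps in difflib.SequenceMatcher from the standard library as a stand-in for rapidfuzz's fuzz.token_sort_ratio, with sorted tokens imitating the token-sort behavior; note that SequenceMatcher scores range from 0 to 1 while rapidfuzz scores range from 0 to 100. The suffix list, the 0.9 threshold, and the helper names are all assumptions.

```python
import re
from difflib import SequenceMatcher
from typing import Dict, List

# Corporate suffixes to ignore when comparing names (illustrative, not exhaustive).
SUFFIXES = {"inc", "corp", "corporation", "llc", "ltd", "co"}


def normalize(name: str) -> str:
    """Lowercase, strip punctuation and suffixes, and sort the remaining words."""
    words = re.findall(r"[a-z0-9]+", name.lower())
    core = [w for w in words if w not in SUFFIXES]
    # Fall back to all words if the name consists only of suffixes.
    return " ".join(sorted(core or words))


def cluster_mentions(mentions: List[str], threshold: float = 0.9) -> Dict[str, List[str]]:
    """Greedy clustering: each mention joins the first cluster it resembles."""
    clusters: Dict[str, List[str]] = {}
    for mention in mentions:
        key = normalize(mention)
        for canonical in clusters:
            score = SequenceMatcher(None, key, normalize(canonical)).ratio()
            if score >= threshold:
                clusters[canonical].append(mention)
                break
        else:
            # No existing cluster is close enough, so start a new one.
            clusters[mention] = [mention]
    return clusters


clusters = cluster_mentions(["Google", "Google Inc.", "Acme Corp", "Acme Corporation"])
print(clusters)
```

The first mention seen becomes the canonical form in this sketch. Cluster within a single entity type, as the answer above suggests, so that a person and a company with similar names are never merged.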