AI Meeting Agent vs Transcription Tool: Key Differences

Most developers building on meeting APIs start with the same mental model: a bot joins the call, listens, produces a transcript, and leaves. That's a transcription tool. It's passive, it captures, and everything interesting happens after the meeting ends. That model covers a lot of use cases well: note-taking, CRM automation, compliance recording, analytics. But it's also a ceiling. When users want something that responds during the meeting, takes actions in real time, or retrieves information on demand mid-call, a passive transcription tool isn't the right abstraction.

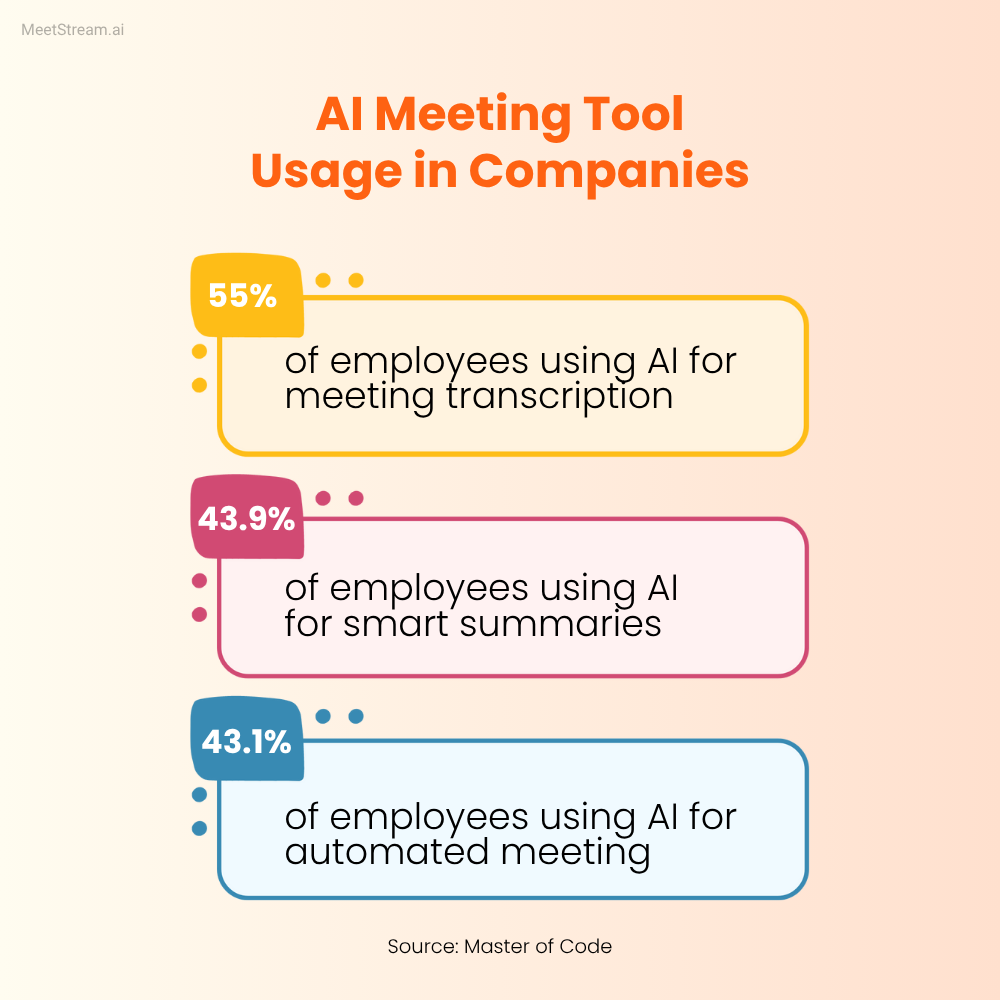

The distinction between an AI meeting agent and a transcription tool is becoming more consequential as the state of the art moves from post-call processing toward real-time interaction. Products are shipping that let an AI agent join a meeting and answer questions aloud, update a CRM record while the call is in progress, or retrieve a relevant document when a prospect asks about a specific case study. These aren't transcription tools with extra features; they're a different category of product, with different architecture, different latency requirements, and different failure modes.

Understanding the distinction matters for both product and architecture decisions. If you're building a product that processes recordings after calls end, a passive transcription pipeline is the right architecture, and it's simpler to build. If you're building something that needs to participate in meetings, you need an active agent model and the real-time infrastructure to support it. Building the wrong one for your use case wastes time and produces a product that doesn't satisfy the actual requirement.

This post draws a precise technical line between passive and active meeting bots (the practical core of the meeting bot vs notetaker question). It covers the architecture for each, explains where MIA (Meeting Intelligence Agents) fits as the active agent model, and walks through latency requirements and MCP tool integration for the agent use case. A comparison table then maps use cases to the right model.

Passive Transcription: What It Is and What It's Good For

A passive transcription tool is a bot that joins a meeting, records audio and video, produces a transcript after the meeting ends, and delivers that transcript (and sometimes a recording) via webhook. It doesn't speak. It doesn't respond to what's said. It doesn't take actions during the meeting. Its entire value is the artifact it produces after the meeting concludes.

This is the right tool for a large class of use cases: automated note-taking and action item extraction, CRM automation (the transcript triggers a deal update after the meeting ends), compliance recording (meeting content is captured and retained), customer feedback extraction (customer language is analyzed post-call), and analytics (sentiment trends, competitor mentions, talk-time ratios). All of these use the transcript as input to a processing pipeline that runs after the meeting.

The architecture is straightforward: deploy a bot → bot joins meeting → meeting ends → transcription.processed webhook fires → your pipeline processes the transcript → output goes wherever it needs to go (CRM, Slack, database, Notion). The latency requirement is post-call, so you have minutes to hours to complete processing without affecting user experience. Errors are recoverable: if your pipeline fails, you can retry against the stored transcript.
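Below is a minimal sketch of the receiving end of that pipeline. It assumes a JSON webhook body with "event", "bot_id", and "transcript" fields. Only the transcription.processed event name comes from this post; the field names are placeholders, so check the actual payload. The process callback stands in for whatever your pipeline does with the transcript.

```python
import json
from typing import Callable

# Assumed payload shape (only the event name comes from the text):
# {"event": "transcription.processed", "bot_id": "...", "transcript": [...]}


def handle_webhook(body: str, process: Callable[[str, list], None],
                   max_attempts: int = 3) -> bool:
    """Run the post-call pipeline for a transcription.processed webhook.

    Returns True if the transcript was processed, False if the event was ignored.
    """
    payload = json.loads(body)
    if payload.get("event") != "transcription.processed":
        return False  # other events are not this pipeline's concern
    bot_id = payload["bot_id"]
    transcript = payload["transcript"]
    for attempt in range(1, max_attempts + 1):
        try:
            process(bot_id, transcript)  # e.g. summarize, then update the CRM
            return True
        except Exception:
            # No one is waiting in real time, so retrying is cheap and safe.
            if attempt == max_attempts:
                raise
    return False
```

Because nothing waits on the result in real time, a simple retry loop is enough. In production you would typically acknowledge the webhook immediately and run process from a job queue, so a slow CRM call can't time out the webhook delivery.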

The passive model is also operationally simpler. There's no need for real-time infrastructure, no sub-second latency requirements, and no complex state management across a live session. For most meeting automation use cases, passive transcription is the correct architecture, and it's faster to build and easier to maintain.

Active AI Agents: What Changes

An active meeting agent participates in the meeting in real time. It listens to the audio stream as the meeting progresses. It can speak (Voice type), send chat messages visible to participants (Chat type), or take backend actions triggered by what it hears (Action type). The defining characteristic is that it operates during the meeting, not after it.

This changes the architecture in fundamental ways. You need a live audio stream, not just a post-call recording. You need low-latency processing: if the agent takes 15 seconds to respond to a spoken question, the conversation has moved on and the response is useless. You need state management across the duration of the meeting (what's been said so far, what context is live, what actions have been taken). And you need failure handling that degrades gracefully in front of live participants: a silent failure is better than a garbled audio response mid-call.
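The "silent failure beats a bad response" rule can be expressed with Python's asyncio, as in the sketch below. The generate coroutine is a hypothetical stand-in for the agent's transcription-to-LLM-to-TTS path. deadline_s is whatever budget your use case allows.

```python
import asyncio
from typing import Awaitable, Callable, Optional


async def respond_or_stay_silent(generate: Callable[[], Awaitable[str]],
                                 deadline_s: float) -> Optional[str]:
    """Return the agent's reply, or None if it is late or fails.

    None means "say nothing": in a live meeting, silence is a safer failure
    than a late or garbled response.
    """
    try:
        return await asyncio.wait_for(generate(), timeout=deadline_s)
    except asyncio.TimeoutError:
        return None  # the conversation has moved on; drop the reply
    except Exception:
        return None  # log this in a real system; never speak a broken result
```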

The use cases that require an active agent are specifically those where the value depends on timing within the meeting: answering a question asked during the call, surfacing a piece of information at the moment it's relevant, updating a record while the conversation is still in context, executing a command spoken by a participant. A post-call pipeline cannot do these things. An active agent can.

MIA: The Active Agent Model in MeetStream

MIA (Meeting Intelligence Agents) is MeetStream's framework for deploying active agents into meetings. When creating a bot, you include an agent_config_id in the payload that references a configured agent. That agent runs alongside the bot during the meeting, connected to the live audio stream.
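The sketch below shows what that looks like on the request side. Only agent_config_id comes from this post; meeting_link and bot_name are placeholder field names. The function just builds the request body rather than calling any endpoint, so check the MeetStream API reference for the real schema.

```python
from typing import Optional


def build_bot_payload(meeting_link: str, agent_config_id: Optional[str] = None) -> dict:
    """Build a bot-creation request body.

    "meeting_link" and "bot_name" are placeholder field names (assumptions);
    agent_config_id is the parameter that references a configured MIA agent.
    """
    payload = {"meeting_link": meeting_link, "bot_name": "Meeting Assistant"}
    if agent_config_id is not None:
        # Referencing a configured agent is what makes this an active bot.
        payload["agent_config_id"] = agent_config_id
    return payload
```

Leaving agent_config_id out produces an ordinary passive bot. That's why moving from passive to active doesn't require a different deployment flow.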

MIA supports three agent action types. Voice agents can speak in the meeting: they receive the audio stream, process it, and synthesize a spoken response delivered back into the meeting audio. Chat agents send text messages to the meeting chat, visible to all participants. Action agents take backend actions triggered by what they hear (updating a database record, calling an external API, sending a notification) without any visible output in the meeting itself.
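In your own code, one way to model the three types is a single agent core whose output is routed by type. The sketch below is hypothetical: AgentType, AgentOutput, and dispatch are illustrative names, not MIA's API. The handlers would wrap your TTS, chat, and backend integrations.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict


class AgentType(Enum):
    VOICE = "voice"    # text is synthesized and played into the meeting audio
    CHAT = "chat"      # text is posted to the meeting chat
    ACTION = "action"  # backend side effect; nothing appears in the meeting


# Whether participants will see or hear the output of each type.
VISIBLE_TO_PARTICIPANTS = {AgentType.VOICE: True, AgentType.CHAT: True, AgentType.ACTION: False}


@dataclass
class AgentOutput:
    agent_type: AgentType
    content: str


def dispatch(output: AgentOutput, handlers: Dict[AgentType, Callable[[str], None]]) -> bool:
    """Send output to the handler for its type; return True if participants will notice it."""
    handlers[output.agent_type](output.content)
    return VISIBLE_TO_PARTICIPANTS[output.agent_type]
```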

MIA operates in two modes. Realtime mode processes audio with sub-second latency, which suits interactive voice agents that need to respond to spoken questions within the natural rhythm of conversation. Pipeline mode processes audio in chunks with higher latency but supports more complex processing (multiple LLM calls, external API lookups, multi-step reasoning), which suits agents that take actions or provide information requiring more computation.

The difference between these modes is a latency-capability tradeoff. Realtime mode is fast but has constraints on processing complexity. Pipeline mode can do more complex things but has higher latency. A voice agent that answers factual questions from a knowledge base might work in Realtime mode. An agent that looks up a prospect's CRM history and surfaces relevant deal context needs Pipeline mode to accommodate the API call latency.
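The decision rule in this section can be written down as a small helper. The 2-second cutoff is this post's voice-response guideline, not a documented MeetStream threshold. The returned strings are illustrative labels, not config values.

```python
def choose_mia_mode(latency_budget_s: float, needs_external_calls: bool) -> str:
    """Pick "realtime" or "pipeline" from the latency requirement first.

    The 2.0 s cutoff is a rule of thumb from the voice-latency discussion,
    not a platform constant.
    """
    if latency_budget_s <= 2.0:
        if needs_external_calls:
            # A conversational-pause budget leaves little room for API round-trips.
            raise ValueError("a sub-2s budget conflicts with external lookups; "
                             "relax the budget or move the lookup off the voice path")
        return "realtime"
    # With a looser budget, only pay for chunked processing when it's needed.
    return "pipeline" if needs_external_calls else "realtime"
```

Raising on the conflicting case is deliberate. A sub-2s voice agent that also needs a CRM lookup is a requirements problem to resolve up front, not something to paper over at runtime.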

Latency Requirements by Use Case

Latency is the primary engineering constraint distinguishing active agent products from passive ones. Here's the practical breakdown:

For voice response agents, the maximum acceptable latency from end of user utterance to start of agent speech is approximately 1.5 to 2 seconds. Beyond 2 seconds, the agent's response arrives in the awkward silence that occurs when meeting participants are waiting to see if anyone else will speak, which creates confusion. Under 1 second feels natural. This is a very tight latency budget for a pipeline that includes: audio capture → speech endpointing → transcription → intent classification → LLM inference → text-to-speech synthesis → audio delivery.

Achieving sub-1.5s voice response latency requires: streaming transcription (don't wait for the full utterance to be transcribed before starting processing), streaming LLM inference (start TTS while the LLM is still generating), a low-latency TTS model, and minimal network hops. This is achievable but requires careful pipeline optimization. Each stage needs a time budget, and the total must stay under the threshold.
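A budget like this is easier to enforce when it's written down per stage. The numbers below are illustrative placeholders, not measurements. With streaming, the LLM and TTS entries mean time to first token and time to first audio, not full completion.

```python
# Illustrative per-stage budgets in milliseconds (placeholders, not measurements).
# With streaming, the LLM and TTS entries mean time to first token / first audio.
VOICE_BUDGET_MS = {
    "audio_capture": 50,
    "endpointing": 200,
    "transcription": 250,
    "intent": 50,
    "llm_first_token": 400,
    "tts_first_audio": 200,
    "delivery": 100,
}

THRESHOLD_MS = 1500  # end of user utterance -> start of agent speech


def total_budget(stages: dict) -> int:
    return sum(stages.values())


def stages_over_budget(measured: dict, budget: dict) -> list:
    """Return the stages whose measured latency exceeds their allotted budget."""
    return [stage for stage, ms in measured.items() if ms > budget.get(stage, 0)]


# The plan itself must fit under the threshold before any measurement matters.
assert total_budget(VOICE_BUDGET_MS) <= THRESHOLD_MS
```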

For chat agents, latency tolerance is higher: 3 to 5 seconds for a chat message to appear is acceptable, because chat is a secondary channel in most video meetings and participants don't expect real-time conversational rhythm from it. This relaxed latency budget allows for richer processing: multiple sequential LLM calls, external API lookups, complex retrieval.

For action agents (backend actions triggered by meeting content), the latency visible to participants is often zero: the action happens in the background. The constraint is internal consistency. If an agent is supposed to create a CRM record when a certain phrase is spoken, the record should exist by the time the meeting ends and the sales rep goes to look at it. A few seconds of action latency is fine.
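For action agents, the key property is that the conversation path never waits on the action, yet nothing is lost when the meeting ends. Here is a minimal asyncio sketch of that pattern; ActionRunner is a hypothetical name.

```python
import asyncio
from typing import Any, Callable, Coroutine, List


class ActionRunner:
    """Runs backend actions off the conversation path and drains them at meeting end."""

    def __init__(self) -> None:
        self._tasks: List[asyncio.Task] = []

    def submit(self, action: Callable[[], Coroutine[Any, Any, None]]) -> None:
        # Schedule the action and return immediately; the agent never awaits it mid-call.
        # Must be called from inside the running event loop.
        self._tasks.append(asyncio.create_task(action()))

    async def drain(self) -> int:
        """Wait for all pending actions and return how many of them failed."""
        results = await asyncio.gather(*self._tasks, return_exceptions=True)
        self._tasks.clear()
        return sum(1 for r in results if isinstance(r, Exception))
```

Calling drain() from your end-of-meeting handler gives you the "record exists when the rep looks" guarantee. It also returns a count of failures to retry or report.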

MCP Integration for Tool Use in Active Agents

The Model Context Protocol (MCP) is a standardized interface for connecting AI agents to external tools such as databases, APIs, knowledge bases, and communication services. For interactive meeting agents, MCP integration is what distinguishes an agent that can only answer questions from its training data from one that can retrieve live information, execute actions, and interact with the tools a user's organization actually uses.

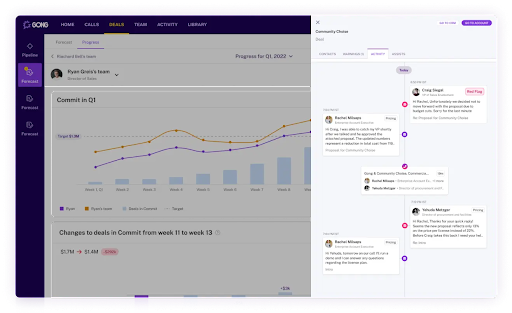

A sales agent with MCP integration can pull a prospect's current deal stage from HubSpot when asked "what's the status of this deal?" mid-call. A support agent can retrieve a customer's account history from Zendesk when a complaint is raised. A research agent can query a vector database of internal documents when a meeting participant asks a question that requires institutional knowledge.

The technical integration works like this: your agent's action pipeline includes MCP tool calls as one of its output types. When the agent determines that answering a question requires a tool lookup, it invokes the MCP tool with the appropriate parameters and incorporates the result into its response. The response latency budget needs to account for the tool call's round-trip time: a slow external API will push voice response latency over the acceptable threshold.
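The sketch below shows budget-aware tool use. It computes how much of the turn's latency budget remains, holds back a reserve for the LLM response and TTS, and gives the tool call only what's left. call_tool is a hypothetical stand-in for your MCP client's tool invocation. The (name, arguments) shape mirrors how MCP tool calls are addressed, but the exact client API varies by SDK.

```python
import asyncio
import time
from typing import Any, Awaitable, Callable, Dict, Optional

# Hypothetical signature for an MCP tool invocation: tool name + arguments dict.
ToolCaller = Callable[[str, Dict[str, Any]], Awaitable[Any]]


async def call_tool_within_budget(call_tool: ToolCaller, name: str, args: Dict[str, Any],
                                  turn_started: float, budget_s: float,
                                  reserve_s: float) -> Optional[Any]:
    """Give the tool only the time left after reserving room for LLM + TTS.

    turn_started is a time.monotonic() timestamp taken at end of utterance.
    Returns None if there is no time left or the tool is too slow.
    """
    remaining = budget_s - (time.monotonic() - turn_started) - reserve_s
    if remaining <= 0:
        return None  # no time left for a lookup
    try:
        return await asyncio.wait_for(call_tool(name, args), timeout=remaining)
    except asyncio.TimeoutError:
        return None  # tool too slow for this turn
```

Returning None lets the agent fall back gracefully. For example, it can answer without the lookup, or post the result to chat once it arrives.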

Tool selection matters for agent reliability. An agent with 50 available tools typically performs worse than an agent with 5 well-designed tools for a specific use case, because choosing the right tool for a given query gets harder as the tool count grows. Design meeting agents with a focused tool set: the tools that will actually be used in the specific meeting context, rather than every available integration.
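One simple way to keep the tool set focused is an explicit allowlist per meeting type. The tool names and meeting types below are hypothetical placeholders for your own MCP tools.

```python
# Hypothetical registry of every integration you have implemented.
ALL_TOOLS = {"crm_get_deal", "crm_update_deal", "zendesk_get_account",
             "docs_search", "calendar_create_event", "slack_notify"}

# Per-meeting-type allowlists: expose only what that context actually needs.
TOOLSETS = {
    "sales_call": {"crm_get_deal", "crm_update_deal", "docs_search"},
    "support_call": {"zendesk_get_account", "docs_search"},
}


def tools_for(meeting_type: str) -> set:
    """Return the tools to expose for a meeting type; unknown types get none."""
    allowed = TOOLSETS.get(meeting_type, set())
    missing = allowed - ALL_TOOLS
    if missing:
        raise KeyError(f"toolset references unregistered tools: {sorted(missing)}")
    return set(allowed)  # copy so callers can't mutate the shared config
```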

Comparison Table: Passive Transcription vs. Active Agent

| Dimension | Passive Transcription Tool | Active AI Agent (MIA) |
|---|---|---|
| When it operates | After the meeting ends | During the meeting, in real time |
| Speaks in meetings | No | Yes (Voice type) |
| Sends chat messages | No | Yes (Chat type) |
| Takes backend actions | Post-call only | During and after (Action type) |
| Latency requirement | Minutes to hours | Sub-2s (voice), sub-5s (chat) |
| Infrastructure complexity | Low | High |
| Failure mode | Retry against stored transcript | Graceful degradation in live session |
| MCP tool integration | Post-call pipeline only | Real-time during meeting |
| Right for | Notes, CRM, compliance, analytics | Interactive Q&A, real-time coaching, voice commands |

When to Use Each: Product Architecture Decisions

The decision tree is simpler than it might seem. Ask one question: does the value of this feature require it to happen during the meeting, or is the same value achievable post-call?

If a sales rep needs coaching feedback, there are two approaches. Post-call: process the transcript and generate a coaching report that the rep reads before their next call. During the call: the agent hears an objection and delivers a nudge in real time, while it can still affect the outcome. These are different products that deliver different value. The during-call version requires an active agent; the post-call version doesn't.

For most organizations adopting meeting automation for the first time, starting with passive transcription is the right call. The infrastructure is simpler, the failure modes are easier to handle, and the value (automated notes, CRM updates, structured summaries) is substantial and immediate. Passive transcription products ship faster, are easier to maintain reliably, and cover the majority of high-value use cases.

Active agents make sense when you have a specific interactive use case, a user base that's asking for real-time capability, and the engineering capacity to build and maintain real-time infrastructure. They're also a stronger product differentiation story: post-call transcription is becoming commoditized, while real-time interaction is harder to replicate.

State Management in Active Agent Sessions

One engineering challenge unique to active agents is session state. A passive transcription pipeline is stateless across the meeting duration: you process the transcript at the end and don't need to track anything mid-session. An active agent needs context across the entire meeting: what's been said, what questions have been asked, what actions have been taken, what information has been retrieved.

This session state needs to persist for the duration of the meeting (potentially 60 to 90 minutes), be accessible with low latency for each processing cycle, and be cleared or archived cleanly when the meeting ends. An in-memory store per active session (Redis or an in-process store) works for session state. The context window you maintain for the agent's LLM calls needs careful management: in a long meeting, you'll exceed context limits if you naively append every transcript segment to a growing context. A sliding window approach (keeping the last N minutes of conversation plus key summaries) balances context richness with context length.
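Here is a minimal sketch of the sliding-window approach. It assumes transcript segments arrive with a timestamp in seconds from meeting start. SessionContext is a hypothetical name, and how you summarize the evicted segments (typically another LLM call) is left to your pipeline. In a multi-process deployment the same structure would live in Redis rather than process memory.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, List


@dataclass
class Segment:
    t: float      # seconds since meeting start
    speaker: str
    text: str


@dataclass
class SessionContext:
    """Per-meeting context: a recent verbatim window plus summaries of older material."""
    window_s: float = 300.0  # keep the last 5 minutes verbatim (tunable)
    recent: Deque[Segment] = field(default_factory=deque)
    summaries: List[str] = field(default_factory=list)
    evicted: List[Segment] = field(default_factory=list)  # awaiting summarization

    def add(self, seg: Segment) -> None:
        self.recent.append(seg)
        # Move anything older than the window out of the verbatim context.
        while self.recent and seg.t - self.recent[0].t > self.window_s:
            self.evicted.append(self.recent.popleft())

    def add_summary(self, summary: str) -> None:
        # Call after summarizing `evicted`; the raw segments are then dropped.
        self.summaries.append(summary)
        self.evicted.clear()

    def prompt_context(self) -> str:
        lines = [f"[summary] {s}" for s in self.summaries]
        lines += [f"{s.speaker}: {s.text}" for s in self.recent]
        return "\n".join(lines)
```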

The bot.stopped webhook event is your signal that the session has ended. Flush session state to persistent storage (or archive it), trigger any post-call processing that's waiting on the full transcript, and clean up in-memory resources. This cleanup step is important for multi-tenant systems where session state from one meeting should never be accessible in another.
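Below is a sketch of that cleanup handler. It assumes an in-process session store keyed by bot ID and a bot_id field on the event; the field name is an assumption, while bot.stopped is the event named above. archive and start_post_call are placeholders for your storage and post-call pipeline.

```python
from typing import Callable, Dict

# In-process session store keyed by bot ID, one entry per live meeting.
SESSIONS: Dict[str, dict] = {}


def on_bot_stopped(event: dict,
                   archive: Callable[[str, dict], None],
                   start_post_call: Callable[[str], None]) -> None:
    """Handle bot.stopped: persist session state, trigger post-call work, free memory."""
    if event.get("event") != "bot.stopped":
        return
    bot_id = event["bot_id"]  # assumed field name
    state = SESSIONS.get(bot_id)
    if state is not None:
        archive(bot_id, state)  # persist first so a failure leaves state for a retry
        del SESSIONS[bot_id]    # then drop it so no other meeting can read it
    start_post_call(bot_id)
```

Archiving before deleting means an archive failure leaves the state in place for a retry. Deleting afterwards keeps one meeting's state from ever being readable by another.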

Frequently Asked Questions

Can a single bot deployment do both passive transcription and active agent behavior?

Yes. When you include an agent_config_id in the bot creation request, the bot operates as an active agent. It records and transcribes (the passive function) while also processing live audio and taking actions (the active function). The transcription.processed webhook fires after the meeting ends regardless of whether an agent was active. Many products start with passive transcription and add an agent config when they're ready for real-time features; the transition doesn't require separate bot deployments.

What's the practical difference between Realtime and Pipeline mode in MIA?

Realtime mode is designed for low-latency interactive responses where the agent needs to respond within the natural conversational rhythm. Pipeline mode processes audio in chunks and allows for more complex reasoning and external tool calls but with higher latency. Realtime mode suits voice agents answering questions where a 1-2 second response time is required. Pipeline mode suits action agents that need to look up external data, run multi-step workflows, or coordinate with other systems where 3-10 second latency is acceptable. Choose based on your latency requirement, not on the complexity of your processing logic alone.

How do active meeting agents handle speaker identification errors?

Active agents receive speaker labels from the live transcription stream, which may be less accurate than post-call diarization. Voice agents typically respond to any speaker without requiring precise identification. For Action agents, taking an action based on who said something (e.g., "log this for the sales rep, not the prospect") requires reliable speaker role identification. That works better when you pre-associate speaker identities with meeting attendee metadata than when you rely on real-time diarization alone. Design your agent logic to tolerate occasional speaker label errors rather than break when they occur.
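The sketch below illustrates the pre-association approach. It builds a lookup from attendee metadata before the meeting, matches live speaker labels against it, and skips role-dependent actions when there's no confident match. The attendee dict shape (display name to role) and the function names are assumptions.

```python
from typing import Dict, List, Optional


def resolve_role(speaker_label: str, attendees: Dict[str, str]) -> Optional[str]:
    """Map a live speaker label to a role using attendee metadata loaded before the call.

    attendees maps display name -> role (e.g. "rep", "prospect"); returns None
    when the label doesn't match any attendee (e.g. a generic "Speaker 3").
    """
    index = {name.strip().casefold(): role for name, role in attendees.items()}
    return index.get(speaker_label.strip().casefold())


def log_for_rep(speaker_label: str, note: str, attendees: Dict[str, str],
                rep_log: List[str]) -> bool:
    """Record a note only if the speaker is confidently the sales rep."""
    if resolve_role(speaker_label, attendees) != "rep":
        # Unknown or different role: skip rather than guess; leave it for post-call review.
        return False
    rep_log.append(note)
    return True
```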

What makes an active meeting agent different from a meeting notetaker with triggers?

This is the core of the meeting bot vs notetaker distinction. A notetaker with triggers still operates post-call: it watches the transcript for trigger phrases and runs actions after the meeting. An active agent operates during the meeting, responds in the meeting's audio or chat channel, and can affect the course of the meeting. Response latency is the clearest differentiator: a triggered notetaker might take 30 seconds or more to act on something said in a meeting, while a voice agent responds in under 2 seconds. For interactive use cases (answering questions, providing information on demand), that timing difference determines whether the feature is useful at all.

What infrastructure do you need to run a production-grade active meeting agent?

At minimum: a real-time audio processing pipeline with sub-500ms transcription latency, a fast LLM inference endpoint (self-hosted or a low-latency hosted model), a TTS service if using voice output, a session state store (Redis), MCP tool implementations for any external integrations, and a monitoring system that tracks per-session latency at each pipeline stage. The monitoring is non-optional: latency degradations in active agent pipelines produce bad user experiences that are hard to debug after the fact. Instrument every stage of the pipeline and alert on latency percentile degradation, not just error rates.
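Here is a sketch of per-stage latency tracking using only the standard library. StageLatency and the stage names are hypothetical. statistics.quantiles with n=20 yields 19 cut points, the last of which is the 95th percentile, and the inclusive method keeps estimates within the observed range for small samples.

```python
import statistics
from collections import defaultdict
from typing import Dict, List


class StageLatency:
    """Collects per-stage latency samples and flags p95 regressions."""

    def __init__(self) -> None:
        self.samples: Dict[str, List[float]] = defaultdict(list)

    def record(self, stage: str, ms: float) -> None:
        self.samples[stage].append(ms)

    def p95(self, stage: str) -> float:
        data = self.samples[stage]
        if len(data) < 2:
            return float(data[0]) if data else 0.0
        # n=20 gives 19 cut points; the last one is the 95th percentile.
        return statistics.quantiles(data, n=20, method="inclusive")[-1]

    def alerts(self, p95_limits_ms: Dict[str, float]) -> List[str]:
        """Return stages whose p95 exceeds its limit (alert on tails, not just errors)."""
        return [stage for stage, limit in p95_limits_ms.items() if self.p95(stage) > limit]
```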

If you're evaluating the right architecture for your meeting product, the MeetStream documentation covers both the passive transcription pipeline and the MIA configuration for active agents. Both are available through the same API: the agent_config_id parameter is what switches you from passive to active. Start with MeetStream to test both modes against your use case before committing to the full architecture.