Real-Time Audio Processing in Python for Meeting Bots

When you build a meeting bot that transcribes in real time, you are not dealing with audio files. You are dealing with a continuous stream of raw PCM frames arriving over a WebSocket, each tagged with a speaker identifier and each covering only 10-20ms of audio. The latency budget from frame arrival to transcript output is typically under two seconds. Every unnecessary copy, every synchronous I/O call, every non-vectorized loop eats into that budget.

Audio processing in Python for real-time workloads is a discipline separate from batch audio processing. The tools are the same (NumPy, SciPy, PyAudio) but the constraints are different. You need to parse binary frame headers with the struct module, not a media library. You need to buffer incomplete frames across WebSocket messages. You need to resample from 48kHz to 16kHz without calling a subprocess. You need to feed Whisper with minimal overhead.

MeetStream's live audio WebSocket delivers binary frames with a specific layout: a one-byte message type, a two-byte little-endian speaker ID length, the speaker ID string, a two-byte little-endian speaker name length, the speaker name string, and then raw PCM int16 little-endian 48kHz mono audio. This is the format you will parse, process, and feed to a speech recognition model in this guide.

We will build a complete asyncio WebSocket server that receives this stream, parses frames with struct, runs NumPy operations on the audio data, resamples from 48kHz to 16kHz using scipy.signal, and feeds chunks to Whisper. Latency measurement is included throughout. Let's get into it.

Parsing the MeetStream PCM Frame Format with struct

The Python struct module unpacks binary data according to a format string. The MeetStream frame format requires sequential parsing because the speaker ID and speaker name are variable-length strings. You cannot unpack the whole frame in one call.

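    import struct
    from dataclasses import dataclass
    from typing import Optional

    import numpy as np

    @dataclass
    class AudioFrame:
        msg_type: int
        speaker_id: str
        speaker_name: str
        pcm_data: np.ndarray  # int16, 48kHz, mono

    def parse_audio_frame(raw: bytes) -> Optional[AudioFrame]:
        # Minimum frame: 1-byte type plus two 2-byte length fields
        if len(raw) < 5:
            return None
        offset = 0
        # 1 byte: message type
        msg_type = struct.unpack_from("B", raw, offset)[0]
        offset += 1
        # 2 bytes LE: speaker_id length
        sid_len = struct.unpack_from("<H", raw, offset)[0]
        offset += 2
        # The remaining fields follow the frame layout described above
        if len(raw) < offset + sid_len + 2:
            return None
        speaker_id = raw[offset:offset + sid_len].decode("utf-8")
        offset += sid_len
        # 2 bytes LE: speaker_name length
        name_len = struct.unpack_from("<H", raw, offset)[0]
        offset += 2
        if len(raw) < offset + name_len:
            return None
        speaker_name = raw[offset:offset + name_len].decode("utf-8")
        offset += name_len
        # Remainder: raw PCM int16; ignore a trailing odd byte if present
        n_samples = (len(raw) - offset) // 2
        pcm_data = np.frombuffer(raw, dtype=np.int16, count=n_samples, offset=offset)
        return AudioFrame(msg_type, speaker_id, speaker_name, pcm_data)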

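The np.frombuffer call with dtype=np.int16 returns a read-only, zero-copy view into the bytes object. np.int16 uses the machine's native byte order, which matches the little-endian wire format on little-endian hardware (virtually all x86 and ARM deployments); pass dtype="<i2" if you want the byte order to be explicit. Avoiding the copy matters for throughput: at 48kHz, each 20ms frame contains 960 samples (1,920 bytes), and copying that data on every frame adds up.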
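
For testing the parser without a live meeting, it can help to build synthetic frames that follow the layout described above. The sketch below is a test helper under stated assumptions, not part of MeetStream's API: build_frame is a hypothetical name, and the message type value 1 is an arbitrary placeholder. It also shows that np.frombuffer gives a read-only view rather than a copy.

```python
import struct

import numpy as np


def build_frame(msg_type: int, speaker_id: str, speaker_name: str,
                pcm: np.ndarray) -> bytes:
    """Pack a synthetic frame in the layout described above (hypothetical test helper)."""
    sid = speaker_id.encode("utf-8")
    name = speaker_name.encode("utf-8")
    header = struct.pack("<BH", msg_type, len(sid)) + sid
    header += struct.pack("<H", len(name)) + name
    return header + pcm.astype("<i2").tobytes()


# 20 ms of a 440 Hz tone at 48 kHz: 960 samples
t = np.arange(960) / 48000.0
tone = (0.5 * 32767 * np.sin(2 * np.pi * 440 * t)).astype(np.int16)
raw = build_frame(1, "spk-1", "Ada", tone)  # msg_type=1 is an assumed value

# Header is 1 + 2 + len(id) + 2 + len(name) bytes; the rest is PCM
pcm_offset = 1 + 2 + len("spk-1") + 2 + len("Ada")
view = np.frombuffer(raw, dtype="<i2", offset=pcm_offset)

print(len(raw) - pcm_offset)       # PCM payload size in bytes: 1920
print(view.flags.writeable)        # False: bytes is immutable, so the view is read-only
print(np.array_equal(view, tone))  # True
```

Because the view is read-only, any in-place operation on the samples needs an explicit copy first; the normalization step later in this guide creates a new float32 array anyway, so no extra copy is required there.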

NumPy Operations on PCM Audio Data

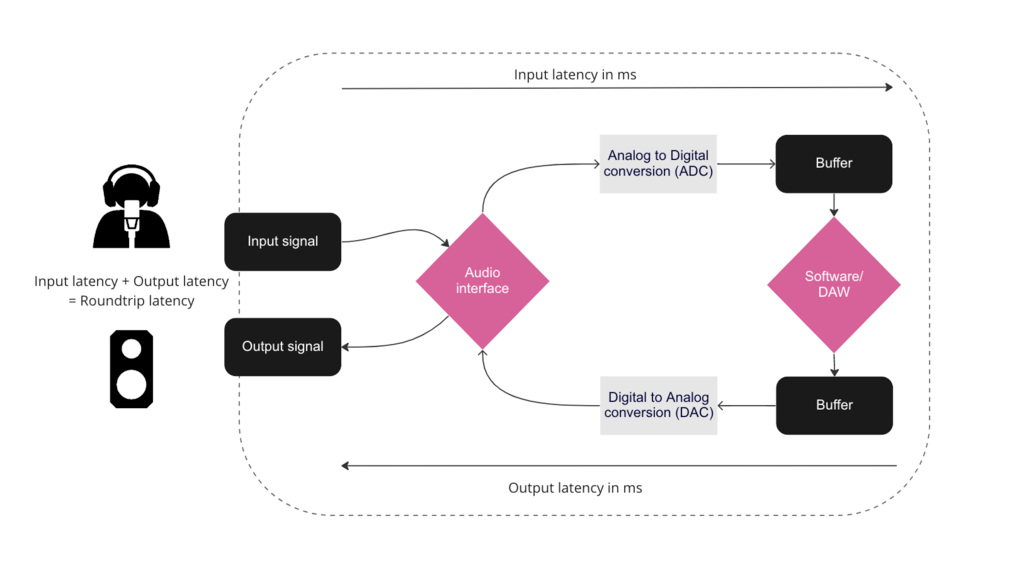

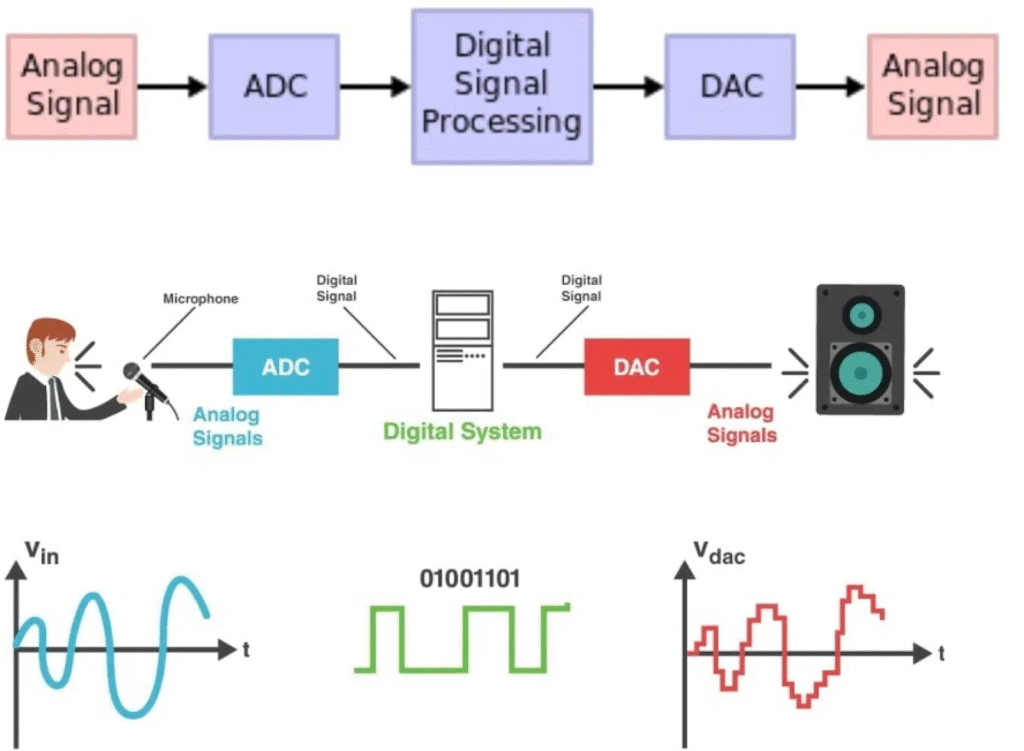

Raw int16 PCM data has a value range of -32768 to 32767. Whisper and most speech recognition models expect float32 normalized to -1.0 to 1.0. Normalize with a vectorized NumPy operation.

    def normalize_pcm(pcm_int16: np.ndarray) -> np.ndarray:
        # Convert to float32 and normalize to [-1.0, 1.0]
        return pcm_int16.astype(np.float32) / 32768.0

    def compute_rms(pcm_float32: np.ndarray) -> float:
        # Root mean square: measure of signal energy
        # Useful for voice activity detection
        return float(np.sqrt(np.mean(pcm_float32 ** 2)))

    VAD_THRESHOLD = 0.01  # Below this RMS, frame is likely silence

    def is_speech(pcm_float32: np.ndarray) -> bool:
        return compute_rms(pcm_float32) > VAD_THRESHOLD

The RMS-based voice activity detection (VAD) check is a fast first filter. Frames with RMS below the threshold are treated as silence or background noise and dropped before resampling and recognition. In typical meeting audio, where there are regular pauses between speakers, this can cut your inference load by 40-60%. Treat the 0.01 threshold as a starting point and tune it against your own recordings.
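
A per-frame energy threshold tends to clip quiet word endings, because the last 20ms of a syllable can fall below the threshold. One common mitigation is a hangover: keep passing frames for a short time after the energy drops. The sketch below is one possible way to add that. HangoverVad and hangover_frames are hypothetical names, and the default of 10 frames (about 200ms at 20ms per frame) is an assumption to tune, not a measured recommendation.

```python
import numpy as np


class HangoverVad:
    """Energy VAD that stays 'on' for a few frames after speech energy drops."""

    def __init__(self, threshold: float = 0.01, hangover_frames: int = 10):
        self.threshold = threshold
        self.hangover_frames = hangover_frames
        self._remaining = 0  # quiet frames still allowed through

    def is_speech(self, pcm_float32: np.ndarray) -> bool:
        if pcm_float32.size == 0:
            return False
        # Accumulate in float64 to avoid precision loss on long frames
        rms = float(np.sqrt(np.mean(np.square(pcm_float32, dtype=np.float64))))
        if rms > self.threshold:
            self._remaining = self.hangover_frames
            return True
        if self._remaining > 0:
            self._remaining -= 1
            return True
        return False


vad = HangoverVad(threshold=0.01, hangover_frames=2)
loud = np.full(960, 0.1, dtype=np.float32)
quiet = np.zeros(960, dtype=np.float32)
decisions = [vad.is_speech(f) for f in (quiet, loud, quiet, quiet, quiet)]
print(decisions)  # [False, True, True, True, False]
```

The detector is stateful, so in a multi-speaker stream it would need one instance per speaker ID; sharing one instance would let one speaker's energy keep another speaker's silence alive.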

Resampling from 48kHz to 16kHz with SciPy

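Whisper requires 16kHz input. MeetStream delivers 48kHz audio, so you downsample by a factor of 3. SciPy offers two routes: scipy.signal.resample uses an FFT-based (Fourier) method, while scipy.signal.resample_poly uses a polyphase FIR filter. Both band-limit the signal so that content above the new 8kHz Nyquist limit does not alias into the speech band.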

    from scipy import signal

    SOURCE_RATE = 48000
    TARGET_RATE = 16000
    RESAMPLE_RATIO = TARGET_RATE / SOURCE_RATE  # 0.333...

    def resample_48k_to_16k(pcm_float32: np.ndarray) -> np.ndarray:
        # FFT-based resampling to an explicit output length
        target_length = int(len(pcm_float32) * RESAMPLE_RATIO)
        return signal.resample(pcm_float32, target_length)

    # Alternative: polyphase resampling is faster for integer ratios
    def resample_polyphase(pcm_float32: np.ndarray) -> np.ndarray:
        # resample_poly(x, up, down) computes the resampling as up/down ratio
        # For 48k -> 16k: down by 3, up by 1
        return signal.resample_poly(pcm_float32, up=1, down=3)

Use resample_poly for integer ratios. It is generally faster than resample here because it filters in the time domain with a short FIR filter instead of taking an FFT of every block, and it does not assume the signal is periodic, as the Fourier method does. The 48kHz to 16kHz conversion is exactly 1/3, making this the right choice for MeetStream audio.
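
One caveat when resampling frame by frame: each resample_poly call is independent and, by default, treats the signal as zero outside the frame, so the anti-aliasing filter produces a small transient at every 20ms boundary. One option is to resample after buffering. Another is a decimator that carries filter state across frames. The sketch below takes the second route with a hand-rolled windowed-sinc FIR filter written in NumPy only; it is not SciPy's implementation. StreamingDecimator, design_lowpass, the 63-tap length, and the 6.8kHz cutoff are illustrative assumptions rather than tuned values.

```python
from typing import Optional

import numpy as np


def design_lowpass(num_taps: int = 63, cutoff_hz: float = 6800.0,
                   fs: float = 48000.0) -> np.ndarray:
    """Windowed-sinc low-pass FIR with unity gain at DC (illustrative design)."""
    n = np.arange(num_taps) - (num_taps - 1) / 2.0
    fc = cutoff_hz / fs  # cutoff in cycles per sample
    h = 2.0 * fc * np.sinc(2.0 * fc * n) * np.hamming(num_taps)
    return (h / h.sum()).astype(np.float32)


class StreamingDecimator:
    """Low-pass filter, then keep every `factor`-th sample, with state across frames."""

    def __init__(self, factor: int = 3, taps: Optional[np.ndarray] = None):
        self.factor = factor
        self.taps = design_lowpass() if taps is None else taps
        # Tail of the previous input, so the filter sees a continuous signal
        self.history = np.zeros(len(self.taps) - 1, dtype=np.float32)
        self.phase = 0  # index of the first sample to keep in the next frame

    def process(self, frame: np.ndarray) -> np.ndarray:
        if frame.size == 0:
            return np.zeros(0, dtype=np.float32)
        x = np.concatenate([self.history, frame.astype(np.float32)])
        filtered = np.convolve(x, self.taps, mode="valid")  # one output per new sample
        out = filtered[self.phase::self.factor]
        # Keep the decimation grid aligned when len(frame) is not a multiple of factor
        self.phase = (self.phase - len(filtered)) % self.factor
        self.history = x[len(frame):]
        return out


rng = np.random.default_rng(0)
signal_48k = rng.standard_normal(4800).astype(np.float32)

whole = StreamingDecimator().process(signal_48k)
dec = StreamingDecimator()
chunked = np.concatenate(
    [dec.process(signal_48k[i:i + 960]) for i in range(0, 4800, 960)]
)

print(len(whole), len(chunked))  # 1600 1600
print(np.allclose(whole, chunked, atol=1e-5))
```

Processing the signal in 960-sample frames gives the same output as processing it in one call, which is the property a per-frame resample_poly call does not have. As with the VAD state, a real pipeline would keep one decimator per speaker.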

Buffering Frames for Whisper Inference

Whisper works best on audio chunks of 2-10 seconds. Individual PCM frames from MeetStream are typically 10-20ms. You need a buffer that accumulates frames and flushes to Whisper when it reaches a target duration, or when a speaker turn ends.
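
The buffer sizes involved are easy to work out by hand. The arithmetic below assumes 20ms frames and a 3-second target, which are the values used in this guide; the constant names are illustrative.

```python
SOURCE_RATE = 48_000   # Hz, as delivered
TARGET_RATE = 16_000   # Hz, as expected by Whisper
FRAME_MS = 20          # assumed frame duration
TARGET_SECONDS = 3.0   # assumed flush target

samples_per_frame_48k = SOURCE_RATE * FRAME_MS // 1000
samples_per_frame_16k = TARGET_RATE * FRAME_MS // 1000
target_samples = int(TARGET_SECONDS * TARGET_RATE)
frames_per_chunk = target_samples // samples_per_frame_16k
chunk_bytes_float32 = target_samples * 4  # float32 is 4 bytes per sample

print(samples_per_frame_48k)  # 960
print(samples_per_frame_16k)  # 320
print(target_samples)         # 48000
print(frames_per_chunk)       # 150
print(chunk_bytes_float32)    # 192000
```

Memory is not the constraint here (under 200 kB per speaker); latency is. A 3-second target means the first word of a chunk waits up to 3 seconds before inference even starts, so the target duration is a direct trade between transcript latency and the amount of context Whisper sees.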

    import time
    from collections import defaultdict
    from typing import Dict, List

    class SpeakerAudioBuffer:
        def __init__(self, target_seconds: float = 3.0, sample_rate: int = 16000):
            self.target_samples = int(target_seconds * sample_rate)
            self.buffers: Dict[str, List[np.ndarray]] = defaultdict(list)
            self.sample_counts: Dict[str, int] = defaultdict(int)
            self.last_activity: Dict[str, float] = defaultdict(float)

        def add_frame(self, speaker_id: str, pcm_16k: np.ndarray) -> Optional[np.ndarray]:
            self.buffers[speaker_id].append(pcm_16k)
            self.sample_counts[speaker_id] += len(pcm_16k)
            self.last_activity[speaker_id] = time.monotonic()
            if self.sample_counts[speaker_id] >= self.target_samples:
                return self.flush(speaker_id)
            return None

        def flush(self, speaker_id: str) -> Optional[np.ndarray]:
            if speaker_id not in self.buffers or not self.buffers[speaker_id]:
                return None
            combined = np.concatenate(self.buffers[speaker_id])
            self.buffers[speaker_id] = []
            self.sample_counts[speaker_id] = 0
            return combined

        def flush_stale(self, timeout_seconds: float = 1.5) -> Dict[str, np.ndarray]:
            now = time.monotonic()
            stale = {}
            for speaker_id, last in list(self.last_activity.items()):
                if now - last > timeout_seconds and self.sample_counts[speaker_id] > 0:
                    flushed = self.flush(speaker_id)
                    if flushed is not None:
                        stale[speaker_id] = flushed
            return stale

Feeding Buffered Audio to Whisper

The whisper Python package accepts a NumPy float32 array at 16kHz directly, without writing to disk. This is the fastest path to transcription from a live stream.

    import whisper

    model = whisper.load_model("base.en")  # or "small.en" for higher accuracy

    def transcribe_chunk(audio_float32: np.ndarray) -> str:
        # model.transcribe expects float32 at 16kHz
        result = model.transcribe(
            audio_float32,
            language="en",
            fp16=False,  # set True if CUDA available
            no_speech_threshold=0.4,
        )
        return result["text"].strip()
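
Before handing a buffer to the model, it can be worth checking that it really is what the model expects. Depending on the SciPy version and input dtype, a resampled array may not come back as float32, and filter overshoot can push samples slightly past the [-1.0, 1.0] range. The guard below is a sketch with a hypothetical name, prepare_for_whisper; it is not part of the whisper package.

```python
import numpy as np


def prepare_for_whisper(audio: np.ndarray) -> np.ndarray:
    """Return 1-D, contiguous float32 audio clipped to [-1.0, 1.0] (hypothetical helper)."""
    audio = np.asarray(audio)
    if audio.ndim != 1:
        raise ValueError(f"expected mono 1-D audio, got shape {audio.shape}")
    if np.issubdtype(audio.dtype, np.integer):
        # Integer PCM slipped through un-normalized: scale by the dtype's range
        scale = float(np.iinfo(audio.dtype).max) + 1.0
        audio = audio.astype(np.float32) / scale
    audio = np.clip(audio, -1.0, 1.0)
    return np.ascontiguousarray(audio, dtype=np.float32)


cleaned = prepare_for_whisper(np.array([0.5, -2.0, 1.5], dtype=np.float64))
print(cleaned.dtype, cleaned.tolist())  # float32 [0.5, -1.0, 1.0]
```

A call such as transcribe_chunk(prepare_for_whisper(chunk)) would then fail loudly on a stereo array instead of producing a garbage transcript.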

Complete asyncio WebSocket Server

The complete server receives the MeetStream WebSocket connection, parses frames, runs the audio processing pipeline, and emits transcripts. Latency measurements are embedded at each stage.

    import asyncio
    import time

    import websockets

    buffer = SpeakerAudioBuffer(target_seconds=3.0)

    async def transcribe_stale(timeout_seconds: float = 1.5) -> None:
        # Flush and transcribe buffers of speakers who have paused
        for speaker_id, audio in buffer.flush_stale(timeout_seconds).items():
            transcript = transcribe_chunk(audio)
            print(f"STALE [{speaker_id}] {transcript}")

    async def handle_audio_stream(websocket):
        print("Bot connected, receiving audio stream")
        async for message in websocket:
            t0 = time.monotonic()
            if not isinstance(message, bytes):
                continue

            # Parse frame
            frame = parse_audio_frame(message)
            if frame is None:
                continue
            t1 = time.monotonic()

            # Normalize to float32
            pcm_float = normalize_pcm(frame.pcm_data)

            # VAD check: skip silence, but still transcribe buffers that went stale
            # (discarding the flush_stale() result here would lose that audio)
            if not is_speech(pcm_float):
                await transcribe_stale()
                continue

            # Resample 48kHz -> 16kHz
            pcm_16k = resample_polyphase(pcm_float)
            t2 = time.monotonic()

            # Buffer and check if ready for inference
            ready_audio = buffer.add_frame(frame.speaker_id, pcm_16k)

            # Also check for stale buffers (pauses in speech)
            await transcribe_stale(timeout_seconds=1.5)

            if ready_audio is not None:
                t3 = time.monotonic()
                transcript = transcribe_chunk(ready_audio)
                t4 = time.monotonic()
                print(f"[{frame.speaker_name}] {transcript}")
                # "resample" covers normalization, VAD, and resampling (t1 to t2)
                print(f" parse={1000*(t1-t0):.1f}ms resample={1000*(t2-t1):.1f}ms whisper={1000*(t4-t3):.0f}ms")

    async def main():
        async with websockets.serve(handle_audio_stream, "0.0.0.0", 8765):
            print("WebSocket server listening on ws://0.0.0.0:8765")
            await asyncio.Future()  # run forever

    if __name__ == "__main__":
        asyncio.run(main())

Latency Profiling and Optimization

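A typical latency breakdown for this pipeline on a modern laptop with CPU inference looks like this: frame parsing under 1ms, normalization under 1ms, VAD check under 1ms, resampling 2-5ms, and Whisper base.en inference 300-600ms per 3-second chunk. The dominant cost is Whisper inference. GPU acceleration brings this to 30-80ms, which puts the processing time for a chunk under 100ms on GPU hardware. These figures exclude the time spent filling the buffer: with target_seconds=3.0, the first words of a chunk wait up to 3 seconds before inference starts, so lower the target if you need to stay inside a two-second budget.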

    # Optimization: run Whisper inference in a thread pool to avoid blocking the event loop
    import asyncio
    from concurrent.futures import ThreadPoolExecutor

    # One worker serializes calls on the shared model; concurrent calls on a
    # single model instance are not guaranteed to be thread-safe
    executor = ThreadPoolExecutor(max_workers=1)

    async def transcribe_async(audio: np.ndarray) -> str:
        loop = asyncio.get_running_loop()
        return await loop.run_in_executor(executor, transcribe_chunk, audio)

The thread pool approach keeps the asyncio event loop unblocked during Whisper inference. Without it, a 500ms Whisper call blocks frame parsing for all speakers, which causes frames to queue up and audio to drop in busy meetings. To use it, replace the transcribe_chunk(...) calls in the server with await transcribe_async(...).
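
The effect is easy to observe without Whisper installed. The sketch below replaces inference with time.sleep (fake_transcribe is a stand-in, on the assumption that real inference spends its time in native code that releases the GIL) and counts how often a heartbeat coroutine gets to run while the "model" is busy.

```python
import asyncio
import time
from concurrent.futures import ThreadPoolExecutor


def fake_transcribe(seconds: float) -> str:
    # Stand-in for a blocking model call
    time.sleep(seconds)
    return "done"


async def heartbeat(stop: asyncio.Event, interval: float = 0.01) -> int:
    # Counts how many times the event loop managed to schedule this coroutine
    ticks = 0
    while not stop.is_set():
        await asyncio.sleep(interval)
        ticks += 1
    return ticks


async def run(blocking: bool) -> int:
    stop = asyncio.Event()
    beat = asyncio.create_task(heartbeat(stop))
    await asyncio.sleep(0)  # let the heartbeat start
    if blocking:
        fake_transcribe(0.2)  # blocks the event loop for 200 ms
    else:
        loop = asyncio.get_running_loop()
        with ThreadPoolExecutor(max_workers=1) as pool:
            await loop.run_in_executor(pool, fake_transcribe, 0.2)
    stop.set()
    return await beat


ticks_blocking = asyncio.run(run(blocking=True))
ticks_executor = asyncio.run(run(blocking=False))
print(ticks_blocking, ticks_executor)
```

With the direct call the heartbeat barely runs; with run_in_executor it keeps ticking roughly every 10ms. In the server, the heartbeat corresponds to the WebSocket receive loop.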

Conclusion

Real-time audio processing in Python requires thinking at the byte level. The struct module for frame parsing, NumPy for vectorized operations, SciPy for resampling, and asyncio for non-blocking I/O compose into a pipeline that handles meeting audio at production throughput. MeetStream's WebSocket audio format gives you per-speaker PCM frames with a consistent binary structure that maps cleanly to this pipeline. If you want to test it with a live meeting, deploy your bot from app.meetstream.ai and point the WebSocket URL at your server.

What format does MeetStream use for live audio streaming?

MeetStream delivers audio as binary WebSocket frames containing a one-byte message type, two-byte little-endian speaker ID length, the speaker ID as UTF-8, two-byte little-endian speaker name length, the speaker name as UTF-8, and then raw PCM int16 little-endian audio at 48kHz mono. There are no container headers or codec wrappers. The audio data is raw samples that can be passed directly to np.frombuffer with dtype=np.int16.

How do I resample audio from 48kHz to 16kHz in Python?

Use scipy.signal.resample_poly(audio, up=1, down=3) for the 48kHz to 16kHz conversion. The polyphase resampler is faster than FFT-based resampling for integer ratios, and 48kHz to 16kHz is exactly a 1/3 ratio. Normalize the int16 audio to float32 before resampling by dividing by 32768.0. The output is float32 at 16kHz, which is what Whisper and most speech recognition APIs expect.

How do I use Whisper with a NumPy array instead of an audio file?

Call model.transcribe(audio_float32) directly with a NumPy float32 array at 16kHz. Whisper accepts in-memory arrays without writing to disk. Use whisper.load_model("base.en") for English-only transcription, which is smaller and faster than the larger multilingual models. Set fp16=True if you have a CUDA GPU available. The no_speech_threshold parameter (default 0.6; this guide uses 0.4 for meetings) sets the no-speech probability above which a segment can be treated as silence, so a lower value skips silent segments more aggressively.

What causes audio processing latency in real-time meeting bots?

The dominant latency source in a Python audio pipeline is speech recognition inference. Whisper base.en takes 300-600ms per 3-second chunk on CPU, and the main levers for reducing this are GPU acceleration, a smaller model, or an optimized inference runtime. Other processing costs are negligible by comparison: frame parsing is under 1ms, resampling is 2-5ms, and NumPy normalization is under 1ms. Buffering is the other large contributor, since audio waits in the buffer until the target duration is reached. Run Whisper inference in a thread pool via asyncio's run_in_executor to prevent inference time from blocking the WebSocket receive loop.

How do I implement voice activity detection in Python for meeting audio?

Root mean square (RMS) energy is the fastest VAD approach: compute np.sqrt(np.mean(pcm ** 2)) on each normalized float32 frame. A threshold around 0.01 filters out silence and background noise effectively for meeting audio in typical office or home environments. For more robust VAD, the silero-vad model runs on PyTorch and provides segment-level speech/no-speech classification with a very low false-positive rate. It adds ~5ms per frame on CPU, which is acceptable for most latency budgets.

Frequently Asked Questions

What Python library is best for real-time audio processing?

PyAudio provides direct access to system audio hardware, but for meeting bot pipelines, you are typically processing network audio rather than microphone input. Use asyncio with a WebSocket library (websockets on the server side, as in this guide, or an aiohttp WebSocket client), do the numeric work with NumPy and SciPy, and keep blocking calls off the event loop.

How do I minimize latency in a Python audio pipeline?

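Avoid running pure-Python audio loops in threading.Thread workers, because the GIL serializes them and introduces jitter. Use asyncio for I/O-bound steps. For CPU-bound work, a thread pool is sufficient when the heavy lifting happens in native code that releases the GIL, as NumPy and PyTorch do for large operations, which is why the thread pool shown earlier works for Whisper. Push pure-Python CPU-bound work into a ProcessPoolExecutor instead. Keep chunk sizes at 20-40ms to balance latency against the overhead of frequent function calls.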

What is Voice Activity Detection and why does it matter for latency?

VAD detects whether an audio chunk contains speech or silence. By skipping transcription for silent chunks, you avoid unnecessary inference work and, if you use a hosted speech API, unnecessary network round trips and API costs. Libraries like WebRTC VAD (the webrtcvad package, from the py-webrtcvad project) process a 10ms frame in under 1ms and are suitable for real-time use.

How do I benchmark audio processing latency in Python?

Use time.perf_counter() to measure the wall-clock time from chunk receipt to transcript delivery. Log percentile latencies (p50, p95, p99) rather than averages, since audio pipelines experience bursty latency spikes during speaker transitions. Aim for p95 under 400ms for a good user experience.
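
A percentile tracker for this takes only a few lines of standard-library Python. The sketch below uses the nearest-rank definition of a percentile over a sliding window; LatencyTracker and its window size are illustrative choices, not an established API.

```python
import math
import time
from collections import deque


class LatencyTracker:
    """Sliding-window latency percentiles using the nearest-rank method."""

    def __init__(self, window: int = 1000):
        self.samples_ms = deque(maxlen=window)  # oldest samples fall off automatically

    def record(self, start: float, end: float) -> None:
        self.samples_ms.append((end - start) * 1000.0)

    def percentile(self, p: float) -> float:
        if not self.samples_ms:
            raise ValueError("no samples recorded")
        ordered = sorted(self.samples_ms)
        rank = max(1, math.ceil(p * len(ordered) / 100.0))
        return ordered[rank - 1]


# Synthetic latencies of 1 ms .. 100 ms to show the percentile behaviour
tracker = LatencyTracker()
for ms in range(1, 101):
    tracker.record(0.0, ms / 1000.0)
print(round(tracker.percentile(50)), round(tracker.percentile(95)), round(tracker.percentile(99)))

# Measuring a real code path with perf_counter
live = LatencyTracker()
t0 = time.perf_counter()
sum(range(10_000))  # placeholder for "chunk received -> transcript delivered"
live.record(t0, time.perf_counter())
print(f"p50={live.percentile(50):.3f}ms")
```

In the server, t0 would be taken when the first frame of a chunk arrives and the end timestamp when its transcript is emitted, so that buffering time is included in the measurement.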