WebRTC vs RTMP for Meeting Bots: Protocol Comparison

When you build infrastructure that touches live meeting video, you will eventually have to make a decision about what happens to the stream after it leaves the meeting platform. That decision almost always comes down to two protocols: WebRTC or RTMP. They are not interchangeable, and the use cases that suit each one are more distinct than they might appear from the surface-level description of both being "video streaming protocols."

WebRTC (Web Real-Time Communication) and RTMP (Real-Time Messaging Protocol) were designed for different problems in different eras. WebRTC was open-sourced by Google in 2011, and later standardized by the W3C and IETF, to enable sub-second peer-to-peer communication directly in browsers without plugins. RTMP was developed by Macromedia in the early 2000s as the streaming backbone for Flash Player. One is browser-native and modern. The other is TCP-based and gradually being displaced, but still occupies a specific niche in broadcast infrastructure that nothing else has fully replaced.

For meeting bots specifically, understanding both protocols matters because meeting platforms use WebRTC internally, but there are legitimate reasons to re-stream meeting audio and video to RTMP destinations like YouTube Live, Twitch, Wowza, or a media server for CDN delivery. The decision point is not which protocol is better in the abstract. It is which protocol fits your specific use case.

In this guide, you will get a technical comparison of WebRTC vs RTMP covering transport, latency, signaling, browser compatibility, and use cases in the meeting bot context. Let's get into it.

How WebRTC Works: SDP, ICE, and UDP Transport

WebRTC is a peer-to-peer communication framework built into all modern browsers. It handles most of the lifecycle of a real-time media connection: session description, network traversal, codec negotiation, encryption, and media transport. Each of these is a separate subsystem. The one piece it deliberately leaves to you is the signaling channel that carries session descriptions between peers.

The signaling layer uses Session Description Protocol (SDP), which is an offer/answer exchange between two peers describing their supported codecs, transport addresses, and media directions. SDP itself is not a transport. It is exchanged over any channel you choose (WebSocket is common) before the media connection is established.
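To make the offer/answer idea concrete, here is a minimal sketch that pulls the codec list out of an SDP blob by reading its `a=rtpmap` lines. The sample SDP is hand-written and heavily trimmed for illustration, and `listCodecs` is a hypothetical helper name, not part of the WebRTC API.

```javascript
// Hand-written, trimmed SDP fragment (illustrative only; real offers are much longer).
const sampleSdp = [
  'v=0',
  'm=audio 9 UDP/TLS/RTP/SAVPF 111',
  'a=rtpmap:111 opus/48000/2',
  'm=video 9 UDP/TLS/RTP/SAVPF 96 102',
  'a=rtpmap:96 VP8/90000',
  'a=rtpmap:102 H264/90000',
].join('\r\n');

// Hypothetical helper: returns [{ kind, payloadType, codec, clockRate }].
function listCodecs(sdp) {
  const codecs = [];
  let kind = null; // media kind of the most recent m= line
  for (const line of sdp.split(/\r?\n/)) {
    if (line.startsWith('m=')) {
      kind = line.slice(2).split(' ')[0];
    } else if (line.startsWith('a=rtpmap:')) {
      const [pt, desc] = line.slice('a=rtpmap:'.length).split(' ');
      const [codec, clockRate] = desc.split('/');
      codecs.push({ kind, payloadType: Number(pt), codec, clockRate: Number(clockRate) });
    }
  }
  return codecs;
}

console.log(listCodecs(sampleSdp));
```

Each peer advertises a list like this in its offer or answer, and the connection uses codecs that appear on both sides.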

Network traversal uses Interactive Connectivity Establishment (ICE), which collects candidate IP addresses from the local network, STUN servers (for NAT traversal), and TURN relay servers (for symmetric NAT). ICE negotiates the best path between peers. This process typically takes 100-500ms on first connection.
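One input to that path selection is the priority each candidate carries. The sketch below applies the priority formula and the recommended type preferences from RFC 8445 (section 5.1.2); the function name and the default local preference of 65535 are choices made for this example.

```javascript
// Recommended type preferences from RFC 8445.
const TYPE_PREFERENCE = { host: 126, prflx: 110, srflx: 100, relay: 0 };

// priority = 2^24 * typePreference + 2^8 * localPreference + (256 - componentId)
function candidatePriority(type, localPreference = 65535, componentId = 1) {
  return TYPE_PREFERENCE[type] * 2 ** 24 + localPreference * 2 ** 8 + (256 - componentId);
}

for (const type of ['host', 'srflx', 'relay']) {
  console.log(type, candidatePriority(type));
}
```

Host candidates score highest and relayed (TURN) candidates lowest, which is why a direct path is tried first and a relay is used only when nothing better connects.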

Media transport uses Secure Real-Time Transport Protocol (SRTP) over UDP. UDP itself never retransmits lost packets. For live media, this is intentional: a video frame retransmitted 200ms late is often useless. WebRTC instead relies on Forward Error Correction (FEC), selective retransmission requests (NACK) where there is still time for them, and adaptive bitrate to maintain quality under packet loss. The result is sub-second end-to-end latency in typical conditions.
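The idea behind FEC can be shown with a simple XOR parity packet. This is a deliberately simplified sketch: the FEC schemes actually used with WebRTC are more elaborate, and the packet contents and function name here are made up for illustration.

```javascript
// XOR all packets in a group together (equal-length packets assumed).
function xorPackets(packets) {
  const out = new Uint8Array(packets[0].length);
  for (const p of packets) {
    for (let i = 0; i < out.length; i++) out[i] ^= p[i];
  }
  return out;
}

const group = [
  Uint8Array.from([1, 2, 3, 4]),
  Uint8Array.from([5, 6, 7, 8]),
  Uint8Array.from([9, 10, 11, 12]),
];

// The sender transmits the three media packets plus this one parity packet.
const parity = xorPackets(group);

// Simulate losing group[1]: XOR of the survivors and the parity rebuilds it.
const recovered = xorPackets([group[0], group[2], parity]);
console.log(Array.from(recovered)); // same bytes as group[1]
```

The receiver repairs a single loss per group without waiting a round trip for a retransmission, at the cost of the extra parity bandwidth.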

// Simplified WebRTC offer/answer example (browser)
// Wrapped in an async function so that `await` is valid outside an ES module.
async function createOffer() {
  const pc = new RTCPeerConnection({
    iceServers: [{ urls: 'stun:stun.l.google.com:19302' }]
  });

  // Add local media
  const stream = await navigator.mediaDevices.getUserMedia({ audio: true, video: true });
  stream.getTracks().forEach(track => pc.addTrack(track, stream));

  // Create offer (SDP)
  const offer = await pc.createOffer();
  await pc.setLocalDescription(offer);

  // Exchange offer via your signaling channel, receive answer, set remote description
  // ICE candidates are gathered and exchanged in parallel
  return { pc, offer };
}

How Meeting Platforms Use WebRTC Internally

Google Meet, Microsoft Teams, and Zoom's browser client all rely on WebRTC as the underlying transport for participant audio and video (Zoom's native apps use a proprietary media stack). The browser client uses standard WebRTC APIs to capture, encode, and transmit media. The meeting platform's media servers are Selective Forwarding Units (SFUs): they receive each participant's stream and selectively forward it to other participants without mixing or transcoding.
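The forwarding behavior of an SFU can be sketched in a few lines. This toy model ignores everything a real SFU does around bandwidth estimation, simulcast layers, and encryption; the class and participant names are invented for the example.

```javascript
// Toy SFU: forwards each incoming packet, untouched, to every other participant.
class ToySfu {
  constructor() {
    this.participants = new Map(); // id -> callback receiving (fromId, packet)
  }

  join(id, onPacket) {
    this.participants.set(id, onPacket);
  }

  publish(fromId, packet) {
    for (const [id, onPacket] of this.participants) {
      if (id !== fromId) onPacket(fromId, packet); // no decode, no mix, no re-encode
    }
  }
}

const sfu = new ToySfu();
const received = { alice: [], bob: [], bot: [] };
for (const id of Object.keys(received)) {
  sfu.join(id, (from, packet) => received[id].push(`${from}:${packet}`));
}

sfu.publish('alice', 'frame-1');
console.log(received);
```

Because nothing is decoded or mixed, every receiver, including a bot, gets each sender's stream separately and in its original encoding.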

When a meeting bot joins a meeting, it participates in the WebRTC session as a standard peer. The bot receives the same encoded media streams that any browser participant receives. MeetStream handles this WebRTC participation internally and exposes the resulting audio and video through its own API (WebSocket audio frames, webhook transcriptions, and downloadable video files) rather than exposing the raw WebRTC connection to developers.

This is the right architecture for a bot API: WebRTC session management is complex and platform-specific. Exposing a stable, consistent API on top of it is more useful than exposing the raw WebRTC stack.

How RTMP Works: TCP, H.264, and Broadcast Architecture

RTMP is a TCP-based streaming protocol that chunks audio and video into small packets for transmission over a persistent connection to a media server. Originally designed for Flash Player, it survived the death of Flash because it became the de facto ingest protocol for live streaming platforms: YouTube Live, Twitch, Facebook Live, and Wowza all accept RTMP ingest, and encoders such as OBS output it by default.
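Before any chunks flow, that persistent connection opens with a fixed handshake. As a sketch of what the first client bytes look like under the published RTMP 1.0 specification, the code below builds C0 (one version byte) followed by C1 (1536 bytes: a 4-byte timestamp, 4 zero bytes, and random filler). The function name is hypothetical, and a real client would go on to read S0/S1/S2 and send C2.

```javascript
const RTMP_VERSION = 3;
const C1_SIZE = 1536;

// Builds the first bytes a client sends: C0 (1 byte) followed by C1 (1536 bytes).
function buildC0C1(timestampMs = 0) {
  const bytes = new Uint8Array(1 + C1_SIZE);
  const view = new DataView(bytes.buffer);
  view.setUint8(0, RTMP_VERSION);           // C0: protocol version
  view.setUint32(1, timestampMs >>> 0);     // C1 bytes 0-3: timestamp (big-endian)
  view.setUint32(5, 0);                     // C1 bytes 4-7: zero
  for (let i = 9; i < bytes.length; i++) {  // C1 bytes 8-1535: random filler
    bytes[i] = Math.floor(Math.random() * 256);
  }
  return bytes;
}

const hello = buildC0C1();
console.log(hello.length, hello[0]);
```

Only after the handshake completes does the client issue the connect command and start sending chunked audio and video messages.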

RTMP uses H.264 for video and AAC for audio almost universally. The stream flows from encoder to media server, which then transcodes or repackages it for delivery to viewers via HLS or MPEG-DASH. This repackaging step is where most of the latency comes from: a typical RTMP-to-HLS pipeline has 10-30 seconds of end-to-end latency, because the media is cut into multi-second HLS segments and players buffer several of them before starting playback.
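The segment arithmetic behind that latency figure can be sketched as follows. Every input here is an illustrative assumption (segment length, a player buffering three segments, and the ingest and packaging delays), not a measurement of any particular platform.

```javascript
// Rough glass-to-glass estimate for an RTMP-ingest, HLS-delivery pipeline.
// All inputs are illustrative assumptions, not measurements.
function estimateHlsLatencySeconds({ segmentSeconds, bufferedSegments, ingestSeconds, packagingSeconds }) {
  return segmentSeconds * bufferedSegments + ingestSeconds + packagingSeconds;
}

const sixSecondSegments = estimateHlsLatencySeconds({
  segmentSeconds: 6, bufferedSegments: 3, ingestSeconds: 2, packagingSeconds: 1,
});
const twoSecondSegments = estimateHlsLatencySeconds({
  segmentSeconds: 2, bufferedSegments: 3, ingestSeconds: 2, packagingSeconds: 1,
});

console.log(sixSecondSegments, twoSecondSegments); // 21 9
```

The segment term dominates, which is why shortening segments is the usual first lever for reducing HLS latency.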

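# Sending a stream to RTMP using FFmpeg (from a meeting bot)
# Raw video and raw audio cannot share one stdin, so this assumes the bot writes
# them to two named pipes. The pipe paths are placeholders (e.g. created with mkfifo).
ffmpeg \
  -f rawvideo -pix_fmt yuv420p -s 1280x720 -r 30 -i /tmp/meeting_video.pipe \
  -f s16le -ar 48000 -ac 1 -i /tmp/meeting_audio.pipe \
  -vcodec libx264 -preset veryfast -tune zerolatency \
  -acodec aac -ar 44100 \
  -f flv rtmp://a.rtmp.youtube.com/live2/YOUR_STREAM_KEY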

The -tune zerolatency H.264 parameter reduces encoding latency at the cost of slightly lower compression efficiency. For live streaming, this tradeoff is almost always worth it.
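If the bot process launches FFmpeg itself, the same flags can be assembled programmatically. The sketch below only builds the argument list; `buildFfmpegArgs` is a hypothetical helper, and the input paths and stream URL are placeholders for wherever your raw media arrives and wherever you publish.

```javascript
// Hypothetical helper: builds the argument list for the FFmpeg command above.
function buildFfmpegArgs({ videoInput, audioInput, width, height, fps, rtmpUrl }) {
  return [
    // Raw video input
    '-f', 'rawvideo', '-pix_fmt', 'yuv420p', '-s', `${width}x${height}`, '-r', String(fps), '-i', videoInput,
    // Raw 16-bit PCM audio input
    '-f', 's16le', '-ar', '48000', '-ac', '1', '-i', audioInput,
    // H.264 + AAC in an FLV container, as RTMP ingest expects
    '-vcodec', 'libx264', '-preset', 'veryfast', '-tune', 'zerolatency',
    '-acodec', 'aac', '-ar', '44100',
    '-f', 'flv', rtmpUrl,
  ];
}

const ffmpegArgs = buildFfmpegArgs({
  videoInput: '/tmp/meeting_video.pipe', // placeholder path
  audioInput: '/tmp/meeting_audio.pipe', // placeholder path
  width: 1280,
  height: 720,
  fps: 30,
  rtmpUrl: 'rtmp://a.rtmp.youtube.com/live2/YOUR_STREAM_KEY',
});

console.log(['ffmpeg', ...ffmpegArgs].join(' '));
// To launch it from Node.js: require('node:child_process').spawn('ffmpeg', ffmpegArgs)
```

Passing the arguments as an array rather than a shell string avoids quoting problems if the stream key contains special characters.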

WebRTC vs RTMP: Side-by-Side Comparison

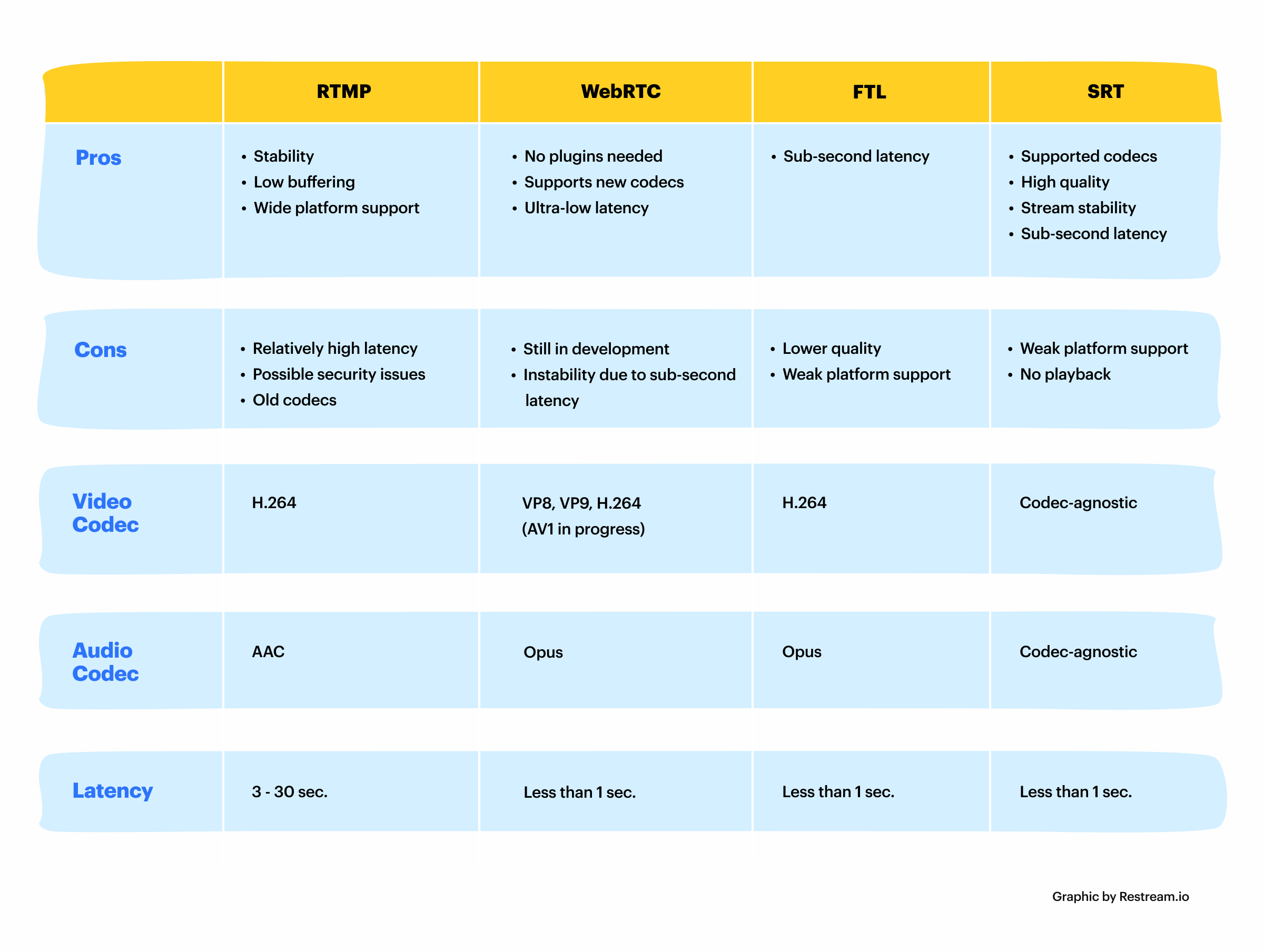

| Dimension | WebRTC | RTMP |
|---|---|---|
| Transport | UDP (SRTP/DTLS) | TCP |
| End-to-end latency | Under 500ms typical | 10-30 seconds (via HLS) |
| Low-latency RTMP | N/A | 2-5 seconds (RTMP direct) |
| Signaling | SDP offer/answer + ICE | Handshake + connect command |
| Browser native | Yes, all modern browsers | No (Flash dead, no browser support) |
| Codec flexibility | VP8, VP9, H.264, AV1, Opus | H.264 + AAC (de facto standard) |
| NAT traversal | Built-in via ICE/STUN/TURN | Requires firewall/port config |
| P2P capability | Yes (native) | No (server-mediated) |
| CDN delivery | Complex (WHIP/WHEP emerging) | Well-supported via HLS repackaging |
| Use in meeting platforms | Universal (Meet, Zoom, Teams) | Not used internally |
| Use for broadcast | Possible but non-standard | Standard ingest for YouTube/Twitch |

When to Use Each Protocol in a Meeting Bot Context

The use case determines the protocol. Most meeting bot use cases involve processing the meeting data after capture, not re-streaming it. For those cases, neither WebRTC nor RTMP is the right answer: what you want is the raw audio and transcript data from the meeting API, which is exactly what MeetStream provides.

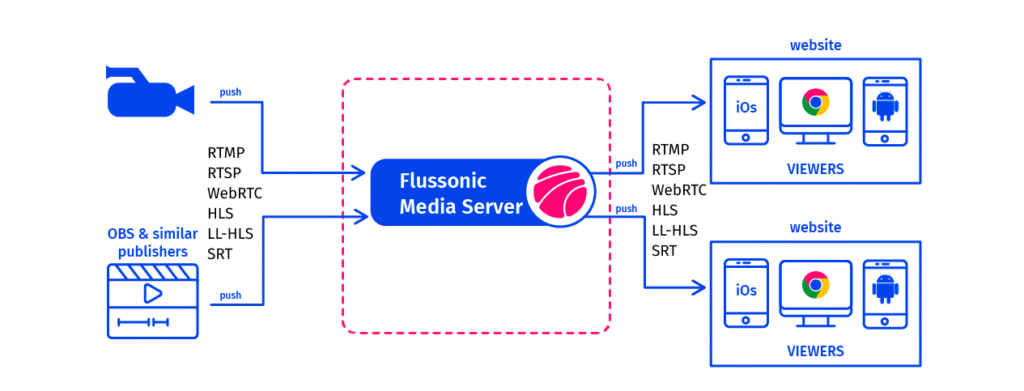

The RTMP use case for meeting bots is narrow but real: re-streaming a meeting to a broadcast destination. A company that wants to live-stream an all-hands meeting to YouTube Live, or record a product demo for a CDN-delivered replay, needs RTMP ingest. The workflow is: meeting bot captures WebRTC media internally, MeetStream delivers video or audio via its API, your application re-encodes and pushes to RTMP.

The WebRTC use case for custom development arises when you need real-time bidirectional communication: a voice agent that joins a meeting and speaks back, or a real-time audio analysis system with under 500ms latency. Meeting platforms expose this via WebRTC, and bots participate in it. MeetStream abstracts this complexity away for most use cases via the live audio WebSocket.

RTMP's Declining but Persistent Role

RTMP as a viewer delivery mechanism is effectively dead. Flash Player is gone. Browsers cannot play RTMP natively. Every modern streaming platform converts RTMP ingest to HLS or MPEG-DASH before delivering to viewers. RTMP is now exclusively an ingest protocol: the first mile between an encoder and a media server.

The industry is gradually adopting WHIP (WebRTC HTTP Ingest Protocol) as an RTMP replacement. Cloudflare Stream, Dolby.io, and a handful of other platforms already support WHIP ingest. WHIP offers sub-second ingest latency compared to RTMP's typical 2-5 seconds, and it uses browser-native WebRTC rather than a legacy TCP protocol. But RTMP still has years of operational life left in it, because OBS, FFmpeg, and the majority of streaming hardware support it out of the box, while WHIP support is newer and far less widely deployed.
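At the HTTP level, WHIP ingest starts with a single request: the client POSTs its SDP offer and the server replies with an SDP answer. The sketch below only builds that request; the endpoint URL and bearer token are placeholders, and `buildWhipRequest` is a hypothetical helper name.

```javascript
// Builds the HTTP request a WHIP client sends: a POST whose body is the SDP offer.
// The endpoint URL and bearer token are placeholders; each provider issues its own.
function buildWhipRequest(endpointUrl, sdpOffer, bearerToken) {
  const headers = { 'Content-Type': 'application/sdp' };
  if (bearerToken) headers.Authorization = `Bearer ${bearerToken}`;
  return { url: endpointUrl, init: { method: 'POST', headers, body: sdpOffer } };
}

const whipRequest = buildWhipRequest('https://whip.example.com/ingest', 'v=0\r\n', 'TOKEN');
console.log(whipRequest.init.method, whipRequest.url, whipRequest.init.headers);

// In a browser or Node 18+, `await fetch(whipRequest.url, whipRequest.init)` would
// send it. A conforming server answers 201 Created with the SDP answer in the body
// and a Location header identifying the session.
```

Compared with RTMP's custom handshake and command messages, the whole session setup is one HTTP round trip plus the usual ICE connectivity checks.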

Practical Guidance for Meeting Bot Developers

If you are building a meeting bot application and your goal is to process audio, generate transcripts, extract action items, or analyze meetings, you do not need to choose between WebRTC and RTMP. MeetStream handles the WebRTC participation internally and delivers audio via WebSocket and transcripts via webhook. Start with the MeetStream API.

If your goal is to re-stream meetings to a broadcast platform, the practical stack is: MeetStream bot captures the meeting, your server receives audio/video via MeetStream's delivery mechanisms, FFmpeg re-encodes and pushes to RTMP ingest. The RTMP layer is entirely on your side of the stack.

If you are building real-time bidirectional voice agents that need to respond within a meeting, the latency constraints call for a WebRTC-class path rather than RTMP. MeetStream's live audio WebSocket provides the lowest-latency audio access point for this without requiring you to manage the WebRTC session yourself.
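When budgeting latency for a voice agent, it helps to know how much audio each received buffer represents. The sketch below assumes uncompressed 16-bit PCM at 48 kHz mono, matching the input flags in the FFmpeg example earlier; that format is an assumption for illustration, so check the actual format your audio source delivers.

```javascript
// Bytes in one frame of uncompressed PCM audio.
function pcmFrameBytes({ sampleRate, channels, bytesPerSample, frameMs }) {
  return (sampleRate * frameMs / 1000) * channels * bytesPerSample;
}

// Milliseconds of audio represented by a buffer of the given size.
function pcmDurationMs(byteLength, { sampleRate, channels, bytesPerSample }) {
  return (byteLength / (sampleRate * channels * bytesPerSample)) * 1000;
}

// Assumed format: 48 kHz, mono, 16-bit samples.
const format = { sampleRate: 48000, channels: 1, bytesPerSample: 2 };

console.log(pcmFrameBytes({ ...format, frameMs: 20 })); // 1920
console.log(pcmDurationMs(96000, format));              // 1000
```

Any buffering you add before processing shows up directly in response time: waiting for 96,000 bytes in this format means waiting a full second before the agent can start reacting.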

Conclusion

WebRTC and RTMP solve different problems. WebRTC is the protocol that meeting platforms run on and that makes sub-second real-time communication possible. RTMP is the ingest protocol that broadcast infrastructure uses, increasingly on borrowed time but still dominant for live streaming ingest. For meeting bot development, understanding this distinction helps you choose the right tool for each part of your pipeline. Start building at app.meetstream.ai and let the MeetStream API handle the WebRTC complexity while you focus on what your application does with the data.

Frequently Asked Questions

What is the main difference between WebRTC and RTMP?

WebRTC is a UDP-based peer-to-peer communication framework built into browsers that achieves sub-500ms latency. RTMP is a TCP-based protocol originally designed for Flash that runs encoder-to-server streams with 2-30 seconds of latency depending on delivery method. WebRTC handles NAT traversal automatically via ICE and STUN. RTMP requires direct TCP connectivity to a media server. WebRTC is used inside meeting platforms for live communication. RTMP is used as the standard ingest protocol for YouTube Live, Twitch, and similar broadcast platforms.

Do meeting platforms like Zoom and Google Meet use WebRTC?

Yes. Google Meet, Zoom, and Microsoft Teams all use WebRTC for participant audio and video transport. Each platform's browser client uses the standard WebRTC APIs. Their backend uses Selective Forwarding Units (SFUs) to route media between participants without transcoding. When a meeting bot like MeetStream joins a meeting, it participates in this WebRTC session as a standard peer, receiving and potentially sending media streams using the same protocol as any browser participant.

When would I use RTMP for a meeting bot application?

RTMP makes sense specifically for re-streaming a meeting to a broadcast destination: YouTube Live, Twitch, Wowza, or a CDN-delivered recording. The workflow is: the meeting bot captures the meeting, your server receives video via the bot API, and FFmpeg or a media server re-encodes and pushes to RTMP ingest. For any use case that involves processing meeting audio or generating transcripts rather than broadcasting, RTMP is not involved.

What is WHIP and is it replacing RTMP?

WHIP (WebRTC HTTP Ingest Protocol) is an emerging standard that uses WebRTC as the ingest mechanism instead of RTMP. It offers sub-second ingest latency versus RTMP's typical 2-5 seconds, and it uses browser-native technology rather than a TCP legacy protocol. Cloudflare Stream and a handful of other platforms support WHIP ingest. Adoption is growing but slow because OBS, FFmpeg, and most encoding hardware support RTMP natively and WHIP requires newer software versions. RTMP will likely remain the dominant ingest protocol for several more years.

What latency should I expect from WebRTC in a meeting bot?

WebRTC peer-to-peer connections in typical office network conditions achieve 50-200ms one-way latency. SFU-based architectures (which all major meeting platforms use) add 50-150ms of additional switching latency. End-to-end latency from a speaker's microphone to a bot receiving the audio via WebRTC is typically 150-400ms. MeetStream's live audio WebSocket adds a small additional delay for frame packaging and delivery. For most processing use cases (transcription, NER, summarization), this latency is irrelevant. For real-time voice agents that need to respond in-call, it becomes a meaningful constraint to architect around.

Why do most meeting bots use WebRTC instead of RTMP?

WebRTC is the native protocol for browser-based meetings (Zoom, Google Meet, Teams all use it internally), which means a bot using WebRTC can receive the same encoded audio and video tracks that the platform's SFU forwards to any participant, without an extra transcoding step. RTMP requires the meeting platform to re-encode and push media to an RTMP endpoint, adding latency and a dependency on the platform's streaming feature.

What are the advantages of RTMP for meeting recording use cases?

RTMP is simpler to implement because it uses a well-documented streaming protocol with broad infrastructure support. If you only need a composite recording (all participants in one stream) and your meeting platform supports RTMP out, RTMP pipelines are easier to operate than WebRTC media servers. Tools like Nginx-RTMP and AWS IVS handle RTMP ingestion reliably at scale.

How do I set up a WebRTC media server for a meeting bot?

Use mediasoup or Janus as your server-side WebRTC SFU. The bot connects to the meeting platform via WebRTC, and the SFU bridges the WebRTC connection to your processing pipeline. Each participant's audio and video track arrives as a separate RTP stream, which you forward to your transcription and recording services.

Which protocol has lower latency: WebRTC or RTMP?

WebRTC has lower latency, typically under 500ms end-to-end, because it uses UDP with SRTP and avoids the buffering inherent in RTMP's TCP transport. Direct RTMP latency typically runs 2-5 seconds due to the stream buffer required for smooth delivery, and rises to 10-30 seconds once the stream is repackaged as HLS. For real-time transcription, WebRTC's lower latency makes it the better choice.