Meeting Bot Infrastructure: What Developers Need to Know

One of the most consistently underestimated engineering projects in early-stage AI companies is building meeting bot infrastructure. The surface area looks small: join a meeting, get the audio, run it through an STT API. Teams estimate two or three sprints. Six months later, they are still debugging a memory leak in a long-running Chromium process, handling a breaking change from Zoom's OAuth policy, and trying to figure out why audio frames are dropping when the host's network degrades.

The gap between the apparent simplicity and the actual complexity is what makes this a good candidate for a managed API layer. But before you can make that decision intelligently, you need to understand what the infrastructure actually involves.

This article is a technical architecture breakdown of meeting bot infrastructure: what each layer does, where the complexity concentrates, and what a managed platform abstracts away vs. what you own regardless.

In this guide, we'll cover each layer of the stack from platform integration through webhook delivery, where the operational burden concentrates, and how to think about build vs. buy. Let's get into it.

Layer 1: Platform Integration

The foundation layer is getting a bot process into a live video call. Each major platform has different requirements and different approaches to media extraction.

Google Meet is the most accessible. Meeting URLs are public (no API key for joining as a guest), and a headless Chromium browser can join a Google Meet session without any special credentials. The bot navigates to the meeting URL, grants microphone and camera permissions to the virtual devices, and enters the call. There is no official media extraction API, but browser-based capture works reliably.

Microsoft Teams requires more setup. The Bot Framework approach uses Graph API permissions and a registered Azure app. The Teams client can also be automated via browser, but Teams' web client has historically been more resistant to headless automation. Production Teams support typically uses the official bot registration path for compliance and stability.

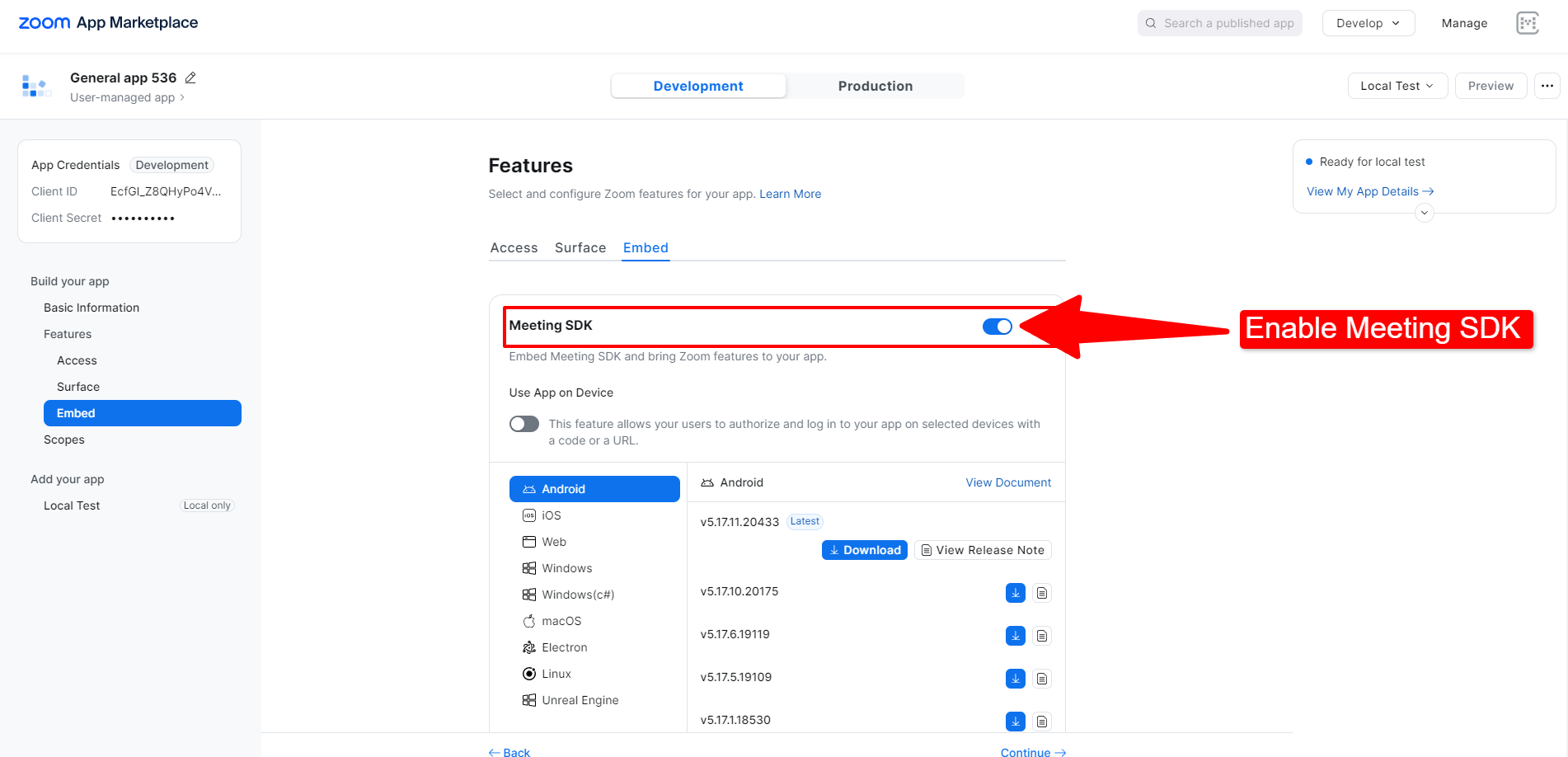

Zoom is the most constrained. Zoom explicitly requires that automated participants be registered applications in their App Marketplace. The official path uses Zoom's Meeting SDK (a JavaScript or native SDK that requires a client context) with an app that has been approved for production. There is a browser automation path that some teams have used, but Zoom actively works to detect and block automated participants that are not registered apps, and using this approach in production violates their terms of service.

This means any production meeting bot platform that claims Zoom support must have a registered Zoom App Marketplace integration. Maintaining this requires ongoing compliance with Zoom's app review processes and policy updates. Zoom has changed OAuth scopes, deprecate API versions, and update bot detection behavior multiple times over the past two years.

Maintaining these three separate integration paths, keeping current with each platform's policy changes, and handling the differences in session management, waiting rooms, and participant events is the first major ongoing cost of owning this infrastructure.

Layer 2: Virtual Display and Audio Environment

For the browser-based capture path, the bot process needs a complete virtual graphics and audio environment. In a Linux container, this means:

Xvfb (X Virtual Framebuffer) provides a virtual display. The browser renders meeting UI to this display, which allows video capture using tools like ffmpeg or the browser's media capture APIs.

PulseAudio or a similar virtual audio device receives the decoded audio from the browser's WebRTC pipeline. The operating system's audio mixer receives audio from all participants, and the bot process reads from a virtual audio sink.

Managing these virtual devices in containerized environments has its own failure modes. Xvfb instances leak memory in long-running sessions. PulseAudio can become unresponsive if the browser crashes or disconnects and reconnects. In a multi-tenant environment where hundreds of concurrent bots are running, these failure modes need to be detected and recovered automatically.

Browser memory usage in long meetings is also non-trivial. A Chromium instance running for two hours in a 10-participant call consumes significantly more memory than one that joined five minutes ago. Without active resource monitoring and graceful shutdown logic, containers run into OOM conditions that cause the bot to drop from the call without a clean exit event.

Layer 3: Media Extraction Pipeline

Once the bot is in the call with access to the audio environment, media extraction happens in two modes: post-call recording and real-time streaming.

Post-call recording captures the audio (and optionally video) from the virtual devices, encodes it, and stores it for later processing. This is the simpler path. The main decisions are: format (PCM wav for lossless, Opus for compressed), mixing strategy (single mixed file vs. per-speaker tracks), and storage destination.

Per-speaker audio extraction is significantly harder. Browser-based WebRTC gives you access to individual MediaStreamTrack objects per participant, but routing these to separate virtual audio sinks and recording them independently requires careful Web Audio API manipulation inside the browser context. The challenge is that participants joining and leaving mid-call require dynamic track management, and the mapping from MediaStreamTrack to named participant requires reading DOM state from the meeting UI.

Real-time audio streaming adds the requirement that audio frames be delivered to a downstream consumer with low enough latency to be useful. This means the extraction pipeline needs to buffer audio frames, encode them to the target format (PCM16 LE at 48kHz is the standard for STT compatibility), and deliver them over a WebSocket with minimal added latency. Network hiccups on either end need to be handled gracefully without corrupting the frame sequence.

Video extraction follows a similar pattern. Recording the full meeting as a single video is straightforward with ffmpeg capturing the Xvfb output. Per-participant video streams require identifying and cropping individual participant tiles from the meeting UI layout, which changes dynamically as participants join, leave, or toggle video.

Layer 4: Transcription Orchestration

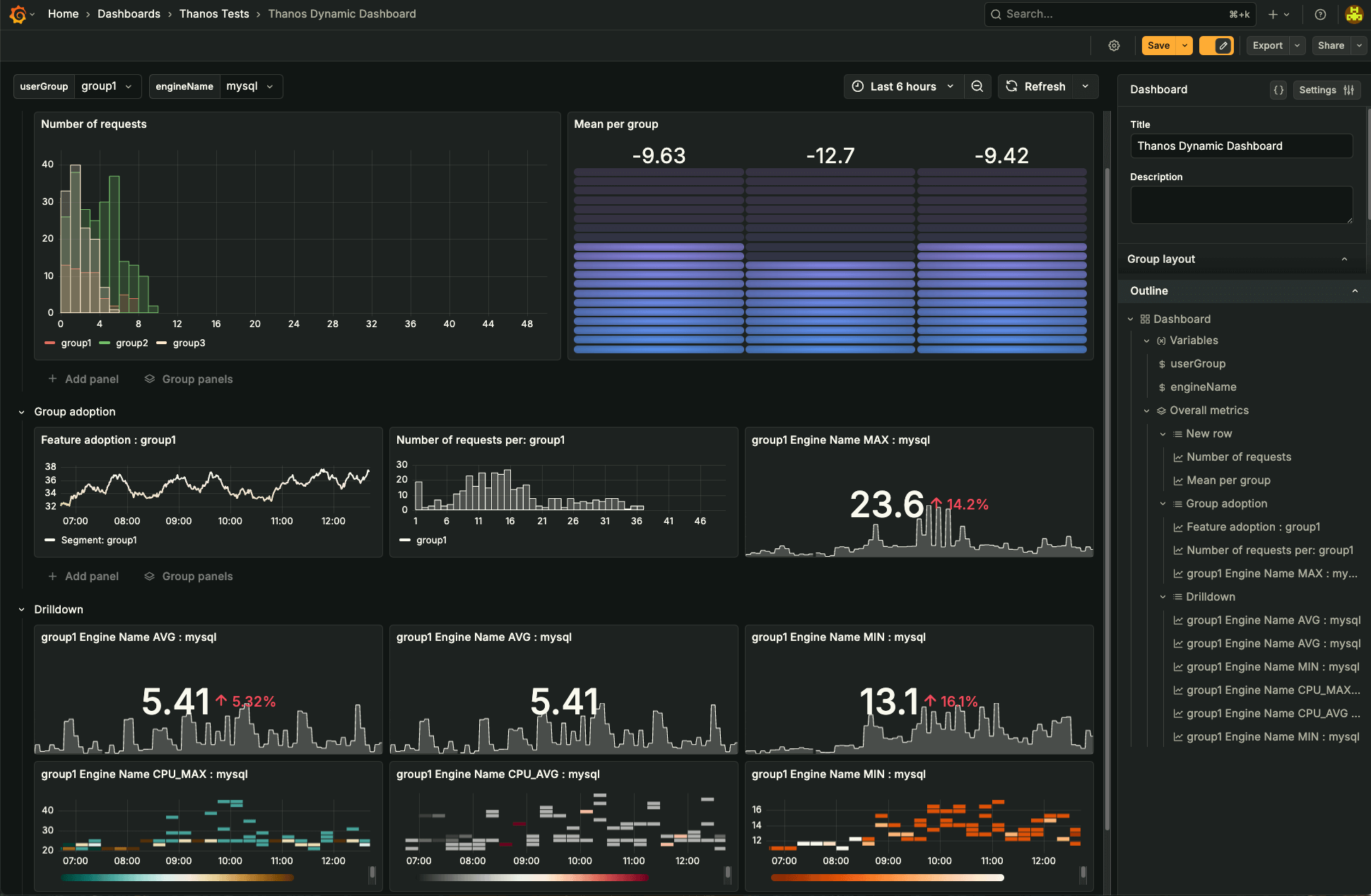

Audio gets transcribed. The orchestration layer sits between the raw audio and the STT provider and handles provider routing, retry logic, language detection, and result normalization.

Different STT providers have different authentication mechanisms, different audio format requirements (some want raw PCM, some want wav containers, some want Opus), different rate limits, and different output formats. A production transcription orchestration layer normalizes these differences so that the rest of the pipeline sees a consistent interface regardless of which provider processed a given call.

Retry logic matters more than it might seem. STT provider outages happen. API rate limits get hit during peak hours. A transcription orchestrator that fails silently leaves teams with missing transcripts and no way to recover. The production behavior should be: queue the audio, retry with backoff, fall back to an alternative provider if the primary is unavailable, and alert on repeated failures.

For real-time streaming transcription, the additional complexity is managing the WebSocket connection to the STT provider in parallel with the WebSocket delivering audio from the meeting. Frame sequencing, partial result handling, and the word_is_final / end_of_turn state machine all need to be implemented correctly to avoid double-counting words or missing segments.

Layer 5: Webhook Delivery

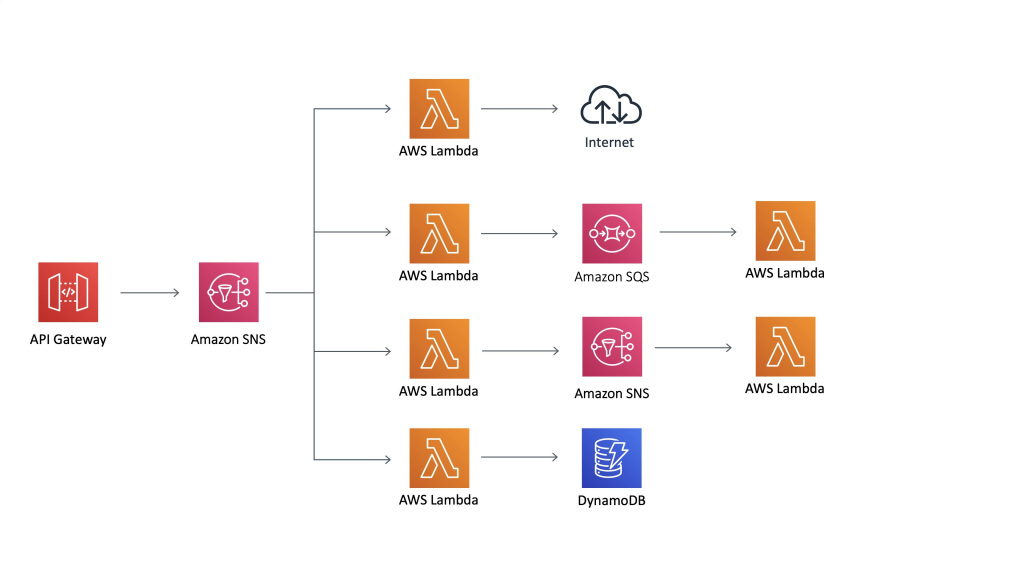

Processed results, transcripts, audio files, video files, status events, need to be delivered to the developer's webhook endpoint reliably. This sounds like a solved problem, but reliable webhook delivery has a long tail of failure modes.

Webhook endpoints go down. They return 500 errors. They time out after 30 seconds when the payload is large. They have misconfigured TLS. They are behind a load balancer that drops connections under load. A production meeting bot platform needs retry logic with exponential backoff, dead letter queuing for events that cannot be delivered after N retries, payload size limits with resumable delivery for large transcripts, and a delivery log that developers can query to debug missed events.

Event ordering matters too. If transcription.processed arrives before bot.stopped, the developer's handler might try to process a transcript for a bot that the system has not yet marked as completed. A well-designed webhook system delivers events in guaranteed order or includes enough metadata for the receiver to sort them correctly.

Layer 6: Scaling and Orchestration

A single bot runs fine. A hundred concurrent bots require container orchestration, resource scheduling, and autoscaling. Each bot is a resource-heavy process: a Chromium instance, virtual display, audio devices, and active WebSocket connections. Scheduling these across a container cluster without running into resource contention, while ensuring fast spin-up when a bot needs to join a meeting that starts in 30 seconds, requires real infrastructure engineering.

Geographic distribution matters for latency. A bot in a US data center joining a meeting with participants in Asia will see higher audio latency than a bot deployed in a regional edge location. Production platforms run bot infrastructure in multiple regions and route based on meeting participant geography where possible.

What a Managed Platform Abstracts

Every layer described above, platform integration, virtual display management, media extraction, transcription orchestration, webhook delivery, and container scaling, is what a managed meeting recording API abstracts. What you own regardless of which path you choose: your analysis logic, your data storage, your integration code to downstream systems, and your product experience.

The build-vs-buy decision comes down to whether you have the team to build and maintain all six layers, and whether doing so is differentiated for your product. For most teams, it is not. The differentiation lives above the abstraction boundary.

How MeetStream Fits In

MeetStream manages the full infrastructure stack described above, from platform integration through webhook delivery. The API exposes a clean interface: POST a meeting link, receive structured events. Per-speaker PCM audio streaming, configurable transcription providers, calendar integration, and in-meeting AI agent deployment are all available through the same interface. Get started free at meetstream.ai.

Conclusion

Meeting bot infrastructure is deeper than it looks from the outside. The six layers, platform integration, virtual display management, media extraction, transcription orchestration, webhook delivery, and scaling, each have their own failure modes and operational requirements. Understanding the full stack makes it easier to evaluate managed platforms intelligently and to make a build-vs-buy decision that accounts for the real engineering cost. See the full API reference at docs.meetstream.ai.

Frequently Asked Questions

What does meeting bot infrastructure involve technically?

Meeting bot infrastructure spans six main layers: platform integration (Zoom, Google Meet, Teams each require different approaches), virtual display and audio environment management for browser-based capture, media extraction pipelines for audio and video, transcription orchestration across STT providers, reliable webhook delivery with retry logic, and container scaling for concurrent bots. Each layer has distinct failure modes that require ongoing operational attention.

Why does building a meeting bot take 6-12 months?

The complexity concentrates in several places: Zoom requires App Marketplace registration and ongoing compliance with policy changes, per-speaker audio extraction requires careful Web Audio API manipulation with dynamic participant management, transcription orchestration needs provider failover and retry logic, and running hundreds of concurrent browser instances requires real container orchestration. Most teams underestimate the operational surface area and discover these challenges progressively over months.

What is the difference between post-call recording and real-time audio streaming?

Post-call recording captures audio to file during the meeting and makes it available after the call ends. Real-time streaming delivers audio frames to a WebSocket consumer as the meeting progresses, enabling live transcription, in-meeting AI agents, and real-time coaching features. Real-time streaming requires lower-latency frame delivery, streaming STT providers, and handling partial transcript results that get revised as the model hears more context.

What is meeting recording API infrastructure?

A meeting recording API is a managed service that handles the platform integration, media extraction, and delivery layers of meeting bot infrastructure. Developers call a single API endpoint with a meeting link and receive structured transcript data, audio files, and status events via webhook. The managed service handles virtual display management, Zoom App compliance, transcription provider orchestration, and container scaling, all the operational complexity below the developer's abstraction boundary.

How do meeting bot platforms handle Zoom's App Marketplace requirements?

Production meeting bot platforms that support Zoom maintain a registered Zoom App Marketplace application. This requires an initial app review process, ongoing compliance with Zoom's platform policies, and updating the integration when Zoom changes OAuth scopes or API versions. Platforms that bypass this via browser automation violate Zoom's terms of service and are unstable as Zoom updates its bot detection. Any compliant production platform supporting Zoom has gone through the App Marketplace process.