Automated Meeting Summaries: Building an NLP Pipeline

A meeting that does not produce a written record might as well not have happened for anyone who was not in the room. Within a day, participants start to forget specific decisions. Within a week, the reasoning behind those decisions is often gone entirely. Meeting notes solve this, but consistent note-taking is one of the most reliably skipped tasks in any team's workflow.

An automated meeting summary pipeline changes the economics of this. Instead of requiring someone to take notes during the meeting (which splits their attention) or reconstruct them afterward (which requires memory), the pipeline generates a structured summary automatically from the transcript. Decisions, action items, open questions, and a narrative summary are all produced without human input.

There are two fundamentally different approaches to meeting summarization in natural language processing. Extractive summarization picks the most representative sentences from the transcript itself. Abstractive summarization generates new text that captures the meaning without necessarily appearing in the source. For meeting summaries specifically, abstractive is almost always better: meeting transcripts contain false starts, filler words, and repetition that extractive approaches faithfully reproduce, producing summaries that read like unedited transcripts rather than useful documents.

In this guide, you will build a complete pipeline. A webhook handler receives MeetStream's bot.stopped event and fetches the transcript from the MeetStream API. It then formats the transcript for an LLM and extracts structured JSON containing a summary, decisions, action items, open questions, and key topics. Finally, it pushes the result to Slack and Notion. We will also cover an extractive, BERT-based approach for environments where external LLM API calls are not an option. Let's get into it.

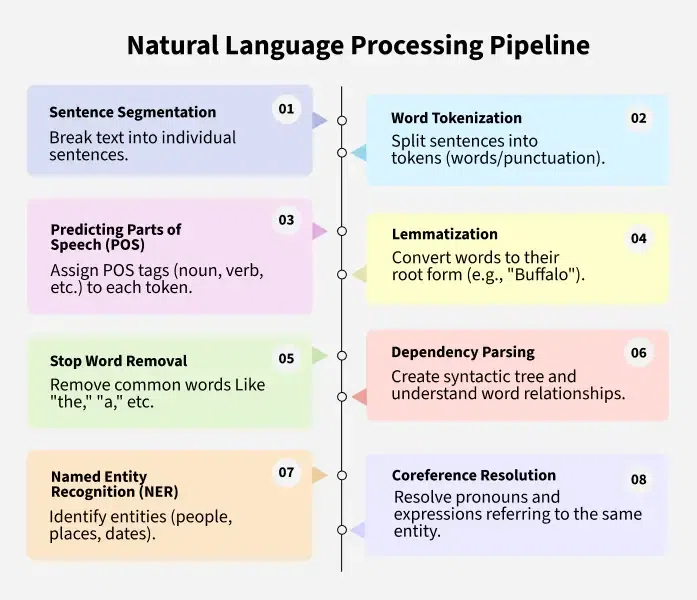

Extractive Summarization with BERT-Based Sentence Scoring

Extractive summarization ranks sentences by importance and returns the top k. The classic TextRank algorithm builds a graph of sentences connected by similarity and ranks them with PageRank. A simpler alternative, used below, embeds each sentence and keeps the ones closest to the document centroid (the average of all sentence embeddings).

```python
from typing import Dict, List

import numpy as np
from sentence_transformers import SentenceTransformer

# Small, fast sentence-embedding model (downloaded on first use)
model = SentenceTransformer("all-MiniLM-L6-v2")


def extractive_summary(transcript: List[Dict], top_k: int = 5) -> str:
    # Skip very short turns ("yeah", "sounds good") that carry little content
    sentences = [turn["text"] for turn in transcript if len(turn["text"]) > 20]
    if not sentences:
        return ""

    embeddings = model.encode(sentences)
    centroid = embeddings.mean(axis=0)

    # Score each sentence by cosine similarity to the centroid
    scores = [
        np.dot(emb, centroid) / (np.linalg.norm(emb) * np.linalg.norm(centroid))
        for emb in embeddings
    ]

    # Take the top-k sentences, then restore their original order
    top_indices = sorted(
        sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:top_k]
    )
    return " ".join(sentences[i] for i in top_indices)
```

The output is readable but mechanical. Selected turns keep their original order, so some of the meeting's flow is preserved. The main weakness is redundancy. Every sentence is scored against the same centroid, so a point that comes up repeatedly yields several near-duplicate sentences. Turns that merely restate the dominant topic can also outrank the actual decisions. For a quick recap, extractive works. For a shareable meeting document, abstractive is better.
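For comparison, here is a minimal sketch of the graph-based TextRank approach mentioned at the start of this section. It is a variant rather than the original algorithm. The original TextRank measured sentence similarity by word overlap. This version instead reuses sentence embeddings (for example, the output of `model.encode(sentences)` above) as cosine-similarity edge weights and ranks sentences with PageRank-style power iteration. The function names `textrank_scores` and `textrank_summary` are hypothetical.

```python
from typing import List

import numpy as np


def textrank_scores(embeddings: np.ndarray, damping: float = 0.85,
                    tol: float = 1e-8, max_iter: int = 200) -> np.ndarray:
    """PageRank over a graph whose edge weights are sentence cosine similarities."""
    # Normalize rows so that a dot product equals cosine similarity
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.clip(norms, 1e-12, None)
    sim = unit @ unit.T
    np.fill_diagonal(sim, 0.0)      # no self-loops
    sim = np.clip(sim, 0.0, None)   # ignore negative similarities

    n = sim.shape[0]
    col_sums = sim.sum(axis=0)
    # Column-stochastic transition matrix; a sentence with no edges spreads its score evenly
    transition = np.divide(sim, col_sums, out=np.full_like(sim, 1.0 / n), where=col_sums > 0)

    scores = np.full(n, 1.0 / n)
    for _ in range(max_iter):
        updated = (1.0 - damping) / n + damping * (transition @ scores)
        if np.abs(updated - scores).sum() < tol:
            return updated
        scores = updated
    return scores


def textrank_summary(sentences: List[str], embeddings: np.ndarray, top_k: int = 5) -> str:
    scores = textrank_scores(np.asarray(embeddings, dtype=float))
    # Highest-scoring sentences, restored to their original order
    top = sorted(np.argsort(-scores)[:top_k].tolist())
    return " ".join(sentences[i] for i in top)
```

A sentence's score depends on how strongly it connects to many others. As a result, TextRank tends to favor central, frequently echoed points and shares the centroid method's redundancy problem.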

Abstractive Summarization with a Structured LLM Prompt

The key to good abstractive meeting summarization is a prompt that constrains the output to a specific structure. Free-form summarization prompts produce variable-length, inconsistently organized summaries that are hard to parse programmatically. A structured prompt, combined with JSON output mode, produces an object with the same fields on nearly every call.

```python
import json
from typing import Dict, List

import openai

# Literal braces in the example object are doubled ({{ }}) so that
# str.format() substitutes only {transcript} instead of raising KeyError.
MEETING_SUMMARY_PROMPT = """You are analyzing a meeting transcript. Extract the following fields as JSON:
- summary: A 2-4 sentence narrative summary of what was discussed and the overall outcome. Write in past tense.
- decisions: List of decisions made. Each decision should be a complete sentence starting with a past-tense verb. Include who made or approved the decision if clear.
- action_items: List of tasks assigned. Format each as {{"owner": "Name", "task": "verb-first description", "deadline": "date or null"}}.
- open_questions: List of unresolved questions or topics deferred to a future meeting.
- key_topics: List of 3-5 main topics discussed (short noun phrases).

If a field has no entries, return an empty list.
Do not include discussion items in decisions. Do not include agreed facts in open questions.

Transcript:
{transcript}"""

# Reads OPENAI_API_KEY from the environment
client = openai.OpenAI()

# Rough input cap: 50,000 characters is about 12,000 tokens (~8,000 words).
# gpt-4o's context window is 128,000 tokens, so raise this for long meetings.
MAX_TRANSCRIPT_CHARS = 50_000


def abstractive_summary(transcript: List[Dict]) -> Dict:
    formatted = "\n".join(f"{turn['speaker']}: {turn['text']}" for turn in transcript)

    if len(formatted) > MAX_TRANSCRIPT_CHARS:
        formatted = formatted[:MAX_TRANSCRIPT_CHARS] + "\n[transcript truncated]"

    prompt = MEETING_SUMMARY_PROMPT.format(transcript=formatted)
    response = client.chat.completions.create(
        model="gpt-4o",
        messages=[{"role": "user", "content": prompt}],
        response_format={"type": "json_object"},
        temperature=0,
    )
    content = response.choices[0].message.content
    return json.loads(content)
```

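Setting temperature=0 makes the output as repeatable as possible, although OpenAI does not guarantee identical results across calls. The response_format json_object setting guarantees syntactically valid JSON, so there are no markdown code fences to strip. It does not guarantee that every expected field is present. For very long meetings, truncating the transcript before the token limit prevents errors and keeps costs predictable. The price is that the end of the meeting, which can contain the final decisions, is dropped.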
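JSON mode constrains syntax rather than content, so it can help to normalize the parsed object before handing it to the Slack and Notion code. The sketch below is a hypothetical helper; `normalize_summary` is not part of any library. It fills in missing fields, wraps stray single values in lists, and converts bare-string action items into objects. It also maps quoted "null" deadlines to `None`.

```python
from typing import Any, Dict, List

# List-valued fields the prompt asks for, besides action_items
TEXT_LIST_FIELDS = ("decisions", "open_questions", "key_topics")


def _as_list(value: Any) -> List[Any]:
    # Missing/null becomes [], a lone value becomes a one-item list
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def normalize_summary(raw: Dict[str, Any]) -> Dict[str, Any]:
    """Coerce parsed model output into the shape push_to_slack/push_to_notion expect."""
    result: Dict[str, Any] = {"summary": str(raw.get("summary") or "")}
    for field in TEXT_LIST_FIELDS:
        result[field] = [str(v) for v in _as_list(raw.get(field)) if v]

    items: List[Dict[str, Any]] = []
    for item in _as_list(raw.get("action_items")):
        if isinstance(item, str):
            item = {"task": item}  # model returned a bare string instead of an object
        if not isinstance(item, dict) or not item.get("task"):
            continue  # drop entries with no task text
        deadline = item.get("deadline")
        if deadline in ("", "null", "None"):
            deadline = None  # the prompt says "date or null"; models sometimes quote it
        items.append({
            "owner": item.get("owner") or "TBD",
            "task": str(item["task"]),
            "deadline": deadline,
        })
    result["action_items"] = items
    return result
```

In the pipeline, call it as `summary = normalize_summary(abstractive_summary(transcript))`.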

Fetching the Transcript from MeetStream

The bot.stopped webhook fires when the bot leaves the meeting. At this point the full transcript is available. Fetch it immediately: the webhook payload includes the bot ID, and the transcript endpoint returns a list of speaker-labeled turns.

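```python
import os
from typing import Dict, List

import httpx

# Placeholder fallback; load the real key from the environment
MEETSTREAM_API_KEY = os.environ.get("MEETSTREAM_API_KEY", "YOUR_API_KEY")


async def fetch_transcript(bot_id: str) -> List[Dict]:
    # httpx defaults to a 5-second timeout; allow longer for large transcripts
    async with httpx.AsyncClient(timeout=30.0) as client:
        response = await client.get(
            f"https://api.meetstream.ai/api/v1/transcript/{bot_id}/get_transcript",
            headers={"Authorization": f"Token {MEETSTREAM_API_KEY}"},
        )
        response.raise_for_status()
        # Expected: a list of speaker-labeled turns with "speaker" and "text" keys
        return response.json()
```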
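A transcript request might return an empty result or fail transiently when `bot.stopped` fires. This guide does not document MeetStream's processing timing, so treat that as an assumption to verify. If it does happen, a small retry wrapper with exponential backoff keeps the handler from giving up on the first attempt. `fetch_with_retry` is a hypothetical helper that takes the fetch coroutine as a parameter, which also makes it easy to test.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Tuple, Type


async def fetch_with_retry(
    fetch: Callable[[str], Awaitable[List[Dict]]],
    bot_id: str,
    attempts: int = 5,
    base_delay: float = 2.0,
    retry_on: Tuple[Type[BaseException], ...] = (Exception,),
) -> List[Dict]:
    """Call fetch(bot_id) until it returns a non-empty transcript, with exponential backoff."""
    for attempt in range(attempts):
        try:
            transcript = await fetch(bot_id)
            if transcript:
                return transcript
        except retry_on:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
        if attempt < attempts - 1:
            # Wait base_delay, 2*base_delay, 4*base_delay, ... between attempts
            await asyncio.sleep(base_delay * 2 ** attempt)
    return []  # every attempt returned an empty transcript
```

In the webhook handler, `await fetch_with_retry(fetch_transcript, bot_id, retry_on=(httpx.HTTPError,))` would replace the direct call. Narrowing `retry_on` to `httpx.HTTPError` avoids retrying on programming errors. Long backoff delays inside the request handler also delay the webhook response, which is another reason to move this work into a background task.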

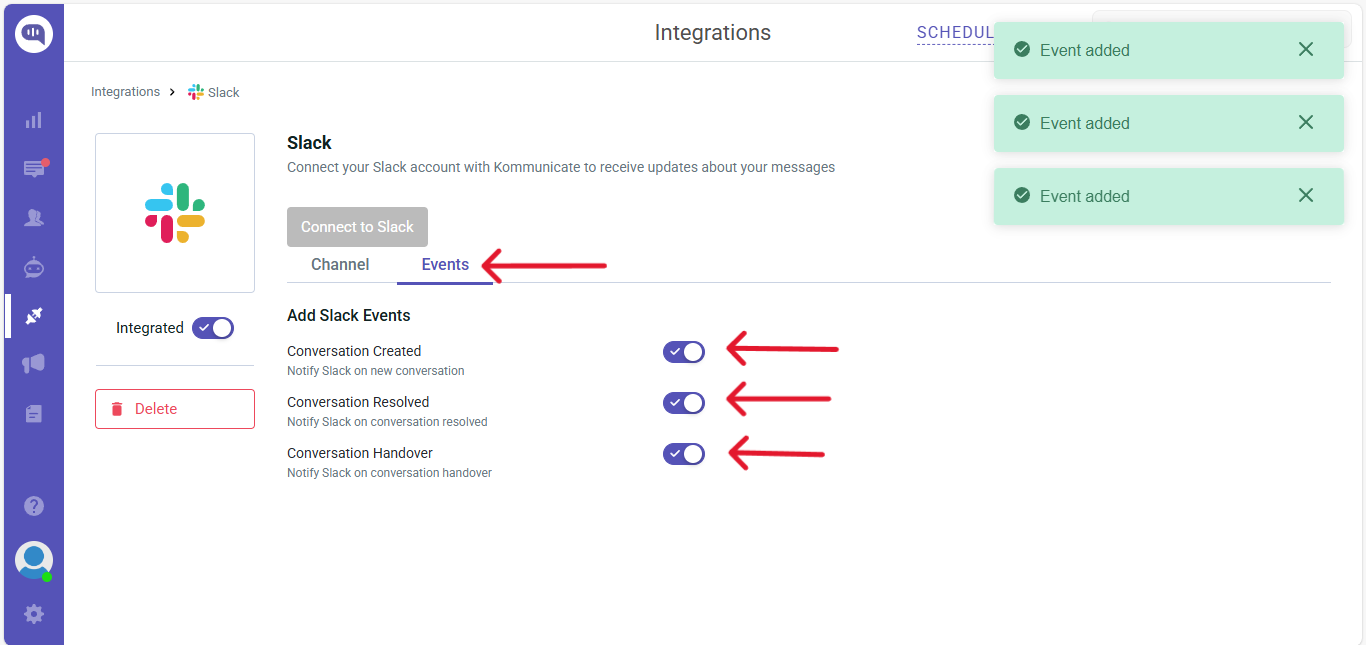

Pushing Summaries to Slack

Formatting the summary fields as Slack Block Kit blocks lets people read the summary without leaving Slack. The example below posts the blocks to an incoming webhook URL.

```python
from typing import Dict

import httpx

SLACK_WEBHOOK_URL = "https://hooks.slack.com/services/YOUR/WEBHOOK/URL"


async def push_to_slack(summary: Dict, meeting_title: str) -> None:
    # "or 'TBD'" also covers an explicit null owner from the model
    action_items_text = "\n".join(
        f"- [{item.get('owner') or 'TBD'}] {item.get('task', '')}"
        for item in summary.get("action_items", [])
    ) or "None"
    decisions_text = "\n".join(
        f"- {d}" for d in summary.get("decisions", [])
    ) or "None"

    blocks = [
        {
            "type": "header",
            # Header text must be plain_text and at most 150 characters
            "text": {"type": "plain_text", "text": f"Meeting Summary: {meeting_title}"[:150]},
        },
        {
            "type": "section",
            # Avoid sending an empty text string
            "text": {"type": "mrkdwn", "text": summary.get("summary") or "No summary generated."},
        },
        {
            "type": "section",
            "fields": [
                {"type": "mrkdwn", "text": f"*Decisions*\n{decisions_text}"},
                {"type": "mrkdwn", "text": f"*Action Items*\n{action_items_text}"},
            ],
        },
    ]

    if summary.get("open_questions"):
        oq_text = "\n".join(f"- {q}" for q in summary["open_questions"])
        blocks.append({
            "type": "section",
            "text": {"type": "mrkdwn", "text": f"*Open Questions*\n{oq_text}"},
        })

    async with httpx.AsyncClient() as client:
        response = await client.post(
            SLACK_WEBHOOK_URL,
            # Top-level "text" is the fallback shown in notifications
            json={"text": f"Meeting Summary: {meeting_title}", "blocks": blocks},
        )
        response.raise_for_status()
```
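Slack enforces size limits on Block Kit payloads. Each text entry in a section's `fields` array is limited to 2,000 characters, and a section's `text` to 3,000. A long meeting can exceed these with its decisions or action items. One way to stay within them is to render each list as one or more full-width section blocks and start a new block whenever the next item would overflow. The sketch below does this with a hypothetical helper, `list_to_sections`.

```python
from typing import Dict, List

SLACK_SECTION_TEXT_LIMIT = 3000  # max characters in a section block's text


def list_to_sections(title: str, items: List[str],
                     limit: int = SLACK_SECTION_TEXT_LIMIT) -> List[Dict]:
    """Render a titled bullet list as one or more mrkdwn section blocks within limit."""
    blocks: List[Dict] = []
    current = f"*{title}*"
    for item in items:
        entry = f"- {item}"[:limit]  # cut a single oversized item so it fits on its own
        if len(current) + 1 + len(entry) > limit:
            # Next line would overflow: close this block and start a new one
            blocks.append({"type": "section", "text": {"type": "mrkdwn", "text": current}})
            current = entry
        else:
            current = f"{current}\n{entry}"
    blocks.append({"type": "section", "text": {"type": "mrkdwn", "text": current}})
    return blocks
```

For example, `blocks.extend(list_to_sections("Decisions", summary.get("decisions", [])))` would replace the Decisions field. Slack also limits a message to 50 blocks, so very long lists may still need trimming.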

Pushing Summaries to Notion

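Creating a Notion page per meeting gives your team a searchable archive of decisions and action items. A POST to the Notion API's pages endpoint creates the page as a new row in a database, with the summary content supplied as child blocks. The integration behind NOTION_TOKEN must be shared with that database.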

```python
from typing import Dict

import httpx

NOTION_TOKEN = "YOUR_NOTION_TOKEN"
NOTION_DATABASE_ID = "YOUR_DATABASE_ID"


async def push_to_notion(summary: Dict, meeting_title: str) -> None:
    children = [
        {
            "object": "block",
            "type": "paragraph",
            "paragraph": {
                "rich_text": [{"type": "text", "text": {"content": summary.get("summary", "")}}]
            },
        }
    ]

    if summary.get("decisions"):
        children.append({
            "object": "block",
            "type": "heading_2",
            "heading_2": {"rich_text": [{"type": "text", "text": {"content": "Decisions"}}]},
        })
        for decision in summary["decisions"]:
            children.append({
                "object": "block",
                "type": "bulleted_list_item",
                "bulleted_list_item": {
                    "rich_text": [{"type": "text", "text": {"content": decision}}]
                },
            })

    if summary.get("action_items"):
        children.append({
            "object": "block",
            "type": "heading_2",
            "heading_2": {"rich_text": [{"type": "text", "text": {"content": "Action Items"}}]},
        })
        for item in summary["action_items"]:
            task_text = f"[{item.get('owner') or 'TBD'}] {item.get('task', '')}"
            if item.get("deadline"):
                task_text += f" (by {item['deadline']})"
            # to_do blocks render as checkboxes in Notion
            children.append({
                "object": "block",
                "type": "to_do",
                "to_do": {
                    "rich_text": [{"type": "text", "text": {"content": task_text}}],
                    "checked": False,
                },
            })

    async with httpx.AsyncClient() as client:
        response = await client.post(
            "https://api.notion.com/v1/pages",
            headers={
                "Authorization": f"Bearer {NOTION_TOKEN}",
                "Notion-Version": "2022-06-28",
            },
            json={
                "parent": {"database_id": NOTION_DATABASE_ID},
                # "title" is the fixed property ID of a database's title column
                "properties": {"title": {"title": [{"text": {"content": meeting_title}}]}},
                "children": children,
            },
        )
        response.raise_for_status()
```
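The Notion API has similar limits. Each rich text object's `content` is capped at 2,000 characters, and a single request can include at most 100 child blocks. The hypothetical helpers below split a long string into several rich text objects and group blocks into batches of 100. The first batch can go in the page-creation request above. The rest can be appended with `PATCH https://api.notion.com/v1/blocks/{page_id}/children`, using the `id` returned when the page is created.

```python
from typing import Dict, List

NOTION_TEXT_LIMIT = 2000      # max characters in one rich_text object's content
NOTION_CHILDREN_LIMIT = 100   # max blocks in one create/append request


def rich_text_chunks(content: str, limit: int = NOTION_TEXT_LIMIT) -> List[Dict]:
    """Split a long string into consecutive rich_text objects within Notion's limit."""
    if not content:
        return [{"type": "text", "text": {"content": ""}}]
    return [
        {"type": "text", "text": {"content": content[i : i + limit]}}
        for i in range(0, len(content), limit)
    ]


def batch_blocks(blocks: List[Dict], size: int = NOTION_CHILDREN_LIMIT) -> List[List[Dict]]:
    """Group blocks for the page-creation call and any follow-up append calls."""
    return [blocks[i : i + size] for i in range(0, len(blocks), size)]
```

For example, the summary paragraph could use `"rich_text": rich_text_chunks(summary.get("summary", ""))` so that an unusually long narrative is split rather than rejected.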

Complete Webhook Handler

The full webhook handler ties all pieces together. It handles the bot.stopped event, fetches the transcript, generates the summary, and fans out to both Slack and Notion in parallel.

```python
import asyncio

from fastapi import FastAPI, Request

app = FastAPI()


@app.post("/webhook/meetstream")
async def handle_meetstream_event(request: Request):
    body = await request.json()
    if body.get("event") != "bot.stopped":
        return {"status": "ignored"}

    bot_id = body["bot_id"]
    meeting_title = body.get("meeting_title", f"Meeting {bot_id[:8]}")

    transcript = await fetch_transcript(bot_id)
    if not transcript:
        return {"status": "no_transcript"}

    # abstractive_summary uses the synchronous OpenAI client; run it in a
    # worker thread so it does not block the event loop
    summary = await asyncio.to_thread(abstractive_summary, transcript)

    # Push to Slack and Notion concurrently
    await asyncio.gather(
        push_to_slack(summary, meeting_title),
        push_to_notion(summary, meeting_title),
    )
    return {"status": "ok", "decisions": len(summary.get("decisions", []))}
```

Conclusion

An automated meeting summary pipeline eliminates the most consistently skipped task in team workflows. The structured LLM approach produces consistent, parseable output that routes cleanly to Slack, Notion, and CRM systems. The MeetStream webhook lifecycle makes integration straightforward: a single bot.stopped event triggers the entire pipeline. If you want to test this with a real meeting, create a bot at app.meetstream.ai and point the webhook URL at your server to see summaries flowing within minutes of your next call.

What is the difference between extractive and abstractive meeting summarization?

Extractive summarization selects and returns actual sentences from the transcript, ranked by importance. Abstractive summarization generates new text that captures the meaning. For meeting transcripts, abstractive is generally more useful because transcripts contain filler words, false starts, and repetition that extractive approaches preserve. Abstractive summaries read like written documents; extractive summaries read like transcript excerpts. The tradeoff is that abstractive summarization requires a capable LLM or a fine-tuned sequence-to-sequence model, while extractive approaches can run with sentence embeddings and cosine similarity on local hardware.

How long does GPT-4o take to summarize a one-hour meeting?

At common speaking rates of roughly 120 to 160 words per minute, a one-hour meeting transcript typically runs to about 7,000 to 10,000 words. GPT-4o typically summarizes this in roughly 3 to 10 seconds, depending on server load and output length. The structured JSON prompt tends to produce shorter completions than a free-form summary prompt, which reduces both latency and cost. If latency matters, run the summarization asynchronously after the webhook responds: the webhook returns immediately, and the summary is pushed to Slack and Notion shortly afterward.

Can I generate meeting summaries without sending data to OpenAI?

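Yes. Self-hosted options include Mistral-7B, Llama-3-8B, and similar open models served with Ollama or vLLM. These models are smaller than GPT-4o and tend to produce less accurate summaries of complex meetings, but they can handle straightforward decision and action-item extraction reasonably well. Both Ollama and vLLM can expose an OpenAI-compatible API. The abstractive_summary function can often be reused by pointing openai.OpenAI(base_url=...) at the local server and changing the model name. You can also use the extractive approach with sentence-transformers, which runs entirely locally with no external API calls and no data leaving your infrastructure.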

How do I handle very long meeting transcripts that exceed the LLM context window?

Whether a transcript fits depends on the model. GPT-4o's 128,000-token context window holds many hours of conversation. By contrast, a self-hosted model with an 8,000-token window can overflow on a single one-hour meeting, and the example code above caps input at 50,000 characters regardless. Two strategies work. Chunked summarization splits the transcript into segments, summarizes each, then summarizes the summaries. Hierarchical prompting passes only the most information-dense turns, filtered by extractive scoring, to the LLM. Chunked summarization is simpler to implement. Hierarchical prompting produces better results when the key decisions are concentrated in specific parts of a long meeting.
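Chunked summarization needs two pieces: a splitter that respects speaker-turn boundaries, and a map-reduce step that summarizes the chunks and then their summaries. The sketch below uses a character budget as a stand-in for tokens. A rough rule of thumb for English is about four characters per token, so the 8,000-character default is around 2,000 tokens. `chunk_transcript` and `map_reduce_summary` are hypothetical names. `summarize` can be any function that maps text to a summary string, such as a wrapper around an LLM call.

```python
from typing import Callable, Dict, List


def chunk_transcript(transcript: List[Dict], max_chars: int = 8000) -> List[str]:
    """Group 'speaker: text' lines into chunks of at most max_chars characters.
    Turns are kept whole unless a single turn is longer than max_chars."""
    chunks: List[str] = []
    current: List[str] = []
    size = 0  # length of "\n".join(current)
    for turn in transcript:
        line = f"{turn['speaker']}: {turn['text']}"
        # Hard-split only turns that cannot fit in one chunk on their own
        pieces = [line[i : i + max_chars] for i in range(0, len(line), max_chars)]
        for piece in pieces:
            extra = len(piece) + (1 if current else 0)  # +1 for the joining newline
            if current and size + extra > max_chars:
                chunks.append("\n".join(current))
                current, size, extra = [], 0, len(piece)
            current.append(piece)
            size += extra
    if current:
        chunks.append("\n".join(current))
    return chunks


def map_reduce_summary(chunks: List[str], summarize: Callable[[str], str]) -> str:
    """Summarize each chunk ("map"), then summarize the joined partial summaries ("reduce")."""
    if not chunks:
        return ""
    if len(chunks) == 1:
        return summarize(chunks[0])
    partials = [summarize(chunk) for chunk in chunks]
    return summarize("\n\n".join(partials))
```

With the structured JSON prompt, the reduce step is often simpler as a merge. Concatenate the `decisions` and `action_items` lists from each chunk, and summarize only the narrative `summary` fields.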

What fields should a structured meeting summary include?

A summary covering four fields handles most use cases: a 2-4 sentence narrative summary for human readers, a decisions list for record-keeping, an action items list with owner and deadline for task management, and an open questions list for follow-up tracking. Additional fields like key topics (for search and tagging) and participant names (for CRM association) add value without adding much prompt complexity. The prompt structure should enforce these fields explicitly rather than asking for a "complete summary", which produces inconsistent output.

Frequently Asked Questions

What NLP approach produces the most accurate meeting summaries?

Abstractive summarization generally outperforms extractive methods on meeting content, because conversational disfluencies make directly extracted sentences read incoherently. That can mean a general-purpose LLM prompted for structured output, as in this guide. It can also mean a T5 or BART model fine-tuned on meeting-specific datasets such as AMI or ICSI, which improves domain accuracy compared to general-purpose summarization models.

How do I handle very long meeting transcripts that exceed model context limits?

Use chunked summarization: split the transcript into segments of roughly 2,000 tokens, summarize each segment independently, then summarize the segment summaries. Alternatively, use retrieval-augmented generation (RAG) to pull the most relevant passages for a summary query rather than processing the entire transcript at once.

How do I extract action items separately from the general summary?

Train a sentence classifier that labels each sentence as action, decision, or general discussion. Alternatively, prompt an LLM with a structured output schema requesting a JSON object with keys for summary, action_items, and decisions, as MEETING_SUMMARY_PROMPT does. Post-process the action items to extract assignee names using named-entity recognition (NER).

What is the best way to evaluate summary quality programmatically?

Use ROUGE-L to measure overlap with human-written reference summaries. A ROUGE-L score above roughly 0.35 is a reasonable starting target for meeting-domain data, but calibrate it against your own references. Supplement it with BERTScore for semantic similarity, which handles paraphrasing better than n-gram overlap metrics. Run these evaluations automatically in CI whenever you retrain or fine-tune the summarization model.
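ROUGE-L is built on the longest common subsequence (LCS) between candidate and reference tokens. Precision is the LCS length over the candidate length, recall is the LCS length over the reference length, and the score reported here is their harmonic mean (F1). The pure-Python sketch below is for illustration only. It lowercases and splits on whitespace with no stemming, so its numbers will differ somewhat from the `rouge-score` package, which is a better choice for reported results. The function names are hypothetical.

```python
from typing import List


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence of two token lists (dynamic programming)."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            # Extend the diagonal on a match, otherwise carry the best neighbor
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate: str, reference: str) -> float:
    """ROUGE-L F1 over lowercase whitespace tokens (no stemming)."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)
```

For example, "the cat sat on the mat" against "the cat is on the mat" shares a five-token subsequence out of six tokens on each side, giving precision, recall, and F1 of 5/6.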