AI Meeting Bot Use Cases: 7 Ways Developers Are Building with Meeting APIs

Most developers discover meeting APIs when someone on the team complains about manual note-taking. You build a basic AI meeting bot that joins calls and produces a transcript, ship it in a week, and everyone's happy. Then someone asks: can it also update Salesforce? Can it flag when a prospect sounds frustrated? Can it pull up a customer's history mid-call?

That's when you realize the transcript is just the beginning. Meeting data (audio streams, transcripts, speaker timecodes, sentiment signals) is a rich data source that most products barely touch. The calls your users take every day contain intent signals, product feedback, competitive intelligence, and compliance evidence that typically vanish into a recording nobody watches.

A 2023 Gartner survey found that fewer than 20% of enterprise meeting recordings are ever reviewed by a human. The data is there. The gap is tooling that makes it useful automatically. That's the space developers are building into right now.

This post walks through seven concrete AI meeting bot use cases: what each product does, which API features it uses, and what data flows where. Each one is something teams are shipping today using meeting APIs as infrastructure. At the end, there's a summary table mapping use cases to API capabilities.

1. Sales Coaching Bot: Real-Time Objection Detection

Sales reps handle dozens of calls a week. Managers can review maybe one or two. The result: coaching is reactive, not systematic. A sales coaching bot flips this by analyzing every call automatically and surfacing patterns a manager would otherwise miss.

The technical implementation starts with deploying a bot with live_transcription_required: true and a callback_url. As the call progresses, the bot streams transcript segments to your backend via webhooks. You process each segment through an NLP model (either a fine-tuned classifier or a prompt against a model like GPT-4), looking for objection patterns: pricing pushback, competitor mentions, timeline hesitation, technical doubt.
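To make the per-segment step concrete, here is a minimal sketch that uses keyword patterns as a stand-in for the classifier or LLM prompt. The segment fields (speaker, timestamp, text) and the pattern lists are assumptions for illustration, not the MeetStream payload format.

```python
import re
from dataclasses import dataclass

# Hypothetical keyword patterns; a production system would more likely use
# a fine-tuned classifier or an LLM prompt, as described above.
OBJECTION_PATTERNS = {
    "pricing": re.compile(r"\b(too expensive|over budget|pricing|price|cost)\b", re.I),
    "competitor": re.compile(r"\b(competitor|other vendor|already use)\b", re.I),
    "timeline": re.compile(r"\b(next quarter|next year|not right now|too soon)\b", re.I),
    "technical": re.compile(r"\b(integrat\w*|security|scal\w*)\b", re.I),
}


@dataclass
class Objection:
    kind: str
    speaker: str
    timestamp: float
    text: str


def detect_objections(segment):
    """Return one Objection per pattern that matches the segment text."""
    text = segment.get("text", "")
    return [
        Objection(kind, segment.get("speaker", "unknown"),
                  segment.get("timestamp", 0.0), text)
        for kind, pattern in OBJECTION_PATTERNS.items()
        if pattern.search(text)
    ]
```

Each returned Objection carries the speaker label, timestamp, and transcript context, so the same object can feed both the real-time nudge and the post-call log.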

When an objection fires, you can do two things. First, log it with the speaker label, timestamp, and transcript context for post-call review. Second, if you're using MIA (Meeting Intelligence Agents), you can push a real-time nudge to the rep's interface (a suggested response, a relevant case study, a pricing comparison) without interrupting the call audio. MIA's Chat type action handles this: the agent sends a message visible only in the rep's sidebar tool.

Post-call, the transcription.processed webhook delivers the full transcript. Your system generates a coaching report: objection frequency by type, talk-time ratio, question count, filler word density. Store this against the rep's ID and you have longitudinal coaching data. Deal stage updates in your CRM can flow through the same webhook pipeline.
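The report metrics are simple to compute once you have speaker-attributed segments. A rough sketch, assuming each segment is a dict with speaker, start, end (seconds), and text keys, and using an illustrative filler-word list:

```python
import re

FILLERS = {"um", "uh", "like", "basically", "actually"}  # illustrative list


def coaching_metrics(segments, rep_speaker):
    """Compute talk-time ratio, question count, and filler density for the rep."""
    total = sum(s["end"] - s["start"] for s in segments)
    rep_segments = [s for s in segments if s["speaker"] == rep_speaker]
    rep_time = sum(s["end"] - s["start"] for s in rep_segments)
    rep_words = [
        w for s in rep_segments for w in re.findall(r"[a-z']+", s["text"].lower())
    ]
    fillers = sum(w in FILLERS for w in rep_words)
    return {
        "talk_time_ratio": rep_time / total if total else 0.0,
        "question_count": sum(s["text"].count("?") for s in rep_segments),
        "filler_density": fillers / len(rep_words) if rep_words else 0.0,
    }
```

Counting question marks depends on the transcription engine emitting punctuation, so treat question_count as an approximation.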

Data flow: live_transcription stream → objection classifier → rep sidebar (real-time) + coaching report (post-call) → CRM activity log.

2. Note-Taking Agent: Structured Action Items with Notion Push

The note-taking use case looks simple on the surface but gets interesting when you push past "generate a summary." The real value is structured extraction: not a paragraph summary, but a typed schema of decisions, owners, deadlines, and blockers that can be written directly into a project management tool.

Implementation: deploy a bot with live_transcription_required: false (you only need the final transcript) and recording_config set to store the audio for reference. When the transcription.processed webhook fires, send the full transcript to your extraction pipeline. A well-designed prompt extracts action items as structured JSON: { "owner": "string", "task": "string", "due": "date|null", "context": "string" }.

The Notion push is straightforward via the Notion API. Each action item becomes a database row. Meeting metadata (participants, date, duration) gets written to a "Meetings" database with a relation to each action item. The result: every meeting automatically produces a linked set of tasks that appear in the right person's Notion view without anyone manually entering them.

Edge cases worth handling: speaker diarization errors (two speakers blending together), action items assigned to a name not in your user database, and ambiguous deadlines ("next week" vs. a specific date). Running a normalization pass on the extracted JSON before writing to Notion catches most of these.
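A normalization pass along these lines might look like the sketch below. The rules are assumptions: "next week" resolves to the Monday after the meeting, owners are matched case-insensitively against your user list, and anything unresolved is flagged for review rather than guessed.

```python
from datetime import date, timedelta


def normalize_action_item(item, known_users, meeting_date):
    """Return (cleaned item, review flags) for one extracted action item."""
    flags = []
    owner = (item.get("owner") or "").strip()
    # Match owners case-insensitively against the user directory.
    match = next((u for u in known_users if u.lower() == owner.lower()), None)
    if match is None:
        flags.append("unknown_owner")

    due = None
    due_raw = item.get("due")
    if due_raw:
        text = str(due_raw).strip().lower()
        if text == "tomorrow":
            due = meeting_date + timedelta(days=1)
        elif text == "next week":
            # Assumption: "next week" means the Monday after the meeting.
            due = meeting_date + timedelta(days=7 - meeting_date.weekday())
        else:
            try:
                due = date.fromisoformat(text)
            except ValueError:
                flags.append("ambiguous_due")

    cleaned = {
        "owner": match or owner,
        "task": (item.get("task") or "").strip(),
        "due": due.isoformat() if due else None,
        "context": (item.get("context") or "").strip(),
    }
    return cleaned, flags
```

Items that come back with flags can go to a review view instead of being written straight into Notion.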

Data flow: transcription.processed → extraction LLM → structured JSON → Notion API → task database rows.

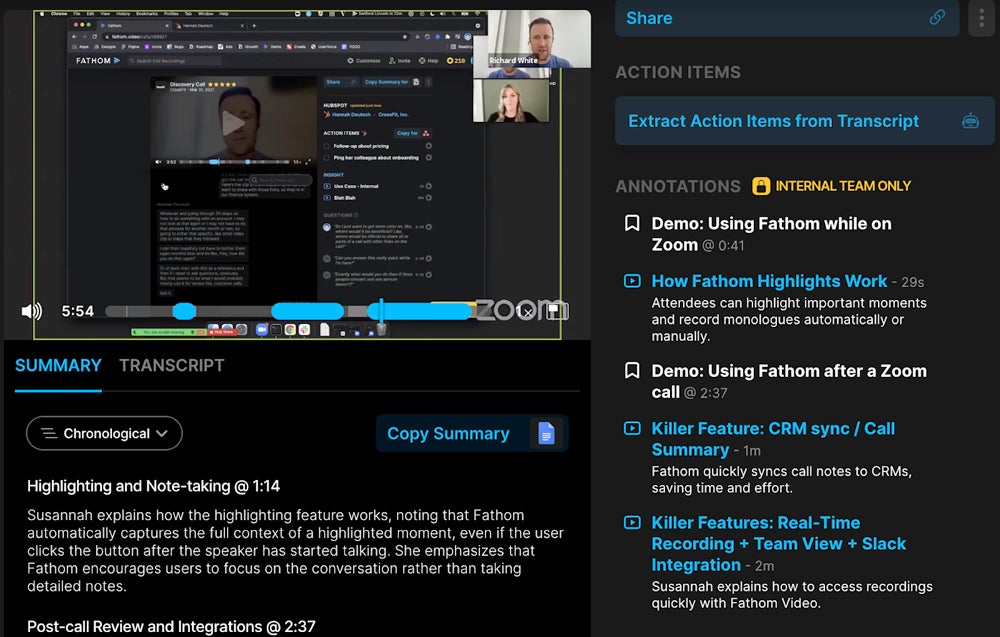

3. CRM Automation: Transcript to Deal Update

Sales teams lose a significant portion of pipeline visibility because reps don't log call notes. It's not laziness; logging a call in a CRM after a 45-minute demo takes about 10 minutes of manual work. If a rep takes five calls a day, that's nearly an hour of admin.

The automation pipeline here processes the transcript post-call and writes directly to your CRM. On the MeetStream side, you associate the bot deployment with a CRM deal ID (passed as metadata in the bot creation request). When transcription.processed fires, your handler extracts: next steps, prospect objections, expressed timeline, competitor mentions, and deal stage signals ("we're evaluating three vendors" vs. "ready to move forward next quarter").

Each extracted field maps to a CRM field. HubSpot's API, Salesforce REST API, and Pipedrive all accept these updates programmatically. You also create a call activity with a summary as the body, so the deal timeline shows a clean record of every conversation without manual entry.

For deal stage inference, a simple classifier works well: train it on your historical transcript-to-stage progression data. Accuracy improves quickly because you're working with a constrained domain. The classifier outputs a suggested stage change, which goes through a human-in-the-loop queue rather than updating automatically. CRM hygiene matters too much to automate blindly from day one.
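One way to model that queue is sketched below. The class names and the 0.6 confidence floor are hypothetical; the point is that the classifier only ever submits suggestions, and the CRM write happens after a human approves one.

```python
from dataclasses import dataclass, field


@dataclass
class StageSuggestion:
    deal_id: str
    current_stage: str
    suggested_stage: str
    confidence: float
    evidence: str  # transcript excerpt that triggered the suggestion


@dataclass
class ReviewQueue:
    pending: list = field(default_factory=list)

    def submit(self, suggestion, min_confidence=0.6):
        """Queue a suggestion for human review; drop no-ops and weak signals."""
        if suggestion.suggested_stage == suggestion.current_stage:
            return False
        if suggestion.confidence < min_confidence:
            return False
        self.pending.append(suggestion)
        return True

    def approve(self, deal_id):
        """Pop the oldest pending suggestion for a deal so the caller can apply it."""
        for i, s in enumerate(self.pending):
            if s.deal_id == deal_id:
                return self.pending.pop(i)
        return None
```

Keeping the evidence excerpt on the suggestion lets the reviewer approve or reject without opening the full transcript.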

Data flow: bot metadata (deal ID) → transcription.processed → extraction + classifier → CRM API (activity log, field updates, stage suggestion queue).

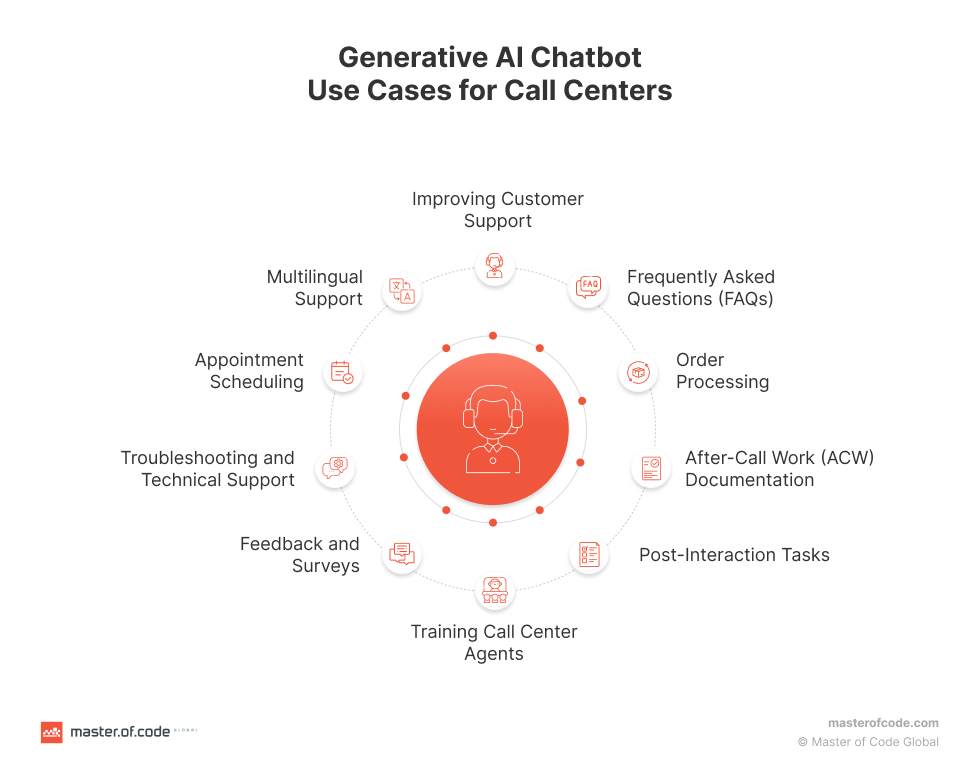

4. Customer Success Analytics: Sentiment Trends Over Calls

Customer success teams manage accounts across many calls over months. A single unhappy call might be noise. Three calls with declining sentiment in a row is a churn signal. The tooling to detect this systematically doesn't exist in most CS platforms, so you have to build it.

The data model is per-account, per-call sentiment scoring. Each time a bot joins a customer call (QBR, onboarding, support escalation), the transcript gets scored by speaker for sentiment polarity and intensity. You store a time series: account ID, call date, speaker role (customer vs. CS rep), segment-level sentiment scores, and overall call sentiment.

With 10+ calls per account, patterns emerge. A customer whose sentiment was neutral for six months and has trended negative for the last three calls is a flag. A customer who has asked "how does this compare to [competitor]" twice in recent calls is another flag. Surface these signals as a risk score in your CS dashboard, and the team knows where to focus without manually reviewing recordings.
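As a rough sketch of how those two flags could be computed from the per-account time series: the thresholds (three consecutive drops, two competitor comparisons) follow the examples above, and the function names are illustrative.

```python
def declining_streak(scores):
    """Number of consecutive call-over-call drops ending at the most recent call."""
    streak = 0
    # Walk backwards through adjacent (previous, current) pairs.
    for prev, curr in zip(reversed(scores[:-1]), reversed(scores[1:])):
        if curr < prev:
            streak += 1
        else:
            break
    return streak


def churn_flags(scores, competitor_mentions, min_drops=3, min_mentions=2):
    """Return the risk flags raised by an account's recent call history."""
    flags = []
    if declining_streak(scores) >= min_drops:
        flags.append("declining_sentiment")
    if competitor_mentions >= min_mentions:
        flags.append("competitor_comparison")
    return flags
```

Here scores is the list of overall call sentiment values in chronological order, and competitor_mentions is a count over whatever recent window you choose.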

The MeetStream webhook pipeline handles the recording and transcription layer. Your analytics backend handles the scoring and aggregation. The split is clean: MeetStream gets the data out of the meeting reliably across Google Meet, Zoom, and Teams; you own the analytics logic.

Data flow: transcription.processed → sentiment scoring → account time series store → CS dashboard risk scores + alerts.

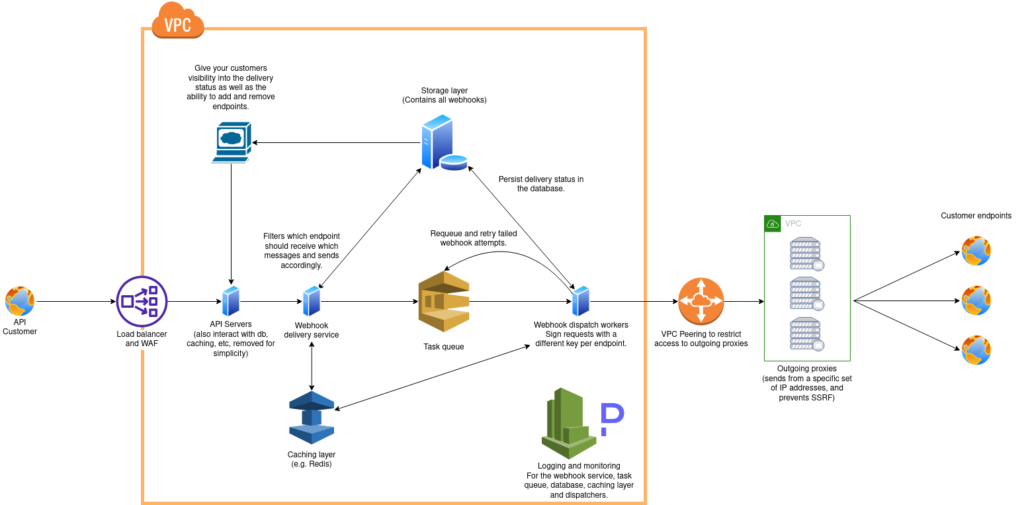

5. Compliance Recording: Regulated Industries

Financial services, healthcare, and legal teams have mandatory call recording requirements. The problem with using native platform recordings is chain of custody: you need to prove the recording wasn't tampered with, was retained for the required period, and can be produced on demand for audit.

Building on a meeting automation API gives you explicit control over the recording lifecycle. When creating the bot, set recording_config with retention period and storage target (your own S3 bucket or a compliant object store). The recording goes to infrastructure you control, not a third-party platform's opaque storage.

Every bot lifecycle event (bot.joining, bot.inmeeting, bot.stopped) gets written to an immutable audit log. You record who initiated the recording, when the bot joined, when it left, and the SHA-256 hash of the stored recording file. If there's ever an audit, you can produce the recording, the metadata, the hash, and the access log showing who retrieved it.
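The sketch below shows one way to get tamper evidence with only the standard library: hash the recording in chunks, and chain each audit entry to the previous one so that edits or reordering are detectable. The hash chain is an assumption about how you might implement "immutable"; a write-once object store or a managed ledger would serve the same purpose.

```python
import hashlib
import json


def sha256_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large recordings need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def _entry_hash(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_audit_entry(log, event, details):
    """Append an entry whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["entry_hash"] if log else "0" * 64
    body = {"event": event, "details": details, "prev_hash": prev_hash}
    log.append({**body, "entry_hash": _entry_hash(body)})
    return log[-1]


def verify_chain(log):
    """Recompute every hash; False means an entry was altered or reordered."""
    prev_hash = "0" * 64
    for entry in log:
        body = {k: entry[k] for k in ("event", "details", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["entry_hash"] != _entry_hash(body):
            return False
        prev_hash = entry["entry_hash"]
    return True
```

In practice the final entry for a meeting would include the sha256_file result for the stored recording, tying the file to the event history.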

Data retention automation is worth building early: a scheduled job that checks recording age against the required retention period and moves files to cold storage or triggers deletion, logging each transition. This is the kind of thing that gets missed in a manual process and causes compliance failures at the worst possible time.
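The core of that scheduled job is a small decision function. A minimal sketch, assuming retention and cold-storage thresholds are expressed in days and that deletion is permitted once the retention period has elapsed (check this against your own regulatory requirements):

```python
from datetime import date


def retention_action(recorded_on, today, retention_days, cold_after_days):
    """Decide what the scheduler should do with one recording."""
    age = (today - recorded_on).days
    if age >= retention_days:
        return "delete"
    if age >= cold_after_days:
        return "move_to_cold_storage"
    return "keep"
```

The job would call this per recording and write each non-"keep" result to the audit log before acting on it.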

Data flow: bot events → immutable audit log → encrypted recording → compliant object store → retention scheduler → audit trail.

6. Voice-Controlled CRM: Spoken Commands to CRM Fields

This is a less common use case but one with strong product potential: a rep can say "log this as a high-priority follow-up, remind me in three days" during a call and have it happen automatically. No context switching, no manual entry.

The implementation uses MIA in Pipeline mode with a Voice type agent. The agent listens to the audio stream with a wake word or command prefix ("Hey CRM" or a custom phrase). When it detects the pattern, it sends the subsequent utterance through a command parser that maps natural language to CRM actions: create follow-up, update deal stage, add note, tag contact.
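Here is a minimal sketch of the command-parsing step, operating on already-transcribed text. The regex grammar and action names are hypothetical stand-ins; a model-based parser would handle far more phrasing variety (including numbers spoken as words).

```python
import re

WAKE_PHRASE = "hey crm"  # example phrase from the text; make this configurable

# Hypothetical command grammar: (pattern, action name).
COMMANDS = [
    (re.compile(r"log this as (?P<stage>closed lost|closed won)(?:,\s*(?P<note>.+))?", re.I),
     "update_deal_stage"),
    (re.compile(r"remind me in (?P<days>\d+) days?", re.I), "create_follow_up"),
    (re.compile(r"add (?:a )?note[:,]?\s*(?P<note>.+)", re.I), "add_note"),
]


def parse_command(utterance):
    """Return (action, params) if the utterance starts with the wake phrase, else None."""
    text = utterance.strip()
    if not text.lower().startswith(WAKE_PHRASE):
        return None
    rest = text[len(WAKE_PHRASE):].lstrip(" ,.")
    for pattern, action in COMMANDS:
        m = pattern.search(rest)
        if m:
            return action, {k: v for k, v in m.groupdict().items() if v}
    return "unknown", {"raw": rest}
```

Returning an explicit "unknown" action lets the agent ask the rep to repeat the command instead of silently ignoring it.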

The tricky part is not the NLP; modern models handle command parsing well. The tricky part is latency. A rep saying "log this as closed lost, they went with Salesforce" expects to hear confirmation within a second or two. This requires an optimized pipeline: short audio buffer, fast transcription (Whisper or similar), low-latency model inference, and immediate CRM write. End-to-end latency under 1.5 seconds is achievable with a well-tuned stack.

The agent can confirm via chat message or a brief audio response ("Got it, logged as Closed Lost with note: went with Salesforce"). The rep gets confirmation without breaking the call flow. The MeetStream API's real-time audio capability is what makes this latency achievable; polling a recording after the fact wouldn't work for interactive commands.

Data flow: live audio stream → wake word detection → command parser → CRM API write → confirmation message via MIA Chat action.

7. Meeting Intelligence Platform: Cross-Meeting Analytics

All the previous use cases work per-call. The highest-value product is one that aggregates across calls and surfaces patterns you can't see in any single conversation: how often does a specific competitor come up across all your sales calls this month? Which customer success rep has the highest average call sentiment? Which product features get mentioned most often in churn calls vs. expansion calls?

Building this requires treating transcripts as a structured data source from the start. Each transcription.processed event writes to a database with consistent schema: meeting ID, participants, timestamps, speaker-attributed segments, and extracted entities (product names, competitors, feature mentions, sentiment scores). Index this table well and you can run cross-meeting queries in milliseconds.
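To illustrate, here is a small sketch of such a schema in SQLite with one cross-meeting query. Table and column names are assumptions; a production system would likely use Postgres or a warehouse, but the shape is the same.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE meetings (meeting_id TEXT PRIMARY KEY, held_on TEXT, deal_id TEXT);
CREATE TABLE segments (
    meeting_id TEXT REFERENCES meetings(meeting_id),
    speaker TEXT, start_s REAL, text TEXT, sentiment REAL
);
CREATE TABLE entities (
    meeting_id TEXT REFERENCES meetings(meeting_id),
    kind TEXT,   -- e.g. 'competitor', 'feature'
    value TEXT
);
CREATE INDEX idx_entities_kind_value ON entities(kind, value);
CREATE INDEX idx_meetings_held_on ON meetings(held_on);
""")


def competitor_mentions(conn, since):
    """Count distinct meetings mentioning each competitor on or after `since` (ISO date)."""
    return conn.execute(
        """SELECT e.value, COUNT(DISTINCT e.meeting_id) AS meetings
           FROM entities e JOIN meetings m ON e.meeting_id = m.meeting_id
           WHERE e.kind = 'competitor' AND m.held_on >= ?
           GROUP BY e.value
           ORDER BY meetings DESC, e.value""",
        (since,),
    ).fetchall()
```

Storing extracted entities in their own table, rather than inside a JSON blob per transcript, is what keeps questions like "how often did this competitor come up this month" a single indexed query.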

The product layer on top is a combination of pre-built dashboards (trend charts for common metrics) and a query interface that lets users ask questions in natural language ("Which deals mentioned pricing concerns in the last 30 days?"). The NLP-to-SQL translation for this class of query is well-solved by current models when your schema is clean and constrained.

Scaling the bot deployment side is straightforward with the MeetStream API: you use the same create_bot endpoint regardless of volume. The complexity is in the data pipeline: making sure every transcript lands in your database reliably, handling webhook retries, and managing storage costs as your corpus grows.
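Webhook retries mean the same event can arrive more than once, so the write path should be idempotent. A minimal sketch, assuming each delivery carries a unique event ID (the event_id field name is hypothetical; use whatever identifier the payload actually provides):

```python
import sqlite3


def init_event_store(conn):
    """Create the table that records which webhook events have been stored."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS webhook_events ("
        "event_id TEXT PRIMARY KEY, event_type TEXT NOT NULL, payload TEXT NOT NULL)"
    )


def store_event_once(conn, event_id, event_type, payload):
    """Insert the event; return False if this delivery repeats one already stored."""
    cur = conn.execute(
        "INSERT OR IGNORE INTO webhook_events VALUES (?, ?, ?)",
        (event_id, event_type, payload),
    )
    conn.commit()
    return cur.rowcount == 1
```

Downstream processing runs only when store_event_once returns True, so a retried delivery never produces duplicate rows in the analytics tables.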

Data flow: all transcripts → structured database → aggregation layer → analytics dashboards + NL query interface.

API Features Summary

Here's how each use case maps to MeetStream API capabilities:


| Use Case | Key API Features | Webhook Events Used |
|---|---|---|
| Sales Coaching | live_transcription_required, MIA Chat action | bot.inmeeting, live transcript stream |
| Note-Taking Agent | recording_config, callback_url | transcription.processed |
| CRM Automation | Bot metadata, automatic_leave | transcription.processed, bot.stopped |
| CS Analytics | callback_url, speaker diarization | transcription.processed |
| Compliance Recording | recording_config with retention, bot events | All lifecycle events |
| Voice-Controlled CRM | live_audio_required, MIA Voice + Pipeline mode | Live audio stream |
| Meeting Intelligence | All of the above + cross-meeting aggregation | transcription.processed, audio.processed |

Choosing Your Starting Point

If you're evaluating which use case to build first, the decision usually comes down to where your users are losing the most time. Note-taking automation has the shortest feedback loop: you can ship something useful in a weekend and users feel the value immediately. CRM automation has the most obvious ROI for sales-focused products. Compliance recording has the most defined requirements, which makes scoping easier.

The meeting bot applications that generate the most durable value tend to be the ones that aggregate across calls rather than optimizing a single call. Per-call note-taking is a feature. Cross-meeting intelligence is a platform. Build the data model to support cross-meeting queries from day one, even if your first release is just single-call notes. Retrofitting the schema later is painful.

One thing worth noting: all seven of these use cases work across Google Meet, Zoom, and Microsoft Teams through the same API. You write the bot deployment logic once. The platform differences (Zoom's OAuth requirements, Teams' meeting join behavior) are handled at the infrastructure layer, not in your code.

Handling Edge Cases in Production

A few failure modes that catch developers off guard when moving from prototype to production:

Bot join failures happen. Meetings get cancelled, links get rotated, hosts haven't admitted bots to their organization. Your bot.joining → bot.inmeeting state transition should have a timeout handler. If the bot doesn't reach inmeeting within a reasonable window, surface the failure to the user rather than silently dropping it.
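A timeout handler for this can be a small tracker fed by lifecycle webhooks and checked on a timer. In this sketch the 120-second window is an arbitrary assumption, and timestamps are plain seconds so the logic is easy to test.

```python
class BotTracker:
    """Tracks bots that have started joining but not yet reached the meeting."""

    def __init__(self, join_timeout_s=120.0):
        self.join_timeout_s = join_timeout_s
        self.joining_since = {}  # bot_id -> timestamp of bot.joining

    def on_event(self, bot_id, event, ts):
        if event == "bot.joining":
            self.joining_since[bot_id] = ts
        elif event in ("bot.inmeeting", "bot.stopped"):
            # The bot either made it in or ended; stop the join clock.
            self.joining_since.pop(bot_id, None)

    def timed_out(self, now):
        """Return IDs of bots stuck in the joining state past the timeout."""
        return sorted(
            bot_id for bot_id, started in self.joining_since.items()
            if now - started > self.join_timeout_s
        )
```

A periodic job calls timed_out with the current time and surfaces each returned bot as a failed join in the UI.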

Transcript quality varies by speaker environment. A rep in a noisy coffee shop on unreliable WiFi will produce a noisier transcript than someone in a quiet office with a good headset. Build tolerance for transcription errors into your extraction prompts: asking the model to "extract action items even if the transcript contains minor transcription errors" improves robustness significantly.

For real-time use cases, design for the bot being removed from a meeting mid-session. Hosts can remove bots. Your live processing pipeline should handle a bot.stopped event gracefully, flushing any buffered state and producing a partial output if possible.
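One way to structure this is a session object that buffers live segments and marks its last output as partial when the bot stops early. The class and its flush size are illustrative assumptions.

```python
class LiveSession:
    """Buffers live transcript segments; emits a partial result if the bot stops early."""

    def __init__(self, flush_every=5):
        self.flush_every = flush_every
        self.buffer = []
        self.outputs = []

    def on_segment(self, text):
        self.buffer.append(text)
        if len(self.buffer) >= self.flush_every:
            self._flush(partial=False)

    def on_bot_stopped(self):
        # Flush whatever is buffered so a mid-call removal still yields output.
        if self.buffer:
            self._flush(partial=True)

    def _flush(self, partial):
        self.outputs.append({"text": " ".join(self.buffer), "partial": partial})
        self.buffer = []
```

Because on_bot_stopped is a no-op on an empty buffer, it is safe to call again if the bot.stopped webhook is retried.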

Frequently Asked Questions

What are the most common AI meeting bot use cases for enterprise products?

The most common enterprise AI meeting bot deployments are compliance recording, CRM automation, and customer success analytics. Compliance recording addresses a mandatory requirement, so it has the clearest buyer. CRM automation saves measurable time for sales teams. CS analytics surfaces churn risk before it's too late to act. All three are production use cases with existing paying customers in the market today.

How does live transcription differ from post-call transcription in terms of what you can build?

Live transcription enables interactive use cases: real-time coaching nudges, voice commands, mid-call alerts. Post-call transcription (the transcription.processed webhook) is sufficient for note-taking, CRM updates, analytics, and compliance. Most meeting automation products start with post-call transcription because it's simpler and covers 80% of use cases, then add live transcription for specific interactive features.

Can a single meeting bot handle multiple platforms (Zoom, Meet, Teams) with the same code?

Yes. The MeetStream API abstracts platform differences. You call the same POST /api/v1/bots/create_bot endpoint with a meeting link regardless of platform. Zoom requires the MeetStream app to be installed in your Zoom account; Google Meet and Teams work without platform setup. Your application code doesn't change per platform.
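As a sketch of what "same code for every platform" looks like, the helper below builds one request body regardless of where the meeting is hosted. Only the endpoint path and the parameter names live_transcription_required and callback_url come from this post; the meeting_link and metadata field names and the base URL are assumptions, so check the API documentation for the real schema and authentication headers.

```python
import json

CREATE_BOT_PATH = "/api/v1/bots/create_bot"  # endpoint path named in this post


def build_create_bot_request(base_url, meeting_link, callback_url, *,
                             live_transcription=False, metadata=None):
    """Build (url, JSON body) for a bot creation call without sending it."""
    payload = {
        "meeting_link": meeting_link,  # assumed field name
        "callback_url": callback_url,
        "live_transcription_required": live_transcription,
    }
    if metadata:
        payload["metadata"] = metadata  # assumed field name, e.g. a CRM deal ID
    return base_url.rstrip("/") + CREATE_BOT_PATH, json.dumps(payload)
```

The same call works for a Zoom, Google Meet, or Teams link; nothing in the payload is platform-specific.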

What data does a meeting bot actually produce that's useful for analytics?

Beyond the raw transcript, a meeting bot produces: timestamped speaker-attributed segments (who said what, when), audio quality metadata, meeting duration and participant count, join/leave times per participant, and (with additional processing) sentiment scores and entity extractions. For meeting bot applications in analytics, the speaker attribution and timestamps are particularly valuable because they let you analyze individual speaker patterns across many calls over time.

How do I handle bot deployment for meetings I don't schedule (inbound calls)?

For inbound or ad-hoc meetings, you typically use a meeting link capture hook in your product: when a rep pastes a meeting link into your app, or when a calendar integration detects a new meeting with a link, you trigger bot deployment automatically. The automatic_leave parameter handles cleanup. This pattern works well with Google Calendar webhooks or HubSpot meeting creation hooks as the trigger, with MeetStream handling the actual bot lifecycle.
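The capture hook needs to spot meeting links in pasted text or calendar descriptions. A minimal sketch, with hostname rules that are assumptions covering the common link formats rather than an exhaustive list:

```python
import re
from urllib.parse import urlparse


def detect_platform(url):
    """Map a URL to a platform name, or None if it is not a known meeting link."""
    host = (urlparse(url).hostname or "").lower()
    if host == "meet.google.com":
        return "google_meet"
    if host == "zoom.us" or host.endswith(".zoom.us"):
        return "zoom"
    if host in ("teams.microsoft.com", "teams.live.com"):
        return "teams"
    return None


def find_meeting_links(text):
    """Return (url, platform) pairs for every recognized meeting link in the text."""
    links = []
    for raw in re.findall(r"https?://[^\s<>\"']+", text):
        url = raw.rstrip(".,;)")  # drop trailing sentence punctuation
        platform = detect_platform(url)
        if platform:
            links.append((url, platform))
    return links
```

Each pair returned can trigger a bot deployment directly; the platform value is useful for logging and analytics even though the deployment call itself does not need it.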

If you're evaluating which meeting API to build on, you can start building with MeetStream today. The API documentation covers all the parameters above with working examples, and the bot creation endpoint is live and testable in minutes.