Zoom Recording API: Automate Recording and Transcription

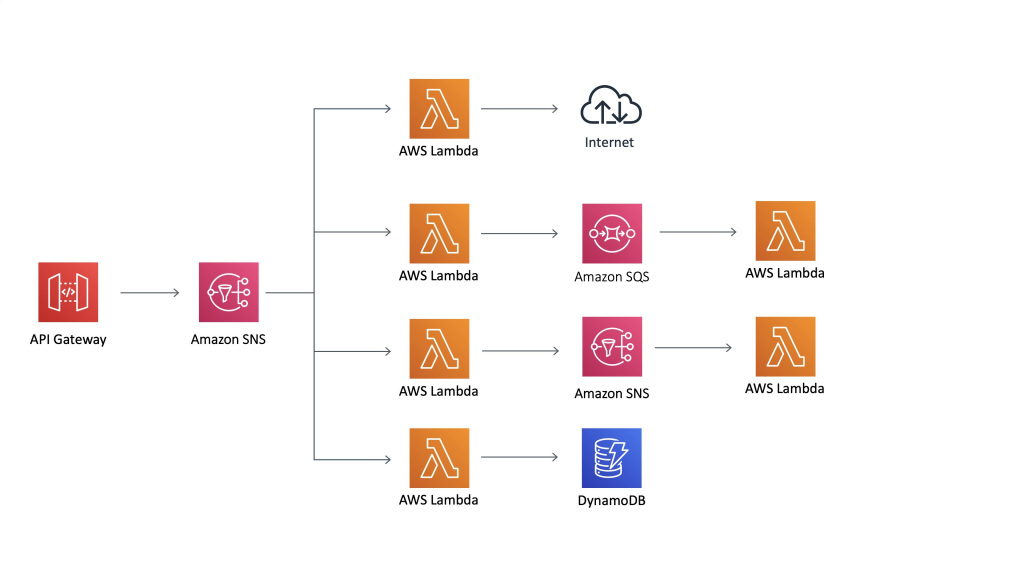

Your sales team has 200 Zoom calls a week. Manually downloading recordings and running them through a transcription tool isn't going to scale. Neither is asking reps to copy notes into the CRM after each call. The only way to make this work at volume is a fully automated pipeline: recording starts automatically, transcript is produced without intervention, and data flows into downstream systems via webhook.

Zoom's native API can get you partway there, but it comes with account-level restrictions, Marketplace approval requirements, and a data model that assumes you own all the accounts involved. Most teams building revenue intelligence tools, coaching platforms, or compliance archives hit these walls quickly.

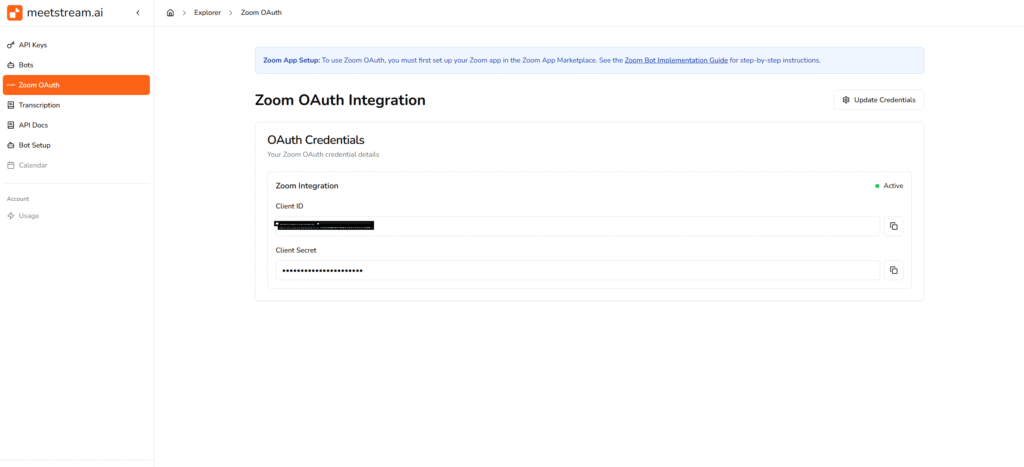

This tutorial walks through a complete automated workflow using the MeetStream Zoom recording API: create a bot, track it through the meeting lifecycle via webhooks, and retrieve the full transcript when processing completes. Code examples in both curl and Python throughout.

In this guide, we'll cover the Zoom App Marketplace requirement context, the full create_bot call with recording configuration, the webhook event sequence from join to transcript, and the GET call to retrieve the final transcript. Let's get into it.

Why automate Zoom recording via bot API

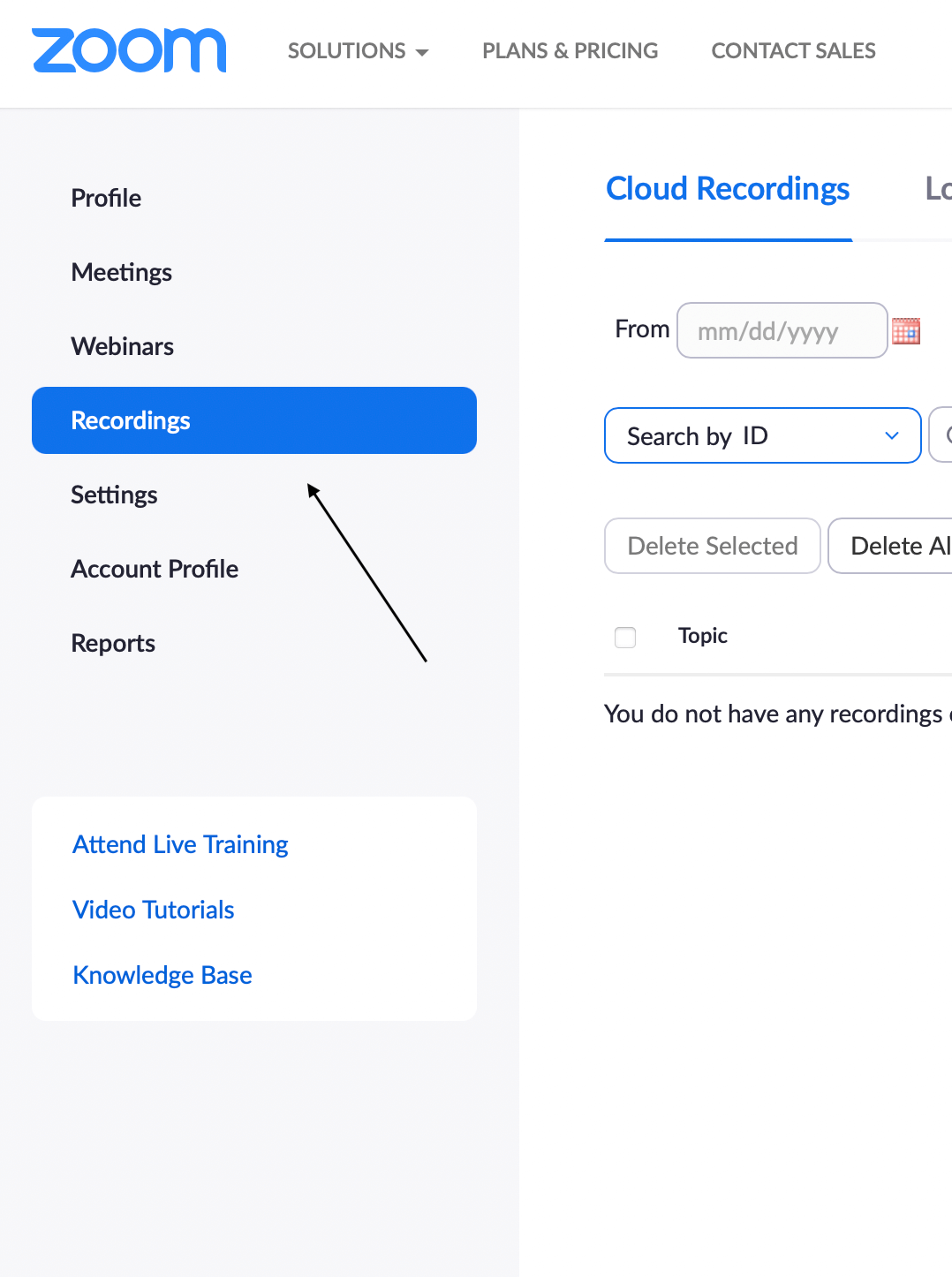

Zoom's Cloud Recording API is designed around account ownership. You can retrieve recordings from meetings hosted on accounts you control, using Server-to-Server OAuth credentials tied to that account. This works well for internal tooling on a single Zoom account, but falls apart for products where users bring their own Zoom accounts or where meetings are spread across many workspaces.

The Zoom App Marketplace path lets you build an OAuth application that users install into their own Zoom accounts, granting you access to their recordings. But this requires marketplace listing, security review, and ongoing Zoom policy compliance. The review timeline alone can be weeks.

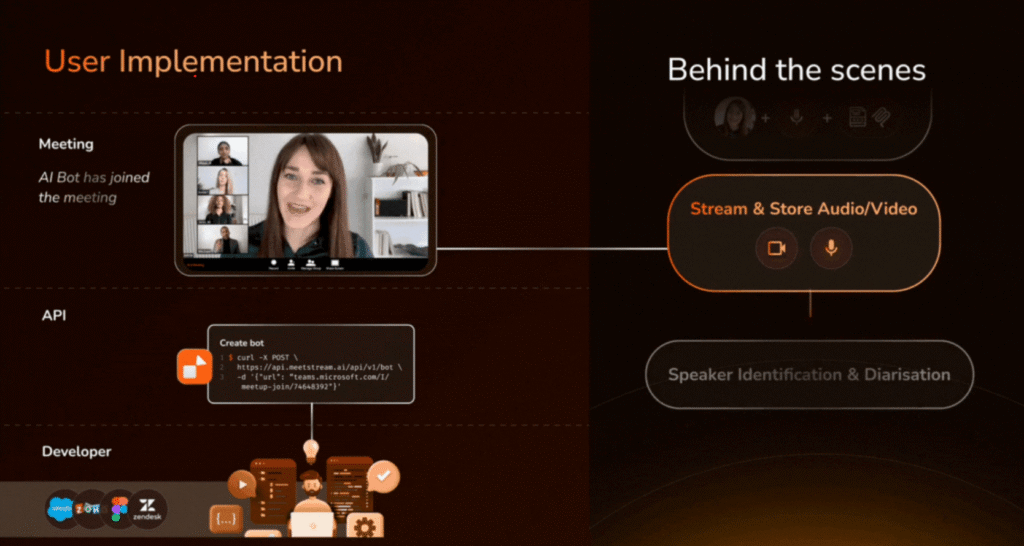

The bot participant approach is architecturally different. Rather than pulling recordings from Zoom's storage after the fact, a bot joins the meeting as a participant and captures audio and video directly. This means you don't need access to the host's Zoom account, recordings are available regardless of the host's Zoom plan, and the same architecture works identically for Zoom, Google Meet, and Microsoft Teams.

MeetStream handles the Zoom integration credentials. After a one-time configuration (documented at docs.meetstream.ai), you send meeting URLs and get recordings and transcripts back. Your code never touches Zoom OAuth directly.

The create_bot request for Zoom recording

The entry point is a single POST request. Here's a complete curl example with all the relevant parameters for a sales call recording workflow:

curl -X POST https://api.meetstream.ai/api/v1/bots/create_bot \

-H "Authorization: Token YOUR_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"meeting_link": "https://zoom.us/j/987654321",

"bot_name": "Sales Recorder",

"callback_url": "https://yourapp.com/webhooks/zoom",

"video_required": false,

"recording_config": {

"transcript": {

"provider": {

"name": "assemblyai"

}

},

"retention": {

"type": "timed",

"hours": 72

}

},

"automatic_leave": {

"waiting_room_timeout": 300,

"everyone_left_timeout": 60,

"voice_inactivity_timeout": 1800

},

"custom_attributes": {

"deal_id": "deal_abc123",

"rep_email": "sarah@company.com"

}

}'A few things worth explaining. Setting video_required: false captures audio only, which reduces processing time and storage. For transcription-focused workflows, you rarely need the video track. The custom_attributes field lets you attach arbitrary metadata to a bot session. These attributes come back in webhook payloads, making it easy to correlate recordings with CRM records, deal IDs, or user accounts without maintaining a separate lookup table.

The API response gives you the identifiers you'll need throughout the workflow:

{

"bot_id": "bot_xyz789",

"transcript_id": "txn_def456",

"meeting_url": "https://zoom.us/j/987654321",

"status": "Joining"

}Store both bot_id and transcript_id in your database immediately. You'll need bot_id to correlate webhook events and transcript_id to retrieve the final transcript.

The full webhook event sequence

Here's the complete lifecycle, with the events your callback URL receives and what to do at each step:

# Python webhook handler using Flask

from flask import Flask, request, jsonify

import requests

app = Flask(__name__)

API_KEY = "YOUR_API_KEY"

BASE_URL = "https://api.meetstream.ai/api/v1"

@app.route('/webhooks/zoom', methods=['POST'])

def handle_meetstream_webhook():

data = request.json

event = data.get('event')

bot_id = data.get('bot_id')

transcript_id = data.get('transcript_id')

if event == 'bot.joining':

db.update_session(bot_id, status='joining')

elif event == 'bot.inmeeting':

db.update_session(bot_id, status='recording')

elif event == 'bot.stopped':

bot_status = data.get('bot_status')

if bot_status == 'Stopped':

db.update_session(bot_id, status='processing')

elif bot_status == 'NotAllowed':

db.update_session(bot_id, status='failed', error='Bot not admitted')

notify_team(bot_id)

elif bot_status == 'Error':

db.update_session(bot_id, status='error')

elif event == 'transcription.processed':

transcript = fetch_transcript(transcript_id)

process_transcript(bot_id, transcript)

return jsonify({'status': 'ok'}), 200

def fetch_transcript(transcript_id):

response = requests.get(

f"{BASE_URL}/transcript/{transcript_id}/get_transcript",

headers={'Authorization': f'Token {API_KEY}'}

)

return response.json()

def process_transcript(bot_id, transcript):

session = db.get_session(bot_id)

deal_id = session['custom_attributes'].get('deal_id')

crm.update_deal_notes(deal_id, format_transcript(transcript))

summarizer.queue(transcript)A few implementation notes. The webhook handler must return 200 quickly. If process_transcript involves API calls to your CRM or LLM, move that work to a background queue and return 200 before starting it. Webhook delivery failures get retried, but keeping your handler fast prevents cascading delays.

The custom_attributes you set on the bot come back in webhook payloads. In the handler above, we use them to look up the associated CRM deal. This pattern is cleaner than maintaining a separate bot-to-deal mapping table in your application.

Retrieving the Zoom transcript

Once the transcription.processed event fires, call the transcript endpoint with the transcript_id from the original create_bot response:

curl -X GET https://api.meetstream.ai/api/v1/transcript/txn_def456/get_transcript \

-H "Authorization: Token YOUR_API_KEY"The response is a structured object with speaker-attributed segments:

{

"transcript_id": "txn_def456",

"status": "completed",

"segments": [

{

"speaker_name": "Alex Torres",

"speaker_id": "participant_001",

"text": "Thanks for joining. I wanted to walk you through our Q1 metrics.",

"start_ms": 3200,

"end_ms": 8900

},

{

"speaker_name": "Meeting Recorder",

"speaker_id": "bot_xyz789",

"text": "",

"start_ms": 0,

"end_ms": 0

}

]

}Speaker names come from the Zoom participant display names. If your organization uses consistent Zoom display names, the diarization maps cleanly to your user database. If names are inconsistent (participants joining with initials or aliases), you may need a normalization step.

Scheduling bots for future meetings

For automated pipelines where you're scheduling bots for calendar events, use the join_at parameter. Pass an ISO 8601 timestamp and the bot will join three minutes before that time:

curl -X POST https://api.meetstream.ai/api/v1/bots/create_bot \

-H "Authorization: Token YOUR_API_KEY" \

-H "Content-Type: application/json" \

-d '{

"meeting_link": "https://zoom.us/j/111222333",

"bot_name": "Sales Recorder",

"join_at": "2026-04-05T14:00:00Z",

"callback_url": "https://yourapp.com/webhooks/zoom",

"recording_config": {

"transcript": { "provider": { "name": "deepgram" } }

}

}'Alternatively, connect MeetStream to Google Calendar for fully automatic scheduling. The calendar integration detects upcoming meetings, creates bots automatically, and handles rescheduled or cancelled events without any per-meeting API calls from your side.

Tradeoffs and what to watch for

Transcription accuracy varies by provider and recording quality. AssemblyAI tends to perform better on noisy audio. Deepgram has lower latency and works well for clean conference calls. JigsawStack is useful when you want to minimize third-party dependencies. Test across a sample of your actual meeting audio before committing to a provider for production.

The voice_inactivity_timeout in automatic_leave is a useful guard against long pauses in meetings. If a meeting has a 30-minute break with no audio (screen share only, say), setting this too low will cause the bot to leave prematurely. 1800 seconds (30 minutes) is generally safe for most meeting formats.

Speaker diarization accuracy depends on the audio quality and the number of participants. Two-person calls with good audio reliably produce accurate speaker separation. Calls with many participants, heavy background noise, or people speaking simultaneously will have lower diarization accuracy regardless of the provider.

How MeetStream fits in

MeetStream provides the recording bot infrastructure and transcription pipeline as a unified API. The Zoom recording and transcription workflow described here is the same pattern used for Google Meet and Teams, so if your application handles multiple platforms, you only need to maintain one integration. The SDK is available in Python, JavaScript, Go, Ruby, Java, PHP, C#, and Swift for teams that prefer client libraries over raw API calls.

Conclusion

Automating Zoom recording and transcription via the MeetStream API reduces the workflow to three steps: POST to create_bot with your recording configuration, handle webhook events in a callback URL, and GET the transcript once transcription.processed fires. The custom_attributes field connects bot sessions to your data model without extra lookup tables. The automatic_leave parameters make unattended bots reliable in production. This is the pattern that scales from 10 meetings a week to 10,000.

Get started free at meetstream.ai or see the full API reference at docs.meetstream.ai.

Frequently Asked Questions

How does the Zoom recording API work with multiple Zoom accounts?

Zoom's native Cloud Recording API is tied to specific account credentials and requires admin access for each account. The MeetStream bot API doesn't require credentials for the meeting host's account. The bot joins as a participant using its own credentials, so recording works regardless of which Zoom account hosted the meeting. This makes it well-suited for recording meetings across many different customer accounts.

What transcription providers does the Zoom recording API support?

MeetStream supports AssemblyAI, Deepgram, JigsawStack, and meeting_captions for post-call transcription. For real-time streaming transcription during the meeting, Deepgram Streaming and AssemblyAI Streaming are available. Different providers have different accuracy and latency characteristics, so the best choice depends on your specific meeting audio characteristics and use case requirements.

How long does automated Zoom transcription take?

Transcription processing begins as soon as the meeting ends and audio processing completes. For a typical 30 to 60-minute meeting, expect the transcription.processed webhook within five to ten minutes. The processing time scales roughly linearly with meeting length. For time-sensitive workflows, use real-time streaming transcription via live_transcription_required instead of waiting for post-call processing.

Can I attach metadata to a Zoom recording bot?

Yes. The custom_attributes parameter accepts an arbitrary key-value object that you define at create_bot time. These attributes are included in all subsequent webhook payloads for that bot session. Use this to embed CRM deal IDs, user IDs, or any application-specific context so you can correlate recordings with your own data model without maintaining a separate mapping database.

What happens if the Zoom bot isn't admitted from the waiting room?

The bot waits in the waiting room for the duration specified in waiting_room_timeout (in seconds). If it's not admitted within that window, the bot.stopped webhook fires with bot_status set to NotAllowed. Your webhook handler should watch for this status and either retry, alert your team, or mark the session as failed. Setting waiting_room_timeout to 300 seconds is a reasonable default for most use cases.