Processing Long Meeting Audio: Handling Multi-Hour Recordings

A one-hour weekly standup is straightforward. A six-hour board meeting, a full-day training session, or a quarterly all-hands creates a set of engineering problems that most documentation glosses over. Storage fills up. Transcription jobs time out. Memory pressure builds when you try to process the entire audio in one pass. Webhooks arrive long after the meeting ends.

Long meeting transcription requires thinking about resource constraints that do not matter for typical 30-minute calls. The raw PCM audio from a two-hour meeting runs to roughly 660 MB. The transcription job takes minutes to run, not seconds. The polling and webhook handling need to account for extended wait times. And if your bot stays in a meeting longer than intended, you might rack up costs and storage you did not plan for.

This guide covers the practical decisions you need to make for multi-hour recordings: recording timeout configuration, storage sizing estimates, chunking strategies for post-processing, how to correctly wait for the transcription.processed event before fetching, and how to handle partial transcripts from interrupted sessions.

In this guide we work through each concern with concrete numbers and code. Let's get into it.

Storage Sizing for Long Recordings

Before you write any code, understand what you are storing. The MeetStream API records audio as PCM int16 little-endian at 48kHz mono. The math is straightforward:

- Sample rate: 48,000 samples per second

- Bit depth: 16 bits (2 bytes) per sample

- Channels: 1 (mono)

- Bytes per second: 48,000 x 2 x 1 = 96,000 bytes/sec = 93.75 KB/sec

- MB per minute: 93.75 x 60 / 1024 = 5.49 MB/min

- GB per hour: 5.49 x 60 / 1024 = 0.322 GB/hr (approximately 330 MB/hr; these figures use binary units, where 1 MB = 1024 KB)

For practical planning purposes, one hour of raw mono PCM at 48kHz is approximately 330 MB. Two hours is 660 MB. A full-day training session at eight hours approaches 2.6 GB of raw audio.

If MeetStream stores the recording in a compressed format (opus, AAC, MP3), the sizes are dramatically smaller: Opus at 64kbps gives you roughly 28 MB per hour. The important thing is to understand what format you are working with when estimating storage costs for your infrastructure.
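The arithmetic above can be wrapped in a small helper for capacity planning. This is a minimal sketch, not part of the MeetStream API: the function names are hypothetical, and the compressed estimate assumes a constant bitrate and ignores container overhead.

```python
def pcm_bytes(duration_seconds: float, sample_rate: int = 48_000,
              bytes_per_sample: int = 2, channels: int = 1) -> int:
    """Size of uncompressed PCM audio in bytes."""
    return int(duration_seconds * sample_rate * bytes_per_sample * channels)


def compressed_bytes(duration_seconds: float, bitrate_kbps: float) -> int:
    """Approximate size of a constant-bitrate stream (no container overhead)."""
    return int(duration_seconds * bitrate_kbps * 1000 / 8)


def to_mib(num_bytes: int) -> float:
    """Convert bytes to binary megabytes (1 MiB = 1024 * 1024 bytes)."""
    return num_bytes / (1024 * 1024)


if __name__ == "__main__":
    for hours in (1, 2, 8):
        seconds = hours * 3600
        print(
            f"{hours}h: PCM {to_mib(pcm_bytes(seconds)):.0f} MiB, "
            f"Opus at 64 kbps {to_mib(compressed_bytes(seconds, 64)):.0f} MiB"
        )
```

Running the estimate for your longest expected meeting, multiplied by the number of concurrent bots, gives a first-order figure for peak storage.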

Capping Recording Length with in_call_recording_timeout

If you do not want a bot to record indefinitely, configure automatic_leave.in_call_recording_timeout. This tells the bot to stop recording after a specified number of minutes, regardless of whether the meeting has ended. The bot can stay in the meeting (useful for live features) but the recording stops accumulating.

```python
import requests

# Cap recording at 90 minutes for a meeting expected to run long
response = requests.post(
    "https://api.meetstream.ai/api/v1/bots/create_bot",
    json={
        "meeting_link": "https://meet.google.com/abc-defg-hij",
        "bot_name": "Meeting Recorder",
        "recording_config": {
            "transcript": {
                "provider": "deepgram",
                "model": "nova-3",
                "diarize": True,
            }
        },
        "automatic_leave": {
            "in_call_recording_timeout": 90  # minutes
        },
    },
    headers={"Authorization": "Token YOUR_API_KEY"},
    timeout=30,  # do not hang forever if the API is unreachable
)
response.raise_for_status()  # fail loudly on 4xx/5xx instead of parsing an error body

bot = response.json()
print(f"Bot created: {bot['bot_id']}")
```

You can also configure the bot to leave the meeting automatically. automatic_leave.everyone_left_timeout tells the bot to leave N seconds after all other participants have left. For long sessions where the meeting might end abruptly without the host formally ending it, this prevents the bot from sitting in an empty meeting.
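Both settings live under the same automatic_leave object, so they can be built together. The helper below is a hypothetical sketch: only the two field names come from this guide, the function name and validation are illustrative, and the units (minutes for in_call_recording_timeout, seconds for everyone_left_timeout) follow the descriptions above and should be checked against the API reference.

```python
from typing import Optional


def build_automatic_leave(
    recording_cap_minutes: Optional[int] = None,
    empty_meeting_seconds: Optional[int] = None,
) -> dict:
    """Build the automatic_leave section of a create_bot request body."""
    config: dict = {}
    if recording_cap_minutes is not None:
        if recording_cap_minutes <= 0:
            raise ValueError("recording_cap_minutes must be positive")
        # Stop recording after this many minutes (unit as described above)
        config["in_call_recording_timeout"] = recording_cap_minutes
    if empty_meeting_seconds is not None:
        if empty_meeting_seconds <= 0:
            raise ValueError("empty_meeting_seconds must be positive")
        # Leave this many seconds after everyone else has left
        config["everyone_left_timeout"] = empty_meeting_seconds
    return config


if __name__ == "__main__":
    # Illustrative values: cap recording at 6 hours, leave 2 minutes after the room empties
    print(build_automatic_leave(recording_cap_minutes=360, empty_meeting_seconds=120))
```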

Waiting for Transcription on Long Recordings

Transcription processing time scales with audio length. A 90-minute meeting might take 3 to 8 minutes to transcribe depending on the provider and server load. Never poll the transcript endpoint in a tight loop. Instead, listen for the transcription.processed webhook event and fetch the transcript only after that event fires.

```python
import json
import os

import requests
from flask import Flask, request, jsonify

app = Flask(__name__)


@app.route("/webhook", methods=["POST"])
def handle_webhook():
    # Tolerate empty or non-JSON bodies instead of raising
    payload = request.get_json(silent=True) or {}
    event_type = payload.get("event")
    bot_id = payload.get("bot_id")

    if event_type == "bot.stopped":
        status = payload.get("bot_status")
        print(f"Bot {bot_id} stopped with status: {status}")
        # Do NOT fetch transcript here, transcription may not be ready

    elif event_type == "transcription.processed":
        transcript_id = payload.get("transcript_id")
        print(f"Transcription ready for bot {bot_id}, id: {transcript_id}")
        # NOW it is safe to fetch
        fetch_and_store_transcript(bot_id, transcript_id)

    elif event_type == "audio.processed":
        print(f"Audio recording ready for bot: {bot_id}")

    elif event_type == "video.processed":
        print(f"Video recording ready for bot: {bot_id}")

    return jsonify({"status": "ok"})


def fetch_and_store_transcript(bot_id: str, transcript_id: str) -> None:
    response = requests.get(
        f"https://api.meetstream.ai/api/v1/transcript/{transcript_id}/get_transcript",
        headers={"Authorization": "Token YOUR_API_KEY"},
        timeout=30,
    )
    response.raise_for_status()  # do not store an error body as a transcript
    transcript_data = response.json()

    # Store transcript data, write to DB, S3, etc.
    os.makedirs("transcripts", exist_ok=True)
    with open(f"transcripts/{bot_id}.json", "w") as f:
        json.dump(transcript_data, f, indent=2)

    print(f"Transcript stored for {bot_id}: {len(transcript_data.get('words', []))} words")
```

Chunking Long Transcripts for Post-Processing

A two-hour transcript can contain 15,000 to 25,000 words. Feeding the entire transcript to a language model for summarization in a single prompt can exceed the context limit of smaller models, and even when it fits, summary quality tends to degrade as detail from the middle of the meeting gets lost. Chunk the transcript before processing it.

```python
from typing import List


def chunk_transcript_by_time(
    words: list,
    chunk_duration_minutes: int = 15
) -> List[dict]:
    """
    Split a transcript into time-based chunks.
    words: list of {word, start, end, speaker} dicts
    Returns: list of {start_time, end_time, text, words}
    """
    if not words:
        return []

    chunk_duration_seconds = chunk_duration_minutes * 60
    chunks = []
    current_chunk_words = []
    chunk_start = words[0].get("start", 0)

    for word in words:
        word_start = word.get("start", 0)
        if word_start - chunk_start >= chunk_duration_seconds and current_chunk_words:
            chunks.append({
                "start_time": chunk_start,
                "end_time": current_chunk_words[-1].get("end", word_start),
                "text": " ".join(w.get("word", "") for w in current_chunk_words),
                "words": current_chunk_words
            })
            current_chunk_words = [word]
            chunk_start = word_start
        else:
            current_chunk_words.append(word)

    if current_chunk_words:
        chunks.append({
            "start_time": chunk_start,
            "end_time": current_chunk_words[-1].get("end", chunk_start),
            "text": " ".join(w.get("word", "") for w in current_chunk_words),
            "words": current_chunk_words
        })

    return chunks


def chunk_transcript_by_speaker_turns(
    segments: list,
    max_words_per_chunk: int = 3000
) -> List[List[dict]]:
    """
    Split a list of speaker-attributed segments into chunks
    of at most max_words_per_chunk words, breaking at speaker boundaries.
    segments: list of {speaker, text, start, end}
    """
    chunks = []
    current_chunk = []
    current_word_count = 0

    for segment in segments:
        word_count = len(segment["text"].split())
        if current_word_count + word_count > max_words_per_chunk and current_chunk:
            chunks.append(current_chunk)
            current_chunk = [segment]
            current_word_count = word_count
        else:
            current_chunk.append(segment)
            current_word_count += word_count

    if current_chunk:
        chunks.append(current_chunk)

    return chunks
```
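The speaker-turn chunker expects segments, while a word-level transcript gives you individual words. A minimal sketch of the bridging step follows. It assumes each word is a dict with word, start, end, and speaker keys, as in the docstring above; the real response shape may differ, and words_to_segments is a hypothetical helper name.

```python
from typing import List


def words_to_segments(words: List[dict]) -> List[dict]:
    """Group consecutive words by the same speaker into {speaker, text, start, end}."""
    segments: List[dict] = []
    for word in words:
        speaker = word.get("speaker")
        text = word.get("word", "")
        if segments and segments[-1]["speaker"] == speaker:
            # Same speaker keeps talking: extend the current segment
            segments[-1]["text"] += " " + text
            segments[-1]["end"] = word.get("end", segments[-1]["end"])
        else:
            # Speaker changed (or first word): start a new segment
            segments.append({
                "speaker": speaker,
                "text": text,
                "start": word.get("start", 0),
                "end": word.get("end", 0),
            })
    return segments


if __name__ == "__main__":
    sample = [
        {"word": "hello", "start": 0.0, "end": 0.4, "speaker": 0},
        {"word": "there", "start": 0.5, "end": 0.9, "speaker": 0},
        {"word": "hi", "start": 1.0, "end": 1.2, "speaker": 1},
    ]
    for segment in words_to_segments(sample):
        print(segment)
```

The resulting list can be passed directly to a segment-based chunker so that breaks always fall between speaker turns.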

Handling Partial Transcripts from Interrupted Sessions

Meetings get interrupted. A participant disconnects the bot accidentally. A network failure causes the recording to stop mid-session. The bot.stopped webhook fires with a bot_status of Stopped, Error, NotAllowed, or Denied. When the status is Stopped or Error, you may still have a partial recording and a partial transcript. NotAllowed and Denied mean the bot was never admitted, so there is nothing to retrieve.

```python
TERMINAL_STATUSES = {"Stopped", "NotAllowed", "Denied", "Error"}


def handle_bot_stopped(payload: dict) -> None:
    bot_id = payload.get("bot_id")
    status = payload.get("bot_status")

    if status not in TERMINAL_STATUSES:
        return

    if status == "Error":
        print(f"Bot {bot_id} encountered an error. Check logs.")
    elif status in {"NotAllowed", "Denied"}:
        print(f"Bot {bot_id} was not admitted to the meeting.")
        return  # No recording to retrieve

    # For Stopped and Error, a partial transcript may be available
    # Wait for transcription.processed webhook before fetching
    # Log the status for monitoring
    print(f"Bot {bot_id} ended with status: {status}. Awaiting transcription webhook.")
```

For production systems, implement a fallback: if the transcription.processed webhook has not arrived within a reasonable window (say, 30 minutes after bot.stopped), poll the transcript endpoint once to check if a partial transcript is available. Do not poll continuously; a single retry after the timeout window is sufficient.
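One way to implement that fallback is to track when each bot stopped and periodically ask which bots are overdue. The sketch below is an in-memory illustration only: PendingTranscripts and its methods are hypothetical names, and a production system would keep this state in a database so it survives restarts.

```python
import time
from typing import Callable, Dict, List

FALLBACK_WINDOW_SECONDS = 30 * 60  # 30 minutes after bot.stopped


class PendingTranscripts:
    """Tracks bots that have stopped but whose transcript webhook has not arrived."""

    def __init__(self, clock: Callable[[], float] = time.time):
        self._clock = clock  # injectable so the logic can be tested without sleeping
        self._stopped_at: Dict[str, float] = {}

    def mark_stopped(self, bot_id: str) -> None:
        """Call from the bot.stopped handler."""
        self._stopped_at.setdefault(bot_id, self._clock())

    def mark_processed(self, bot_id: str) -> None:
        """Call from the transcription.processed handler."""
        self._stopped_at.pop(bot_id, None)

    def take_overdue(self, window: float = FALLBACK_WINDOW_SECONDS) -> List[str]:
        """Return bots past the window and forget them, so each is checked only once."""
        now = self._clock()
        overdue = [b for b, t in self._stopped_at.items() if now - t >= window]
        for bot_id in overdue:
            del self._stopped_at[bot_id]
        return overdue
```

A scheduled job can call take_overdue() every few minutes and make a single transcript request for each returned bot ID. Because overdue bots are removed as they are returned, the one-retry rule is enforced by construction.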

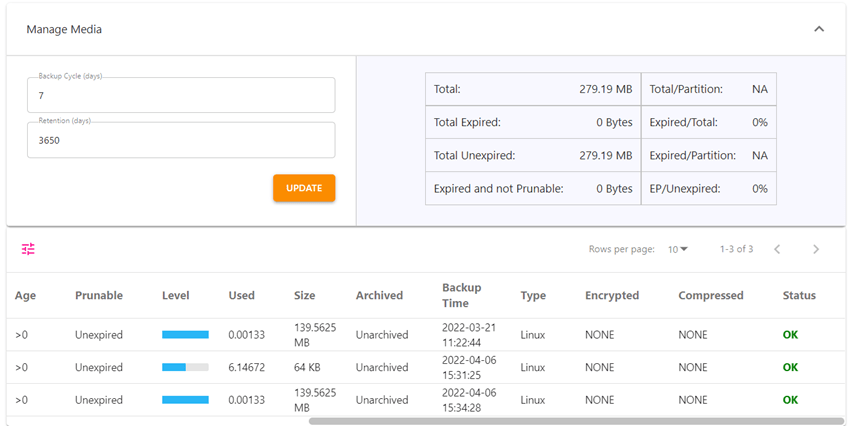

Data Retention Configuration

Long recordings consume storage indefinitely if you do not manage retention. Set a data retention policy that matches your compliance requirements and cost constraints. Most transcription use cases do not need the raw audio after the transcript has been successfully stored and verified.

A practical retention pattern: keep raw audio for 7 days (long enough to re-process if the transcript is corrupted), keep the structured transcript indefinitely in a compressed format, and archive the video recording to cold storage (S3 Glacier or equivalent) after 30 days if you need it at all.
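That pattern can be expressed as a small decision function run by a daily cleanup job. This is a sketch under stated assumptions: the artifact kinds, the retention_action name, and the transcript_verified flag are illustrative, and the actual delete or archive calls depend on your storage backend.

```python
from datetime import datetime, timedelta, timezone

RAW_AUDIO_RETENTION = timedelta(days=7)
VIDEO_ARCHIVE_AFTER = timedelta(days=30)


def retention_action(kind: str, created_at: datetime, now: datetime,
                     transcript_verified: bool = True) -> str:
    """Return 'keep', 'delete', or 'archive' for one stored artifact."""
    age = now - created_at
    if kind == "raw_audio":
        # Only drop raw audio once the transcript is known to be good
        if transcript_verified and age >= RAW_AUDIO_RETENTION:
            return "delete"
        return "keep"
    if kind == "video":
        return "archive" if age >= VIDEO_ARCHIVE_AFTER else "keep"
    if kind == "transcript":
        return "keep"  # structured transcripts are kept indefinitely
    raise ValueError(f"unknown artifact kind: {kind}")


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(retention_action("raw_audio", now - timedelta(days=10), now))
    print(retention_action("video", now - timedelta(days=45), now))
```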

For compliance-sensitive applications (legal, healthcare, financial services), your retention policy is typically dictated by regulation. 7-year retention for financial services, HIPAA-compliant storage for healthcare. Verify with your legal team before setting any retention parameters.

FAQ

How large are the recording and transcript for a typical 2-hour session?

The raw PCM audio for a 2-hour mono meeting at 48kHz int16 is approximately 660 MB. A compressed recording (Opus at 64kbps) is around 56 MB. The transcript in JSON format with word-level timestamps is typically 500 KB to 2 MB depending on the number of words and metadata fields. Store compressed audio and structured transcript; the raw PCM does not need to be kept after transcription is verified.

How should I process large audio files when transcription requests time out?

Transcription API calls on large audio files can time out at the HTTP layer even when the processing is still running server-side. The correct approach is to use the webhook-based flow: create the bot with a transcription.processed webhook configured, then wait for the webhook to fire rather than holding an open HTTP connection. The MeetStream API handles async processing natively through this webhook model.

Can I set a recording timeout without ending the bot's participation in the meeting?

Yes. The automatic_leave.in_call_recording_timeout parameter stops the recording while the bot remains present in the meeting. The bot can continue providing live features like live_transcription_required or live_audio_required even after the recording limit is reached. This is useful for all-day events where you want the first few hours recorded but the bot to remain for live participant features.

In what order do webhooks arrive for long recordings?

Webhooks arrive in this order for a normal bot session: bot.joining, bot.inmeeting, bot.stopped, then asynchronously: audio.processed, video.processed, transcription.processed. The async events can arrive in any order and may take minutes to hours after bot.stopped for long recordings. Always gate transcript retrieval on the transcription.processed event, not on bot.stopped.

What is the recommended chunk size for processing multi-hour recordings?

Split recordings into 10-15 minute segments with a 30-second overlap between adjacent chunks. The overlap prevents word truncation at boundaries and allows you to stitch transcripts by deduplicating the overlapping region. Avoid chunks shorter than 5 minutes because model initialization overhead becomes significant relative to processing time.
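The window boundaries for overlapping chunks can be computed up front. This is a minimal sketch with a hypothetical function name; it returns (start, end) offsets in seconds and leaves the actual audio slicing to whatever tool you use.

```python
from typing import List, Tuple


def chunk_windows(
    total_seconds: float,
    chunk_seconds: float = 15 * 60,
    overlap_seconds: float = 30,
) -> List[Tuple[float, float]]:
    """Return (start, end) offsets where adjacent windows share overlap_seconds."""
    if chunk_seconds <= overlap_seconds:
        raise ValueError("chunk_seconds must be larger than overlap_seconds")

    windows: List[Tuple[float, float]] = []
    step = chunk_seconds - overlap_seconds  # distance between window starts
    start = 0.0
    while start < total_seconds:
        end = min(start + chunk_seconds, total_seconds)
        windows.append((start, end))
        if end >= total_seconds:
            break  # the final window reached the end of the recording
        start += step
    return windows


if __name__ == "__main__":
    # A 2-hour recording in 15-minute windows with 30 seconds of overlap
    for window in chunk_windows(2 * 3600):
        print(window)
```

Note that the last window may be shorter than the others. If it falls below the 5-minute floor mentioned above, one option is to merge it into the previous window.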

How do I maintain speaker continuity across chunk boundaries?

Before stitching chunks, run a cross-chunk speaker reconciliation step. Extract speaker embeddings from the last 30 seconds of chunk N and the first 30 seconds of chunk N+1, compute cosine similarity, and merge speaker IDs that exceed a 0.85 similarity threshold. This prevents the same speaker from appearing as two different IDs in the final transcript.
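The matching step can be sketched in plain Python once you have one embedding vector per speaker per chunk. How those embeddings are extracted is outside this sketch and depends on your diarization model. The function names are hypothetical, the matching is a simple greedy pass rather than an optimal assignment, and speaker IDs are assumed to be unique across chunks (for example, prefixed with the chunk index).

```python
import math
from typing import Dict, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def map_speakers(
    prev_chunk: Dict[str, Sequence[float]],
    next_chunk: Dict[str, Sequence[float]],
    threshold: float = 0.85,
) -> Dict[str, str]:
    """Map each speaker ID in chunk N+1 to a matching ID in chunk N, or to itself."""
    mapping: Dict[str, str] = {}
    claimed = set()  # each earlier speaker can absorb at most one later ID
    for next_id, next_vec in next_chunk.items():
        best_id, best_score = None, threshold
        for prev_id, prev_vec in prev_chunk.items():
            if prev_id in claimed:
                continue
            score = cosine_similarity(prev_vec, next_vec)
            if score > best_score:
                best_id, best_score = prev_id, score
        if best_id is None:
            mapping[next_id] = next_id  # no match: treat as a new speaker
        else:
            claimed.add(best_id)
            mapping[next_id] = best_id
    return mapping
```

Apply the returned mapping to every utterance in chunk N+1 before stitching, then repeat for the next pair of chunks.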

What storage format is best for large meeting transcripts?

Store transcripts as JSONL (JSON Lines) rather than a single large JSON object. Each line represents a single utterance with keys for speaker, start_time, end_time, and text. JSONL is streamable, append-friendly, and works efficiently with tools like jq, pandas, and BigQuery without loading the entire file into memory.
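A minimal sketch of writing and streaming a JSONL transcript with the standard library is shown below. The helper names are hypothetical; the keys follow the ones listed above.

```python
import json
from typing import Iterable, Iterator


def write_jsonl(path: str, utterances: Iterable[dict]) -> None:
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for utterance in utterances:
            f.write(json.dumps(utterance, ensure_ascii=False) + "\n")


def read_jsonl(path: str) -> Iterator[dict]:
    """Yield utterances one at a time without loading the whole file."""
    with open(path, encoding="utf-8") as f:
        for line in f:
            line = line.strip()
            if line:  # skip blank lines
                yield json.loads(line)


if __name__ == "__main__":
    write_jsonl("transcript.jsonl", [
        {"speaker": "A", "start_time": 0.0, "end_time": 2.1, "text": "Welcome, everyone."},
        {"speaker": "B", "start_time": 2.4, "end_time": 3.0, "text": "Thanks."},
    ])
    for utterance in read_jsonl("transcript.jsonl"):
        print(utterance["speaker"], utterance["text"])
```

Because read_jsonl is a generator, a multi-hour transcript can be filtered or aggregated with constant memory.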

How do I handle processing failures partway through a long recording?

Implement checkpointing: after each chunk is successfully transcribed and stored, write the chunk index and output location to a persistent store like Redis or DynamoDB. On restart, read the checkpoint and resume from the last successful chunk. This avoids reprocessing hours of audio after a transient failure.
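The checkpoint loop can be sketched as follows. To stay self-contained, this example uses a local JSON file as a stand-in for Redis or DynamoDB; the function names and checkpoint layout are hypothetical, and process_chunk is whatever callable transcribes and stores one chunk in your pipeline.

```python
import json
import os
from typing import Callable, Sequence


def load_checkpoint(path: str) -> int:
    """Return the index of the next chunk to process (0 if no checkpoint exists)."""
    try:
        with open(path, encoding="utf-8") as f:
            return json.load(f)["next_chunk"]
    except FileNotFoundError:
        return 0


def save_checkpoint(path: str, next_chunk: int) -> None:
    """Write the checkpoint via a temp file so a crash never leaves a partial file."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "w", encoding="utf-8") as f:
        json.dump({"next_chunk": next_chunk}, f)
    os.replace(tmp_path, path)  # atomic rename


def process_with_checkpoints(
    chunks: Sequence,
    process_chunk: Callable[[int, object], None],
    checkpoint_path: str,
) -> None:
    """Process chunks in order, resuming after the last one that succeeded."""
    start = load_checkpoint(checkpoint_path)
    for index in range(start, len(chunks)):
        process_chunk(index, chunks[index])  # may raise; checkpoint stays unchanged
        save_checkpoint(checkpoint_path, index + 1)
```

If process_chunk raises partway through, rerunning process_with_checkpoints with the same checkpoint path picks up at the failed chunk. The failed chunk itself is retried, so process_chunk should be safe to run twice on the same input.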