Real-Time Actions in Meeting Bots: From Audio to Response

The demo looks effortless: a bot joins a meeting, hears a question, and responds within a second with a relevant, context-aware answer. What's not visible is the latency budget spreadsheet that made that demo possible. Audio capture: 50 ms. Network to your server: 30 ms. Streaming STT first word: 300 ms. LLM inference: 400 ms. TTS generation: 150 ms. Network back to meeting: 30 ms. Total: 960 ms, just under a second, but only because each stage was profiled and optimized. Add 100 ms to any stage and your bot sounds like it's thinking. Add 500 ms and it sounds broken.

Building a real-time meeting bot that participates in conversations requires treating the entire pipeline as a latency-critical system. This is fundamentally different from post-call processing, where accuracy matters more than speed. In the live loop, you're racing against human conversation dynamics: people expect a response within 700 to 1200 ms, and anything beyond that breaks the conversational feel that makes a bot useful rather than annoying.

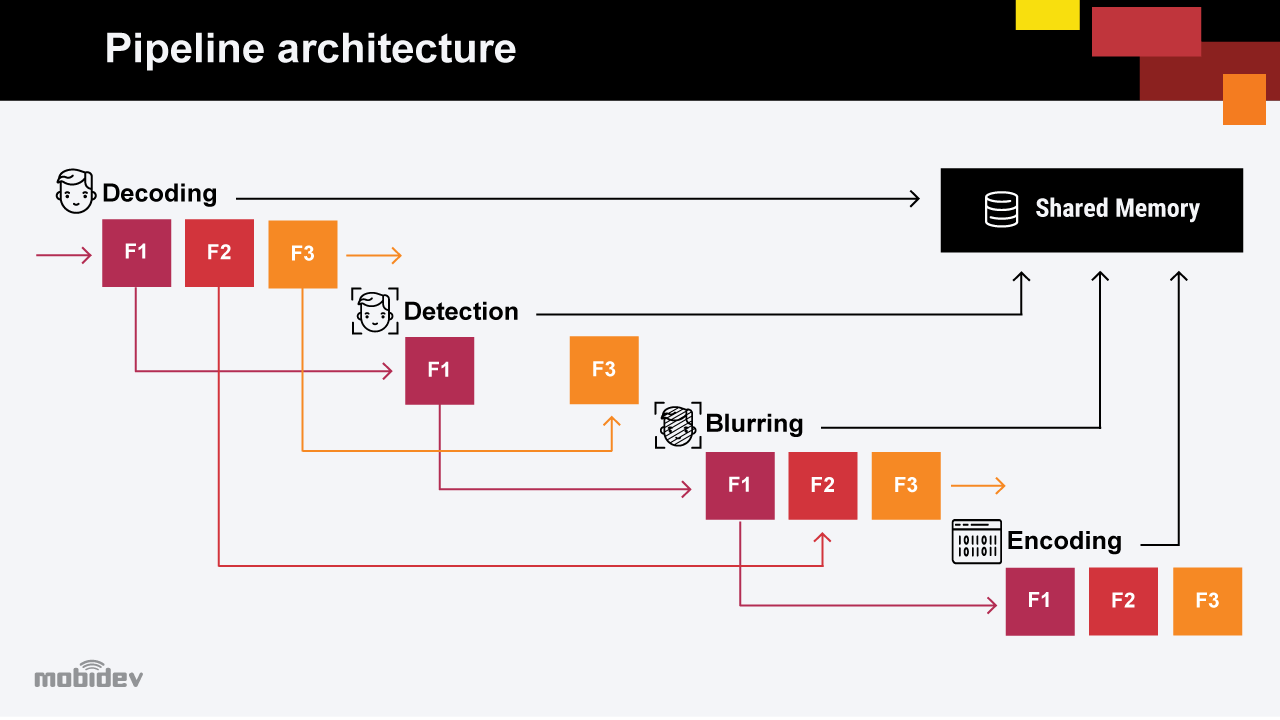

The pipeline has four stages: inbound audio via WebSocket, streaming speech-to-text, LLM processing, and bot speech via the sendaudio command. Each stage has a latency budget, a failure mode, and an optimization lever. The interrupt mechanism (stopping the bot mid-sentence when a human starts talking) is a separate concern, and most implementations get it wrong by reacting too slowly.

In this guide, we'll cover the full live loop architecture, latency budgets per stage, streaming STT integration, LLM inference patterns, TTS-to-bot-audio, interrupt handling, and MeetStream's MIA (Meeting Intelligence Agent) Realtime mode that handles the pipeline automatically. Let's get into it.

The Live Loop Architecture

    Meeting Audio (48kHz PCM16 LE)
              │
              ▼  WebSocket (/bridge/audio)
    ┌───────────────────────────────┐
    │ Your Server                   │
    │                               │
    │ 1. Resample 48k→16k mono      │
    │ 2. Filter bot's own audio     │
    │ 3. Voice activity detection   │
    │ 4. Stream to STT (Deepgram)   │
    │ 5. Buffer final transcripts   │
    │ 6. Detect question/trigger    │
    │ 7. LLM inference              │
    │ 8. TTS → PCM16 LE audio       │
    │ 9. sendaudio → bot speaks     │
    │                               │
    └───────────────────────────────┘
              │
              ▼  WebSocket (/bridge control)
    Meeting participants hear bot

The audio flows in on the /bridge/audio WebSocket, and commands go out on the /bridge control WebSocket. These are separate connections by design; see the API documentation for connection details.
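
To get a feel for the data volumes on each connection, the arithmetic below sizes the audio buffers. It is a rough sketch under assumptions that are not stated in the API description: interleaved stereo on the inbound stream, 20 ms frames, and 16 kHz mono PCM16 for outbound speech. The helper name `pcm_bytes` is hypothetical.

```python
import base64

BYTES_PER_SAMPLE = 2  # PCM16 uses two bytes per sample


def pcm_bytes(sample_rate_hz: int, channels: int, duration_ms: int) -> int:
    """Size in bytes of a PCM16 buffer of the given duration."""
    samples_per_channel = sample_rate_hz * duration_ms // 1000
    return samples_per_channel * channels * BYTES_PER_SAMPLE


# Assumed inbound format: 48 kHz, stereo, 20 ms per frame.
inbound_frame = pcm_bytes(48_000, channels=2, duration_ms=20)

# After resampling to 16 kHz mono for STT, the same 20 ms shrinks sixfold.
stt_frame = pcm_bytes(16_000, channels=1, duration_ms=20)

# One second of assumed 16 kHz mono speech, and its size once base64-encoded
# for a JSON command.
one_second_speech = pcm_bytes(16_000, channels=1, duration_ms=1000)
base64_chars = len(base64.b64encode(bytes(one_second_speech)))

print(inbound_frame, stt_frame, one_second_speech, base64_chars)
```

Base64 inflates the payload by about a third, which is one reason to send speech in sentence-sized pieces rather than one large buffer.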

Latency Budget Per Stage

For a target end-to-end latency of under 800 ms from the end of a human utterance to the start of the bot's response:

| Stage | Budget | Optimization |
|---|---|---|
| Audio capture + network | 50–80 ms | Co-locate server with MeetStream infra |
| Resample + VAD | 5–10 ms | Use vectorized numpy, avoid scipy per-frame |
| Streaming STT first word | 200–400 ms | Deepgram nova-3, enable interim_results |
| End-of-utterance detection | 100–300 ms | VAD silence detection, not STT endpoint |
| LLM inference (first token) | 200–600 ms | Streaming, smaller model, GPU inference |
| TTS synthesis | 100–300 ms | Streaming TTS, start on first LLM tokens |
| sendaudio network | 20–50 ms | Keep control WebSocket warm |
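
As a quick sanity check, the table's ranges can be totalled. The sketch below assumes the stages run strictly one after another with no overlap, which is a simplifying assumption of this example and not a claim about how a tuned pipeline behaves. The names `BUDGET_MS` and `serial_total` are made up for illustration.

```python
# Per-stage latency ranges in milliseconds, copied from the budget table.
BUDGET_MS = {
    "audio capture + network": (50, 80),
    "resample + VAD": (5, 10),
    "streaming STT first word": (200, 400),
    "end-of-utterance detection": (100, 300),
    "LLM inference (first token)": (200, 600),
    "TTS synthesis": (100, 300),
    "sendaudio network": (20, 50),
}
TARGET_MS = 800


def serial_total(budget: dict) -> tuple:
    """Sum the low and high ends of every range, assuming no stage overlap."""
    best = sum(low for low, _ in budget.values())
    worst = sum(high for _, high in budget.values())
    return best, worst


best, worst = serial_total(BUDGET_MS)
print(f"best case:  {best} ms")
print(f"worst case: {worst} ms")
print(f"headroom against target at best case: {TARGET_MS - best} ms")
```

Under that serial assumption, the low ends add up to 675 ms, leaving 125 ms of headroom, while the high ends add up to 1740 ms, more than twice the target. That gap is why overlapping stages (streaming STT, sentence-level TTS) matters as much as shaving any single stage.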

The two biggest variables are LLM inference latency and STT end-of-utterance detection. On the LLM side, use streaming inference and start TTS as soon as the first sentence of the LLM response is ready; don't wait for the full completion. For end-of-utterance, use voice activity detection (VAD) silence detection rather than waiting for the STT provider to signal end-of-speech, which typically adds 300 to 500 ms.
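
A minimal sketch of VAD-based end-of-utterance detection follows. It uses a plain energy threshold, which is a stand-in for a real VAD model rather than a recommendation. The 20 ms frame size, the RMS threshold of 500, and the class name `EndOfUtteranceDetector` are all assumptions made for this example and would need tuning against real meeting audio.

```python
import math
import struct

FRAME_MS = 20          # assumed frame duration
RMS_THRESHOLD = 500.0  # assumed energy threshold for 16-bit audio
SILENCE_MS = 300       # trailing silence that closes an utterance


def frame_rms(pcm: bytes) -> float:
    """Root-mean-square amplitude of one little-endian PCM16 frame."""
    count = len(pcm) // 2
    if count == 0:
        return 0.0
    samples = struct.unpack(f"<{count}h", pcm[: count * 2])
    return math.sqrt(sum(s * s for s in samples) / count)


class EndOfUtteranceDetector:
    """Reports the end of an utterance after enough consecutive quiet frames."""

    def __init__(self, threshold=RMS_THRESHOLD, silence_ms=SILENCE_MS,
                 frame_ms=FRAME_MS):
        self.threshold = threshold
        self.frames_needed = math.ceil(silence_ms / frame_ms)
        self.in_speech = False
        self.silent_frames = 0

    def push(self, pcm: bytes) -> bool:
        """Feed one frame; return True once when the utterance ends."""
        if frame_rms(pcm) >= self.threshold:
            # Speech energy: (re)enter the speaking state and reset the timer.
            self.in_speech = True
            self.silent_frames = 0
            return False
        if not self.in_speech:
            return False  # silence before anyone has spoken
        self.silent_frames += 1
        if self.silent_frames >= self.frames_needed:
            self.in_speech = False
            self.silent_frames = 0
            return True
        return False
```

In the live loop, `push` would be called on each resampled frame, and a `True` result would start the response without waiting for the STT provider's endpoint signal. A trained VAD is likely to be more robust than raw energy in noisy meetings.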

Configuring Live Audio and Streaming STT

Configure the bot for real-time audio and streaming transcription simultaneously:

    POST https://api.meetstream.ai/api/v1/bots/create_bot
    Authorization: Token YOUR_API_KEY
    Content-Type: application/json

    {
      "meeting_link": "https://meet.google.com/abc-defg-hij",
      "bot_name": "MeetingAssistant",
      "live_audio_required": {
        "websocket_url": "wss://yourserver.com/audio"
      },
      "live_transcription_required": {
        "webhook_url": "https://yourserver.com/webhooks/transcript",
        "provider": {
          "name": "deepgram_streaming",
          "api_key": "YOUR_DEEPGRAM_KEY",
          "model": "nova-3",
          "diarize": true,
          "interim_results": true,
          "endpointing": 300
        }
      },
      "socket_connection_url": {
        "websocket_url": "wss://yourserver.com/control"
      }
    }

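The endpointing: 300 Deepgram parameter signals end-of-utterance after 300 ms of silence. When you rely on the transcription webhook alone, this is your primary trigger for deciding that the human has finished speaking and the bot should respond. If you also run your own VAD on the raw audio, its silence detection can fire earlier, as noted in the latency budget.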

The Python Live Loop

    import asyncio
    import base64
    import json

    import numpy as np
    import websockets
    from openai import AsyncOpenAI
    from scipy.signal import resample_poly

    llm_client = AsyncOpenAI()  # reads OPENAI_API_KEY from the environment

    BOT_SPEAKER_ID = None  # set on bot.inmeeting event
    active_generation_tasks = set()  # in-flight responses, cancelled on interrupt

    # parse_audio_frame, forward_to_deepgram, should_respond, and text_to_speech
    # are application-specific helpers that you supply.

    async def audio_handler(audio_ws, control_ws):
        """Receives PCM audio frames, streams to STT, coordinates response."""
        async for message in audio_ws:
            if not isinstance(message, bytes):
                continue
            frame = parse_audio_frame(message)
            if frame is None:
                continue
            # Skip bot's own audio to prevent feedback loop
            if frame['speaker_id'] == BOT_SPEAKER_ID:
                continue
            # Resample 48kHz stereo → 16kHz mono for STT
            pcm_16k = resample_48k_to_16k(frame['audio'])
            # Forward to your Deepgram streaming connection
            await forward_to_deepgram(pcm_16k)

    async def transcript_handler(event: dict, control_ws):
        """Handles final transcript segments from Deepgram streaming."""
        if not event.get('is_final'):
            return  # skip interim results for LLM trigger
        text = event.get('text', '').strip()
        if not text:
            return
        # Simple trigger: respond to any question directed at the bot
        if should_respond(text):
            task = asyncio.create_task(generate_and_speak(text, control_ws))
            # Keep a reference so the interrupt handler can cancel the task
            active_generation_tasks.add(task)
            task.add_done_callback(active_generation_tasks.discard)

    async def generate_and_speak(user_text: str, control_ws):
        """LLM inference + TTS + sendaudio in one pipeline."""
        current = ''
        # Stream LLM response, send audio sentence-by-sentence
        stream = await llm_client.chat.completions.create(
            model='gpt-4o-mini',
            messages=[
                {'role': 'system', 'content': 'You are a helpful meeting assistant.'},
                {'role': 'user', 'content': user_text},
            ],
            stream=True,
            max_tokens=150,
        )
        async for chunk in stream:
            if not chunk.choices:
                continue  # some chunks carry no choices
            current += chunk.choices[0].delta.content or ''
            # Send TTS audio once the buffer ends on sentence punctuation.
            # Commas are excluded so audio is cut per sentence, not per clause.
            if current.rstrip().endswith(('.', '?', '!')):
                pcm_audio = await text_to_speech(current.strip())
                await send_audio_to_bot(control_ws, pcm_audio)
                current = ''
        # Send any remaining text
        if current.strip():
            pcm_audio = await text_to_speech(current.strip())
            await send_audio_to_bot(control_ws, pcm_audio)

    async def send_audio_to_bot(control_ws, pcm_bytes: bytes):
        """Send PCM16 LE audio to the bot via sendaudio command."""
        audio_b64 = base64.b64encode(pcm_bytes).decode()
        command = json.dumps({
            'action': 'sendaudio',
            'audio': audio_b64,
            'sample_rate': 16000,
            'encoding': 'pcm16'
        })
        await control_ws.send(command)

    def resample_48k_to_16k(pcm_bytes: bytes) -> bytes:
        """Downmix interleaved stereo PCM16 to mono and resample 48 kHz → 16 kHz."""
        samples = np.frombuffer(pcm_bytes, dtype=np.int16).astype(np.float32)
        left = samples[0::2]
        right = samples[1::2]
        mono = (left + right) / 2.0
        # 48000 / 16000 = 3, so decimate by 3 with polyphase filtering
        resampled = resample_poly(mono, up=1, down=3)
        return np.clip(resampled, -32768, 32767).astype(np.int16).tobytes()
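
Checking only the last character of the buffer can split on a decimal point when a token boundary falls inside a number such as "3.5". One possible refinement, sketched below, treats punctuation as a sentence end only when whitespace follows it. The function name `sentences_from_stream` is hypothetical, and the example takes a plain iterable of text deltas for simplicity, so in the live loop it would need adapting to the async LLM stream.

```python
import re
from typing import Iterable, Iterator

# A sentence ends at ., ?, or ! followed by whitespace. Requiring the
# whitespace keeps decimals such as "3.5" in one piece.
_SENTENCE_END = re.compile(r"([.?!]+)\s+")


def sentences_from_stream(deltas: Iterable[str]) -> Iterator[str]:
    """Yield each sentence as soon as the streamed text completes it."""
    buffer = ""
    for delta in deltas:
        buffer += delta
        while True:
            match = _SENTENCE_END.search(buffer)
            if match is None:
                break
            sentence = buffer[: match.end(1)].strip()
            buffer = buffer[match.end():]
            if sentence:
                yield sentence
    # Flush whatever is left once the stream ends.
    tail = buffer.strip()
    if tail:
        yield tail


deltas = ["The total", " is 3", ".5 million", ". Shall I ", "send the",
          " report? Yes"]
for sentence in sentences_from_stream(deltas):
    print(sentence)
```

The trade-off is that a sentence is released only when the next token arrives with its leading space, which costs roughly one token of delay. Abbreviations such as "e.g." would still be split and need separate handling.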

Interrupt Handling

When a human starts speaking while the bot is talking, you need to stop the bot immediately. Continuing to speak over the human is the single most jarring failure mode in conversational AI. The interrupt command on the control WebSocket clears the audio queue:

    async def handle_human_starts_speaking(control_ws, speaker_id: str):
        """Called when VAD detects a human speaking."""
        if speaker_id == BOT_SPEAKER_ID:
            return  # bot itself speaking, not a human
        # Clear pending audio queue immediately
        interrupt_cmd = json.dumps({
            'action': 'interrupt',
            'type': 'clear_audio_queue'
        })
        await control_ws.send(interrupt_cmd)
        # Cancel any in-flight LLM generation tasks.
        # active_generation_tasks is the module-level collection of running
        # generate_and_speak tasks; iterate over a copy while cancelling.
        for task in list(active_generation_tasks):
            task.cancel()
        active_generation_tasks.clear()

The interrupt should fire as soon as VAD detects speech energy from a human speaker; don't wait for the STT provider to produce a transcript segment. VAD is much faster (under 20 ms) than STT (200 to 400 ms) and is sufficient to detect that someone has started talking.
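
The cancellation half of the interrupt can be tried in isolation. The sketch below is a self-contained simulation, not MeetStream code: `speak_sentences` is a hypothetical stand-in for generate_and_speak, the 50 ms sleep stands in for TTS plus sendaudio, and the 120 ms delay simulates the moment VAD detects a human.

```python
import asyncio

spoken = []  # sentences that finished "playing" before the interrupt


async def speak_sentences(sentences):
    """Stand-in for generate_and_speak: one sentence every 50 ms."""
    try:
        for sentence in sentences:
            await asyncio.sleep(0.05)  # placeholder for TTS + sendaudio
            spoken.append(sentence)
    except asyncio.CancelledError:
        # Release resources here (for example, close a TTS stream), then
        # re-raise so the task is recorded as cancelled.
        raise


async def demo() -> bool:
    task = asyncio.create_task(
        speak_sentences(["one", "two", "three", "four"])
    )
    await asyncio.sleep(0.12)  # simulated: a human starts talking
    task.cancel()              # same call the interrupt handler makes
    try:
        await task
    except asyncio.CancelledError:
        pass
    return task.cancelled()


was_cancelled = asyncio.run(demo())
print(was_cancelled, spoken)
```

Cancellation takes effect at the task's next await point, so any step that blocks without awaiting will delay the interrupt. Keeping TTS and send calls asynchronous is what makes the stop feel immediate.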

MIA Realtime Mode

MeetStream's MIA (Meeting Intelligence Agent) system provides the full live loop as a managed feature. Configure an agent with agent_config_id when creating the bot, and MIA handles audio-to-STT-to-LLM-to-TTS automatically. Realtime mode is designed specifically for conversational bots that need to respond during meetings rather than after them.

    POST https://api.meetstream.ai/api/v1/bots/create_bot
    Authorization: Token YOUR_API_KEY
    Content-Type: application/json

    {
      "meeting_link": "https://meet.google.com/abc-defg-hij",
      "bot_name": "AssistantBot",
      "agent_config_id": "YOUR_MIA_AGENT_CONFIG_ID",
      "callback_url": "https://yourapp.com/webhooks/meetstream"
    }

MIA also supports MCP (Model Context Protocol) server integration, allowing your bot to call external tools (CRM lookups, calendar checks, document retrieval) as part of its response pipeline. Using MIA eliminates the need to build and host the live loop infrastructure yourself.

How MeetStream Fits

The real-time meeting API provides everything the live loop needs: real-time audio delivery via WebSocket, streaming transcription, the sendaudio and interrupt commands for bot speech control, and MIA Realtime mode for a managed end-to-end pipeline. You can build the pipeline yourself using the primitives, or use MIA to handle it automatically. Both approaches are supported; the choice depends on how much control you need over the LLM and TTS layers. See the full reference at docs.meetstream.ai.

Conclusion

Building a real-time meeting bot that participates in conversations is a latency optimization problem as much as an engineering one. The live loop (audio in, streaming STT, LLM, TTS, sendaudio) has roughly 800 ms of total budget if you want the bot to feel responsive. The key optimizations are: start TTS before the LLM finishes (sentence-by-sentence streaming), use VAD for end-of-utterance detection rather than waiting for STT endpoints, and fire the interrupt command from VAD activity rather than from transcript events. MIA Realtime mode handles the full pipeline if you want to skip building it yourself. Get started free at meetstream.ai.

Frequently Asked Questions

What is the expected end-to-end latency for a real-time meeting bot response?

A well-optimized pipeline achieves 600 to 900 ms from the end of a human utterance to the start of the bot's spoken response. The main variables are streaming STT first-word latency (200 to 400 ms for Deepgram nova-3) and LLM first-token latency (200 to 600 ms for GPT-4o-mini). Sentence-streaming TTS (starting audio playback while the LLM is still generating) is the most impactful single optimization.

How does the interrupt command work?

The interrupt command with type: clear_audio_queue, sent on the control WebSocket (/bridge), stops any queued audio the bot was about to speak and silences it immediately. It should be triggered by voice activity detection as soon as a human starts speaking, not by a transcript event, which arrives 200 to 400 ms later and would cause audible overlap between the bot and the human.

What is MeetStream MIA and when should I use it?

MIA (Meeting Intelligence Agent) is MeetStream's managed agent system that handles the full real-time pipeline (audio, STT, LLM routing, TTS, and speech) through configurable agent profiles. Use MIA when you want a conversational bot without building the live loop infrastructure yourself. Use the raw audio and control WebSocket APIs when you need custom LLM logic, specific TTS voices, or integration with proprietary models or tools not supported by MIA's MCP server.

How do I prevent the bot from transcribing and responding to its own speech?

Track the bot's own speaker_id from the bot.inmeeting webhook event and filter it at the audio frame parsing stage. Apply the same filter when deciding whether to trigger an LLM response: only trigger on human speakers. This prevents both transcript pollution and feedback loops where the bot responds to its own previous responses.

What TTS provider works best for real-time meeting bot audio?

ElevenLabs (streaming WebSocket API), OpenAI TTS, and Cartesia are among the lowest-latency options for real-time use. Use a streaming TTS API that can start returning audio bytes before the full text is ready; sentence-level streaming is achievable with all three. Avoid non-streaming TTS providers (those that require the complete text before generating any audio), as they add 300 to 800 ms of unnecessary latency to every bot utterance.