Building Media Pipelines for Real-Time Meeting Bots

Audio from a Google Meet call arrives at your bot as 48kHz stereo PCM. Your speech-to-text provider wants 16kHz mono PCM. Between those two facts lives the entire complexity of a real-time meeting media pipeline and if you get any step wrong, you get either silence, garbled transcription, or a bot that crashes when two people speak at once. Most engineers don't think about this until they're debugging why their transcription accuracy is 40% worse than expected.

The pipeline isn't just resampling. You need to capture audio from the meeting platform's WebRTC stack, demux it per speaker, filter out your own bot's audio output (otherwise the bot transcribes itself), forward it over a WebSocket connection with the right framing, and handle all of this with latency low enough that real-time processing is actually real-time. Add video capture into the mix and you're juggling two separate media types with different codec chains and different downstream consumers.

The architecture that handles this reliably has a few non-obvious design choices: two separate WebSocket endpoints for inbound audio versus control commands, a header-framed binary protocol for speaker attribution, and careful handling of the bot's own audio to prevent feedback loops. Each of these is easy to get wrong and painful to debug after the fact.

In this guide, we'll cover each stage of the meeting media pipeline, capture, decode, process, and output, how the bridge server architecture works, how to parse PCM frames with speaker metadata, and how MeetStream's real-time audio WebSocket handles all of this. Let's get into it.

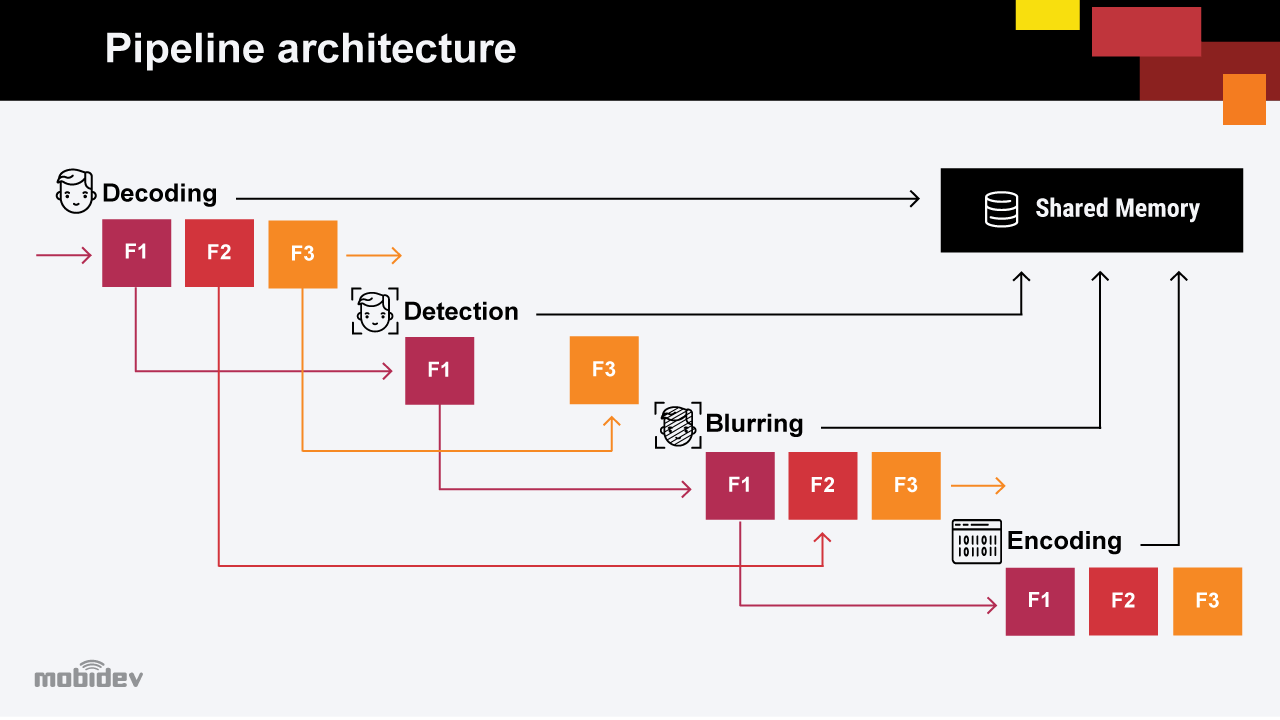

Pipeline Stages Overview

A complete media pipeline for a meeting bot has four stages:

- Capture: Extract raw audio/video bytes from the meeting platform's WebRTC stack, using a headless browser with injected JavaScript or a native SDK.

- Decode: Convert from the platform's codec (Opus for audio, VP8/H.264 for video) to raw PCM audio or YUV/RGB video frames.

- Process: Resample, normalize, diarize, and forward to downstream consumers (STT, video processing, storage).

- Output: Deliver processed data via webhooks (post-call) or WebSocket binary frames (real-time), with speaker attribution intact.

The full audio video pipeline runs continuously for the duration of the meeting. Latency accumulates at each stage, decode latency + resample latency + network latency to your processing server. For real-time applications, each stage budget is roughly 20, 50 ms, targeting under 200 ms end-to-end from audio capture to your processing code receiving the frame.

Audio Capture and Decoding

Meeting platforms transmit audio as Opus packets over WebRTC. Your capture layer intercepts these before they reach the speaker, either via browser automation (injecting JavaScript into a Chrome extension context that intercepts AudioWorklet output) or via a native SDK that provides raw PCM access.

Once decoded from Opus, raw audio is typically 48kHz stereo (two channels, left and right). Most STT providers require 16kHz mono. The resampling step is:

- Downsample from 48kHz to 16kHz (3:1 ratio) using a low-pass filter at 8kHz to prevent aliasing.

- Mix stereo to mono by averaging the left and right channels per sample.

- Normalize to PCM16 signed 16-bit little-endian format.

import numpy as np

from scipy.signal import resample_poly

def resample_audio(pcm_bytes: bytes, source_rate=48000, target_rate=16000) -> bytes:

"""

Resample PCM16 LE stereo audio to PCM16 LE mono.

Input: raw bytes, 48kHz stereo, signed 16-bit little-endian.

Output: raw bytes, 16kHz mono, signed 16-bit little-endian.

"""

# Parse bytes to int16 array

samples = np.frombuffer(pcm_bytes, dtype=np.int16).astype(np.float32)

# Deinterleave stereo (L R L R ...) to two channels

left = samples[0::2]

right = samples[1::2]

# Mix to mono

mono = (left + right) / 2.0

# Resample 48kHz -> 16kHz (downsample by factor 3)

resampled = resample_poly(mono, up=1, down=3)

# Clip and convert back to int16

resampled = np.clip(resampled, -32768, 32767).astype(np.int16)

return resampled.tobytes()

The resample_poly function from scipy uses a polyphase filter, which is more accurate and computationally efficient than naive interpolation. For production use, consider librosa.resample with the res_type='kaiser_fast' option for better performance under continuous streaming loads.

The Bridge Server Architecture

MeetStream's WebSocket audio pipeline uses a bridge server with two separate WebSocket endpoints:

wss://agent-meetstream-prd-main.meetstream.ai/bridge, control channel for commands (send audio to bot, send chat messages, interrupt, etc.)wss://agent-meetstream-prd-main.meetstream.ai/bridge/audio, inbound audio stream (binary frames from the meeting)

Separating audio from control is the right design choice. Audio frames arrive continuously at 48kHz, that's thousands of frames per second. Control commands are sparse and latency-sensitive. Mixing them on a single WebSocket creates head-of-line blocking: a burst of audio frames can delay a time-sensitive interrupt command. The separate channels mean each can be optimized independently.

To receive real-time audio, configure live_audio_required when creating the bot:

POST https://api.meetstream.ai/api/v1/bots/create_bot

Authorization: Token YOUR_API_KEY

Content-Type: application/json

{

"meeting_link": "https://meet.google.com/abc-defg-hij",

"bot_name": "Listener",

"live_audio_required": {

"websocket_url": "wss://yourserver.com/audio-ingest"

}

}

MeetStream connects to your WebSocket endpoint and pushes binary frames as audio arrives from the meeting. Your server receives the frames and processes them in your pipeline.

Parsing PCM Frames with Speaker Metadata

Real-time audio frames from MeetStream are binary WebSocket messages. Each frame includes a header with speaker attribution followed by raw PCM16 LE audio bytes. The header fields are speaker_id and speaker_name, which let you attribute audio to specific participants without running speaker diarization yourself.

import asyncio, json, struct

import websockets

async def handle_audio_stream(websocket):

async for message in websocket:

if isinstance(message, bytes):

frame = parse_audio_frame(message)

if frame:

pcm_16k = resample_audio(frame['audio'])

await forward_to_stt(frame['speaker_id'], pcm_16k)

else:

# Text frame: metadata/control JSON

meta = json.loads(message)

print(f"Control: {meta}")

def parse_audio_frame(data: bytes) -> dict | None:

"""

Parse MeetStream binary audio frame.

Frame structure: JSON header (length-prefixed) + raw PCM16 LE audio bytes.

"""

try:

# First 4 bytes: header length as uint32 big-endian

header_len = struct.unpack('>I', data[:4])[0]

header_bytes = data[4:4 + header_len]

audio_bytes = data[4 + header_len:]

header = json.loads(header_bytes.decode('utf-8'))

return {

'speaker_id': header.get('speaker_id'),

'speaker_name': header.get('speaker_name'),

'timestamp_ms': header.get('timestamp_ms'),

'audio': audio_bytes

}

except (struct.error, json.JSONDecodeError, UnicodeDecodeError):

return None

async def main():

async with websockets.serve(handle_audio_stream, '0.0.0.0', 8765):

await asyncio.Future() # run forever

if __name__ == '__main__':

asyncio.run(main())

Speaker Filtering: Ignoring Your Own Bot's Audio

When your bot speaks, playing a TTS audio clip or responding to a question, that audio goes back into the meeting, and the meeting platform echoes it back to all participants including your bot's own audio capture. Without filtering, you'll transcribe your bot's own words as if they came from a human participant. This creates obvious transcript pollution and can cause feedback loops in real-time response systems.

The fix is to track your bot's speaker ID after the first bot.inmeeting event and filter it out in your pipeline:

BOT_SPEAKER_ID = None

def on_bot_in_meeting(event: dict):

global BOT_SPEAKER_ID

BOT_SPEAKER_ID = event.get('bot_speaker_id')

def should_process_frame(speaker_id: str) -> bool:

if BOT_SPEAKER_ID and speaker_id == BOT_SPEAKER_ID:

return False # skip bot's own audio

return True

Apply this check before any STT forwarding. The same logic applies if you're doing local voice activity detection, don't run VAD on your own bot's predictable audio output.

Video Pipeline Considerations

Video adds a separate capture and processing chain. Video frames from a meeting are typically VP8 or H.264 encoded at 720p/30fps, arriving over a separate WebRTC track. Processing video in real time requires either GPU-accelerated decode (NVDEC on NVIDIA, VideoToolbox on Apple Silicon) or accepting higher CPU load for software decode.

For most meeting intelligence use cases (OCR of shared screens, speaker face recognition, slide capture), you don't need every frame. A 1, 2 fps sampling rate is sufficient for slide and whiteboard capture. Use keyframe-only decode to reduce processing load, extract I-frames from the H.264 stream rather than decoding the full inter-frame sequence.

How MeetStream Fits

MeetStream's real-time audio delivery handles the capture, decode, and framing stages of the pipeline for you. You receive clean PCM16 LE audio at 48kHz with speaker attribution over a WebSocket, without instrumenting a headless browser or reverse-engineering platform WebRTC stacks. Configure live_audio_required with your WebSocket endpoint when calling the create bot API, and your pipeline starts at the resample step rather than the capture step.

Conclusion

A production-grade meeting media pipeline requires handling each stage, capture, decode, resample, forward, with explicit attention to latency, speaker attribution, and self-echo filtering. The 48kHz stereo to 16kHz mono resampling step is the most technically dense part and deserves careful implementation with a polyphase filter rather than naive interpolation. Separating the audio stream WebSocket from the control WebSocket is the right design pattern for avoiding head-of-line blocking. If you want to skip the capture and decode stages entirely and start from clean PCM frames with speaker metadata, get started free at meetstream.ai.

Frequently Asked Questions

Why does audio resampling from 48kHz to 16kHz require a low-pass filter?

Downsampling without a low-pass filter causes aliasing, high-frequency components above the new Nyquist frequency (8kHz for 16kHz output) fold back into the audible spectrum as distortion. A low-pass filter at the new Nyquist removes these before downsampling. Polyphase filters like scipy's resample_poly handle this automatically.

What format does MeetStream deliver real-time audio in?

MeetStream delivers audio as binary WebSocket frames containing PCM16 signed 16-bit little-endian audio at 48kHz mono, with a JSON header containing speaker_id and speaker_name for speaker attribution. Your pipeline receives these frames directly from the /bridge/audio WebSocket endpoint.

How do I prevent my bot from transcribing its own speech?

Track the bot's own speaker_id from the bot.inmeeting event and filter out frames from that speaker ID before passing audio to your STT pipeline. Apply this filter at the frame parsing stage, before any CPU-intensive processing, to avoid wasting resources on self-generated audio.

What is the expected latency for real-time audio delivery?

End-to-end latency from audio capture in the meeting to your WebSocket handler receiving the frame is typically 50, 150 ms depending on network conditions and the meeting platform's internal buffering. For real-time response applications, budget 200 ms total for audio delivery plus STT plus your processing logic.

Can I capture video frames in real time from a meeting bot?

Real-time video capture is supported but computationally heavier than audio. For most use cases (slide capture, screen OCR), keyframe-only sampling at 1, 2 fps is sufficient and much cheaper than full 30fps decode. Use hardware-accelerated decode if you need full framerate video processing.