Zoom Recording Bot API: Get Recordings Programmatically

The Zoom Cloud Recording API requires a Pro account and approval from Zoom, and it only works for recordings made by account owners. If you want to record meetings hosted by your customers, or meetings across multiple Zoom workspaces you don't control, you're looking at a marketplace app with security review and an OAuth consent flow per customer. There's a simpler path.

A recording bot API takes a fundamentally different approach: instead of pulling recording artifacts from Zoom's storage system after the fact, a bot joins the meeting as a participant and captures audio and video directly during the call. No host account access required. No marketplace listing. No per-customer OAuth setup.

This guide is a technical reference for the MeetStream Zoom recording bot API: the full bot lifecycle, actual webhook payload examples, and Python code for building a complete webhook handler that retrieves recordings and transcripts. If you've read the overview posts and want the implementation details, this is the one.

We'll cover the bot lifecycle stages and status values, the JSON webhook payloads and their field names, and a Python implementation of the complete recording retrieval flow.

How Zoom recording bots differ from the Zoom Cloud Recording API

The fundamental difference is data flow direction. The Zoom Cloud Recording API is a pull model: Zoom stores the recording on their servers, you request it after the meeting ends using host credentials. The bot API is an event-driven push model: the bot captures data during the meeting and delivers it to your systems via webhook.

This distinction has practical consequences. With the pull model, you need credentials that have read access to the specific Zoom account where the meeting was hosted. With the bot model, you need a meeting URL and a bot participant slot in the meeting. The second requirement is much easier to fulfill across diverse customer environments.

The bot also gives you access to per-participant audio streams and the meeting timeline in real time, which the Cloud Recording API does not. If you want to build features that respond to what's happening in the meeting while it's in progress, the bot is the only path.

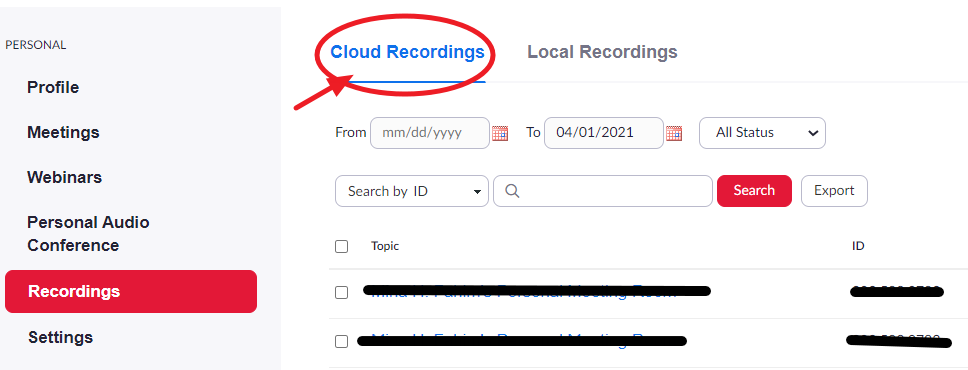

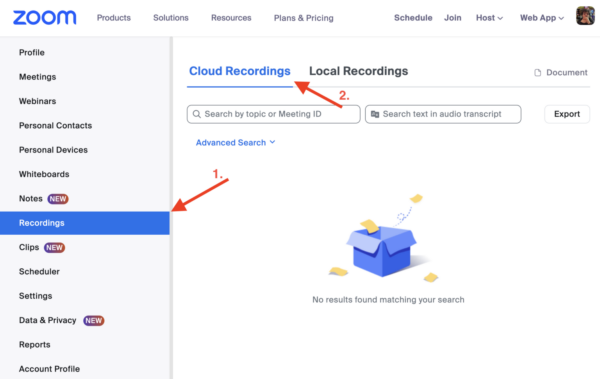

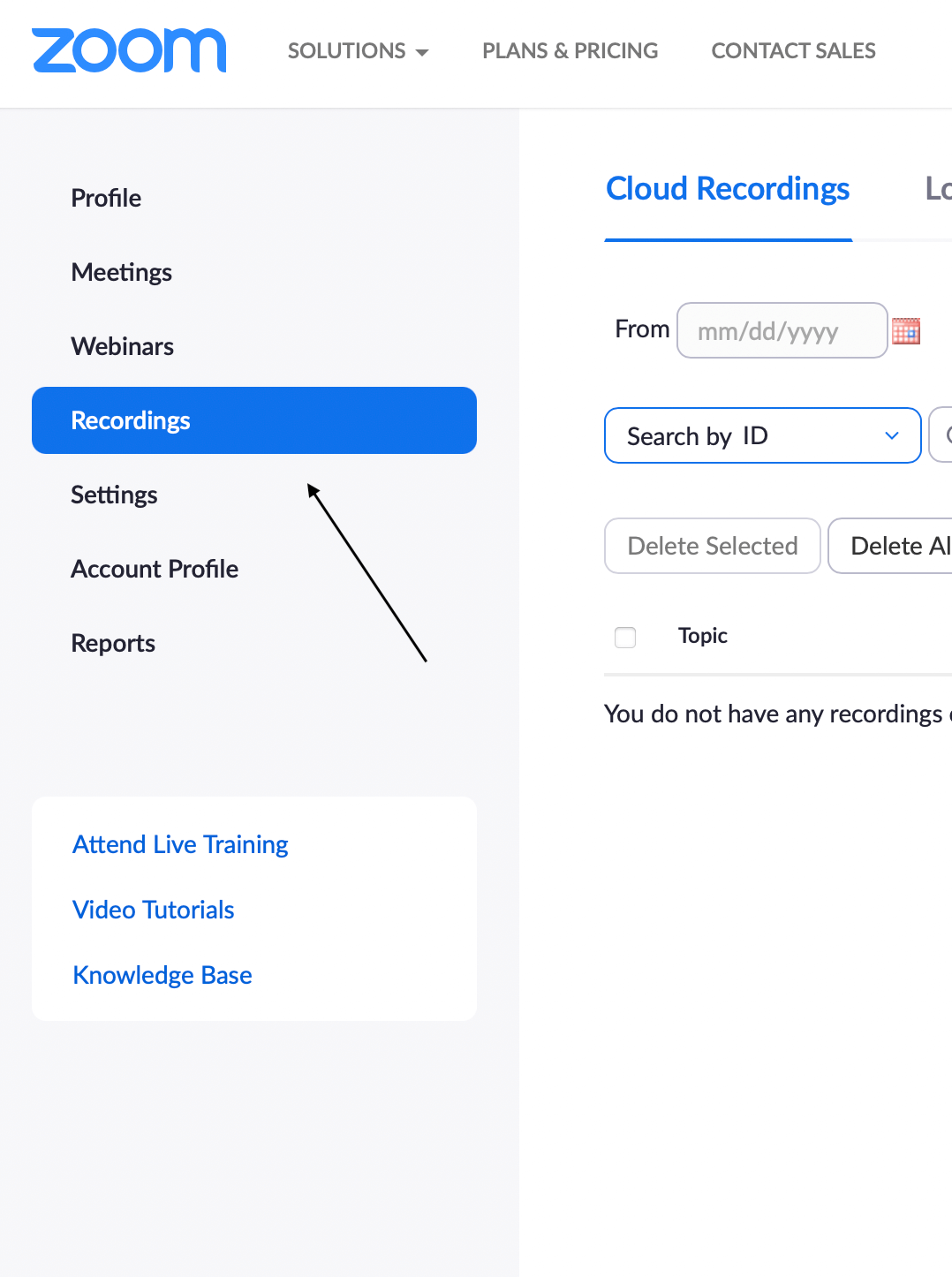

MeetStream's Zoom bot requires a one-time integration configuration. The docs.meetstream.ai setup guide walks through the Zoom app configuration. After that, every bot deployment is a single API call.

The bot lifecycle: states and transitions

Every bot session moves through a defined set of states. Understanding this lifecycle is essential for building reliable error handling.

When you call create_bot, the bot enters Joining state and attempts to enter the Zoom waiting room or meeting lobby. Once admitted, it transitions to InMeeting and begins recording. When the meeting ends (or the bot is removed, or automatic_leave conditions trigger), it transitions to Stopped.

The bot_status values in the bot.stopped webhook event tell you exactly why the session ended:

Stopped: clean exit, meeting ended normally.

NotAllowed: bot waited in the waiting room past waiting_room_timeout and was not admitted.

Denied: bot was removed from the meeting by the host.

Error: an infrastructure error occurred on the MeetStream side.

Joining: session ended while still in the joining phase (rare, usually a network issue).

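Build distinct handling for each of these. Stopped means wait for the processed events and then retrieve the artifacts. The others are error states that warrant different responses. Note that a Denied session can still produce audio and a transcript for the portion recorded before the bot was removed.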
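The per-status handling described above can be sketched as a lookup table. This is a minimal illustration rather than MeetStream's own code: the bot_status strings are the ones listed above, while the NextStep labels and the next_step helper are hypothetical names invented for this example.

```python
from enum import Enum


class NextStep(Enum):
    """Hypothetical follow-up actions; not part of the MeetStream API."""
    AWAIT_ARTIFACTS = "await_artifacts"  # wait for the processed events
    RETRY_OR_ALERT = "retry_or_alert"    # bot never made it into the meeting
    NOTE_REMOVAL = "note_removal"        # host removed the bot mid-meeting
    ESCALATE = "escalate"                # infrastructure problem or unknown value


# Keys are the bot_status values delivered in the bot.stopped event.
STATUS_TO_STEP = {
    "Stopped": NextStep.AWAIT_ARTIFACTS,
    "NotAllowed": NextStep.RETRY_OR_ALERT,
    "Joining": NextStep.RETRY_OR_ALERT,
    "Denied": NextStep.NOTE_REMOVAL,
    "Error": NextStep.ESCALATE,
}


def next_step(bot_status: str) -> NextStep:
    """Map a bot_status to a follow-up; unrecognized values escalate."""
    return STATUS_TO_STEP.get(bot_status, NextStep.ESCALATE)
```

Keeping the mapping in one table makes it harder to forget a status, and the default branch means a value added later is surfaced instead of being silently dropped.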

Deploying a Zoom recording bot

The create_bot endpoint takes the meeting URL and your configuration. Here's a complete request with all fields relevant to recording retrieval:

```bash
curl -X POST https://api.meetstream.ai/api/v1/bots/create_bot \
  -H "Authorization: Token YOUR_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "meeting_link": "https://zoom.us/j/123456789",
    "bot_name": "Recorder",
    "callback_url": "https://yourapp.com/webhooks/meetstream",
    "video_required": true,
    "recording_config": {
      "transcript": {
        "provider": {
          "name": "deepgram"
        }
      },
      "retention": {
        "type": "timed",
        "hours": 48
      }
    },
    "automatic_leave": {
      "waiting_room_timeout": 300,
      "everyone_left_timeout": 60
    }
  }'
```

Actual webhook payloads

Here are the exact JSON payloads your callback URL receives for each event in the recording lifecycle:

```jsonc
// bot.joining
{
  "event": "bot.joining",
  "bot_id": "bot_abc123",
  "transcript_id": "txn_xyz789",
  "meeting_url": "https://zoom.us/j/123456789",
  "timestamp": "2026-04-02T14:00:00Z"
}

// bot.inmeeting
{
  "event": "bot.inmeeting",
  "bot_id": "bot_abc123",
  "transcript_id": "txn_xyz789",
  "meeting_url": "https://zoom.us/j/123456789",
  "timestamp": "2026-04-02T14:02:15Z"
}

// bot.stopped
{
  "event": "bot.stopped",
  "bot_id": "bot_abc123",
  "transcript_id": "txn_xyz789",
  "bot_status": "Stopped",
  "meeting_url": "https://zoom.us/j/123456789",
  "timestamp": "2026-04-02T15:01:44Z"
}

// audio.processed
{
  "event": "audio.processed",
  "bot_id": "bot_abc123",
  "transcript_id": "txn_xyz789",
  "timestamp": "2026-04-02T15:03:20Z"
}

// transcription.processed
{
  "event": "transcription.processed",
  "bot_id": "bot_abc123",
  "transcript_id": "txn_xyz789",
  "status": "completed",
  "timestamp": "2026-04-02T15:06:55Z"
}
```

The transcript_id is present in every event, which means you always have the retrieval key regardless of which event you're handling. The bot_id is used for artifact endpoints (audio, video). Store both from the initial create_bot response.
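Storing both identifiers can be sketched as follows. This is an illustration under stated assumptions: the create_bot response body is not shown in this guide, so the code assumes it carries top-level bot_id and transcript_id keys just as the webhook payloads do. The BotIds, extract_ids, and remember names are hypothetical, and the in-memory dictionary stands in for a real database.

```python
from typing import NamedTuple


class BotIds(NamedTuple):
    bot_id: str
    transcript_id: str


def extract_ids(payload: dict) -> BotIds:
    """Pull both identifiers from a create_bot response or webhook payload.

    Assumption: both live at the top level under "bot_id" and "transcript_id".
    """
    missing = [key for key in ("bot_id", "transcript_id") if not payload.get(key)]
    if missing:
        raise ValueError(f"payload is missing: {', '.join(missing)}")
    return BotIds(payload["bot_id"], payload["transcript_id"])


# Hypothetical in-memory index; a production service would persist this.
ids_by_bot: dict[str, BotIds] = {}


def remember(payload: dict) -> BotIds:
    """Store the identifiers the first time a bot is seen and return them."""
    ids = extract_ids(payload)
    return ids_by_bot.setdefault(ids.bot_id, ids)
```

Calling remember on the create_bot response and again on every webhook payload keeps the index populated even if the first webhook arrives before the create_bot response has been processed.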

Python implementation: complete webhook handler and retrieval

```python
import logging
from dataclasses import dataclass
from typing import Optional

import requests
from flask import Flask, request, jsonify

app = Flask(__name__)
logger = logging.getLogger(__name__)

API_KEY = "YOUR_API_KEY"
BASE_URL = "https://api.meetstream.ai/api/v1"
HEADERS = {"Authorization": f"Token {API_KEY}"}
REQUEST_TIMEOUT = 30  # seconds, so a stalled download cannot hang the handler


@dataclass
class BotSession:
    bot_id: str
    transcript_id: str
    status: str
    audio_url: Optional[str] = None
    video_url: Optional[str] = None
    transcript: Optional[dict] = None


# In-memory store for demo purposes
sessions: dict[str, BotSession] = {}


@app.route('/webhooks/meetstream', methods=['POST'])
def webhook_handler():
    # get_json(silent=True) returns None instead of raising on a bad body
    data = request.get_json(silent=True) or {}
    event = data.get('event')
    bot_id = data.get('bot_id')
    transcript_id = data.get('transcript_id')

    if not event or not bot_id:
        return jsonify({'error': 'missing event or bot_id'}), 400

    if event == 'bot.joining':
        sessions[bot_id] = BotSession(
            bot_id=bot_id,
            transcript_id=transcript_id,
            status='joining'
        )
        logger.info(f"Bot {bot_id} joining meeting")
    elif event == 'bot.inmeeting':
        if bot_id in sessions:
            sessions[bot_id].status = 'recording'
        logger.info(f"Bot {bot_id} recording started")
    elif event == 'bot.stopped':
        bot_status = data.get('bot_status', '')
        handle_stopped(bot_id, bot_status)
    elif event == 'audio.processed':
        handle_audio_ready(bot_id)
    elif event == 'transcription.processed':
        handle_transcript_ready(bot_id, transcript_id)

    # Always return 200. The handlers above run synchronously in this demo;
    # in production, hand them to a background worker so the response is
    # sent immediately.
    return jsonify({'received': True}), 200


def handle_stopped(bot_id: str, bot_status: str):
    session = sessions.get(bot_id)
    if not session:
        return

    if bot_status == 'Stopped':
        session.status = 'processing'
        logger.info(f"Meeting ended cleanly for {bot_id}, awaiting artifacts")
    elif bot_status == 'NotAllowed':
        session.status = 'failed'
        logger.warning(f"Bot {bot_id} not admitted from waiting room")
        # Queue retry or alert
    elif bot_status == 'Denied':
        session.status = 'removed'
        logger.warning(f"Bot {bot_id} removed by host")
    elif bot_status == 'Error':
        session.status = 'error'
        logger.error(f"Infrastructure error for bot {bot_id}")
    else:
        # Covers 'Joining' (session ended before admission) and unknown values
        session.status = 'failed'
        logger.warning(f"Bot {bot_id} stopped with status {bot_status!r}")


def handle_audio_ready(bot_id: str):
    """Audio artifact is downloadable at GET /bots/{bot_id}/audio"""
    audio_url = f"{BASE_URL}/bots/{bot_id}/audio"
    response = requests.get(audio_url, headers=HEADERS, timeout=REQUEST_TIMEOUT)
    if response.status_code == 200:
        # Save audio file or upload to your storage
        with open(f"/tmp/{bot_id}_audio.mp3", 'wb') as f:
            f.write(response.content)
        logger.info(f"Audio saved for {bot_id}")
    else:
        logger.error(f"Failed to fetch audio for {bot_id}: {response.status_code}")


def handle_transcript_ready(bot_id: str, transcript_id: str):
    """Fetch and store the completed transcript"""
    url = f"{BASE_URL}/transcript/{transcript_id}/get_transcript"
    response = requests.get(url, headers=HEADERS, timeout=REQUEST_TIMEOUT)
    if response.status_code == 200:
        transcript = response.json()
        if bot_id in sessions:
            sessions[bot_id].transcript = transcript
            sessions[bot_id].status = 'complete'

        # Process the transcript; .get() tolerates segments without text
        segments = transcript.get('segments', [])
        full_text = ' '.join(s['text'] for s in segments if s.get('text'))
        logger.info(f"Transcript ready: {len(segments)} segments, {len(full_text)} chars")

        # Pass to downstream systems
        send_to_crm(bot_id, transcript)
        queue_summarization(transcript_id, full_text)
    else:
        logger.error(f"Failed to fetch transcript {transcript_id}: {response.status_code}")


def send_to_crm(bot_id: str, transcript: dict):
    # Your CRM integration here
    pass


def queue_summarization(transcript_id: str, text: str):
    # Your summarization pipeline here
    pass
```

Retrieving audio and video artifacts

Beyond the transcript, the audio and video files are accessible via bot ID once the corresponding processed events fire. The audio.processed event precedes transcription.processed since transcription depends on the audio artifact.

```python
import requests

bot_id = "bot_abc123"  # from the create_bot response or any webhook payload
headers = {"Authorization": "Token YOUR_API_KEY"}

# Get audio file (after audio.processed webhook)
response = requests.get(
    f"https://api.meetstream.ai/api/v1/bots/{bot_id}/audio",
    headers=headers,
    timeout=60,
)
response.raise_for_status()  # avoid writing an error body to disk
with open("meeting_audio.mp3", "wb") as f:
    f.write(response.content)

# Get video file (after video.processed webhook)
response = requests.get(
    f"https://api.meetstream.ai/api/v1/bots/{bot_id}/video",
    headers=headers,
    timeout=60,
)
response.raise_for_status()
with open("meeting_video.mp4", "wb") as f:
    f.write(response.content)
```

Audio is returned as MP3. Video is returned as MP4. Both are the full recording from the bot's perspective inside the meeting. If you only need transcripts, set video_required: false in the create_bot request to skip video capture and processing entirely.
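Because the set of processed events depends on video_required, it can help to track which ones are still outstanding before treating a session as finished. The sketch below is one way to do that. The event names come from this guide, while expected_artifact_events and ArtifactTracker are hypothetical helpers, not part of the MeetStream API.

```python
def expected_artifact_events(video_required: bool) -> set[str]:
    """Processed events to wait for, given the create_bot video_required flag."""
    events = {"audio.processed", "transcription.processed"}
    if video_required:
        events.add("video.processed")
    return events


class ArtifactTracker:
    """Tracks which processed events are still outstanding for one bot session."""

    def __init__(self, video_required: bool):
        self.pending = expected_artifact_events(video_required)

    def mark(self, event: str) -> bool:
        """Record an event and return True once nothing is pending.

        Unknown or repeated events are ignored, so redelivery is harmless.
        """
        self.pending.discard(event)
        return not self.pending
```

A webhook handler could keep one tracker per bot_id and mark the session complete only when mark returns True.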

Removing a bot mid-meeting

If you need to stop recording before the meeting ends, use the remove_bot endpoint:

```bash
curl -X POST https://api.meetstream.ai/api/v1/bots/BOT_ID/remove_bot \
  -H "Authorization: Token YOUR_API_KEY"
```

This triggers the bot.stopped webhook with bot_status: Stopped, followed by the normal audio and transcription processing events. The recording covers only the time between bot.inmeeting and when remove_bot was called.
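The same remove_bot call can be sketched in Python. To keep the example free of third-party dependencies it uses the standard library's urllib.request and only builds the request; build_remove_bot_request is a hypothetical helper name, and the URL and header mirror the curl command.

```python
import urllib.request

BASE_URL = "https://api.meetstream.ai/api/v1"


def build_remove_bot_request(bot_id: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) the POST request that removes a bot."""
    return urllib.request.Request(
        url=f"{BASE_URL}/bots/{bot_id}/remove_bot",
        method="POST",
        headers={"Authorization": f"Token {api_key}"},
    )
```

Sending it is a matter of passing the request to urllib.request.urlopen with a timeout. Separating construction from sending makes the request easy to inspect in tests without touching the network.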

Tradeoffs and production considerations

Webhook delivery is best-effort with retries. Your handler should be idempotent. If you receive the same transcription.processed event twice and call the transcript retrieval twice, it should be safe. A simple check against the transcript ID before processing handles this.
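A minimal sketch of that idempotency check, assuming a single-process handler: remember each (event, transcript ID) pair and skip any pair already seen. The first_delivery name and the in-memory set are hypothetical. A multi-process deployment would need a shared store such as a database unique constraint instead.

```python
import threading

# (event, transcript_id) pairs that have already been handled
_seen: set[tuple[str, str]] = set()
_lock = threading.Lock()


def first_delivery(event: str, transcript_id: str) -> bool:
    """Return True only the first time an (event, transcript_id) pair is seen."""
    key = (event, transcript_id)
    with _lock:  # webhook handlers may run on several threads
        if key in _seen:
            return False
        _seen.add(key)
        return True
```

In a handler, the check goes before any expensive work: if first_delivery returns False, acknowledge the webhook with a 200 and return without fetching the transcript again.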

The retention configuration controls how long artifacts remain available for download. Set retention.hours to a value that gives your processing pipeline time to retrieve artifacts even if there's a temporary outage. 48 to 72 hours is a practical default for most workflows.
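To make the retention window concrete, the sketch below computes a latest safe retrieval time. It rests on an assumption that this guide does not confirm: that the retention clock starts at the bot.stopped timestamp. The function names and the six-hour safety margin are illustrative choices, not MeetStream behavior.

```python
from datetime import datetime, timedelta


def parse_timestamp(ts: str) -> datetime:
    """Parse a webhook timestamp such as 2026-04-02T15:01:44Z.

    Python versions before 3.11 do not accept a trailing Z in fromisoformat,
    so it is rewritten as an explicit UTC offset.
    """
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))


def retrieval_deadline(stopped_at: str, retention_hours: int,
                       safety_margin_hours: int = 6) -> datetime:
    """Latest time to fetch artifacts, leaving a margin before expiry.

    Assumption: retention is counted from the bot.stopped timestamp.
    """
    window = timedelta(hours=retention_hours - safety_margin_hours)
    return parse_timestamp(stopped_at) + window


def is_overdue(deadline: datetime, now: datetime) -> bool:
    """True when a retry scheduled at `now` would miss the deadline."""
    return now > deadline
```

With a 48-hour retention and the default margin, a session that stopped at 2026-04-02T15:01:44Z gets a deadline of 2026-04-04T09:01:44Z, which a retry queue can use to decide when to alert rather than retry again.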

How MeetStream fits in

MeetStream provides the bot infrastructure, webhook delivery, transcription pipeline, and artifact storage so you don't have to build any of those layers. The API surface is intentionally small: one endpoint to create bots, one to remove them, one to fetch transcripts. The same bot works for Zoom, Google Meet, and Teams with the same request structure. If you're building recording functionality across multiple platforms, maintaining one integration is significantly simpler than three.

Conclusion

The Zoom recording bot API provides a programmatic path to meeting recordings without requiring host account credentials or Zoom Marketplace approval. The full flow is: POST create_bot with callback_url, handle five webhook events (bot.joining, bot.inmeeting, bot.stopped, audio.processed, transcription.processed), and GET the transcript using the transcript_id. The Python implementation above handles the main failure paths: waiting room timeouts, host removals, and infrastructure errors. Use custom_attributes to correlate bot sessions with your own data model from the start.

Get started free at meetstream.ai or see the full API reference at docs.meetstream.ai.

Frequently Asked Questions

How does the Zoom recording bot API differ from the Zoom Cloud Recording API?

Zoom's Cloud Recording API requires host account credentials and admin-level Zoom access for each account you want to retrieve recordings from. A recording bot joins the meeting as a participant and captures audio and video directly, without needing access to the host's Zoom account. This makes the bot approach practical for recording meetings across many different Zoom accounts that you don't control.

What webhook events fire during a Zoom recording bot session?

Five events fire in sequence: bot.joining when the bot enters the waiting room, bot.inmeeting when the bot is admitted and recording begins, bot.stopped when the meeting ends (check bot_status for reason), audio.processed when the audio file is ready for download, and transcription.processed when the transcript is complete. Build your webhook handler to respond to each event in the sequence rather than polling the API.

How do I retrieve the transcript from a Zoom recording bot?

Wait for the transcription.processed webhook event, then call GET /api/v1/transcript/{transcript_id}/get_transcript with your API key. The transcript_id is returned in the initial create_bot response and is also present in all webhook payloads. The response includes speaker-attributed segments with timestamps. Do not poll this endpoint before receiving the transcription.processed event.

Can I record just audio without video from a Zoom meeting?

Yes. Set video_required to false in the create_bot request. This captures audio only, skips video processing, and reduces both processing time and storage. For transcription-focused use cases like sales coaching or meeting notes, audio-only recording is the recommended configuration. You'll receive audio.processed and transcription.processed events but not video.processed.

What happens if the Zoom recording bot is removed from the meeting?

If the host removes the bot, the bot.stopped webhook fires with bot_status set to Denied. The recording covers the time from when the bot joined (bot.inmeeting) to when it was removed. Audio and transcription processing proceed normally for the recorded portion, so you'll still receive audio.processed and transcription.processed for whatever was captured before the removal.