Meeting Bot APIs: The Complete Developer Guide

Building a product that processes meeting data sounds straightforward until you try to actually get audio out of a live Zoom or Google Meet session. There is no official media extraction API for Google Meet. Zoom's SDK requires desktop client context and App Marketplace approval. Teams requires a Graph API integration. The platform complexity is the problem, and a meeting bot API is the abstraction that solves it.

A meeting bot API is developer infrastructure: you provide a meeting URL, and the API handles joining the call, capturing audio and video, running transcription, and delivering results to your server. What you build on top is your product. This guide covers everything you need to evaluate, integrate, and build production-quality features with a meeting bot API.

In this guide, we'll cover what to look for in a meeting bot API, the key capabilities to evaluate, a complete working example, and how to structure a real implementation. Let's get into it.

What a Meeting Bot API Actually Does

At its core, a meeting bot SDK or API abstracts the platform integration layer. Here is what happens when you call a bot creation endpoint:

The service spins up a bot process in a containerized environment. That process uses a headless browser or platform SDK to join the meeting URL you provided as a participant. Once in the call, it captures audio (and optionally video) from the virtual audio devices. Audio is either stored for post-call processing or streamed to your endpoint in real time. Transcription runs through the configured provider. When the meeting ends or the bot is instructed to leave, it processes any remaining media and delivers the results to your webhook.

The bot's lifecycle from your perspective is a series of webhook events: the bot joining, the bot entering the meeting, the bot leaving, and then post-processing events as transcript and media become available. Your integration code handles these events and routes the data to wherever it needs to go.

Key Capabilities to Evaluate

Not all meeting bot APIs are equivalent. Here is what to examine before committing to one.

Platform coverage. Zoom, Google Meet, and Teams are the three that matter for most products. Each platform has different technical and policy requirements. Zoom requires a registered App Marketplace application, so any platform claiming Zoom support should specify that its integration is compliant and uses the official SDK path, not browser automation that violates Zoom's terms. Google Meet and Teams are more accessible.

Transcription provider choice. Transcription quality, latency, and language support vary significantly across providers. Deepgram nova-3 is optimized for real-time streaming with low latency. AssemblyAI is stronger for post-call batch processing with better entity detection. JigsawStack adds automatic language detection. The ability to configure this per call matters if you have different quality requirements for different call types, or if you are building for a multilingual user base.

Real-time audio streaming. If your product needs to process meeting audio during the call rather than after it ends, you need a meeting bot API that delivers audio frames over WebSocket as the meeting progresses. The format matters: PCM16 little-endian at 48kHz mono is raw, uncompressed audio that most STT streaming APIs accept once you declare the encoding and sample rate. Per-speaker streams let you process individual participants separately without running your own diarization.
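To make the format concrete, the arithmetic behind PCM16 at 48kHz mono can be sketched as follows. The helper names are hypothetical, and the 20 ms chunk size used in the note afterwards is only an illustration, not something any API is stated to use.

```python
SAMPLE_RATE_HZ = 48_000   # 48kHz
BYTES_PER_SAMPLE = 2      # PCM16 = 16 bits = 2 bytes per sample
CHANNELS = 1              # mono

BYTES_PER_SECOND = SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * CHANNELS


def pcm_bytes(duration_ms: int) -> int:
    """Number of bytes needed to hold duration_ms of audio in this format."""
    return BYTES_PER_SECOND * duration_ms // 1000


def pcm_duration_ms(num_bytes: int) -> float:
    """Duration in milliseconds represented by num_bytes of audio."""
    return num_bytes / BYTES_PER_SECOND * 1000
```

Under these numbers one second of audio is 96,000 bytes per stream, a 20 ms chunk is 1,920 bytes, and an hour-long call is roughly 346 MB per stream before any compression. That is worth budgeting for if you buffer per-speaker streams in memory.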

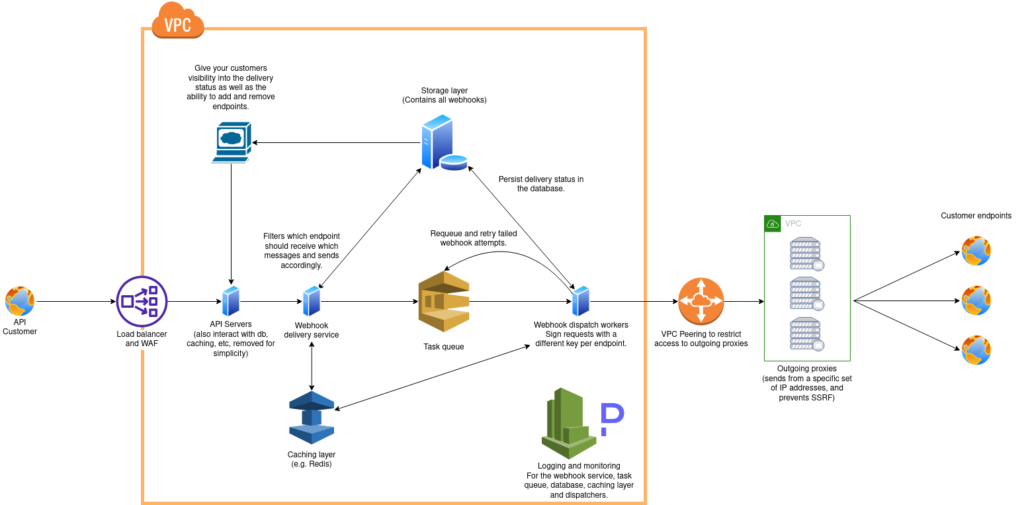

Webhook reliability. Results get delivered to your server via webhooks. Look for: guaranteed delivery with retry logic, event ordering guarantees, a delivery log you can query for debugging, and clear documentation of all event types and payload schemas.

Calendar integration. For products where users connect their work calendar and want the bot to join meetings automatically, a calendar integration endpoint removes the need to call the create_bot endpoint manually for every meeting.

AI agent support. If you want to deploy an AI agent that can speak, send messages, or take action inside the meeting, this requires specific API support. Not all meeting bot APIs provide this capability.

The MeetStream API: Reference

The MeetStream API is available at https://api.meetstream.ai/api/v1/. Authentication uses a token header:

Authorization: Token YOUR_API_KEY

All requests use JSON bodies. The SDK covers Python, JavaScript, Go, Ruby, Java, PHP, C#, and Swift.

Creating a Bot

The core endpoint is POST /api/v1/bots/create_bot. Required parameters are meeting_link and bot_name. The most important optional parameters:

callback_url: your webhook endpoint for all bot lifecycle and media events. Required in practice unless you are polling.

recording_config: controls transcription provider, retention, and real-time delivery endpoints.

live_audio_required: set to {"websocket_url": "wss://your-server/audio"} to stream raw PCM audio in real time.

live_transcription_required: set to {"webhook_url": "https://your-server/transcript"} to stream transcript segments in real time.

socket_connection_url: set to {"websocket_url": "wss://your-server/control"} to enable in-meeting control (send audio, send messages, interrupt).

custom_attributes: arbitrary key-value pairs that pass through on every webhook event. Use this to attach deal IDs, user IDs, or routing keys so your webhook handler does not need to do a lookup.

join_at: ISO 8601 datetime to schedule the bot for a future meeting.

automatic_leave: configure timeout behavior for waiting room, everyone leaving, voice inactivity, and in-call recording duration.
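As a rough illustration of how these optional parameters combine, here is a minimal sketch of a payload builder. The function name build_bot_payload and its keyword arguments are hypothetical; only the JSON keys and nested shapes come from the list above. recording_config and automatic_leave are left out because their full schemas are not described here, and the exact ISO 8601 variant the API accepts for join_at is an assumption.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def build_bot_payload(
    meeting_link: str,
    bot_name: str,
    callback_url: Optional[str] = None,
    audio_ws_url: Optional[str] = None,
    transcript_webhook_url: Optional[str] = None,
    control_ws_url: Optional[str] = None,
    custom_attributes: Optional[Dict[str, Any]] = None,
    join_at: Optional[datetime] = None,
) -> Dict[str, Any]:
    """Assemble a create_bot request body, including only the options that were set."""
    payload: Dict[str, Any] = {"meeting_link": meeting_link, "bot_name": bot_name}
    if callback_url:
        payload["callback_url"] = callback_url
    if audio_ws_url:
        payload["live_audio_required"] = {"websocket_url": audio_ws_url}
    if transcript_webhook_url:
        payload["live_transcription_required"] = {"webhook_url": transcript_webhook_url}
    if control_ws_url:
        payload["socket_connection_url"] = {"websocket_url": control_ws_url}
    if custom_attributes:
        payload["custom_attributes"] = custom_attributes
    if join_at is not None:
        # Refuse naive datetimes so the scheduled time is never ambiguous.
        if join_at.tzinfo is None:
            raise ValueError("join_at must be timezone-aware")
        payload["join_at"] = join_at.isoformat()  # assumed to be an accepted ISO 8601 form
    return payload


if __name__ == "__main__":
    print(build_bot_payload(
        "https://meet.google.com/abc-defg-hij",
        "Notetaker",
        audio_ws_url="wss://your-server/audio",
        join_at=datetime(2030, 1, 1, 9, 0, tzinfo=timezone.utc),
    ))
```

Building the body in one place keeps optional features opt-in: a key is sent only when the caller asked for it, so the defaults on the server side stay in effect otherwise.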

Webhook Event Reference

Your callback URL receives these events:

bot.joining fires up to three times as the bot attempts to enter the meeting. This is the waiting room / lobby phase. bot.inmeeting fires once when the bot successfully enters the active call. bot.stopped fires when the bot exits and carries a bot_status field: Stopped (normal exit), NotAllowed (meeting denied the bot), Denied (platform-level denial), or Error.

After the meeting: audio.processed when the audio file is available, transcription.processed when the transcript is ready (includes a transcript_id), video.processed when video is available, and data_deletion when media has been purged per the retention policy.
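One way to keep a webhook handler readable as the number of event types grows is a small dispatch table. The sketch below is illustrative only: the registry, the decorator name on, and the returned strings are hypothetical, and it assumes each webhook body has an "event" name and a "data" object, the same shape the Python example in this guide reads.

```python
from typing import Callable, Dict

# Maps an event name to the function that handles its "data" object.
HANDLERS: Dict[str, Callable[[dict], str]] = {}


def on(event_name: str):
    """Decorator that registers a handler for one event name."""
    def register(fn: Callable[[dict], str]) -> Callable[[dict], str]:
        HANDLERS[event_name] = fn
        return fn
    return register


@on("bot.joining")
def handle_joining(data: dict) -> str:
    return "waiting for admission"


@on("bot.inmeeting")
def handle_inmeeting(data: dict) -> str:
    return "bot is in the call"


@on("bot.stopped")
def handle_stopped(data: dict) -> str:
    status = data.get("bot_status")
    if status == "Stopped":
        return "ended normally"
    if status in ("NotAllowed", "Denied"):
        return f"refused entry ({status})"
    return f"failed ({status})"


@on("transcription.processed")
def handle_transcript(data: dict) -> str:
    return f"fetch transcript {data.get('transcript_id')}"


def dispatch(event: dict) -> str:
    """Route one webhook body to its handler; unknown events are ignored."""
    handler = HANDLERS.get(event.get("event"))
    if handler is None:
        return "ignored"
    return handler(event.get("data") or {})
```

Returning "ignored" for unregistered events, rather than raising, means the endpoint can still acknowledge event types you have not written handlers for, such as audio.processed or data_deletion in this sketch.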

Fetching a Transcript

Once you receive the transcription.processed event with the transcript_id, retrieve the full speaker-attributed transcript:

GET /api/v1/transcript/{transcript_id}/get_transcript

Authorization: Token YOUR_API_KEY

The response includes an array of segments, each with speaker name, start timestamp, end timestamp, and text.

Complete Working Example: Python

Here is a complete Python implementation: create a bot, receive the webhook, fetch the transcript, and run basic processing.

import requests
from flask import Flask, request

API_KEY = "your_api_key"
BASE_URL = "https://api.meetstream.ai/api/v1"
HEADERS = {"Authorization": f"Token {API_KEY}", "Content-Type": "application/json"}

app = Flask(__name__)


def create_bot(meeting_link, callback_url, custom_attributes=None):
    payload = {
        "meeting_link": meeting_link,
        "bot_name": "MeetStream Bot",
        "callback_url": callback_url,
        "recording_config": {
            "transcript": {
                "provider": {
                    "name": "deepgram",
                    "model": "nova-3"
                }
            },
            "retention": {
                "type": "timed",
                "hours": 24
            }
        }
    }
    if custom_attributes:
        payload["custom_attributes"] = custom_attributes
    response = requests.post(
        f"{BASE_URL}/bots/create_bot",
        headers=HEADERS,
        json=payload
    )
    response.raise_for_status()
    return response.json()


def get_transcript(transcript_id):
    response = requests.get(
        f"{BASE_URL}/transcript/{transcript_id}/get_transcript",
        headers=HEADERS
    )
    response.raise_for_status()
    return response.json()


@app.route("/webhooks/meetstream", methods=["POST"])
def handle_webhook():
    event = request.json
    event_type = event.get("event")
    data = event.get("data", {})

    if event_type == "bot.inmeeting":
        bot_id = data.get("bot_id")
        print(f"Bot {bot_id} is in the meeting")

    elif event_type == "bot.stopped":
        bot_status = data.get("bot_status")
        custom = data.get("custom_attributes", {})
        deal_id = custom.get("crm_deal_id")
        print(f"Bot stopped with status {bot_status} for deal {deal_id}")

    elif event_type == "transcription.processed":
        transcript_id = data.get("transcript_id")
        custom = data.get("custom_attributes", {})
        transcript = get_transcript(transcript_id)
        segments = transcript.get("segments", [])
        # Speaker-attributed segments ready for LLM processing
        formatted = "\n".join(
            f"{seg['speaker_name']} [{seg['start_time']}]: {seg['text']}"
            for seg in segments
        )
        print(formatted)
        # Pass to your LLM for summarization, action item extraction, etc.

    return {"status": "ok"}, 200


if __name__ == "__main__":
    # Deploy a bot
    bot = create_bot(
        meeting_link="https://meet.google.com/abc-defg-hij",
        callback_url="https://your-server.com/webhooks/meetstream",
        custom_attributes={"crm_deal_id": "hubspot_deal_123"}
    )
    print(f"Bot created: {bot['bot_id']}")

    # Start webhook server
    app.run(port=5000)

Real-Time Audio Streaming

For real-time use cases, add live_audio_required to your bot creation request. Your WebSocket server receives binary frames with this structure:

msg_type (1 byte) + sid_length (2 bytes, little-endian) + speaker ID bytes + sname_length (2 bytes, little-endian) + speaker name bytes + PCM data

Audio is PCM16 little-endian, 48kHz, mono. This format is directly compatible with Deepgram's streaming API, AssemblyAI's streaming WebSocket, and most other STT streaming endpoints. Parse the header fields to get the speaker identity, then forward the PCM data to your STT provider or run your own processing pipeline.
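The frame layout described above can be parsed with the standard struct module. This is a minimal sketch rather than official client code: the names AudioFrame and parse_audio_frame are hypothetical, and decoding the speaker ID and name as UTF-8 is an assumption, since the text encoding is not specified here.

```python
import struct
from typing import NamedTuple, Tuple


class AudioFrame(NamedTuple):
    msg_type: int
    speaker_id: str
    speaker_name: str
    pcm: bytes  # raw PCM16 little-endian, 48kHz, mono


def _read_prefixed(frame: bytes, offset: int) -> Tuple[bytes, int]:
    """Read a 2-byte little-endian length, then that many bytes.

    Returns the field bytes and the offset just past the field.
    """
    if offset + 2 > len(frame):
        raise ValueError("frame truncated before length prefix")
    (length,) = struct.unpack_from("<H", frame, offset)
    start = offset + 2
    end = start + length
    if end > len(frame):
        raise ValueError("frame truncated inside length-prefixed field")
    return frame[start:end], end


def parse_audio_frame(frame: bytes) -> AudioFrame:
    """Split one binary WebSocket frame into header fields and PCM payload."""
    if not frame:
        raise ValueError("empty frame")
    msg_type = frame[0]
    speaker_id, offset = _read_prefixed(frame, 1)
    speaker_name, offset = _read_prefixed(frame, offset)
    return AudioFrame(
        msg_type=msg_type,
        speaker_id=speaker_id.decode("utf-8", errors="replace"),  # encoding assumed
        speaker_name=speaker_name.decode("utf-8", errors="replace"),
        pcm=frame[offset:],
    )
```

Keying a dictionary of per-speaker buffers or STT connections on speaker_id is one straightforward way to use the result, since everything after the header is audio for that one speaker.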

In-Meeting Control

The socket_connection_url WebSocket supports three control message types:

{"type": "sendaudio", "audiochunk": "base64_encoded_pcm", ...}: play audio through the bot's microphone to all participants.

{"type": "sendmsg", "message": "text"}: send a chat message in the meeting.

{"type": "interrupt", "action": "clear_audio_queue"}: clear any queued audio the bot has not yet played.

These primitives are the foundation for building voice AI agents that participate in meetings.
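The control messages can be built as plain JSON strings before being sent over the WebSocket. The helper names below are hypothetical. The sendaudio message is deliberately not assembled in full, because its remaining fields are elided in the listing above; the sketch only shows how the audiochunk value could be base64-encoded.

```python
import base64
import json


def chat_message_command(text: str) -> str:
    """JSON for a sendmsg control message."""
    return json.dumps({"type": "sendmsg", "message": text})


def clear_audio_queue_command() -> str:
    """JSON for an interrupt control message that clears queued audio."""
    return json.dumps({"type": "interrupt", "action": "clear_audio_queue"})


def encode_audio_chunk(pcm: bytes) -> str:
    """Base64 text suitable for the audiochunk field of a sendaudio message.

    The other sendaudio fields are not documented in this guide, so the
    full message is left to the API reference.
    """
    return base64.b64encode(pcm).decode("ascii")
```

A typical barge-in flow for a voice agent would send the interrupt message as soon as a participant starts speaking, then queue fresh audio once the agent has a new reply.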

Calendar Integration

For automatic bot deployment on scheduled meetings, use POST /api/v1/calendar/create_calendar with the user's calendar credentials. MeetStream auto-joins meetings 3 minutes before start and detects real-time calendar changes (cancellations, reschedules).

How MeetStream Fits the Developer Stack

MeetStream handles the infrastructure layer: Zoom, Google Meet, and Teams integration, virtual display management, audio extraction, transcription orchestration, and webhook delivery. Your team works above the abstraction boundary: analysis logic, downstream integrations, and product experience. See the full API reference at docs.meetstream.ai or get started free at meetstream.ai.

Conclusion

A meeting bot API gives you a clean interface to meeting media without maintaining platform integrations. The core pattern (create bot, receive webhooks, fetch transcript, process) is straightforward to implement. The depth is in the configuration: transcription provider routing, real-time audio streaming, custom attributes for webhook routing, and in-meeting control for AI agents. Get started free at meetstream.ai.

Frequently Asked Questions

What is a meeting bot API and what can I build with one?

A meeting bot API is a managed service that joins video conferences programmatically and delivers structured media data (transcripts, audio, and video) to your server via webhook. You can build meeting recording and archival systems, AI notetakers, real-time coaching tools, sales intelligence pipelines, compliance monitoring, and in-meeting AI agents. The API abstracts the platform integration complexity so your team builds product logic, not browser automation infrastructure.

How do I get started with a meeting bot SDK?

The minimal implementation is: call the create_bot endpoint with a meeting link and callback URL, set up a webhook handler that receives the transcription.processed event, fetch the transcript via the transcript retrieval endpoint, and pass the speaker-attributed segments to your analysis layer. MeetStream provides SDKs in Python, JavaScript, Go, Ruby, Java, PHP, C#, and Swift. You can have a working integration in less than a day.

What is the meeting recording API webhook event lifecycle?

The event sequence is: bot.joining (up to 3x as the bot attempts to enter), bot.inmeeting (once when the bot enters the active call), bot.stopped (when the bot exits with a status code), then post-processing events: audio.processed, transcription.processed (includes transcript_id for retrieval), video.processed, and data_deletion when media is purged per retention policy.

How does real-time audio streaming work in a meeting bot API?

Real-time audio streaming delivers PCM16 little-endian audio at 48kHz mono over a WebSocket as the meeting progresses. MeetStream delivers per-speaker streams in binary frames containing msg_type, speaker ID length, speaker ID, speaker name length, speaker name, and PCM audio data. This format is directly compatible with Deepgram's and AssemblyAI's streaming APIs. You specify the WebSocket endpoint in the live_audio_required parameter at bot creation time.

Can I use a meeting bot API to build an AI agent?

Yes. In-meeting AI agents use three capabilities: real-time audio streaming for listening, streaming transcription for understanding, and in-meeting control WebSocket for responding. MeetStream's control WebSocket supports sending audio (bot speaks), sending chat messages, and clearing the audio queue. For higher-level agent orchestration, MeetStream's MIA framework supports voice, chat, and action response modes with MCP server connectivity for external tool access.